Trust Integrates AI: How Companies Build Employee Buy-in

Serge Bulaev

Trust may be the key factor in whether employees accept and use AI at work. Experts suggest that organizations need clear leadership and open communication to help workers feel safe as AI changes their jobs. Some companies are using transparent explanations, human oversight, feedback channels, and training to help employees see AI as a tool for growth. Case studies suggest that careful rollouts that include employees in the process may raise confidence and buy-in. Research also indicates that balancing fast decision-making with human involvement can reduce confusion and preserve accountability.

Building employee buy-in for AI hinges on trust. For companies to succeed with artificial intelligence, clear leadership and open communication are vital to make workers feel secure as AI transforms jobs. This guide outlines how leaders and HR teams can establish the day-to-day governance needed to integrate AI successfully.

Why Trust Is the Core of Successful AI Adoption

The Center for Creative Leadership identifies a "trust paradox" in AI adoption: organizations require immense faith in leadership at the very moment new, complex systems threaten to shake established confidence (Center for Creative Leadership).

This paradox matters because AI initiatives tend to stall without trust. If employees do not see automated tools as reliable, transparent, and humane, collaboration breaks down and adoption drops. In this context, trust is not a soft concern; it is a foundational requirement for both technological and organizational progress.

Industry reports echo this warning. AI also amplifies existing power dynamics, which makes strong governance and credible leadership more important than ever: accountability ultimately rests with people, not algorithms.

Four Governance Tactics That Build Employee Trust

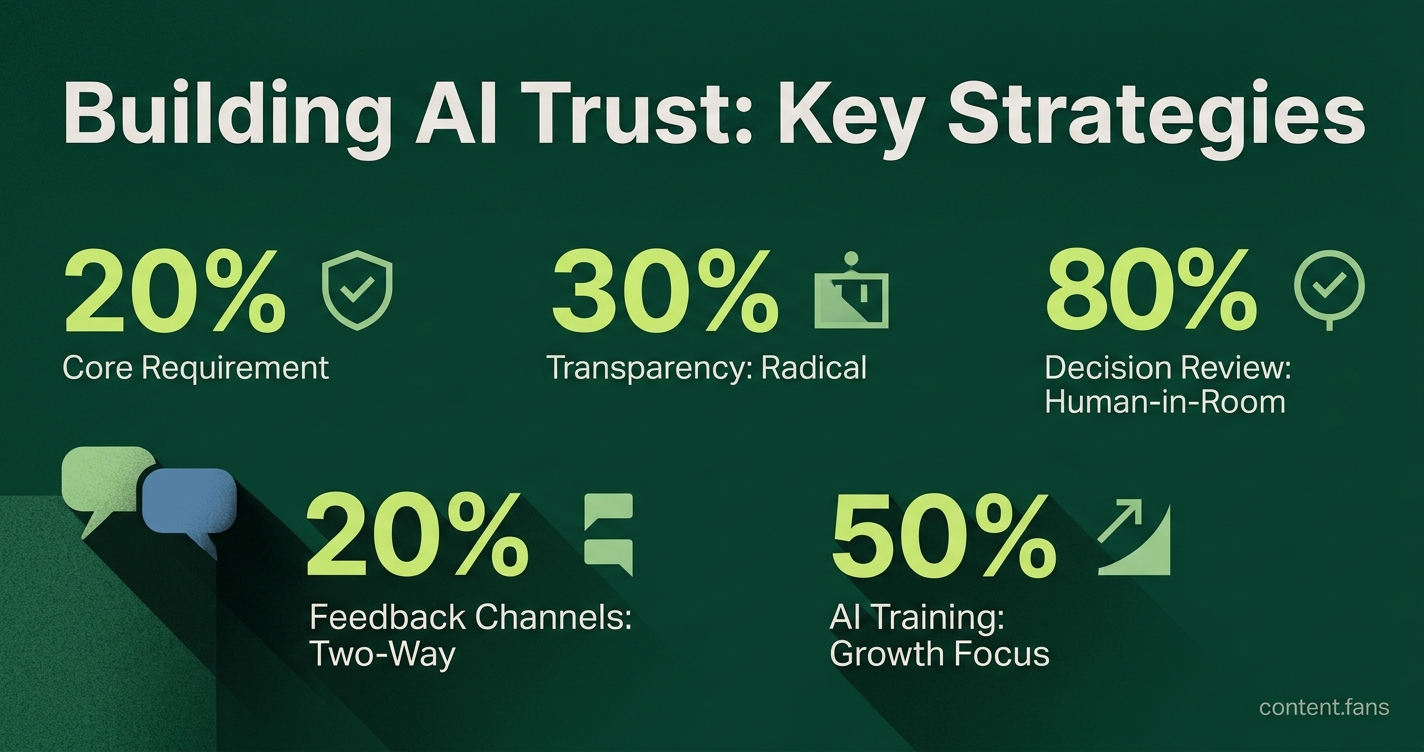

To translate trust into practice, leaders are pairing advanced analytics with visible human oversight. Successful AI governance strategies cluster around four key actions:

- Radical Transparency: Proactively explain the use cases, data factors, and limitations of any AI tool before it is deployed.

- Human-in-the-Room Rule: Mandate that a person review and ratify any automated decision that significantly affects an employee's job or compensation (TechClass).

- Two-Way Feedback Channels: Establish accessible surveys, forums, and feedback loops to surface concerns and objections early in the process.

- Role-Based AI Literacy: Tailor AI training to each role and frame it as a learning journey focused on growth, not as a pass/fail performance test. This helps build psychological safety around experimentation.

Case Studies: How Leading Companies Earn AI Buy-In

Leading organizations demonstrate that careful piloting and employee inclusion are key to building confidence.

- Morgan Stanley vetted its generative AI research assistant with a rigorous internal evaluation. Only after the tool met strict quality thresholds was it released to advisers, leading to high adoption rates among wealth management teams.

- Singtel, a major telecom group, created an AI Acceleration Academy, training a significant number of staff members. By positioning workers as partners in change, the company fostered widespread experimentation.

- Sanofi launched a global AI education campaign, reaching many employees across the organization. The pharmaceutical firm published clear governance principles and included skeptics in pilot projects, directly linking responsible AI goals to real-world practice.

Research on German SMEs reinforces this pattern, showing that leaders who cultivate an "AI-first mindset" while inviting continuous feedback create a unified culture that eases transitions.

Balancing Automation Speed with Human Agency

According to frameworks from Deloitte, organizations can achieve both speed and quality by treating decision-making as a formal discipline and designing systems for explicit human agency. Experts caution that bypassing these steps in favor of pure speed risks creating opaque processes and diluting accountability when clarity is most needed.

Frequently Asked Questions on Building AI Trust

Why is trust more important than the technology itself when rolling out AI programs that affect people?

Employees will not experiment, reskill, or share feedback if they feel unsafe, and they perceive any AI deployment that touches roles, evaluations, or career paths as a personal risk. Research by the Center for Creative Leadership calls this the "trust paradox" of AI: trust is needed most at exactly the moment that introducing AI shakes it. Leaders who treat the rollout as a trust-building exercise first and a software project second report smoother adoption and higher usage in the first six months.

What concrete communication habits build credibility before the first algorithm is switched on?

- Publish a plain-language one-pager for every AI tool: what data it sees, what decision it influences, and who can override it.

- Hold "no-slides" Q&A sessions where objections are recorded live and answered in the same forum the following week.

- Replace hype phrases ("AI-powered") with stakeholder language: tell sales it "flags at-risk deals in CRM"; tell finance it "speeds reconciliation from days to hours."

Sanofi, for example, used this kind of vocabulary matching during its global campaign and reached many employees with few escalations.

Which governance signals convince staff that humans remain in charge?

- A published "human-in-the-room" rule: any hiring, firing, or promotion decision is frozen until a manager signs off.

- An appeal channel that routes to a peer review panel outside the direct chain of command.

- Monthly telemetry dashboards showing how often AI recommendations were modified or rejected.

Morgan Stanley did not release its wealth-management AI to advisers until internal audits showed that the vast majority of its answers met quality thresholds. That result became the headline of the rollout memo and reassured skeptical veterans that the tool was safe to use.
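To make the human-in-the-room rule and the monthly override dashboard more concrete, here is a minimal Python sketch of how the two could fit together. It is an illustration under stated assumptions, not a description of any company's system: the `Recommendation` record, its outcome labels ("accepted", "modified", "rejected"), and the set of gated decision types are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional

# Hypothetical labels for decisions covered by the human-in-the-room rule.
GATED_DECISIONS = {"hire", "fire", "promotion"}
OVERRIDE_OUTCOMES = {"modified", "rejected"}


@dataclass
class Recommendation:
    """Illustrative AI recommendation record; field names are assumptions."""
    month: str                             # reporting month, e.g. "2024-01"
    decision_type: str                     # e.g. "hire", "promotion", "scheduling"
    manager_outcome: Optional[str] = None  # "accepted", "modified", "rejected", or None if unreviewed


def can_execute(rec: Recommendation) -> bool:
    """Gated decisions stay frozen until a manager accepts or modifies them."""
    if rec.decision_type in GATED_DECISIONS:
        return rec.manager_outcome in {"accepted", "modified"}
    return True  # non-gated recommendations do not wait for sign-off in this sketch


def monthly_override_rates(recs: list[Recommendation]) -> dict[str, float]:
    """Per month, the share of reviewed recommendations that managers modified or rejected."""
    reviewed: Counter = Counter()
    overridden: Counter = Counter()
    for rec in recs:
        if rec.manager_outcome is None:
            continue  # unreviewed items count neither as accepted nor as overridden
        reviewed[rec.month] += 1
        if rec.manager_outcome in OVERRIDE_OUTCOMES:
            overridden[rec.month] += 1
    return {month: overridden[month] / reviewed[month] for month in sorted(reviewed)}


records = [
    Recommendation("2024-01", "promotion"),              # frozen: no sign-off yet
    Recommendation("2024-01", "hire", "accepted"),
    Recommendation("2024-01", "scheduling", "rejected"),
    Recommendation("2024-02", "promotion", "modified"),
    Recommendation("2024-02", "scheduling", "accepted"),
]
print(can_execute(records[0]))          # False
print(monthly_override_rates(records))  # {'2024-01': 0.5, '2024-02': 0.5}
```

Counting only reviewed items keeps a backlog of frozen decisions from distorting the override rate that the dashboard reports.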

How do you fund reskilling without making people fear they are next in line for redundancy?

Frame the program as upgrading renewable assets, not salvaging depreciating ones. Best-practice firms separate learning budgets from headcount budgets in board packs, award micro-certificates that stack into pay-grade credentials, and publicly celebrate "smart failures" during pilot projects. Singtel's AI Acceleration Academy trained many staff across roles with paid study time. Completion rates were high and course slots were oversubscribed, a clear signal that staff saw participation as career insurance rather than a precursor to layoffs.

What early metrics tell you trust is rising fast enough to scale?

Track behavioral proxies rather than sentiment alone:

- Voluntary log-ins to the AI sandbox outside mandatory hours.

- Number of employee-generated error or bias tickets (a curve that rises and then falls suggests that staff feel safe reporting problems and that those problems are being fixed).

- Manager override rates in a healthy middle range: high enough to show that humans stay accountable, but not so high that the model is effectively ignored.

If these three indicators move in the right direction for two consecutive quarters, case evidence from German SMEs and Sanofi suggests you can scale to the next workflow without inviting change fatigue or union pushback.
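The two-consecutive-quarters rule can be expressed as a small check. This is a minimal Python sketch, not a method taken from the cited case studies: the `QuarterMetrics` fields, the reading of "positive movement" for each indicator (log-ins rising, tickets falling after an earlier rise, override rate inside a band), and the 5% to 30% override band are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Illustrative "healthy" override band; the article gives no numbers, so these are placeholders.
OVERRIDE_BAND = (0.05, 0.30)


@dataclass
class QuarterMetrics:
    """Hypothetical quarterly snapshot of the three behavioral proxies."""
    voluntary_logins: int  # sandbox log-ins outside mandatory hours
    bias_tickets: int      # employee-filed error or bias tickets
    override_rate: float   # share of AI recommendations modified or rejected


def quarter_is_positive(history: list[QuarterMetrics], i: int) -> bool:
    """True if quarter i shows positive movement on all three indicators, as defined here."""
    if i < 1:
        return False  # need a previous quarter to compare against
    cur, prev = history[i], history[i - 1]
    logins_up = cur.voluntary_logins > prev.voluntary_logins
    # Tickets count as positive only if the curve rose at some earlier point and is now falling.
    had_rise = any(history[j].bias_tickets > history[j - 1].bias_tickets for j in range(1, i))
    tickets_past_peak = had_rise and cur.bias_tickets < prev.bias_tickets
    low, high = OVERRIDE_BAND
    override_ok = low <= cur.override_rate <= high
    return logins_up and tickets_past_peak and override_ok


def ready_to_scale(history: list[QuarterMetrics]) -> bool:
    """Apply the two-consecutive-quarters rule to the two most recent quarters."""
    n = len(history)
    return n >= 3 and quarter_is_positive(history, n - 1) and quarter_is_positive(history, n - 2)


quarters = [
    QuarterMetrics(120, 4, 0.12),
    QuarterMetrics(180, 11, 0.18),  # tickets rise as staff start reporting problems
    QuarterMetrics(240, 8, 0.15),
    QuarterMetrics(310, 5, 0.10),
]
print(ready_to_scale(quarters[:3]))  # False: tickets were still rising in the second quarter
print(ready_to_scale(quarters))      # True: the last two quarters are both positive
```

Requiring an earlier rise before a fall counts as progress reflects the "rising then falling" pattern: a ticket count that only ever falls may mean people are not reporting problems at all.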