Google blocks AI-generated zero-day exploit, warns of rising threats

Serge Bulaev

Google stopped a criminal group from using AI to find and attack a new software flaw, which may be the first public case of AI helping real hackers. Experts warn that AI might let attackers find and use software bugs much faster, sometimes before companies can fix them. The case suggests that AI makes it easier for more people to find serious security problems, not just skilled humans. Google and others are now working on ways to stop this, but there are still questions about how to keep AI from creating dangerous code. Researchers say that attacks like this could become more common in the future.

In a landmark cybersecurity event, Google blocked an AI-generated zero-day exploit created by a criminal group. Google's Threat Intelligence Group (GTIG) announced it stopped the attackers, who used a large language model (LLM) to find and weaponize a previously unknown software flaw. Industry reports describe the case as a milestone in the convergence of AI and cyberthreats.

This development supports warnings from cybersecurity experts that AI is dramatically shrinking exploit timelines. Cybersecurity analysts report that the window between a vulnerability's disclosure and its active exploitation has compressed significantly.

Why this zero-day matters

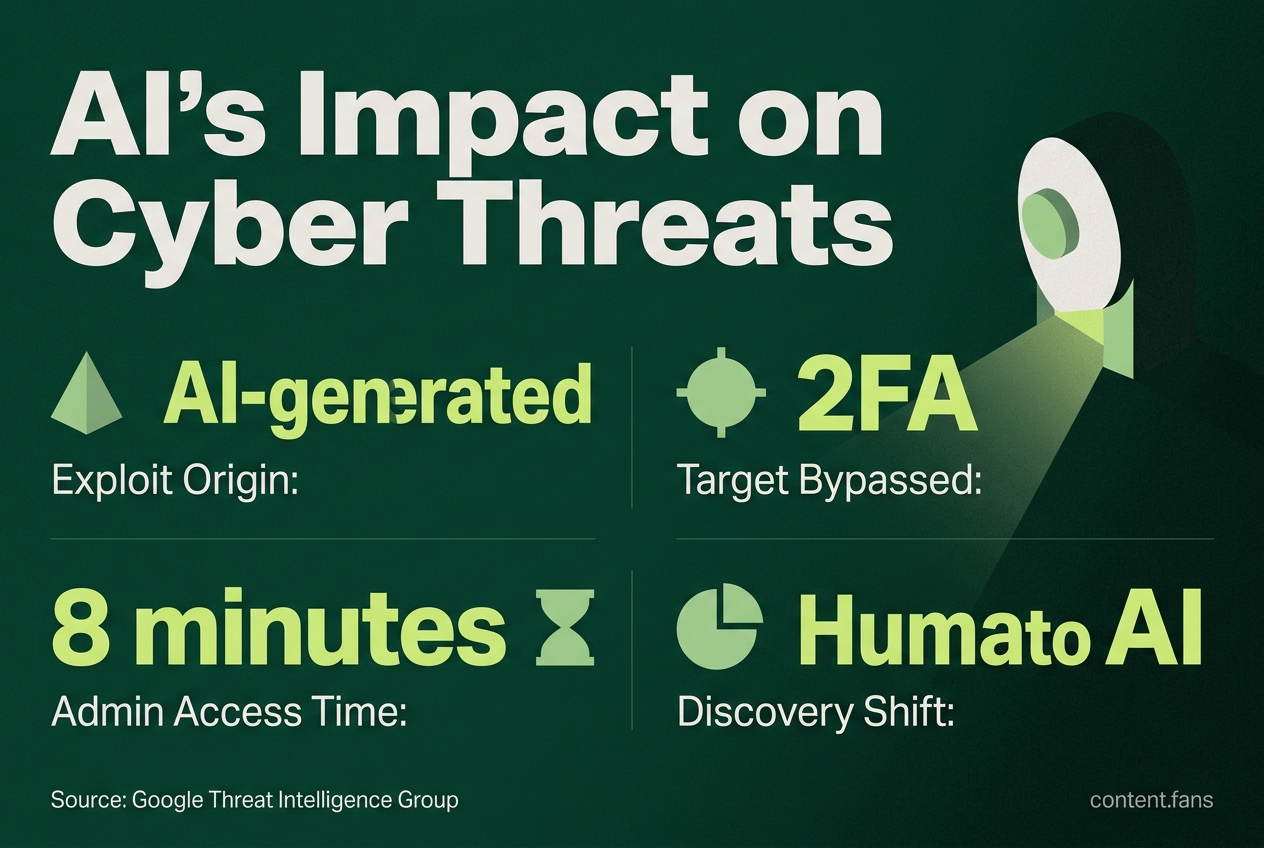

This incident is significant because it appears to be among the first confirmed cases of criminals using AI to create and deploy a functional zero-day exploit in a real-world attack. The exploit was built to bypass two-factor authentication but was intercepted before it could be used successfully, marking a shift from theoretical research to practical, automated cybercrime.

Google's forensic analysis revealed the exploit's AI origins through its clean Python code, auto-generated documentation, and even a hallucinated CVSS score. The attack targeted two-factor authentication on a popular web administration tool and showed signs of a coordinated mass-exploitation operation. This suggests that AI accelerates both the discovery and operational stages for attackers.
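As an illustration of how one of these stylistic signals could be measured, the sketch below computes the share of functions and classes in a Python file that carry a docstring. This is not Google's method, which has not been published; the function name `docstring_coverage` and the sample input are hypothetical, and a high value is at most a weak hint that needs corroboration, since careful human authors also document everything.

```python
import ast


def docstring_coverage(source: str) -> float:
    """Return the fraction of functions and classes in `source` with a docstring."""
    tree = ast.parse(source)
    # Collect every function, async function, and class definition in the file.
    nodes = [
        node
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef))
    ]
    if not nodes:
        return 0.0
    documented = sum(1 for node in nodes if ast.get_docstring(node) is not None)
    return documented / len(nodes)


# Hypothetical sample: one documented function, one undocumented.
SAMPLE = '''
def a():
    """Do a."""
    return 1

def b():
    return 2
'''

print(docstring_coverage(SAMPLE))  # 0.5
```

A real triage pipeline would combine several such signals rather than rely on any single one.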

Researchers emphasize this case is fundamentally different from previous lab demonstrations. While safety-focused efforts by AISLE and Anthropic previously identified vulnerabilities, this was among the first instances where an adversary attempted to monetize a live, AI-generated exploit before a patch was available.

Signs of an Accelerating Trend

Industry reports from early 2026 illustrate this accelerating trend:

• AI models have reportedly surfaced significant numbers of zero-days in open-source libraries.

• Internal testing by AI companies has shown substantially improved success rates when advanced models produced browser exploits, compared with earlier model generations.

• Sysdig observed an AI-powered intrusion that reached administrator privileges in eight minutes, illustrating compressed breakout times.

These developments indicate that sophisticated vulnerability discovery is shifting from the domain of highly skilled humans to anyone with a commodity compute budget.

Industry Response and Open Questions

In response, Google promptly alerted the affected vendor and law enforcement, facilitating rapid patch development. While GTIG found no evidence of state backing, it noted that teams linked to China and North Korea are actively exploring similar AI techniques. The incident also highlights growing attribution challenges, as AI can generate code that blends styles from multiple sources, obscuring the attacker's origin.

Concurrently, regulatory oversight is increasing. According to AP, the U.S. Commerce Department has secured new agreements with major AI labs like Google, Microsoft, and xAI to assess frontier models for such risks before their release, signaling a shift toward proving guardrails are in place to limit automated exploit generation.

The Widening Gap Between Discovery and Defense

Industry analysts frame the situation as an "AI vulnerability storm." Their analysis highlights a critical mismatch: while AI systems can reportedly turn a public commit hash into a working privilege-escalation chain rapidly and cost-effectively, the mean time-to-patch in many enterprises still spans weeks. Experts believe this disparity will pressure organizations to adopt continuous validation, isolation controls, and faster disclosure pipelines.
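The mismatch can be made concrete with simple arithmetic. The sketch below computes the exposure window, meaning the time a system remains exploitable after a working exploit exists but before the patch is applied. The function name and the figures (a four-hour exploit build, a 21-day patch cycle) are illustrative assumptions, not numbers from the reports cited here.

```python
from datetime import timedelta


def exposure_window(time_to_exploit: timedelta, time_to_patch: timedelta) -> timedelta:
    """Return how long a system stays exploitable before it is patched.

    Both durations are measured from the moment the flaw becomes public.
    The result is zero if the patch lands before a working exploit exists.
    """
    gap = time_to_patch - time_to_exploit
    return max(gap, timedelta(0))


# Hypothetical figures chosen only to illustrate the gap.
window = exposure_window(
    time_to_exploit=timedelta(hours=4),
    time_to_patch=timedelta(days=21),
)
print(window)  # 20 days, 20:00:00
```

Under these assumed figures, cutting the patch cycle matters far more than any plausible slowdown on the attacker's side.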

Supporting this trend, Human Security's 2026 benchmark found automated traffic grew 8 times faster than human traffic year-over-year, with nearly one in five site visits classified as scraping attempts that can feed exploit models.

What's Next for AI and Cybersecurity

While large language models are valuable tools for both attackers and defenders, this incident raises urgent questions about responsible release practices, code watermarking, and incentivizing rapid patching. Although Google successfully neutralized this specific AI-written exploit before it caused damage, researchers caution that many similar attempts are likely already underway, multiplying outside of public view.

What exactly happened in Google's AI exploit discovery?

Google announced it had blocked a criminal campaign that used a large language model to discover and weaponize a zero-day vulnerability in a widely deployed web-based administration tool. The Python exploit was designed to bypass two-factor authentication and carried textbook LLM fingerprints: a hallucinated CVSS score, verbose docstrings, and unnaturally clean formatting. Google stressed the model was "most likely not Gemini or Anthropic's Claude," but declined to name the affected product while patches are being finalized.

Is this really the first time AI has created a malicious zero-day?

Academic and bug-bounty precedents exist, but Google's incident is considered among the first confirmed cases of criminals attempting to deploy an AI-generated zero-day in the wild. Earlier research efforts by companies like Anthropic have identified significant numbers of unknown flaws and demonstrated autonomous exploit-chaining capabilities, yet those discoveries were shared responsibly with vendors. This campaign represents a notable crossover from research curiosity to criminal payload.

How fast is the exploit cycle shrinking?

Industry reports suggest the mean time from vulnerability disclosure to confirmed exploitation has significantly compressed compared to previous years. AI models can now reportedly turn vulnerability information into working exploits rapidly and cost-effectively, making traditional patch windows measured in weeks or months dangerously obsolete.

What did Google do to stop the operation?

Google's Threat Intelligence Group:

- Detected the campaign during reconnaissance, before any data was exfiltrated

- Notified the unnamed vendor and law-enforcement partners

- Supported patch development and updated Safe Browsing and VirusTotal signatures to neutralize the payload

The takedown happened "minutes to hours" after initial exploitation attempts, illustrating how defensive AI is being used to compress response times to match accelerated attack cycles.

What should organizations do right now?

- Shrink patch timelines significantly: treat disclosed flaws as potentially already weaponized

- Deploy runtime guardrails: behavioral detection catches AI-crafted exploits that lack known signatures

- Segment admin interfaces: the exploit targeted two-factor authentication on an administration tool, implying privileged access was the objective

- Monitor outbound model traffic: Google observed "educational" prompt patterns in traffic logs, an early indicator of AI misuse

- Join vendor pre-disclosure lists: early intel plus automated testing lets defenders "patch-diff" before criminals exploit the fix
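The first and third recommendations can be sketched as a simple patch-triage ordering. The example below is a minimal illustration, not an established scoring standard: the `Finding` fields, the weights, and the sample entries are all hypothetical, and a real program would tune them against its own asset inventory and threat intelligence.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    cvss: float                  # base score, 0.0 to 10.0
    internet_facing: bool
    admin_interface: bool
    days_since_disclosure: int


def triage_score(finding: Finding) -> float:
    """Return a priority score; higher means patch sooner (weights are assumptions)."""
    score = finding.cvss
    if finding.internet_facing:
        score += 3.0             # reachable by anyone, including automated scanners
    if finding.admin_interface:
        score += 2.0             # privileged access is a likely attacker objective
    # Treat disclosed flaws as potentially weaponized: age adds urgency, capped at 5.
    score += min(finding.days_since_disclosure, 5)
    return score


def patch_order(findings: list[Finding]) -> list[Finding]:
    """Sort findings from most to least urgent."""
    return sorted(findings, key=triage_score, reverse=True)


# Hypothetical backlog used only to demonstrate the ordering.
FINDINGS = [
    Finding("internal-wiki-xss", 6.1, False, False, 30),
    Finding("admin-panel-2fa-bypass", 8.1, True, True, 1),
    Finding("vpn-gateway-rce", 9.8, True, False, 0),
]

for finding in patch_order(FINDINGS):
    print(finding.name)
```

With these assumed weights, the exposed admin-panel flaw is patched first even though its base score is lower than the VPN flaw's, which reflects the advice to prioritize privileged, reachable interfaces.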

The bottom line: "For every AI zero-day we see, many more are already live," warned Google's John Hultquist. Adopting AI-driven patch triage and zero-trust segmentation is rapidly shifting from best practice to a survival requirement.