OpenAI, Microsoft adopt MRC for AI supercomputer networks

Serge Bulaev

The main limit for AI supercomputers may be moving from chips to the network fabric, as early data suggest that network slowdowns can leave many GPUs idle. Companies like OpenAI and Microsoft have started using Multipath Reliable Connection (MRC), which may ease latency and congestion by spreading data across many network paths. Spending on networking is growing and is estimated at 20-30% of AI infrastructure costs. Early results suggest MRC can help model training continue even if some network links fail, though analysts note that more data is needed to know the full impact. It appears that strong network design, not just adding more chips, may bring the biggest benefits to AI systems in the next few years.

The critical bottleneck for AI supercomputers is increasingly the network fabric rather than the chips. OpenAI and Microsoft are adopting Multipath Reliable Connection (MRC) to address network congestion and latency, problems that can idle thousands of GPUs and undermine the performance gains from added compute.

The AI Bottleneck Shifts from Silicon to Network Fabric

According to industry reports, as AI training clusters scale to massive sizes, performance gains from raw GPU counts are plateauing. The true governor of throughput is now network congestion and tail latency. Engineers at OpenAI report that a single delayed data transfer can halt thousands of accelerators, effectively erasing the value of massive GPU investments. This operational reality is forcing a strategic pivot toward more resilient and intelligent network design.
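The straggler effect described above can be illustrated with a minimal sketch. It assumes a fully synchronous step in which every worker waits for the slowest transfer; the function names and the timing figures are hypothetical and are not taken from OpenAI's reports.

```python
def step_time(transfer_times_ms):
    """A synchronous collective finishes only when the slowest transfer does."""
    return max(transfer_times_ms)


def idle_time_ms(transfer_times_ms):
    """Total time all workers spend waiting for the slowest peer."""
    slowest = step_time(transfer_times_ms)
    return sum(slowest - t for t in transfer_times_ms)


# Hypothetical numbers: 999 workers finish in 10 ms, one straggler takes 50 ms.
times = [10.0] * 999 + [50.0]

print(step_time(times))     # 50.0
print(idle_time_ms(times))  # 39960.0
```

In this toy case one delayed transfer multiplies the step time by five, and the 999 fast workers together sit idle for almost 40 seconds of accelerator time in a single step.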

How MRC Solves Critical Network Pain Points

The focus is shifting because burst congestion, tail latency, and single-path fragility have become the primary brake on scaling large AI models. Multipath Reliable Connection (MRC) targets all three by distributing traffic over many routes instead of pinning each flow to a single one, with the aim of more resilient and consistent performance.

Released as an open standard through the Open Compute Project, MRC extends the RoCEv2 protocol, allowing a single data flow to be sprayed across hundreds of network paths. This approach directly counters three core fabric stressors:

- Long-Tail Latency: By creating massive parallelism, MRC mitigates the impact of the slowest packets that would otherwise stall collective operations.

- Burst Congestion: Packet spraying naturally balances load during intense, bidirectional parameter updates, preventing buffers from flooding.

- Single-Path Fragility: Using Segment Routing v6 (SRv6), MRC enables microsecond-level failover, rerouting traffic around a failed link without forcing a full job restart.
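To make the load-balancing and failover ideas in the list above concrete, here is a toy sketch. It is not MRC's actual algorithm: round-robin assignment stands in for whatever spraying policy the protocol uses, SRv6 is not modeled, and the path names and packet counts are invented for illustration.

```python
from collections import Counter


def spray(num_packets, paths, failed=frozenset()):
    """Toy model: assign packets round-robin across the healthy paths."""
    healthy = [p for p in paths if p not in failed]
    if not healthy:
        raise RuntimeError("no healthy path available")
    return Counter(healthy[i % len(healthy)] for i in range(num_packets))


def busiest_link(load):
    """Packets queued on the most heavily loaded path."""
    return max(load.values())


paths = [f"path-{i}" for i in range(4)]  # hypothetical path names

single = spray(1200, paths[:1])                    # one path carries everything
multi = spray(1200, paths)                         # load split four ways
degraded = spray(1200, paths, failed={"path-2"})   # one link down, traffic still flows

print(busiest_link(single))    # 1200
print(busiest_link(multi))     # 300
print(busiest_link(degraded))  # 400
```

In this simplified model, spreading the flow cuts the worst-case queue on any one link to a quarter, and losing a path only raises the load on the survivors instead of stopping the transfer.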

This technology is being tested and piloted at major AI facilities, with growing adoption across cloud infrastructure providers.

Investment Trends and the Rising Cost of Networking

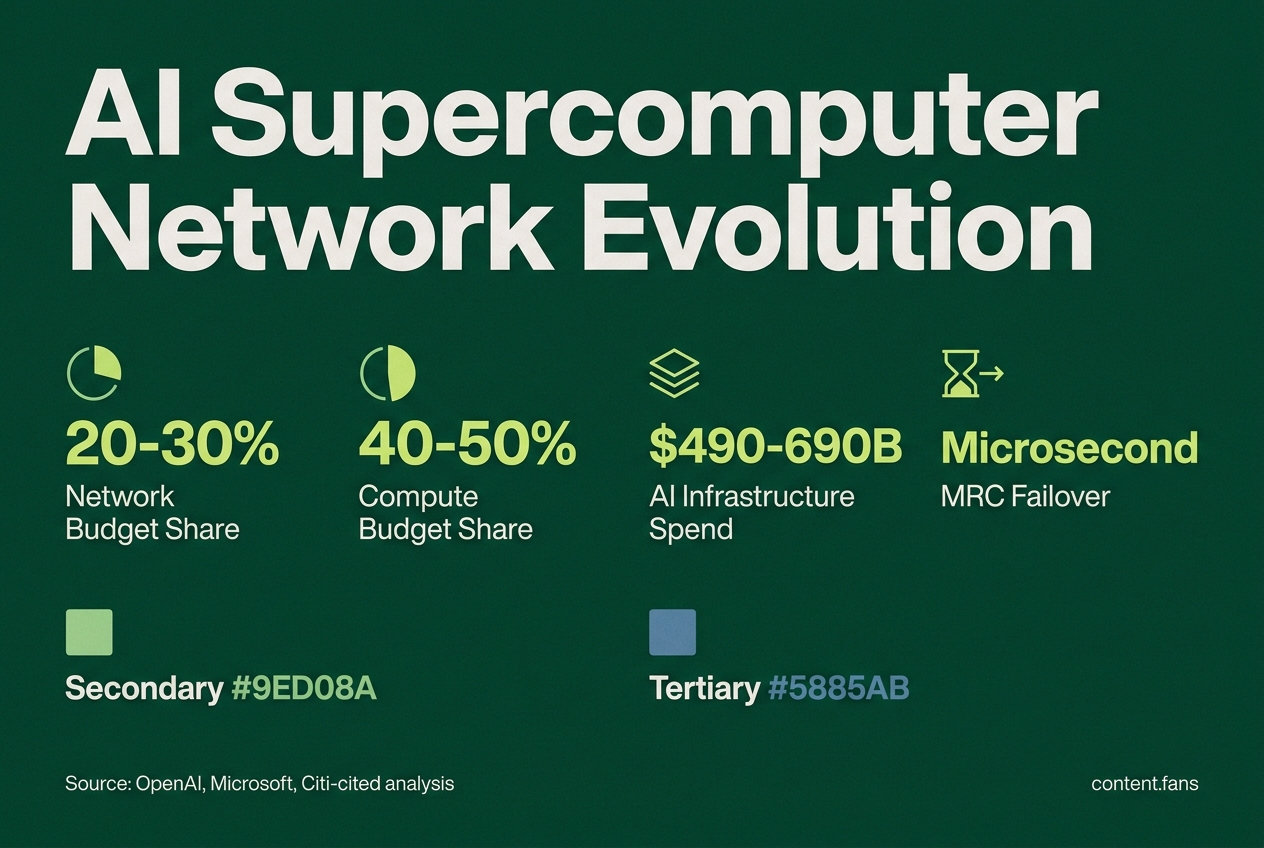

This technical shift is reflected in capital expenditure. While compute hardware still commands 40-50% of AI infrastructure budgets, networking has grown to capture 20-30%, a market valued at approximately $50-70 billion according to a YouTube analysis that cites Citi. This share is steadily increasing as hyperscalers recognize that fabric health is a critical lever for performance. With AI infrastructure spending projected at $490-690 billion for 2026, financial analysts expect networking budgets to remain tightly coupled to GPU outlays, making the fabric a primary focus for upgrades.
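As a rough back-of-the-envelope check, the sketch below applies the 20-30% networking share to the 2026 spending projection. This is an assumption made purely for illustration: the source does not say that the share and the projection cover the same year or scope, which may be why the result differs from the $50-70 billion market figure quoted above. The function name is hypothetical.

```python
def networking_range(total_low, total_high, share_low_pct, share_high_pct):
    """Low and high networking spend implied by a total range and a share range."""
    return total_low * share_low_pct / 100, total_high * share_high_pct / 100


# Figures in billions of dollars, taken from the paragraph above.
low, high = networking_range(490, 690, 20, 30)

print(f"${low:.0f}-{high:.0f} billion")  # $98-207 billion
```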

Early Results and the Future of AI Fabrics

Early metrics from deployments are positive. Engineers report microsecond-level recovery from link failures, a dramatic improvement over the seconds-long delays in legacy setups. This resilience has reportedly allowed frontier LLMs for services like ChatGPT to train without the multi-hour interruptions that previously plagued large-scale runs.

While analysts caution that more data is needed to understand the long-term impact, the initial evidence is compelling. As dataset sizes and inference demands grow, the gap between networking and compute spending may narrow further. For now, the evidence suggests that strong network design, not just more silicon, will drive the most significant performance gains in AI systems over the next few years.