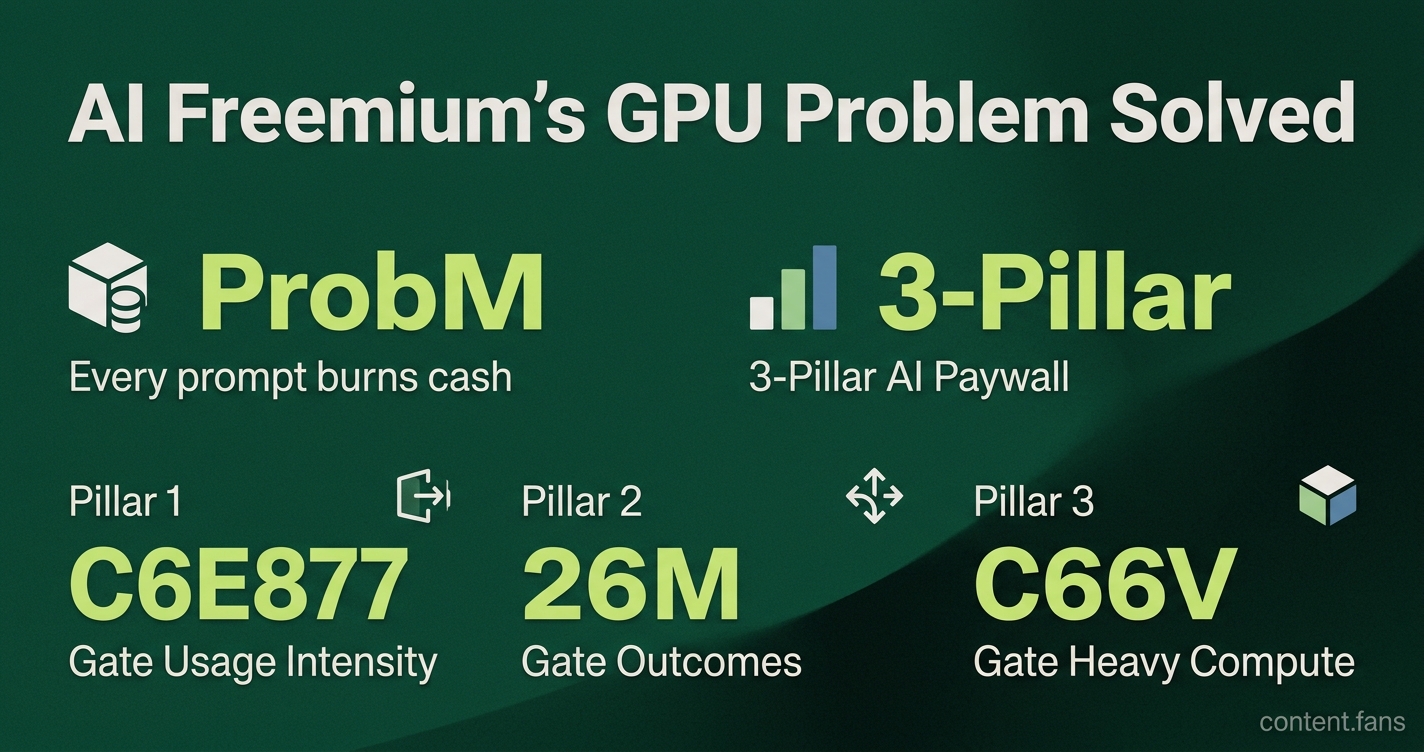

Google vet unveils 3-pillar AI paywall to fix freemium's GPU problem

Serge Bulaev

Vikas Kansal, who worked on Google Gemini, argues that classic freemium pricing breaks down for AI apps because every user action consumes costly GPU time. His three-pillar framework addresses this by gating three things separately: how much people use the product, which high-value outcomes they can unlock, and the most expensive capabilities, such as video rendering. The approach may let companies contain costs while still offering meaningful free access. Early signs suggest that more companies are adopting hybrid pricing tied to actual compute consumption, which could produce steadier margins: free use for the many, paid tiers for heavy or advanced use.

A new 3-pillar AI paywall framework from Google Gemini veteran Vikas Kansal targets the GPU cost problem plaguing freemium AI apps. Drawing on his experience, Kansal argues that legacy SaaS models collapse when every user prompt spins up costly GPUs, which demands a new approach to monetization.

This view is supported by a guest essay in Lenny's Newsletter, which notes that "serving one more free user costs almost nothing in SaaS, but every prompt burns cash in AI." Kansal expands on this insight in a LinkedIn post, outlining a tiering approach that maps pricing to real compute spend rather than to a single "smartest model."

Kansal's model identifies three concrete cost drivers (usage intensity, desired outcomes, and heavy-compute modalities) and turns each into a distinct upgrade lever.

Why freemium stalls for GPU-hungry apps

The three-pillar AI paywall is a pricing strategy that gates features based on their underlying compute cost. It creates separate upgrade triggers for usage intensity (like prompts per day), specific outcomes (like automated workflows), and heavy-compute modalities (like video generation), aligning price with the real cost of service.

Traditional SaaS relies on near-zero marginal cost, making broad free tiers sustainable. AI reverses that math. Each long prompt can light up dozens of GPUs; power users may create significantly higher bills than average users. As Kansal puts it, gating only the smartest model "turns power users into GPU-melting freeloaders."
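A back-of-the-envelope sketch makes the reversal concrete. The per-prompt costs and usage levels below are hypothetical placeholders, not figures from Kansal or Google; the only point is that serving cost scales linearly with prompt volume, so the gap between a near-zero and a GPU-priced marginal cost widens with every power user.

```python
# Hypothetical marginal costs in USD; placeholders, not real prices.
SAAS_COST_PER_REQUEST = 0.00001  # classic SaaS: near-zero marginal cost
AI_COST_PER_PROMPT = 0.002       # assumed GPU cost of serving one prompt


def monthly_cost(prompts_per_day: int, cost_per_prompt: float, days: int = 30) -> float:
    """Serving cost for one user grows linearly with usage volume."""
    return prompts_per_day * days * cost_per_prompt


# Hypothetical usage levels for an average user and a power user.
for label, daily_prompts in [("average user", 20), ("power user", 500)]:
    saas = monthly_cost(daily_prompts, SAAS_COST_PER_REQUEST)
    ai = monthly_cost(daily_prompts, AI_COST_PER_PROMPT)
    print(f"{label}: SaaS ${saas:.2f}/month vs AI ${ai:.2f}/month")
```

Under these assumptions the power user costs 25 times as much to serve as the average user in both models, but only the AI bill is large enough to hurt, which is why a free tier that caps nothing invites runaway spend.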

According to the newsletter account, Google's first Gemini Advanced tier illustrates the risk: many users stayed on the free model for quick queries yet expected high-compute sessions during peak demand, which made spend unpredictable.

The three pillars in practice

• Gate usage intensity - Free buckets cover low-volume prompts and smaller context windows, while higher tiers unlock more daily prompts and expanded context capabilities.

• Gate outcomes - Features that collapse multi-step tasks, such as one-click automation flows, live behind paywalls because they demonstrably save time and therefore carry clear ROI.

• Gate the heaviest compute modalities - Resource-intensive features like advanced video rendering or real-time simulations remain exclusive to higher tiers; text and basic image generation stay open to encourage adoption.
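One way to picture the three pillars is as three independent checks on every request. The sketch below is a hypothetical illustration, not Kansal's or Google's implementation: the `Tier` and `Request` types, the feature names, and all limits are invented for the example. Text and basic image requests stay open on every tier, mirroring the third bullet above.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional

# Modalities left open on every tier to encourage adoption (Pillar 3).
OPEN_MODALITIES = frozenset({"text", "image"})


@dataclass(frozen=True)
class Tier:
    """Hypothetical tier: one limit or allow-list per pillar."""
    name: str
    daily_prompts: int                               # Pillar 1: usage intensity
    max_context_tokens: int                          # Pillar 1: context-window size
    outcome_features: frozenset[str] = frozenset()   # Pillar 2: gated outcomes
    heavy_modalities: frozenset[str] = frozenset()   # Pillar 3: heavy compute


@dataclass(frozen=True)
class Request:
    prompts_used_today: int
    context_tokens: int
    outcome: Optional[str] = None   # e.g. a one-click workflow
    modality: str = "text"


def check_paywall(tier: Tier, req: Request) -> Optional[str]:
    """Return the pillar that blocks the request, or None if it is allowed.

    Checks run in pillar order; which gate fires first is a design choice.
    """
    if (req.prompts_used_today >= tier.daily_prompts
            or req.context_tokens > tier.max_context_tokens):
        return "usage_intensity"
    if req.outcome is not None and req.outcome not in tier.outcome_features:
        return "outcome"
    if req.modality not in OPEN_MODALITIES and req.modality not in tier.heavy_modalities:
        return "heavy_modality"
    return None


# Hypothetical tiers; every number and feature name is illustrative.
FREE = Tier("free", daily_prompts=50, max_context_tokens=32_000)
PRO = Tier(
    "pro",
    daily_prompts=1_000,
    max_context_tokens=1_000_000,
    outcome_features=frozenset({"slide_deck"}),
    heavy_modalities=frozenset({"cinematic_video"}),
)
```

With these placeholder tiers, `check_paywall(FREE, Request(10, 4_000, modality="cinematic_video"))` returns `"heavy_modality"`, the moment to show an upgrade prompt, while the same request against `PRO` returns `None`. Returning the blocking pillar rather than a bare yes/no lets the product tailor the upsell message to the cost driver that triggered it.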

Kansal recommends setting the "hard paywall the moment a user wants to render a cinematic video," keeping margin-eating workloads controllable. Outcome gating, meanwhile, aligns price with productivity: if an agent books meetings or drafts full slide decks, businesses can quantify the benefit and justify the upgrade.

Early market response

Hybrid pricing is already gaining traction. Industry reports suggest that a growing number of AI companies pair pay-per-token or pay-per-call pricing with prepaid credits, a sign that vendors want revenue that is predictable yet elastic. Enterprise AI deals also increasingly hinge on performance metrics rather than traditional seat counts, reflecting rising comfort with outcome-based contracts.
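A minimal sketch of how such a hybrid might be billed, assuming a plan that bundles prepaid token credits and charges any excess at a per-1,000-token overage rate. The function name, the token unit, and the rate are hypothetical; real vendors meter and round in their own ways.

```python
from __future__ import annotations


def hybrid_invoice(tokens_used: int, prepaid_tokens: int,
                   overage_usd_per_1k: float) -> tuple[int, float]:
    """Draw down prepaid credits first, then bill the excess per 1,000 tokens.

    Returns (prepaid tokens remaining, overage charge in USD).
    """
    if tokens_used < 0 or prepaid_tokens < 0:
        raise ValueError("token counts must be non-negative")
    covered = min(tokens_used, prepaid_tokens)   # predictable: paid up front
    overage_tokens = tokens_used - covered        # elastic: billed on usage
    return prepaid_tokens - covered, overage_tokens / 1000 * overage_usd_per_1k
```

For example, `hybrid_invoice(1_250_000, 1_000_000, 0.5)` exhausts the prepaid credits and returns `(0, 125.0)`: the prepaid portion is the predictable revenue, and the overage is the elastic part.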

Major AI providers are implementing tiers that track compute costs. Some keep large context windows broadly available while charging for volume, a Pillar 1 dynamic. Computationally intensive features such as real-time AI models, meanwhile, typically remain restricted to paid users, in line with Pillar 3's heavy-compute principle.

Takeaways for product teams

Developers evaluating paywalls can benchmark against Kansal's matrix:

- Map every tier to a clear compute driver (tokens, calls, GPU hours).

- Quantify outcome features in user-facing language (minutes saved, tasks collapsed).

- Keep expensive modalities scarce until conversion makes economic sense.

The framework points to a possible path to predictable margins without throttling growth, giving AI vendors room to serve both casual and power users while staying solvent.

Why does traditional freemium break down for AI products?

Every prompt fires GPUs, so each free user burns real money.

In classic SaaS the marginal cost is pennies; in AI it scales linearly with volume. The old playbook - give away the basics, gate the "smartest model" - turns power users into GPU-melting freeloaders who never upgrade.

What exactly is the three-pillar paywall?

- Gate usage intensity - prepaid bundles of prompts, tokens and context-window size

- Gate outcomes - charge for one-click workflows that save hours (think "generate 50-slide deck from 3 bullet points")

- Gate heavy-compute modalities - reserve cinematic video, real-time 3-D or large context windows for the top tier

The pillars tie upgrade triggers to measurable cost drivers and user ROI, not to an abstract "premium model" switch.

How does prepaid intensity pricing help finance and marketing?

Predictable unit economics.

Instead of praying that heavy users convert before the monthly bill arrives, you sell tiered buckets (e.g., varying prompt allowances). Revenue is collected up-front, GPU budgets are pre-funded, and finance can finally sleep at night.
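The mechanics behind "pre-funded" GPU budgets can be sketched as a bucket that is paid for up front and debited per prompt. `PrepaidBucket`, its fields, and the price, allowance, and cost-per-prompt values in the usage note are all hypothetical. The design choice worth noting is that the bucket refuses to overdraw, so the worst-case serving cost is known the moment the bucket is sold.

```python
from dataclasses import dataclass


@dataclass
class PrepaidBucket:
    """Hypothetical prepaid prompt bucket: collected up front, debited per use."""
    price_usd: float
    prompt_allowance: int
    used: int = 0

    @property
    def remaining(self) -> int:
        return self.prompt_allowance - self.used

    def consume(self, prompts: int = 1) -> bool:
        """Debit prompts if the balance covers them.

        Returns False instead of overdrawing, which is the natural point
        to offer a larger bucket.
        """
        if prompts <= 0:
            raise ValueError("prompts must be positive")
        if prompts > self.remaining:
            return False
        self.used += prompts
        return True

    def worst_case_gpu_cost(self, cost_per_prompt: float) -> float:
        """Upper bound on serving cost: the whole allowance gets used."""
        return self.prompt_allowance * cost_per_prompt

    def worst_case_margin(self, cost_per_prompt: float) -> float:
        """Revenue minus the worst-case serving cost, known at sale time."""
        return self.price_usd - self.worst_case_gpu_cost(cost_per_prompt)
```

With `PrepaidBucket(price_usd=20.0, prompt_allowance=1_000)` and an assumed $0.002 per prompt, the worst-case GPU cost is $2.00 and the worst-case margin $18.00 before a single prompt is served.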

Google's move from a single "Gemini Advanced" tier to multiple pricing buckets is a live case study.

Why are outcomes the highest-conversion upsell?

Users pay when they can see time saved in hard numbers.

Collapsing a 20-step research task into one click or turning a 3-hour report into a 5-minute prompt delivers an instant ROI story, making the upgrade feel free. Pillar 2 monetizes productivity, not raw compute - the part of the value stack customers actually budget for.
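The ROI story behind Pillar 2 is simple arithmetic. This sketch turns the "3-hour report into a 5-minute prompt" example into minutes saved and a value multiple; the run frequency, hourly rate, and plan price are hypothetical inputs, and `outcome_roi` is an illustrative helper rather than part of Kansal's framework.

```python
from typing import NamedTuple


class OutcomeROI(NamedTuple):
    minutes_saved: float   # per month
    value_usd: float       # time saved, priced at the given hourly rate
    roi_multiple: float    # value divided by the plan price


def outcome_roi(minutes_before: float, minutes_after: float, runs_per_month: int,
                hourly_rate_usd: float, price_usd_per_month: float) -> OutcomeROI:
    """Estimate the monthly value of an outcome feature against its price."""
    if price_usd_per_month <= 0:
        raise ValueError("price must be positive")
    minutes_saved = max(minutes_before - minutes_after, 0) * runs_per_month
    value_usd = minutes_saved / 60 * hourly_rate_usd
    return OutcomeROI(minutes_saved, value_usd, value_usd / price_usd_per_month)


# A 3-hour report done in 5 minutes, four times a month (hypothetical inputs).
report = outcome_roi(180, 5, runs_per_month=4, hourly_rate_usd=60.0,
                     price_usd_per_month=20.0)
print(f"{report.minutes_saved:.0f} minutes saved, worth ${report.value_usd:.0f}, "
      f"{report.roi_multiple:.0f}x the plan price")
```

Under these assumptions the feature saves 700 minutes a month, worth roughly 35 times its price, which is the kind of figure that makes an outcome upgrade feel free.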

Which heavy modalities work best as upgrade triggers?

Anything that screams "this must be expensive" to the user:

- cinematic video generation

- real-time world models

- very large context windows, priced at a premium

Reserve these for premium tiers and you get both a capacity throttle and a shiny object that converts curious experimenters into paying customers.