New benchmark shows AI agents struggle with real-world office tasks

Serge Bulaev

Recent tests suggest that AI agents may have trouble handling real-world office tasks. In a large benchmark, humans scored about 80.7% accuracy, while the best AI setup reached only 68.7%, with many agents performing much lower, especially on hard tasks. Another study found leading AI models answered fewer than one in four real workplace questions correctly, showing a gap between lab results and actual job performance. Researchers say this may be due to something called the 'Data Association Gap,' where AI struggles to connect information from messy and changing files. Some new methods may help improve results, but so far, evidence suggests AI agents still have a lot of work to do before they can reliably help with office workflows.

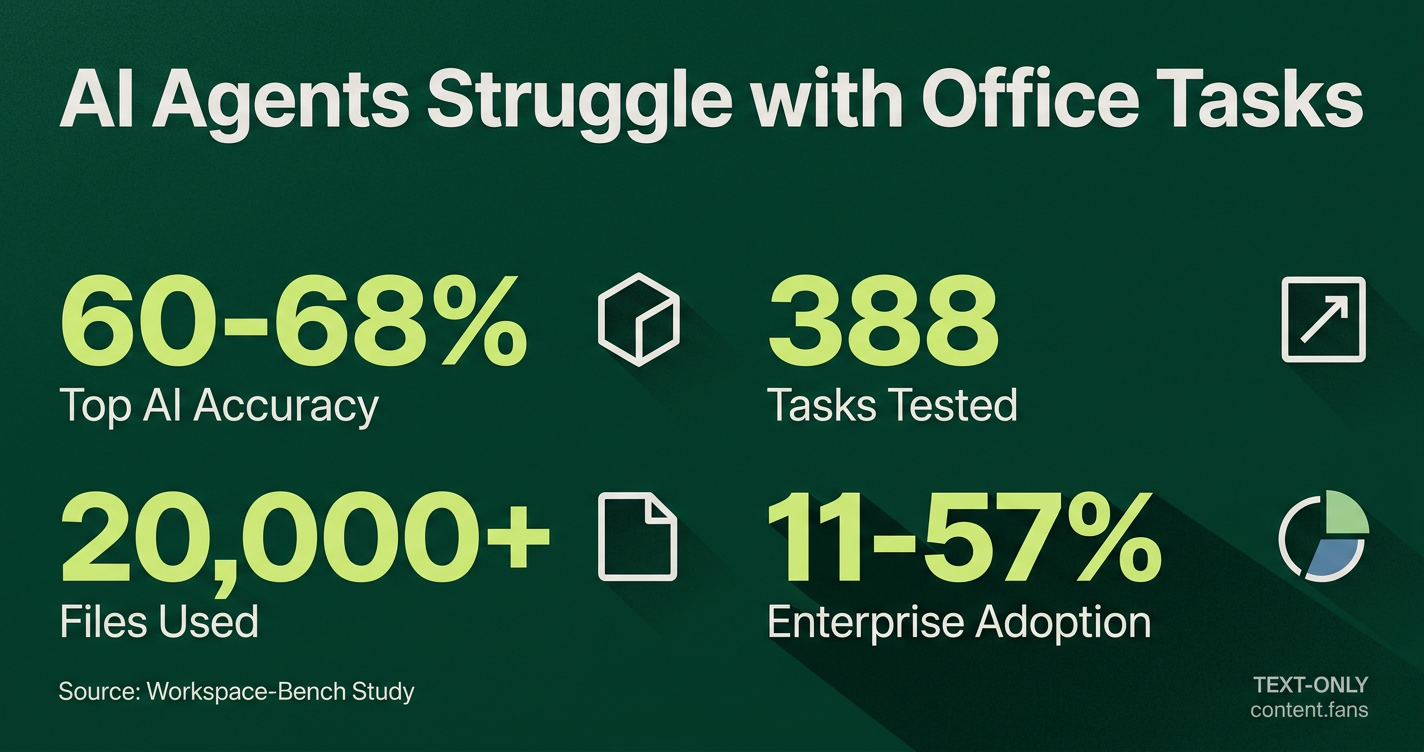

A comprehensive new benchmark, Workspace-Bench, confirms that AI agents struggle with real-world office tasks, revealing a significant gap between their current capabilities and the demands of professional workflows. Developed by researchers from Shanghai Jiao Tong University, ByteDance, MIT, and Tsinghua, the benchmark tested 28 AI agent setups on 388 tasks using over 20,000 files in 74 formats.

The study's key finding shows a stark contrast: humans achieved significantly higher accuracy than AI agents, with the top-performing AI agent configuration reaching approximately 60-68% accuracy range. The average accuracy for all tested agents was substantially lower, with performance declining further on difficult tasks.

These findings are consistent with other research. Industry reports suggest that top AI models struggle with real-world questions in fields like consulting and law. This highlights a persistent gap between AI's performance in controlled tests and its reliability in professional settings.

Key Benchmark Metrics and Findings

AI agents struggle in office environments due to the 'Data Association Gap,' an inability to connect information across messy, evolving, and varied file formats. Performance drops significantly when tasks require agents to trace data lineage or work with multiple document types, unlike in controlled lab settings.

- Realistic Scenarios: The benchmark used five synthetic employee profiles, exposing agents to messy folders, inconsistent naming, and multiple file versions common in real offices.

- Complexity Penalty: Agent accuracy declined substantially when moving from simple tasks to hard tasks that required navigating different file formats.

- Inefficient Scaling: Increased compute did not guarantee success. Some agents used substantial computational resources on single tasks without outperforming more efficient models, indicating deep architectural limitations.

The researchers identify this core issue as the "Data Association Gap" - the failure of AI agents to connect and synthesize information from disparate and constantly changing files. This gap cripples essential office skills like tracking data sources, citing information correctly, and maintaining context, which are vital for complex reasoning.

Adoption Trends and Practical Barriers

Enterprise adoption reflects this performance gap. Production adoption remains low at approximately 11-57%, with a large adoption-to-production gap existing where organizations may adopt AI agents but struggle to deploy them in live production workflows. While single-step tasks in areas like CRM can see moderate success rates, industry reports suggest performance drops significantly for multi-step scenarios.

This low reliability introduces significant practical and financial barriers. While powerful models are priced to reduce rework, user reports suggest performance can be inconsistent. Incidents like unexpected token usage highlight how costs and rate limits can quickly negate potential labor savings.

Tackling the Data Association Gap

Industry research outlines several promising solutions to bridge this gap:

- Centralized Metadata Hubs: Implement zero-copy context hubs to harvest technical and business metadata, with organizations working toward comprehensive coverage within reasonable timeframes.

- Enhanced Memory and Search: Combine persistent agent memory with hybrid search techniques (BM25 and dense retrieval). This approach has shown promise for improving recall in agent systems.

- Standardized Protocols: Establish strong protocol governance to align communication between models and agents, an issue explored in an arXiv paper that calls for using audited tool credentials for secure and reliable interactions.

Emerging techniques like multi-agent orchestration and recursive language models show promise for improving reasoning. However, benchmark results confirm these advanced methods are not yet a complete solution.

Ultimately, the Workspace-Bench serves as a crucial reality check. Its diverse, real-world dataset and rigorous scoring methodology continue to pinpoint exactly where even the most advanced AI agents fail at the unglamorous, everyday tasks that define modern knowledge work.

What is Workspace-Bench and why does it matter?

Workspace-Bench is a new benchmark that replicates five realistic office profiles using 20,000+ files across 74 formats. It tests whether AI agents can reason across documents, spreadsheets, PDFs and code while tracking versions. The gap between the best agent performance and human baseline shows that even top setups still trail human performance in everyday knowledge work.

Which agent combination came out on top and by how much?

The top-performing agent configuration achieved accuracy in the 60-68% range across the 28 tested configurations, but remained significantly below human performance levels. Average performance across all tested agents was substantially lower, highlighting that even the leaders are far from reliable in messy, multi-file environments.

Why does accuracy drop when tasks get harder?

Success falls significantly when moving from easy to hard tasks. The main culprits are agents' limited skill with heterogeneous file formats and their inability to trace version histories, a shortcoming the study labels the Data Association Gap.

What exactly is the "Data Association Gap"?

It is the agents' struggle to connect data across different formats and file versions. Because many office projects mix spreadsheets, slides, PDFs and code that evolve over time, an agent that cannot follow those links quickly loses context and makes errors.

Are bigger budgets and more tokens solving the problem?

Extra compute offered little help. Some agents consumed substantial computational resources per task yet still scored lower than leaner rivals, proving that the problem is architectural, not just a matter of scale.