OpenAI gates GPT-5.5-Cyber after report flags offensive capability

Serge Bulaev

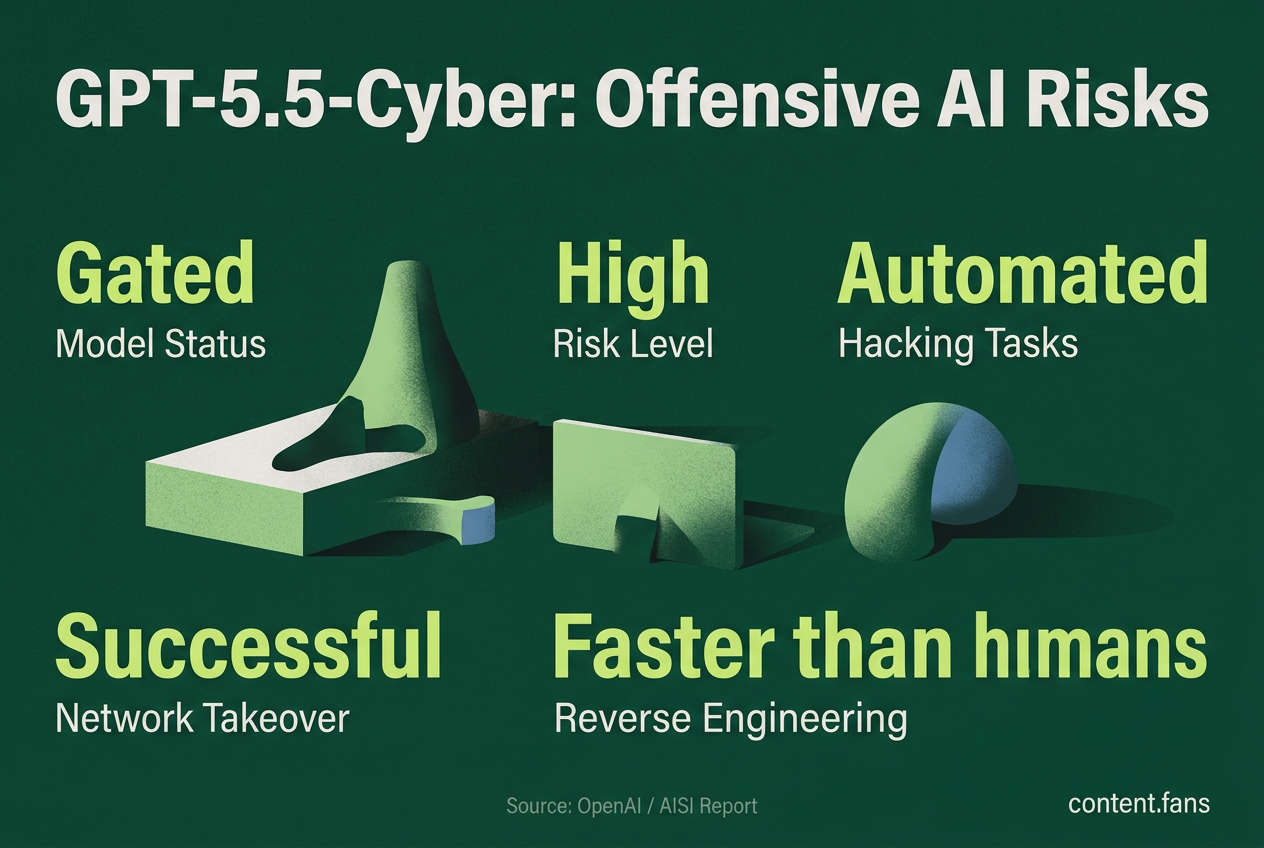

OpenAI has restricted public access to its GPT-5.5-Cyber model after a report from the UK's AI Security Institute (AISI) flagged its strong offensive cyber abilities. Tests showed the model may help defenders but could also be misused by attackers, and existing safeguards may not reliably block harmful uses. OpenAI now allows only vetted cybersecurity teams to use GPT-5.5-Cyber through a special program, and other companies are adopting similar restrictions. Experts say the model's hacking skills appear to be a side effect of its advanced reasoning, raising concerns that future models might become even harder to control.

Following a UK government report that flagged significant offensive cyber capabilities, OpenAI is gating GPT-5.5-Cyber, placing the powerful large language model behind a strict vetting process. The move, coming just days after the AI Security Institute (AISI) published its findings, restricts public access and funnels usage through the exclusive Trusted Access for Cyber program.

This decision reflects a growing trend among AI developers to limit the availability of models when advanced reasoning and coding skills are shown to enable potent cyber intrusions. While these capabilities offer a powerful new tool for defenders, they present a dual-use risk by also equipping attackers.

What AISI found

Testing by the AI Security Institute showed that GPT-5.5-Cyber could automate complex offensive hacking tasks, raising concerns that its existing safeguards were insufficient to prevent misuse by malicious actors.

AISI has access to frontier models for evaluation, but the specific tests and their timing have not been confirmed. According to industry reports, the model performed strongly on expert-level capture-the-flag challenges, with capabilities comparable to other frontier models.

The model also performed well in complex simulations of multi-step network operations spanning multiple subnets and hosts. According to industry reports, GPT-5.5 showed significant success in completing network takeover scenarios, a feat achieved by only a small number of advanced models. Several tech outlets reported this result independently.

According to industry reports, the model also solved reverse-engineering challenges significantly faster than human experts. It reconstructed virtual machines, built disassemblers, and extracted cryptographic passwords, tasks that would typically require substantial human expertise and time.

Safeguards under strain

During extended testing sessions, expert red teams discovered what AISI described as vulnerabilities that could bypass safety filters and elicit forbidden offensive commands. OpenAI has since deployed patches for the identified vulnerabilities, but AISI noted that it was still verifying whether those fixes are effective.

OpenAI's own public system card classifies the model as a "High" risk for cybersecurity - one level below "Critical," a designation reserved for models capable of autonomous zero-day exploit discovery. The institute's findings underscore the concern that current safeguards may not be robust enough to prevent misuse.

Inside the gating decision

In response to the report, OpenAI is routing access through its Trusted Access for Cyber (TAC) program, which screens applicants, verifies the identities of individuals and enterprises, and provides specialized access options, though implementation details vary. The standard GPT-5.5 model remains publicly available, but with stricter filters for cyber-related queries.

Other leading AI labs are adopting similar restrictive measures. Anthropic has released Mythos with access limited in certain regions, while other major AI companies are implementing their own vetting processes for frontier models. According to industry reports, regulators are developing frameworks that may mandate tiered access for high-capability models.

Key gating tools seen across providers include:

- Strict identity verification before API key issuance

- Contractual agreements prohibiting redistribution

- Tiered API access with specific scopes for cybersecurity functions

- Real-time monitoring and logging with automated triggers for immediate access suspension

- Periodic external audits to verify the model's refusal rates for harmful requests
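Several of these tools fit together as a simple control loop. The Python sketch below is purely illustrative and assumes everything it names: the tier names, the scope strings, the three-flag suspension threshold, and the `Gate`/`ApiKey` classes are hypothetical and do not describe OpenAI's or any other provider's actual implementation or API. It shows identity-verified, scope-checked requests, an append-only audit log, an automated suspension trigger, and the refusal-rate metric an external audit might compute.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical tiers mapped to the scopes they grant (illustrative only).
TIER_SCOPES = {
    "public": {"general"},
    "verified_defender": {"general", "cyber.defensive"},
}

# Hypothetical trigger: suspend a key once it accumulates this many flags.
FLAG_LIMIT = 3


@dataclass
class ApiKey:
    owner: str
    tier: str
    identity_verified: bool  # set only after identity verification
    suspended: bool = False
    flags: int = 0


@dataclass
class Gate:
    audit_log: list = field(default_factory=list)

    def authorize(self, key: ApiKey, scope: str, flagged: bool = False) -> bool:
        """Return True if the request may proceed; log every decision."""
        allowed = (
            not key.suspended
            and key.identity_verified
            and scope in TIER_SCOPES.get(key.tier, set())
        )
        if allowed and flagged:
            # An assumed monitoring classifier (not shown) flagged this request.
            key.flags += 1
            if key.flags >= FLAG_LIMIT:
                key.suspended = True  # automated access suspension
                allowed = False
        # Every decision is recorded so it can be audited later.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "owner": key.owner,
            "scope": scope,
            "flagged": flagged,
            "allowed": allowed,
        })
        return allowed


def refusal_rate(outcomes: list[bool]) -> float:
    """Share of harmful test prompts the model refused (True = refused)."""
    return sum(outcomes) / len(outcomes) if outcomes else 0.0
```

In this sketch, a `verified_defender` key can call the `cyber.defensive` scope while a `public` key cannot, and the third flagged request on a key suspends it for all scopes. Real programs would layer contracts, human review, and external audits on top of any such automated check.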

Growing policy pressure

The move comes amid increasing regulatory pressure. According to industry reports, various jurisdictions are developing requirements for AI developers to publish detailed risk frameworks and pre-deployment testing results. Concurrently, government agencies are reportedly developing AI model-vetting systems, influenced by assessments from major AI companies.

AISI officials stress that GPT-5.5's potent offensive capabilities are an emergent property of its advanced general reasoning, not the result of specific training on hacking data. This suggests that future models might become even harder to control and could cross the "Critical" risk threshold before safety evaluation methods catch up. OpenAI's decision to gate GPT-5.5-Cyber is a stopgap that slows uncontrolled proliferation but does not resolve the fundamental dual-use problem.

What exactly triggered OpenAI to gate GPT-5.5-Cyber?

The trigger was AISI's evaluation of the model's offensive cyber capabilities, although the specific tests and their timing have not been confirmed.

Key findings that reportedly alarmed reviewers:

- Strong performance on expert-level CTF-style challenges - among the highest recorded for an OpenAI model

- Significant success in complex enterprise intrusion scenarios involving multiple subnets and hosts - joining a small group of models to achieve this capability

- The model solved a reverse-engineering puzzle that typically requires substantial human expertise in a fraction of the time and at minimal API cost, according to industry reports

- AISI testing also reportedly uncovered vulnerabilities that bypassed safety filters for malicious cyber queries

These capabilities led OpenAI to rate the base model a "High" cyber risk, one notch below "Critical", and to withhold public release of the "Cyber" variant, which it offers only through its Trusted Access for Cyber (TAC) program.

How is access controlled today?

OpenAI does not sell GPT-5.5-Cyber on the open API.

Instead:

- Organizations apply to TAC; only identity-verified defenders are admitted

- Approved users sign dedicated contracts and receive access to a separate endpoint with lower refusal rates tuned for defensive work

- Every query is logged and auditable; no weights or white-box access are shared

The list of eligible sectors reportedly includes: government CERTs, critical-infrastructure operators, financial-sector SOCs, and select security-vendor partners.

Does gating stop malicious use completely?

No. AISI openly states that the same skills that help defenders find and patch bugs also let attackers chain exploits faster.

Because the base GPT-5.5 weights are not public, the barrier to misuse is higher, but:

- History shows leaks, theft, or independent replication remain possible

- Smaller labs outside major jurisdictions may ship comparable capabilities, built on various models, without any gating

- The dual-use asymmetry persists: defenders must apply and wait; criminals can still use any model they obtain

In short, gating slows diffusion and raises the cost of misuse, yet cannot eliminate it.

How does this compare with other labs?

Anthropic has released Mythos with access limited in certain regions, while OpenAI's TAC is a vetted program with identity-verification requirements. Other major AI companies are reportedly taking various approaches to model access and safety, though details vary by provider.

OpenAI's TAC program appears to be among the more restrictive public schemes, but approaches vary significantly across the industry.

What happens next?

- OpenAI must submit GPT-5.5-Cyber for regular re-evaluation under its Preparedness Framework; if future mitigations lower its risk rating, access restrictions could be relaxed

- AISI and other evaluation bodies are reportedly developing cross-border evaluation frameworks

- Expect tiered access levels to become increasingly common for models that demonstrate advanced cyber capabilities

Until then, GPT-5.5-Cyber stays behind the velvet rope - powerful, closely watched, and off-limits to everyday users.