Enterprises Adopt 4 Controls for AI Agent Governance, Compliance

Serge Bulaev

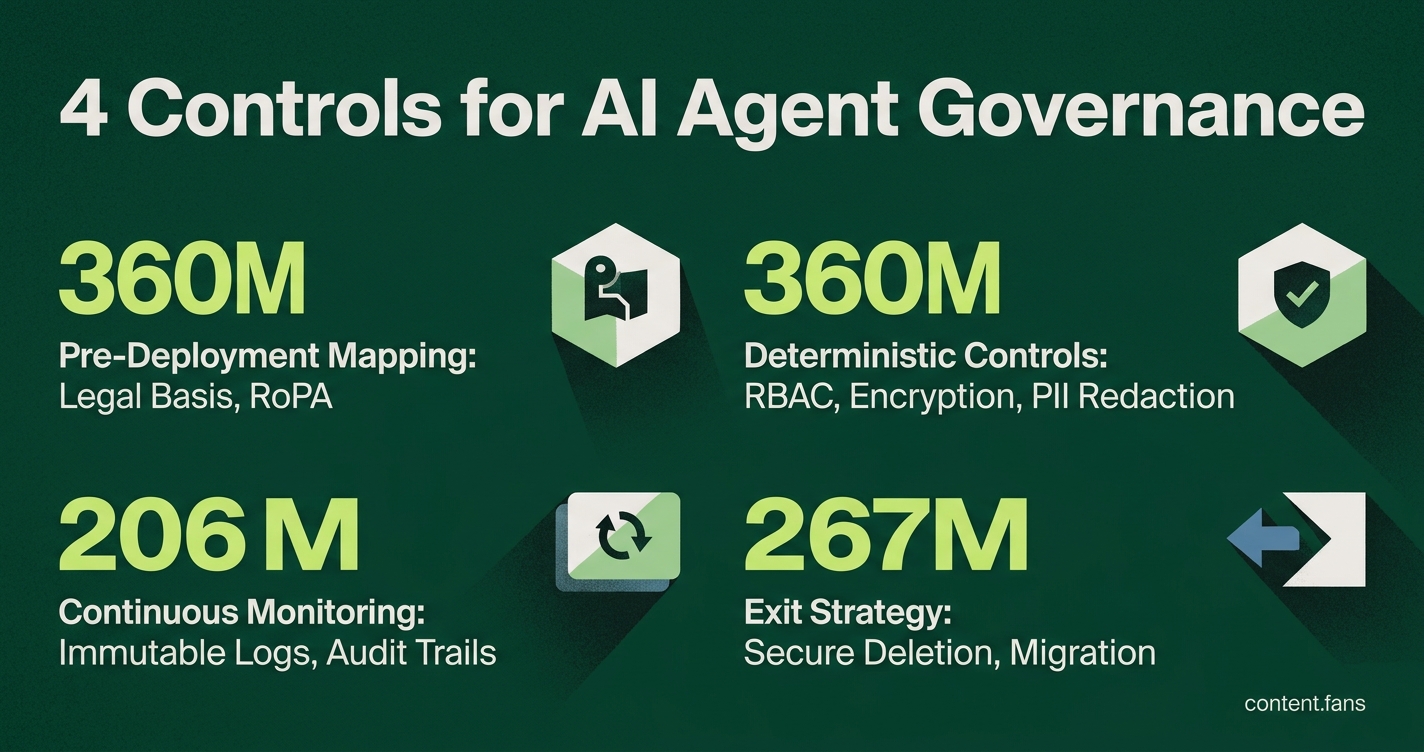

Enterprises may need to use four main controls to manage risks and compliance when using cloud-based AI agents. First, before deployment, they should map all data sources accessed by the agent and confirm legal bases for using each type of data. Second, during operations, organizations might set up strict controls like filtering out data without consent, encrypting data, and using automated redaction and strong access controls. Third, continuous monitoring and logging appear to help maintain security and traceability, with periodic permission reviews and audit trails for incident response. Finally, having a clear exit plan, including secure data deletion and export, is suggested to ensure proper closure at the end of a vendor relationship.

Effective AI agent governance is central to deploying cloud-based AI helpers, as enterprises navigate complex risks. Security teams must protect logs, legal departments must manage data deletion rights, and engineers require deterministic safeguards. This guide maps four proven controls to the agent lifecycle to ensure privacy, compliance, and operational uptime.

1. Pre-Deployment Mapping and Legal Footing

Before deployment, organizations must document all data an AI agent will access, its purpose, and the legal authority for its use. Industry best practices recommend that teams map every data source the agent will access, paying special attention to personal data (MTM Video). This involves confirming a legal basis for each data category, finalizing Data Processing Agreements (DPAs), and, for EU data, adding an entry to the GDPR Record of Processing Activities (RoPA).
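
As a rough illustration of that mapping step, the sketch below models a data inventory and lists what would block a launch. The `DataSource` fields, the `deployment_blockers` helper, and the sample source names are all hypothetical. The short labels for the GDPR Art. 6(1) lawful bases are shorthand chosen for this example, not an official vocabulary.

```python
from dataclasses import dataclass
from typing import List, Optional

# Shorthand labels for the six lawful bases in GDPR Art. 6(1) (labels are our own).
LAWFUL_BASES = {
    "consent", "contract", "legal_obligation",
    "vital_interests", "public_task", "legitimate_interests",
}


@dataclass(frozen=True)
class DataSource:
    name: str                          # hypothetical source identifier
    purpose: str                       # why the agent needs this source
    contains_personal_data: bool
    legal_basis: Optional[str] = None  # one of LAWFUL_BASES, if confirmed
    dpa_signed: bool = False           # DPA finalized with the vendor


def deployment_blockers(sources: List[DataSource]) -> List[str]:
    """Return human-readable reasons the agent should not ship yet."""
    blockers = []
    for src in sources:
        if not src.purpose.strip():
            blockers.append(f"{src.name}: no documented purpose")
        if src.contains_personal_data:
            if src.legal_basis not in LAWFUL_BASES:
                blockers.append(f"{src.name}: missing or unknown legal basis")
            if not src.dpa_signed:
                blockers.append(f"{src.name}: DPA not finalized")
    return blockers


inventory = [
    DataSource("crm_contacts", "answer account questions", True, "contract", True),
    DataSource("support_tickets", "summarize open issues", True),
    DataSource("product_docs", "ground answers", False),
]
blockers = deployment_blockers(inventory)
for reason in blockers:
    print(reason)
```

A check like this could run in CI so that a new data source cannot be wired to the agent until its inventory entry is complete.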

Concurrently, a thorough AI risk assessment is crucial. As the MTM guide notes, high-risk applications like hiring tools require conformity assessments and human oversight plans under the EU AI Act, which also gives future audits a baseline to measure against.

Enterprises secure AI agents through four key governance controls: pre-deployment data mapping to establish a legal basis; deterministic operational controls like encryption and redaction; continuous monitoring and logging for traceability and incident response; and a clear exit strategy for secure data deletion and migration upon contract termination.

2. Deterministic Controls During Operations

When an agent is live, its controls must operate automatically. This includes implementing a pipeline that deterministically filters out non-consented data before it reaches the AI model, as described by OneTrust's chief innovation officer (HumanX talk). Augment this filtering with essential technical safeguards:

- Role-based access control (RBAC) with least privilege principles

- AES-256 encryption for data at rest and TLS for data in transit

- Automated PII redaction for all cloud API calls

- Multi-factor authentication (MFA) for administrative consoles

These controls align directly with SOC 2 criteria for Security and Confidentiality, as well as HIPAA's encryption and audit standards.
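
The deterministic consent filter described above might look like the following minimal sketch. It assumes each row carries a `consented_purposes` list, which is a hypothetical field name, and it fails closed: a row with no consent record is dropped rather than passed through.

```python
from typing import Iterable, Iterator


def consented_rows(rows: Iterable[dict], purpose: str) -> Iterator[dict]:
    """Yield only rows whose recorded consent covers `purpose`.

    Fails closed: rows with missing or empty consent metadata are dropped.
    """
    for row in rows:
        granted = row.get("consented_purposes") or ()
        if purpose in granted:
            # Strip the consent metadata before the row is sent to the model.
            yield {k: v for k, v in row.items() if k != "consented_purposes"}


rows = [
    {"id": 1, "note": "renewal due", "consented_purposes": ["ai_assistant"]},
    {"id": 2, "note": "billing dispute", "consented_purposes": ["marketing"]},
    {"id": 3, "note": "no consent record"},
]
allowed = list(consented_rows(rows, "ai_assistant"))
print(allowed)
```

Because the filter is plain code rather than a model instruction, the same input always yields the same output, which is what makes it auditable.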

3. Continuous Monitoring, Logging, and Rights Management

Hosted agents typically expose system and console logs through a log-stream API. To manage this, OvalEdge highlights the importance of immutable lineage logs, which enable teams to "maintain traceability through automated monitoring instead of periodic assessments" (OvalEdge). Quarterly permission reviews are also recommended to prevent unauthorized scope creep.
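
One common way to approximate an immutable log is a hash chain, in which each entry commits to the one before it. The sketch below is an illustration under that assumption, not a description of any vendor's logging. It makes tampering detectable but does not prevent it, so a production setup would still need write-once storage. The event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # Canonical JSON so the same event always hashes to the same value.
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_event(log: list, event: dict) -> None:
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)})


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_event(audit_log, {"actor": "agent-7", "action": "tool_call", "tool": "crm.lookup"})
append_event(audit_log, {"actor": "agent-7", "action": "retrieve", "chunk_id": "doc-42#3"})
intact_before = verify_chain(audit_log)

audit_log[0]["event"]["tool"] = "crm.delete"  # simulate tampering
intact_after = verify_chain(audit_log)
print(intact_before, intact_after)
```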

Comprehensive audit trails are fundamental for incident response, especially as research indicates a growing number of breaches involve agentic AI. Incident playbooks, updated based on frameworks like NIST SP 800-61r3, should include procedures for preserving prompts and system snapshots before using kill switches or revoking API keys to contain threats like prompt injection.

4. Retention, Deletion, and Exit Planning

A robust exit plan completes the governance framework. Enterprises must define a secure, encrypted format for exporting chat histories and embeddings. Crucially, they need a tested procedure to ensure the complete deletion of all keys, APIs, and stored content from vendor systems within a reasonable timeframe following contract termination.

What is Dreaming memory in hosted AI agents, and why must enterprises control it?

Dreaming memory is persistent, cloud-stored context that agents retain between sessions. If left unmanaged, it becomes a hidden compliance surface that can hold personal data, API secrets, or regulated health records without encryption or retention limits. One in eight reported AI breaches linked to agentic systems involve persistent data exposure.

Controls: encrypt at rest with AES-256, enforce 90-day maximum retention, and give users a self-serve delete endpoint that purges both memory and derived embeddings.
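
The retention and deletion controls can be sketched with an in-memory stand-in for the vendor's memory store. `AgentMemoryStore` and its methods are hypothetical, and encryption at rest is assumed to be handled by the storage layer, so it is not shown. The key property is that both the expiry purge and the user-initiated delete remove the memory and its derived embedding together.

```python
from datetime import datetime, timedelta, timezone

MAX_RETENTION = timedelta(days=90)


class AgentMemoryStore:
    """In-memory stand-in for persistent agent memory (hypothetical)."""

    def __init__(self):
        self._memories = {}    # memory_id -> (user_id, created_at, text)
        self._embeddings = {}  # memory_id -> vector derived from that memory

    def remember(self, memory_id, user_id, text, vector, created_at):
        self._memories[memory_id] = (user_id, created_at, text)
        self._embeddings[memory_id] = vector

    def _purge(self, memory_ids):
        # Always remove the memory and its embedding as a pair.
        for memory_id in memory_ids:
            self._memories.pop(memory_id, None)
            self._embeddings.pop(memory_id, None)
        return len(memory_ids)

    def purge_expired(self, now):
        expired = [m for m, (_owner, created, _text) in self._memories.items()
                   if now - created > MAX_RETENTION]
        return self._purge(expired)

    def delete_user(self, user_id):
        owned = [m for m, (owner, _created, _text) in self._memories.items()
                 if owner == user_id]
        return self._purge(owned)

    def count(self):
        return len(self._memories), len(self._embeddings)


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
store = AgentMemoryStore()
store.remember("m1", "alice", "prefers email", [0.1, 0.2], now - timedelta(days=120))
store.remember("m2", "alice", "renewal in July", [0.3, 0.4], now - timedelta(days=5))
store.remember("m3", "bob", "uses SSO", [0.5, 0.6], now - timedelta(days=10))

expired_count = store.purge_expired(now)    # removes m1, which is older than 90 days
deleted_count = store.delete_user("alice")  # removes m2, alice's remaining memory
print(expired_count, deleted_count, store.count())
```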

Which four baseline controls map directly to SOC 2, GDPR, and HIPAA for hosted agents?

| Control | SOC 2 Trust Services Criteria | GDPR Article | HIPAA Safeguard |
|---|---|---|---|
| AES-256 encryption + KMS | Security & Confidentiality | Art. 32 - Security of processing | §164.312(a)(2)(iv) |
| Role-based access (RBAC) + MFA | Security & Privacy | Art. 25 - Data protection by design | §164.308(a)(4) |
| Immutable audit logs (13-month TTL) | Processing Integrity & Availability | Art. 30 - Records of processing | §164.312(b) |
| Signed DPA / BAA | Confidentiality & Privacy | Art. 28 - Processor agreement | §164.308(b) |

Providers that cannot produce the SOC 2 Type II report and refuse a Business Associate Agreement should be dropped from vendor short-lists.

How should incident-response playbooks differ for agent-specific failure modes?

Standard cyber playbooks assume human actors; agents introduce model drift, prompt injection, and RAG poisoning.

Add these steps:

- Kill-switch the agent gateway (keeps logs intact).

- Roll back to the last-known-good model; isolate poisoned RAG segments.

- Capture agent action traces - every tool call, API request, and retrieved chunk.

- Notify the supervisory authority within 72 hours of becoming aware of a personal data breach, as GDPR Art. 33 requires; trigger an EU AI Act conformity review if the agent is high-risk.
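
The steps above can be tracked with a small incident record, sketched here under stated assumptions. `AgentIncident`, its step names, and the sample trace values are hypothetical. The 72-hour window is counted from the moment the organization becomes aware of the breach.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

GDPR_NOTIFY_WINDOW = timedelta(hours=72)


@dataclass
class AgentIncident:
    aware_at: datetime              # when the organization became aware of the breach
    high_risk_system: bool = False  # whether the agent is classed as high-risk
    traces: list = field(default_factory=list)
    completed: list = field(default_factory=list)

    def capture(self, kind: str, detail: str) -> None:
        """Record one agent action: a tool call, API request, or retrieved chunk."""
        self.traces.append({"kind": kind, "detail": detail})

    def mark(self, step: str) -> None:
        self.completed.append(step)

    @property
    def notify_by(self) -> datetime:
        return self.aware_at + GDPR_NOTIFY_WINDOW

    def open_actions(self) -> list:
        required = ["kill_switch", "rollback_model", "isolate_rag", "notify_authority"]
        if self.high_risk_system:
            required.append("ai_act_conformity_review")
        return [step for step in required if step not in self.completed]


incident = AgentIncident(
    aware_at=datetime(2025, 3, 3, 9, 0, tzinfo=timezone.utc),
    high_risk_system=True,
)
incident.capture("tool_call", "crm.export(account=*)")
incident.capture("retrieved_chunk", "kb-17#2")
incident.mark("kill_switch")
incident.mark("rollback_model")

print(incident.notify_by.isoformat())
print(incident.open_actions())
```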

Download the open-source AI Incident Response Playbook Template and run quarterly tabletops.

What data-minimisation techniques keep hosted agents compliant before the prompt ever leaves the enterprise?

- Pre-filter pipelines deterministically strip non-consented rows; the agent never sees them.

- Field-level redaction replaces SSNs, MRNs, and credit-card numbers with format-preserving tokens.

- Self-hosted PII micro-service returns only masked embeddings to the cloud agent.

- Prompt-level redaction libraries detect and mask new PII in real time, significantly reducing GDPR personal-data surface area in early adopters.

How can security teams verify that a SaaS agent vendor actually enforces the advertised retention period?

- Insert canary records with unique identifiers into production data.

- After the advertised TTL, request a full data export, for example through a subject access request under GDPR Art. 15 or a portability request under Art. 20.

- If canary records re-appear, the vendor is not deleting; escalate to legal and consider contract termination.

- Pair the test with quarterly permission reviews and immutable deletion receipts stored in your own SIEM to create an audit trail that auditors accept.
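
The canary test above can be sketched in a few lines. The helper names, the `external_id` field, and the shape of the export are assumptions, since a real vendor export will have its own schema.

```python
import uuid


def make_canaries(n: int, prefix: str = "canary") -> list:
    """Generate unique, unguessable identifiers to plant in production data."""
    return [f"{prefix}-{uuid.uuid4().hex}" for _ in range(n)]


def surviving_canaries(canaries, exported_records, id_field="external_id"):
    """Return the canaries that still appear in a post-TTL export."""
    exported_ids = {record.get(id_field) for record in exported_records}
    return sorted(c for c in canaries if c in exported_ids)


canaries = make_canaries(3)

# Hypothetical export received after the advertised retention period.
export_after_ttl = [
    {"external_id": "cust-1001", "note": "real customer"},
    {"external_id": canaries[1], "note": "should have been deleted"},
]

leaked = surviving_canaries(canaries, export_after_ttl)
if leaked:
    print(f"{len(leaked)} canary record(s) survived the TTL; escalate to legal")
```

Storing the canary identifiers and the result of each check in your own SIEM gives the deletion test a timestamped record that does not depend on the vendor.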