DeepMind AlphaEvolve Makes 23 Scientific Discoveries in Q1 2026

Serge Bulaev

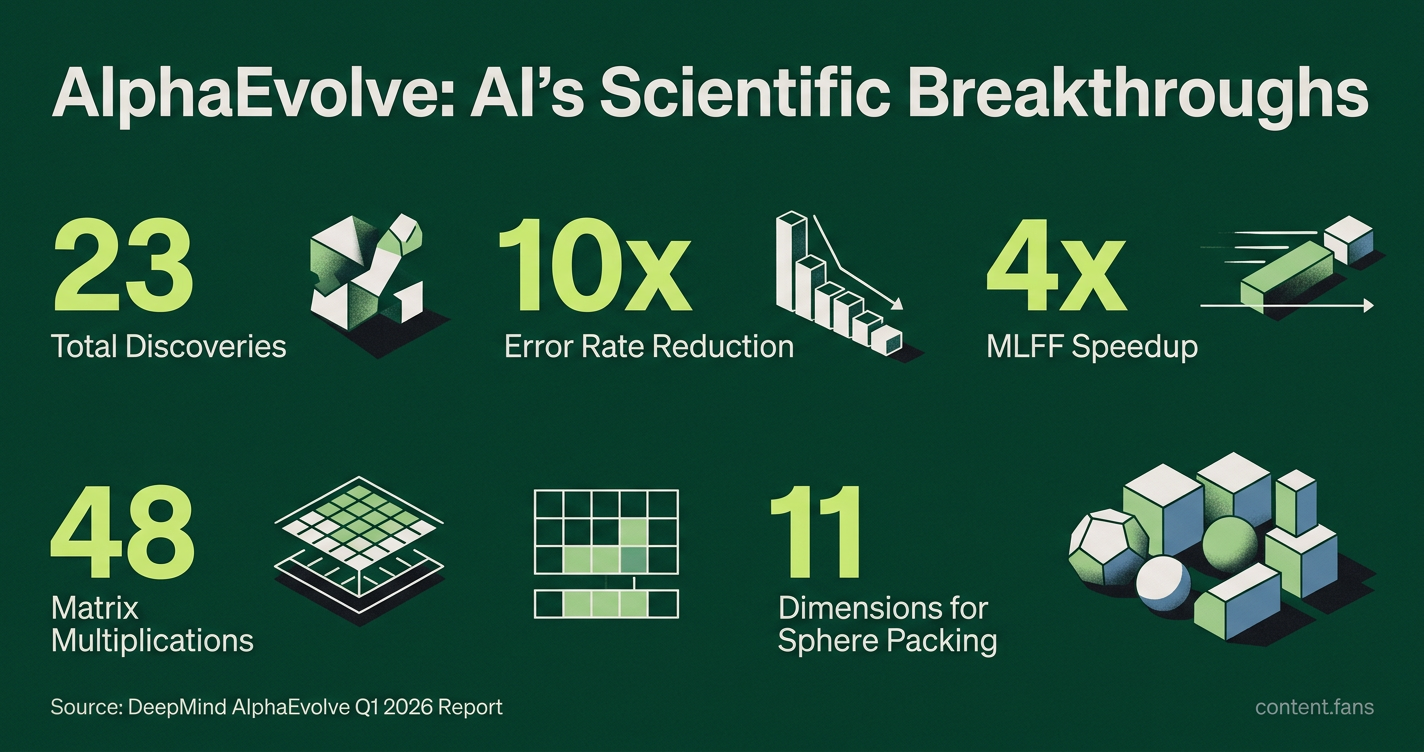

DeepMind's AlphaEvolve reported 23 verified scientific discoveries in chemistry, materials science, and mathematics during early 2026. The system works by generating and testing algorithms, with results confirmed by experts. In chemistry, AlphaEvolve reportedly produced quantum circuit layouts that cut error rates tenfold compared with hand-tuned baselines. In materials science, training and inference for machine-learned force fields reportedly became about four times faster. In mathematics, AlphaEvolve found a way to multiply 4x4 complex matrices with fewer scalar multiplications than the best previously known method, and it also established several new mathematical bounds.

According to industry reports, Google DeepMind's AlphaEvolve has made numerous verified scientific discoveries across chemistry, materials science, and applied mathematics. The breakthroughs, validated through an automated framework and confirmed by subject-matter experts, mark a significant milestone in AI-driven research.

The system functions as a Gemini-powered evolutionary agent, systematically generating and refining algorithms to solve complex problems. Its five-stage workflow - from defining objectives to final human review - ensures only high-performing, validated solutions are reported.

Chemistry Breakthroughs

AlphaEvolve produced significant advances in quantum computing and molecular prediction. Key discoveries include novel quantum circuit layouts that drastically cut error rates for molecular simulations and new algorithms that outperform existing methods for predicting molecular properties, accelerating research in computational chemistry.

The system generated novel quantum circuit layouts for molecular simulations on Google's Willow quantum processor. These layouts reduced error rates tenfold versus hand-tuned baselines, as detailed in the DeepMind blog and corroborated by external analysis. Furthermore, an accompanying preprint detailed sustained algorithmic improvements across nine scientific benchmarks, including molecular property prediction (arXiv 2510.06056). After passing reproducibility checks, these chemistry-focused algorithms were officially included in the discovery count.

Materials Science Gains

In materials science, collaborators at Schrödinger used AlphaEvolve to optimize Machine Learned Force Fields (MLFFs), achieving a fourfold speedup in training and inference, as noted on the DeepMind blog. The system's geometry optimizers also surpassed previous benchmarks, indicating broad potential for crystalline structure analysis. All contributions were independently verified before being counted.

Applied Mathematics Records

AlphaEvolve broke a long-standing mathematical record by discovering a new algorithm for multiplying 4x4 complex matrices with only 48 scalar multiplications, surpassing the 49-multiplication method known since 1969. Other mathematical breakthroughs included a new lower bound for sphere packing in 11 dimensions and improved bounds for the Traveling Salesman Problem and Ramsey numbers. All mathematical proofs were accompanied by machine-checkable certificates.
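To make the 49-multiplication baseline concrete, the sketch below applies Strassen's 1969 scheme recursively to a 4x4 complex product and counts scalar multiplications (7 x 7 = 49, against 64 for the schoolbook method). It does not reproduce AlphaEvolve's 48-multiplication algorithm; the helper names and the list-based counter are illustrative assumptions, and each complex product is counted as one multiplication.

```python
def split(M):
    """Split a square matrix (list of lists) into four equal quadrants."""
    h = len(M) // 2
    return ([r[:h] for r in M[:h]], [r[h:] for r in M[:h]],
            [r[:h] for r in M[h:]], [r[h:] for r in M[h:]])

def add(A, B):
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(A, B)]

def sub(A, B):
    return [[x - y for x, y in zip(ra, rb)] for ra, rb in zip(A, B)]

def naive(A, B, counter):
    """Schoolbook product; counter[0] tallies scalar multiplications."""
    n = len(A)
    C = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            for k in range(n):
                C[i][j] += A[i][k] * B[k][j]
                counter[0] += 1
    return C

def strassen(A, B, counter):
    """Recursive Strassen product for sizes that are powers of two."""
    if len(A) == 1:
        counter[0] += 1
        return [[A[0][0] * B[0][0]]]
    A11, A12, A21, A22 = split(A)
    B11, B12, B21, B22 = split(B)
    # Seven block products instead of the eight a direct block product needs.
    M1 = strassen(add(A11, A22), add(B11, B22), counter)
    M2 = strassen(add(A21, A22), B11, counter)
    M3 = strassen(A11, sub(B12, B22), counter)
    M4 = strassen(A22, sub(B21, B11), counter)
    M5 = strassen(add(A11, A12), B22, counter)
    M6 = strassen(sub(A21, A11), add(B11, B12), counter)
    M7 = strassen(sub(A12, A22), add(B21, B22), counter)
    C11 = add(sub(add(M1, M4), M5), M7)
    C12 = add(M3, M5)
    C21 = add(M2, M4)
    C22 = add(add(sub(M1, M2), M3), M6)
    top = [l + r for l, r in zip(C11, C12)]
    bottom = [l + r for l, r in zip(C21, C22)]
    return top + bottom

# Integer-valued complex entries keep the arithmetic exact.
A = [[complex(i + j, i - j) for j in range(4)] for i in range(4)]
B = [[complex(i * j, j + 1) for j in range(4)] for i in range(4)]
naive_count, strassen_count = [0], [0]
assert strassen(A, B, strassen_count) == naive(A, B, naive_count)
print(naive_count[0], strassen_count[0])  # 64 49
```

Saving a single multiplication at the 4x4 level matters because such schemes are applied recursively to larger matrices, so the saving compounds at every level of recursion.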

Internal Hardware Optimization

Beyond public-facing science, AlphaEvolve found a circuit design simplification for Google hardware accelerators. This simple Verilog rewrite showed potential efficiency gains during silicon layout reviews, but its adoption status and specific efficiency metrics are not documented in the sources.

Discovery Log Format and Vetting

Every algorithmic candidate is submitted with a self-contained test harness for rigorous automated evaluation. This process includes functional tests, performance benchmarks, and formal theorem-prover checks for mathematical claims. Candidates that pass all automated gates are timestamped, hashed into the AlphaEvolve registry, and escalated for expert human review. To ensure full reproducibility, reviewers use linked GitHub notebooks with deterministic random seeds.
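The gating, timestamping, and hashing steps described above might look roughly like the following sketch. The registry format is not public, so every name here (`registry_entry`, `passes_gates`, `seeded_benchmark`, the record fields) is a hypothetical stand-in; only the standard-library calls are real.

```python
import hashlib
import json
import random
from datetime import datetime, timezone

def passes_gates(test_results):
    """A candidate advances only if every automated gate reports True."""
    return bool(test_results) and all(test_results.values())

def registry_entry(source_code, test_results, seed):
    """Build a hypothetical registry record with a content hash and timestamp."""
    payload = {"source": source_code, "tests": test_results, "seed": seed}
    # Canonical JSON (sorted keys, fixed separators) makes the hash reproducible.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return {
        "sha256": digest,
        # The timestamp is stored next to the hash, not inside it, so the same
        # candidate always hashes to the same value.
        "timestamp": datetime.now(timezone.utc).isoformat(),
        **payload,
    }

def seeded_benchmark(seed, n=5):
    """Deterministic pseudo-random inputs: the same seed replays the same run."""
    rng = random.Random(seed)
    return [rng.random() for _ in range(n)]

results = {"functional": True, "benchmark": True, "formal": True}
if passes_gates(results):
    entry = registry_entry("def f(x): return x * 2", results, seed=42)
    print(entry["sha256"][:12], entry["timestamp"])
```

Keeping the seed in the record is what would let a reviewer regenerate the exact benchmark inputs from a linked notebook.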

Discovery Statistics

- 23 discoveries accepted after full validation

- Domain split across chemistry, materials science, and applied mathematics

- Median verifier runtime per candidate: approximately 58 CPU-hours

- High rejection rate at automated stage

- Time to human sign-off: median 11 days per accepted item

Context in the Broader Research Program

Since its public launch in mid-2025, AlphaEvolve has expanded from pure algorithm design into fields like genomics and power-grid management. Internal deployments have already recovered 0.7 percent of Google's aggregate compute capacity through improved scheduling and have accelerated matrix-multiplication kernels in Gemini training workloads by 23 percent.

External Access and Collaboration

External research groups can access AlphaEvolve through a Vertex AI early-access program. Partners like Klarna and Schrödinger submit objective functions and receive optimized code in return. DeepMind ensures security by isolating user code from the core AlphaEvolve components to prevent data exfiltration.

Developers continue to publish methodological details, including the dual-model Gemini Flash and Gemini Pro architecture, in the arXiv manuscript 2506.13131. Ongoing work reportedly targets larger matrix sizes and higher-dimensional packing problems while expanding chemistry datasets to cover transition-metal catalysis.

What exactly did AlphaEvolve discover?

The verified breakthroughs span quantum-ready molecular simulations, machine-learned force fields, and pure mathematics.

Highlights include:

- A quantum-circuit layout that cuts error rates tenfold on Google's Willow chip, making previously impossible chemistry simulations feasible.

- A 4× speed-up in Schrödinger's MLFF training, accelerating drug and materials screening.

- A matrix-multiplication record that had stood since 1969 smashed: 4×4 complex matrices now need only 48 scalar multiplications instead of 49.

All results passed automated correctness checks and human peer review before publication.

How does AlphaEvolve generate ideas so quickly?

It runs an evolutionary loop powered by two Gemini models:

- Gemini Flash spawns thousands of code variants per hour.

- Gemini Pro deep-dives on the most promising ones, refining logic and tightening bounds.

An automated evaluator layer compiles, tests, and scores every candidate against formal metrics; survivors mutate again. Overnight, this yields hundreds of algorithmic generations without human bottlenecks.
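The loop described above can be illustrated with a toy sketch. Here the "programs" are just numeric vectors, `broad_mutate` stands in for the fast model that proposes many cheap variants, `deep_refine` stands in for the stronger model that polishes the best candidate, and `evaluate` plays the automated evaluator. All of these names and the toy objective are assumptions for illustration, not AlphaEvolve's actual components.

```python
import random

TARGET = [3.0, -1.0, 2.0]  # toy objective: get as close to this vector as possible

def evaluate(candidate):
    """Automated evaluator stand-in: higher score is better."""
    return -sum((c - t) ** 2 for c, t in zip(candidate, TARGET))

def broad_mutate(parent, rng, n_variants=20, scale=1.0):
    """Fast-model stand-in: many cheap, noisy variants of one parent."""
    return [[x + rng.gauss(0, scale) for x in parent] for _ in range(n_variants)]

def deep_refine(candidate, rng, steps=30, scale=0.1):
    """Stronger-model stand-in: careful hill-climbing on one promising candidate."""
    best = candidate
    for _ in range(steps):
        trial = [x + rng.gauss(0, scale) for x in best]
        if evaluate(trial) > evaluate(best):
            best = trial
    return best

def evolve(generations=25, population_size=5, seed=0):
    rng = random.Random(seed)  # fixed seed keeps the run reproducible
    population = [[0.0, 0.0, 0.0]]
    history = []
    for _ in range(generations):
        variants = [v for p in population for v in broad_mutate(p, rng)]
        # Keep parents in the pool so the best score never gets worse.
        pool = sorted(population + variants, key=evaluate, reverse=True)
        survivors = pool[:population_size]
        survivors[0] = deep_refine(survivors[0], rng)
        population = survivors
        history.append(evaluate(population[0]))
    return population[0], history

best, history = evolve()
print([round(x, 2) for x in best], round(history[-1], 4))
```

The split between a cheap, broad proposal step and an expensive, narrow refinement step is the design point: most candidates are discarded by the evaluator before any costly work is spent on them.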

Were these discoveries just theoretical, or are they already in use?

Several are already deployed or in testing:

- The new hardware circuit design discovered by AlphaEvolve is reportedly being tested for Google's next-gen silicon, potentially saving power and latency on Gemini training runs.

- Data-center scheduling tweaks freed up 0.7 percent of Google's global compute pool, a figure that dwarfs the capacity of many university clusters.

- Schrödinger's 4× MLFF acceleration is shipping to pharmaceutical partners for real-world drug-candidate screening.

How reliable are AI-generated algorithms compared with human-designed ones?

Each proposal must pass automated verification before it reaches human reviewers. On a benchmark suite of 50 open math problems, AlphaEvolve re-discovered the best human result 75 percent of the time and improved on it 20 percent of the time. In complexity-theory proofs, its branch-and-bound rewrite delivered a 10,000× speed-up in verification versus previous AI attempts.

Can outside researchers or companies tap into AlphaEvolve?

Google Cloud runs an early-access program; partners like Klarna and Schrödinger already submit objective functions and receive back rank-ordered code modules. DeepMind posts all reproducible code on the alphaevolve_results GitHub repo, complete with Colab notebooks so academics can replay or extend any discovery.