EU alleges Meta fails to protect underage users on Facebook, Instagram

Serge Bulaev

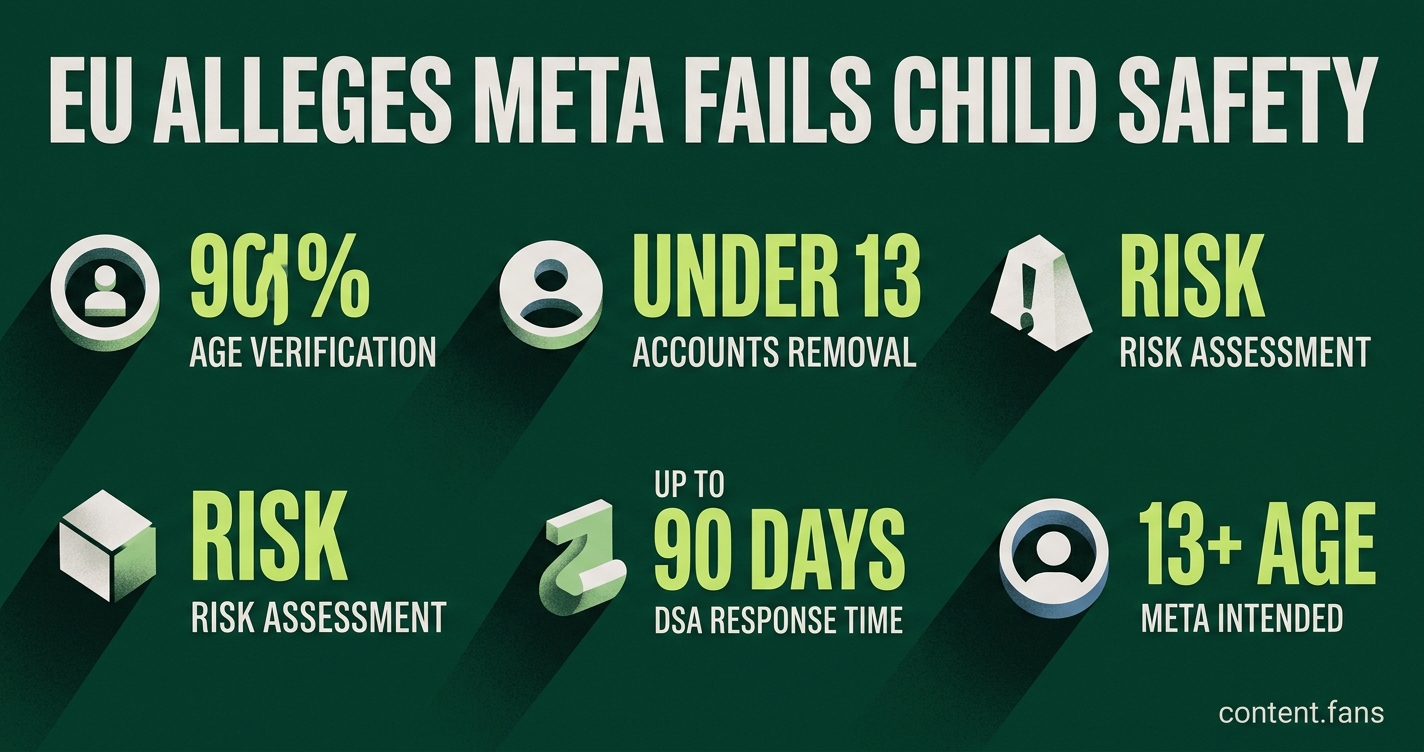

The EU says Meta may not be doing enough to keep children under 13 off Facebook and Instagram. Investigators say kids can easily get around age checks and that Meta's tools to find and remove underage users do not seem to work well. Meta says it already has ways to find underage accounts and is promising more steps soon, but the EU suggests these may not be strong enough. The case might lead to a big fine for Meta and could set rules for how social networks protect children in the future.

The EU alleges Meta fails to protect underage users on Facebook and Instagram, with regulators citing ineffective age verification and slow removal of children's accounts. These preliminary charges highlight the growing pressure on social media giants under the Digital Services Act (DSA) to prove their safety measures are effective.

What EU Investigators Found on Meta's Platforms

European Commission investigators found that Meta's age-verification systems for Facebook and Instagram are easily bypassed with a false birthdate. They also determined that the platforms' tools for reporting and removing accounts held by children under 13 are not effective, according to the official preliminary findings.

The investigation further criticizes Meta for an "inadequate" risk assessment that fails to evaluate how its algorithms might recommend harmful content to minors.

If these findings are upheld, Meta could face a fine of up to 6% of its worldwide annual turnover, the maximum the DSA allows. Before any final penalty is decided, the DSA gives the company time to respond with evidence, system upgrades, or new commitments.

Meta's Response and Next Steps

Meta has pushed back against the allegations. A spokesperson quoted by The Guardian stated, "Instagram and Facebook are intended for people aged 13 and older and we have measures in place to detect and remove accounts from anyone under that age." The company described age verification as an "industry-wide challenge" and pledged to announce "additional measures" soon. While Meta reportedly uses AI and ID checks to flag accounts, investigators believe these methods may not meet the DSA's "highly effective" standard.

Broader DSA Scrutiny on Child Safety

This investigation is part of a wider EU focus on child safety online. The Commission has also opened probes into other major platforms, including:

- TikTok: A formal DSA probe launched February 19, 2024, covering addictive design, recommender "rabbit hole" effects, and risks to minors, with preliminary findings announced February 6, 2026.

No final penalties have been issued in these cases, as platforms have up to 90 days to respond to formal proceedings, and most investigations are ongoing.

What Constitutes an Effective Age Gate Under the DSA

Experts suggest that DSA compliance requires a layered approach to age verification that balances effectiveness with privacy. Key components of an effective system include:

- AI-driven analysis of user behavior and public content.

- Device-level signals from mobile app stores.

- Targeted ID or biometric checks for accounts flagged as suspicious.

- Rapid deletion of verification data to comply with privacy regulations.

This multi-faceted strategy aligns with the European Commission's 2025 "blueprint" for age assurance, which moves beyond simple self-declaration and promotes privacy-preserving technologies like EU Digital Identity Wallets.
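The layered approach described above can be sketched as a small decision function. This is an illustrative sketch only, not Meta's system or anything prescribed by the DSA: the `Signals` fields, the `Action` values and the `SUSPICION_THRESHOLD` are all hypothetical names and numbers chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    ALLOW = "allow"
    REQUEST_ID_CHECK = "request_id_check"
    REMOVE_ACCOUNT = "remove_account"


@dataclass
class Signals:
    declared_age: int                 # self-declared at sign-up
    behaviour_score: float            # hypothetical 0..1 estimate that the user is under 13
    app_store_under_13_flag: bool     # hypothetical device-level signal
    # Outcome of a targeted ID or biometric check, if one was run.
    # Only the pass/fail result is kept; the raw document is assumed deleted.
    id_check_passed: Optional[bool] = None


MIN_AGE = 13
SUSPICION_THRESHOLD = 0.7  # illustrative value, not taken from any regulation


def decide(s: Signals) -> Action:
    """Combine the layers: hard evidence first, then soft signals."""
    # A completed high-assurance check overrides the softer signals.
    if s.id_check_passed is not None:
        return Action.ALLOW if s.id_check_passed else Action.REMOVE_ACCOUNT
    # Self-declaration alone can only ever exclude, never confirm.
    if s.declared_age < MIN_AGE:
        return Action.REMOVE_ACCOUNT
    # Escalate to a targeted check only for accounts flagged as suspicious.
    suspicious = (
        s.behaviour_score >= SUSPICION_THRESHOLD or s.app_store_under_13_flag
    )
    return Action.REQUEST_ID_CHECK if suspicious else Action.ALLOW
```

The point of this ordering is that most users never see an ID prompt: only accounts flagged by the behavioural or device layer are escalated.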

Meta must now demonstrate that its existing or future systems meet these stringent expectations. Analysts believe this case is significant, as it could establish a crucial precedent for how the Digital Services Act is enforced to protect children online across all major platforms.

What exactly is the EU accusing Meta of under the Digital Services Act?

According to industry reports, the European Commission's preliminary findings say Meta has no effective system to stop children under 13 from opening accounts on Facebook and Instagram. Brussels also argues that Meta's risk assessments ignore the real danger of serving age-inappropriate content to minors, a risk that platforms are obliged to assess and mitigate under Articles 28, 34 and 35 of the DSA.

How big could the penalty be if the charges are confirmed?

If the case moves from "preliminary" to "final", the DSA lets regulators fine Meta up to 6% of its worldwide annual turnover. No final fines for age-verification lapses have been issued to any platform since the Act took effect, but the Commission has opened similar proceedings against other platforms, showing it is willing to use the full toolkit.
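As a rough illustration of the arithmetic, the DSA caps non-compliance fines at 6% of total worldwide annual turnover. The sketch below computes that ceiling for a given turnover; the function name and the sample turnover are hypothetical, and the figure is not Meta's actual revenue.

```python
from decimal import Decimal

# DSA ceiling for non-compliance fines: 6% of worldwide annual turnover.
DSA_MAX_FINE_RATE = Decimal("0.06")


def max_dsa_fine(worldwide_annual_turnover: Decimal) -> Decimal:
    """Return the upper bound on a DSA fine for the given turnover."""
    if worldwide_annual_turnover < 0:
        raise ValueError("turnover must be non-negative")
    return worldwide_annual_turnover * DSA_MAX_FINE_RATE


# Hypothetical turnover of 100 billion in any currency (not Meta's figure).
example_cap = max_dsa_fine(Decimal("100000000000"))
```

This is only the statutory ceiling; the actual amount, if any, would depend on the Commission's assessment of the infringement.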

What counter-arguments has Meta made public so far?

A Meta spokesperson said the firm "disagrees" with the Commission's view, insisting that both services are "intended for people aged 13 and older" and that AI-based detection already removes many under-age accounts. The company blames "an industry-wide challenge" and promises additional measures, although no detailed roadmap or tech specs have been released.

What does "effective age verification" look like in 2026?

Best-practice guides from regulators and NGOs recommend a layered approach:

- AI & behaviour flags - scan public videos, friend graphs and activity patterns to spot likely under-13s without asking every user for ID.

- Targeted high-assurance checks - request a government ID plus a live selfie only when the system already suspects a breach, cutting friction for everyone else.

- Privacy-first design - use third-party "over-18" tokens or zero-knowledge proofs so the platform never stores raw identity data.

Early trials reportedly show that combining the first two layers catches significantly more underage accounts than simple birth-date pop-ups, while the third keeps compliance teams comfortable with GDPR.
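The privacy-first layer can be illustrated with a signed age token: a third-party verifier checks the user's age and hands the platform only a yes/no claim. The sketch below is a simplified stand-in, not a zero-knowledge proof and not any real wallet or vendor API. It uses a shared-secret HMAC from the Python standard library where a production scheme would more likely use public-key signatures, and the claim name `over_13` and both function names are assumptions made for the example.

```python
import base64
import hashlib
import hmac
import json
import secrets
import time


def issue_token(key: bytes, over_13: bool, ttl_seconds: int = 300) -> str:
    """Verifier side: sign a claim that carries no identity data."""
    claims = {
        "over_13": over_13,
        "exp": int(time.time()) + ttl_seconds,  # short-lived by design
        "nonce": secrets.token_hex(8),          # makes each token unique
    }
    payload = base64.urlsafe_b64encode(json.dumps(claims).encode()).decode()
    sig = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    return payload + "." + sig


def verify_token(key: bytes, token: str) -> bool:
    """Platform side: accept only a valid, unexpired over-13 claim."""
    try:
        payload, sig = token.split(".")
        expected = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
        if not hmac.compare_digest(sig, expected):
            return False
        claims = json.loads(base64.urlsafe_b64decode(payload))
    except (ValueError, TypeError):
        return False
    return claims.get("over_13") is True and claims.get("exp", 0) > time.time()
```

In this design the platform sees neither a name, a birthdate, nor a document, only a boolean and an expiry time, which is the property the privacy-first layer is meant to provide.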

What happens next in the EU procedure?

Meta now has a formal written phase to submit evidence and propose remedies. The Commission will weigh that response, possibly run a market test of any new tools, and then decide whether to make the findings final. Until that moment, no fine is due, but every week of delay increases reputational risk and the chance that national regulators in Dublin, Berlin or Paris open parallel cases of their own.