ChatGPT palm trend raises biometric privacy concerns for OpenAI Images 2.0

Serge Bulaev

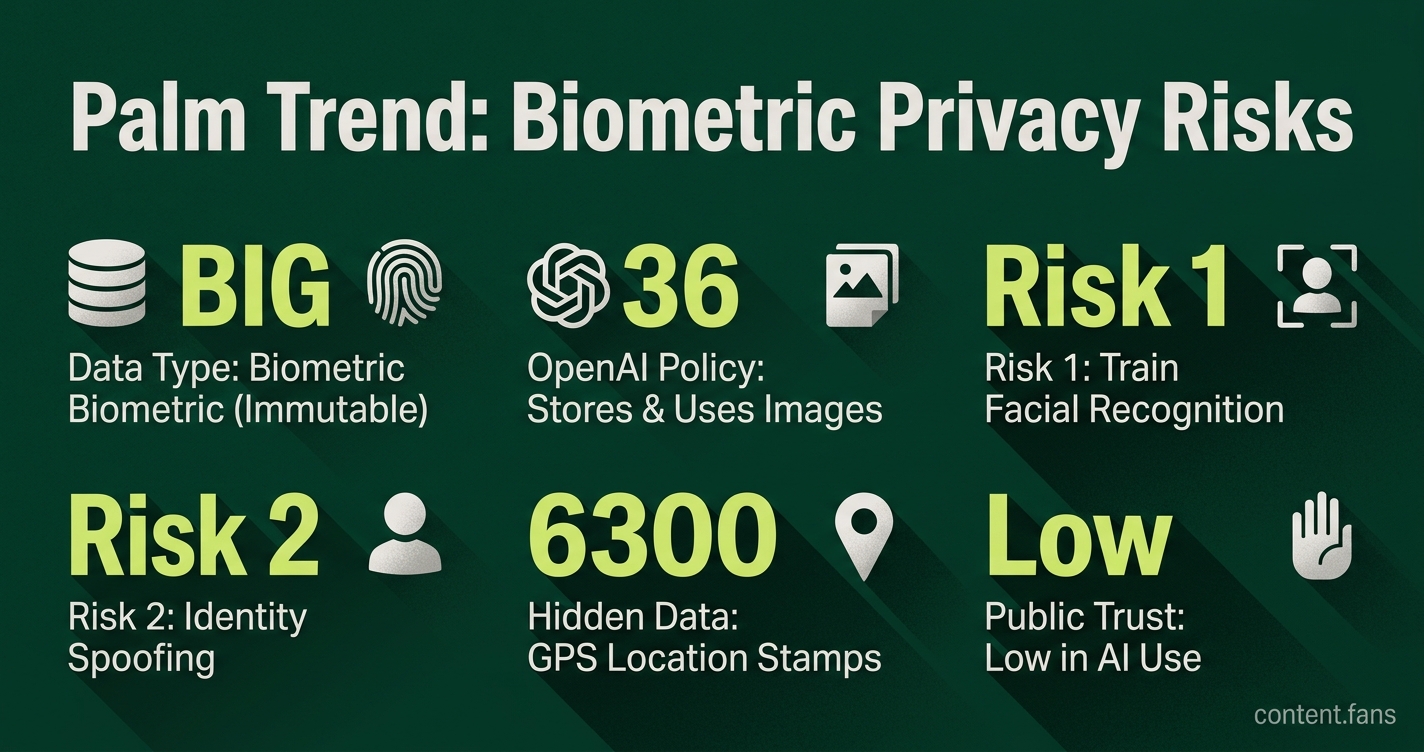

A new trend where people use ChatGPT Images 2.0 to "read" their palms and faces has raised worries about biometric privacy. Some posts, including a meme, suggest that agencies like the CIA may now have more fingerprints and facial data, but this claim is unverified. Experts say the real risk may be that uploaded images could later help train face recognition models, and biometric data cannot be changed like a password. OpenAI's privacy policy does not mention a way to opt out of biometric data collection, and some metadata like GPS location might also be collected. Simple steps like hiding faces, stripping location data, and using privacy settings might help, but do not guarantee full protection.

The viral ChatGPT palm trend, where users upload photos for AI-driven "readings," is sparking significant biometric privacy concerns. While memes joke about the CIA acquiring new fingerprint data, the unverified claim highlights a real and growing anxiety over how companies like OpenAI handle sensitive, unchangeable personal data.

This social media fad took off as users shared AI-generated fortunes about their love lives and careers. However, the fun has a serious side: every high-resolution photo uploaded is processed by OpenAI's servers, prompting a wave of warnings from privacy-conscious users about potential surveillance.

An analysis by Private Internet Access found that OpenAI's general privacy terms apply to image uploads, with the company able to store and use uploaded images, account details, IP addresses, and location data for service improvement unless a user enables Temporary Chat mode.

What the policy actually says

The primary risk involves the permanent nature of biometric data. Unlike a compromised password, fingerprints and facial geometry cannot be changed. Security experts warn that these images could be used to train facial recognition models or, if leaked, exploited for identity spoofing, so the consequences of any breach are effectively irreversible.

OpenAI's privacy policy outlines that it collects user-uploaded content but fails to provide a specific opt-out for biometric data collection. Furthermore, as a Times Now report highlights, hidden metadata like GPS location stamps can be included with photo uploads, broadening the scope of what OpenAI retains.

Security experts interviewed for an India Today report dismiss fears of CIA involvement as hyperbole, but they treat the prospect of these images being used to train facial recognition algorithms as a credible threat, precisely because biometric data cannot be reset once it is compromised.

Wider biometric anxiety online

This trend emerges amid widespread public distrust in AI and data collection. Industry reports suggest a significant portion of Americans lack confidence in the government's responsible use of AI, while many adults are more concerned than excited about AI developments. These anxieties are heightened by high-profile incidents like the $25 million deepfake fraud against Arup, which came to light in February 2024.

While the palm reading trend is largely harmless fun, it exists in a digital ecosystem where synthetic media makes it difficult to distinguish fact from fiction. The rise of "slopaganda" - virally spread propaganda created with generative AI - further erodes public trust in online content.

Simple precautions

OpenAI's Privacy Filter is designed to redact contact information like emails and phone numbers, but it does not remove unique biometric identifiers. To mitigate risks, security experts recommend several precautions:

- Avoid uploading or posting any image that shows full palms or faces.

- Strip EXIF location data before sharing photos.

- Use Temporary Chat or similar opt-out toggles.

- Consider a VPN to mask IP addresses.

While these measures can reduce your digital footprint, they don't guarantee that your data will be deleted from all system backups. The viral meme may have exaggerated the immediate threat, but it successfully focused public attention on a critical, unresolved issue: the long-term retention of uniquely identifying images for a fleeting moment of digital entertainment.

What does OpenAI officially say about biometric data collected through ChatGPT image uploads?

Industry reports indicate that OpenAI's general ChatGPT privacy policy applies to photo uploads. Under that policy:

- Images, prompts, IP address, device identifiers and location data are all collected.

- The company may retain and use the content to "improve its services," which includes training future models unless you actively opt out through "Temporary Chat" mode.

- There is no explicit biometric opt-out for palm prints or facial geometry.

Are the memes claiming the CIA now has my palm print true?

No evidence supports the viral meme. Security researchers and news outlets alike dismiss the CIA claim as hyperbole. While OpenAI can share data when legally required, routine or bulk transfers to intelligence agencies are not described in any current policy. The meme is best viewed as a reflection of public anxiety rather than a factual disclosure.

Why are security experts worried about uploading palm and face photos?

Unlike a password, biometric traits cannot be changed once leaked. Multiple sources warn that high-resolution images of palms and faces can reveal:

- Fingerprints and hand geometry that could be extracted for spoofing.

- Facial landmarks usable for training or breaching facial-recognition systems.

- Metadata such as GPS coordinates and timestamps embedded in the original file.

Experts quoted by Times Now urge users to "think twice" before joining the trend.

What concrete steps can I take to protect my biometric privacy today?

- Avoid the trend altogether - the simplest safeguard.

- If you still want to test the feature, enable "Temporary Chat" mode to reduce retention (but images may still be stored temporarily).

- Strip EXIF metadata from photos before uploading.

- Mask your IP address by using a VPN or Tor, since IP addresses are logged and can reveal your approximate location.

- Periodically request your data export and exercise your right to deletion under applicable privacy laws.
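The EXIF-stripping step above can be sketched in a few lines of Python. This is a minimal illustration, not a vetted privacy tool: it assumes the photo is a JPEG and that the location data sits in APP1 segments, which is where EXIF (including GPS tags) and XMP are normally stored. The function names `strip_app1_segments` and `strip_file` are hypothetical and chosen for this example only.

```python
import struct
from pathlib import Path

SOI = b"\xff\xd8"   # JPEG start-of-image marker
APP1 = 0xE1         # segment type that normally carries EXIF (and XMP)
SOS = 0xDA          # start-of-scan: compressed image data follows


def strip_app1_segments(jpeg_bytes: bytes) -> bytes:
    """Return a copy of a JPEG with every APP1 segment removed.

    Assumption: the file is a well-formed JPEG. Other metadata
    carriers (for example IPTC data in APP13) are left untouched.
    """
    if jpeg_bytes[:2] != SOI:
        raise ValueError("not a JPEG: missing SOI marker")

    out = bytearray(SOI)
    pos = 2
    while pos + 4 <= len(jpeg_bytes):
        if jpeg_bytes[pos] != 0xFF:
            raise ValueError(f"expected a marker at offset {pos}")
        marker = jpeg_bytes[pos + 1]
        if marker == SOS:
            # Everything from here on is image data; copy it verbatim.
            out += jpeg_bytes[pos:]
            return bytes(out)
        # The 2-byte big-endian length counts itself but not the marker.
        (length,) = struct.unpack(">H", jpeg_bytes[pos + 2:pos + 4])
        end = pos + 2 + length
        if length < 2 or end > len(jpeg_bytes):
            raise ValueError("truncated or malformed segment")
        if marker != APP1:
            out += jpeg_bytes[pos:end]  # keep all non-APP1 segments
        pos = end
    raise ValueError("no SOS marker found")


def strip_file(src: str, dst: str) -> None:
    """Write a metadata-stripped copy of the JPEG at src to dst."""
    Path(dst).write_bytes(strip_app1_segments(Path(src).read_bytes()))
```

Calling `strip_file("palm.jpg", "palm_clean.jpg")` (both file names are placeholders) would write a copy without the EXIF block. Two caveats apply: removing EXIF also removes the orientation tag, so some viewers may show the photo rotated, and stripping metadata does nothing about the biometric detail in the image itself.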

How do viral memes shape our trust in AI services?

Broader surveys reveal the climate in which these jokes spread. Industry reports suggest many Americans distrust government agencies to use AI responsibly, while a significant portion worry about AI-generated misinformation, including deepfakes using stolen biometric data.

In this environment, a meme that yokes an AI product to surveillance fears can go viral precisely because the underlying trust deficit already exists.