US Government Tests AI Models from Microsoft, Google, xAI for Security

Serge Bulaev

The U.S. government may test AI models from Microsoft, Google, xAI, OpenAI, and Anthropic for national security risks before they are released to the public. The Center for AI Standards and Innovation (CAISI) says these tests look for cybersecurity problems, misuse risks, and unpredictable behaviors. Some models are tested with fewer safety limits so experts can gauge how severe worst-case scenarios might be. The program is voluntary and does not require government approval before release, but such vetting might become mandatory in the future. The main risks being checked include cyberattacks and unexpected failures, and lessons learned may help set new safety standards.

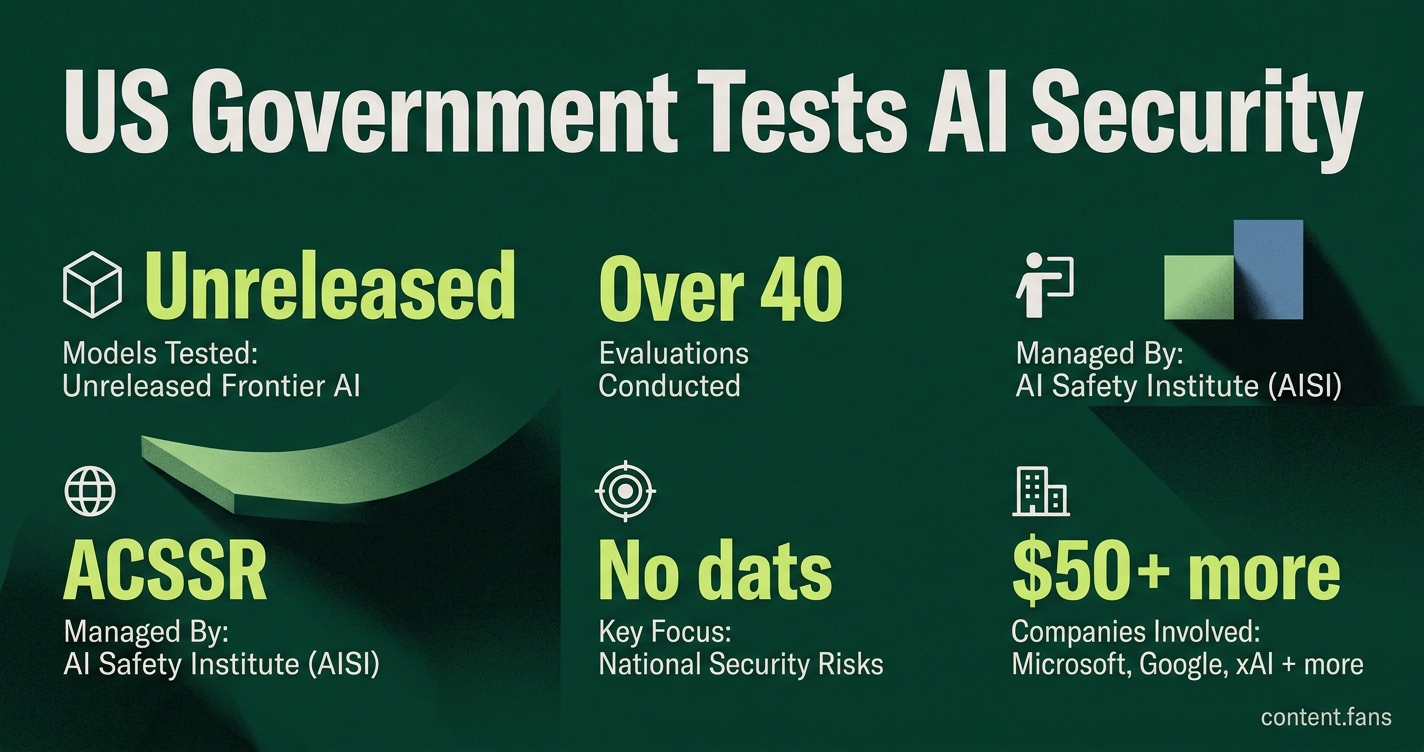

The U.S. government is testing advanced AI models from leading tech companies such as Microsoft, Google, and xAI before they are publicly released to evaluate national security risks. According to a CIO.com report, these voluntary agreements give agencies access to unreleased frontier systems for rigorous safety evaluations. The program is managed by the Center for AI Standards and Innovation (CAISI), formerly the AI Safety Institute (AISI), part of the National Institute of Standards and Technology (NIST).

AISI engineers probe the models for cybersecurity vulnerabilities, risks of misuse, and unpredictable autonomous behaviors. As the BBC notes, this process has involved over 40 evaluations, sometimes on models with relaxed safety filters to assess worst-case scenarios. The tests are conducted in classified settings, with findings shared with developers to patch security flaws.

Which companies are involved and why

The U.S. government is testing unreleased "frontier" AI models from major technology firms to proactively identify and mitigate national security threats. These evaluations focus on potential misuse, cybersecurity vulnerabilities, and unexpected behaviors before the powerful systems are deployed publicly, helping to establish future safety standards.

The list of participants now covers five of the leading AI developers. According to industry reports, Microsoft, Google, and xAI are recent additions, while OpenAI and Anthropic were already taking part in the program. Together, these firms give AISI early access to their most advanced systems for targeted national security assessments.

| Company | Model access scope | Stated purpose |
|---|---|---|
| Google DeepMind | Gemini family and yet-to-be-named successors | Probe unexpected multimodal outputs and potential military misuse |
| Microsoft | Next-generation large language and agentic systems | Build shared datasets to stress-test for undisclosed capabilities |
| xAI | Pre-public releases tied to its aerospace projects | Assess autonomous decision chains that may affect dual-use applications |

AISI has also evaluated the autonomous vulnerability discovery and exploitation capabilities of Anthropic's Mythos Preview.

How the testing process works

AISI follows a structured, collaborative process for each evaluation:

- Developers deliver model snapshots, some with curtailed guardrails.

- Government teams run red-team exercises that simulate hostile actors.

- Findings are returned to the company within agreed timelines.

- AISI publishes aggregate lessons in biannual best-practice notes.

Experts believe these agreements "help strengthen visibility into autonomous behaviors while accelerating standards to mitigate risks," signaling a move toward embedding security-by-design principles in commercial AI development.

Policy backdrop

This voluntary testing program supports broader U.S. federal directives on AI governance. Recent policy initiatives require agencies to assess potential risks, including legal and privacy concerns, for any AI used in national security. Furthermore, legislative proposals encourage public-private sandboxes to develop a standardized model oversight regime, responding to concerns that AI capabilities are outpacing governance.

What risks are being targeted

While international analyses identify threats like AI-facilitated bioweapon design and misinformation, AISI's testing primarily concentrates on two key areas:

- AI-enabled cyberattacks: Assessing a model's ability to find and exploit software vulnerabilities.

- Unpredictable systemic failures: Identifying emergent behaviors that could cause widespread disruption.

Although the program is currently voluntary, reports from Reuters indicate the White House is considering an executive order that could make this vetting compulsory for AI models exceeding a specific capability threshold. Officials have declined to comment on potential policy changes, pointing instead to the program's current technical focus.

What exactly is the US government testing when it gets early access to new frontier models?

Teams at NIST's AI Safety Institute (AISI) run two parallel tracks:

- Capability discovery - models are prompted to write long code chains, design biochemical protocols, plan cyber-intrusions, and generate synthetic media to see if any unexpected "agent-like" behaviors surface.

- Mitigation stress-testing - safety filters are deliberately weakened so red-teamers can measure how easily the model enters a harmful regime and whether simple guard-rails can stop it.

AISI has completed more than 40 of these dual reviews, several on models that have still not been released to the public.

Which companies are now part of the voluntary early-access list?

The line-up has grown from the original participants (OpenAI and Anthropic) to include additional firms:

- Google DeepMind - Gemini family

- Microsoft - Copilot and Azure OpenAI stacks

- xAI - Grok series

- OpenAI - GPT lineage (continuing)

- Anthropic - Claude family (continuing)

All sign the same template agreement that gives government engineers pre-deployment access, shared data sets, and a feedback channel for safety improvements.

Why is "weakened safety" a deliberate step in the tests?

Developers normally ship models with refusal training, alignment fine-tuning, and output filters. AISI asks for special builds where those layers are scaled back or switched off so evaluators can:

- Measure the raw risk ceiling (worst-case capability)

- Check whether lightweight post-training patches can still contain the danger

- Generate hard numeric scores that NIST can use in future standards

This "red-line" approach made headlines after Anthropic's Mythos build located zero-day flaws in network firmware within minutes, prompting fresh White House talks on mandatory vetting.

What kinds of national-security risks sit at the top of the checklist?

The testing protocol focuses on several high-impact, dual-use domains:

- Cyber offense - autonomous vulnerability discovery, exploit writing, lateral movement planning

- Biochemical design - step-by-step lab protocols that lower the skill barrier for toxins or pathogens

- Synthetic influence - mass-produced, high-fidelity disinformation, deep-fake blackmail, or scam campaigns

- Military task planning - logistics, target selection, or swarm-control algorithms that could plug directly into weapon systems

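Each domain gets a severity matrix (probability × impact), and a model's scores are expected to stay below agreed thresholds before wide release. Because the program is voluntary, this is a shared benchmark, not a formal government clearance.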
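To make the probability × impact idea concrete, here is a minimal sketch in Python. It is not AISI's actual scoring method, which has not been published in this form. The `DomainRisk` class, the 0 to 1 probability scale, the 1 to 10 impact scale, and every number below are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DomainRisk:
    """One row of a hypothetical severity matrix (all scales are assumptions)."""
    domain: str
    probability: float  # assumed likelihood of a harmful outcome, 0.0 to 1.0
    impact: float       # assumed severity if it happens, 1 to 10
    threshold: float    # assumed agreed ceiling for probability * impact

    @property
    def severity(self) -> float:
        # Severity is the simple product described in the text.
        return self.probability * self.impact

    @property
    def within_threshold(self) -> bool:
        return self.severity < self.threshold


def flag_domains(risks):
    """Return the names of domains whose severity meets or exceeds the threshold."""
    return [r.domain for r in risks if not r.within_threshold]


# Illustrative numbers only; none of these come from real evaluations.
EXAMPLE_RISKS = [
    DomainRisk("cyber offense", probability=0.30, impact=8.0, threshold=2.0),
    DomainRisk("biochemical design", probability=0.05, impact=10.0, threshold=1.0),
    DomainRisk("synthetic influence", probability=0.40, impact=4.0, threshold=2.0),
    DomainRisk("military task planning", probability=0.10, impact=9.0, threshold=1.0),
]

if __name__ == "__main__":
    for r in EXAMPLE_RISKS:
        status = "ok" if r.within_threshold else "FLAGGED"
        print(f"{r.domain:<24} severity={r.severity:.2f} "
              f"threshold={r.threshold:.2f} {status}")
    print("Flagged:", flag_domains(EXAMPLE_RISKS))
```

With these made-up inputs, only the cyber offense row crosses its ceiling. A real matrix would likely use calibrated estimates and per-domain scales, so treat the product here as the simplest possible reading of "probability × impact".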

Could these voluntary deals become compulsory?

For now the arrangements are opt-in, but draft language circulating in Congress and a possible forthcoming executive order would:

- Require every frontier model above a computational threshold (roughly 10^26 FLOP) to obtain a government certificate before public launch

- Make the AISI evaluation template the default standard, turning today's voluntary data sharing into a licensing step

- Impose civil penalties for undisclosed release or circumvention of the process
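For a sense of scale, the roughly 10^26 FLOP figure can be compared against a rough estimate of a model's training compute. The sketch below uses the widely cited rule of thumb that training a dense transformer costs about 6 × parameters × training tokens in floating-point operations. That heuristic is an assumption brought in here for illustration; the reports do not say how the draft language would measure compute. The function names and the two example models are hypothetical.

```python
# "Roughly 10^26 FLOP", per the draft language described in the article.
COMPUTE_THRESHOLD_FLOP = 1e26


def estimate_training_flop(num_parameters: float, num_tokens: float) -> float:
    """Approximate training compute with the common 6 * N * D rule of thumb.

    This is a heuristic for dense transformers, not an official accounting method.
    """
    return 6.0 * num_parameters * num_tokens


def exceeds_threshold(num_parameters: float, num_tokens: float,
                      threshold: float = COMPUTE_THRESHOLD_FLOP) -> bool:
    """Return True if the estimated training compute is above the threshold."""
    return estimate_training_flop(num_parameters, num_tokens) > threshold


if __name__ == "__main__":
    # Hypothetical model sizes, chosen only to land on either side of the line.
    examples = {
        "hypothetical 70B params, 15T tokens": (70e9, 15e12),
        "hypothetical 1T params, 20T tokens": (1e12, 20e12),
    }
    for name, (params, tokens) in examples.items():
        flop = estimate_training_flop(params, tokens)
        verdict = "above" if exceeds_threshold(params, tokens) else "below"
        print(f"{name}: ~{flop:.2e} FLOP, {verdict} threshold")
```

Under this estimate, the first hypothetical model comes out near 6.3 × 10^24 FLOP and would sit well below the line, while the second comes out near 1.2 × 10^26 FLOP and would cross it.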

Industry lobbyists expect a transition period during which the current voluntary pool becomes the blueprint for the mandatory regime.