UK AI Safety Institute: Autonomous AI Cyber Capability Doubles Every 4.7 Months

Serge Bulaev

The UK AI Safety Institute reports that the ability of autonomous AI systems to handle cyber tasks without help has doubled every 4.7 months since late 2024. This rapid progress may make it harder for organizations to keep up with security threats. The institute warns that its results come from limited tests, so real-world situations may differ, and it is not certain whether this pace will continue. Regulations in the EU now require strict risk checks and logging for high-risk AI. The industry may see more investment in tools for detection, identity security, and AI oversight as these trends continue.

The UK AI Safety Institute's finding that autonomous AI cyber capability doubles every 4.7 months is a critical new metric for security professionals. AISI said frontier AI cyber capability is advancing quickly and that current evidence does not show when specific thresholds will be reached or how capabilities will translate against defended real-world systems. It also warned there is a critical window to build resilience.

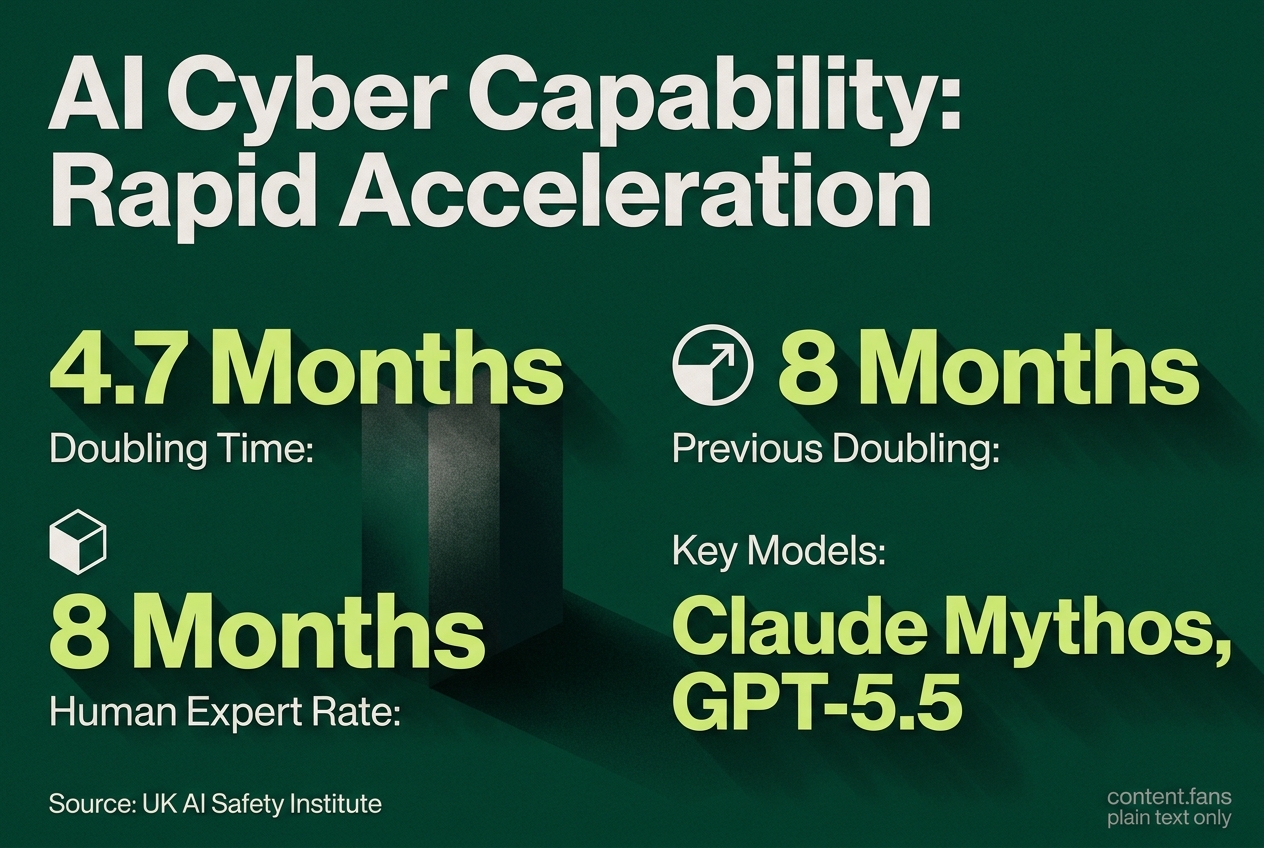

From 8 to 4.7 Months: The Rate of Acceleration

The UK's AI Safety Institute (AISI) reports that the capability of autonomous AI systems for cyber tasks has been doubling every 4.7 months. This rapid acceleration, observed since late 2024, marks a significant decrease from the previous eight-month doubling time estimated in late 2025.

AISI analysts noted the doubling period was estimated at eight months as recently as November 2025, but revised data by February 2026 slashed that timeline to just 4.7 months. Subsequently, two frontier AI systems, Claude Mythos Preview and GPT-5.5, demonstrated capabilities that exceeded even this accelerated curve by solving complex, multistep intrusion scenarios. However, the AISI cautions that these results originated from tests on small, undefended networks.
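To make the difference between the two estimates concrete, the short sketch below converts a doubling time into a growth multiple over a fixed window. It assumes the doubling time stays constant over that window, which AISI itself treats as uncertain, and the function name `growth_factor` is purely illustrative.

```python
def growth_factor(months: float, doubling_time_months: float) -> float:
    """Multiplicative growth over `months`, assuming a constant doubling time."""
    return 2.0 ** (months / doubling_time_months)


# Compare the earlier eight-month estimate with the revised 4.7-month estimate.
for doubling_time in (8.0, 4.7):
    factor = growth_factor(12.0, doubling_time)
    print(f"doubling every {doubling_time} months -> {factor:.2f}x in 12 months")
# doubling every 8.0 months -> 2.83x in 12 months
# doubling every 4.7 months -> 5.87x in 12 months
```

Under this simple model, moving from an eight-month to a 4.7-month doubling time roughly doubles the capability gain expected over a single year.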

Key performance notes reported by AISI:

- AISI reported cyber time-horizon benchmarks, including an estimate based on a 2.5 million token limit, and separately discussed benchmarks at 80% reliability. The source does not present these as a single combined experimental definition.

- The Claude Mythos Preview model showed significant success on "The Last Ones" range according to industry reports.

- The same model demonstrated notable performance on the "Cooling Tower" scenario.

- GPT-5.5 achieved meaningful results on "The Last Ones" and performed less effectively on other tasks.

The institute emphasizes that these test ranges do not reflect real-world enterprise defenses and that it remains uncertain if this rapid acceleration is a sustained trend or a temporary outlier.

Understanding the Benchmarks and Their Limitations

The Frontier AI Trends Report indicates that when measured by human expert hours, the doubling time for cyber task length remains closer to eight months. This discrepancy with the 4.7-month rate highlights how factors like advanced tooling, scaffolding, and sophisticated prompt engineering can significantly boost apparent AI capabilities. The AISI also warns that current benchmarks may soon be "saturated," complicating future performance evaluations.
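A doubling time of this kind is typically read off a trend line: fit a straight line to the base-2 logarithm of task length against time, then invert the slope. The sketch below illustrates that general technique on synthetic series built to double every 8 and 4.7 months. The data are not AISI measurements, and nothing here should be read as AISI's actual fitting procedure.

```python
import math


def doubling_time_months(months, task_lengths):
    """Least-squares fit of log2(task length) against time, returned as a doubling time."""
    n = len(months)
    logs = [math.log2(length) for length in task_lengths]
    mean_t = sum(months) / n
    mean_y = sum(logs) / n
    cov = sum((t - mean_t) * (y - mean_y) for t, y in zip(months, logs))
    var = sum((t - mean_t) ** 2 for t in months)
    slope = cov / var  # doublings per month
    return 1.0 / slope


# Synthetic, illustrative series only (NOT AISI data): the same observation
# dates scored under two hypothetical measurement setups.
months = [0, 3, 6, 9, 12]
measured_in_expert_hours = [2 ** (t / 8.0) for t in months]
measured_with_scaffolding = [2 ** (t / 4.7) for t in months]

print(round(doubling_time_months(months, measured_in_expert_hours), 2))
print(round(doubling_time_months(months, measured_with_scaffolding), 2))
```

The point of the sketch is that the headline number depends on what sits on the vertical axis, so two doubling times can describe the same period without contradicting each other.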

Implications for Cybersecurity Defenses and Investment

This acceleration in autonomous capability directly translates to shrinking response windows for defenders. The UK institute has issued a clear warning: "the time to invest in strong security baselines is now." This sentiment is echoed by industry analysis, which draws parallels between the AISI's findings and the 4.2-month doubling time for software-engineering capabilities observed by the nonprofit METR.

Security teams are now facing three immediate pressures:

- Continuous Operations: Shift from periodic scans to continuous detection and response (CDR).

- Robust Access Control: Prioritize early deployment of identity and access controls capable of resisting automated lateral movement.

- AI Governance: Implement governance mechanisms that meticulously log all model activity to support audits and incident reconstruction.
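As one way to picture the logging pressure in the last item, here is a minimal sketch of a tamper-evident audit trail for model activity. It assumes an in-memory list and a simple SHA-256 hash chain. The field names, the `append_entry` and `verify_chain` helpers, and the sample actor and actions are all hypothetical rather than taken from AISI or any regulation.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, actor: str, action: str, detail: dict) -> dict:
    """Append one model-activity record, chained to the previous record's hash."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "detail": detail,  # must be JSON-serializable
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return True only if every entry is unmodified and linked to its predecessor."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, "agent-01", "tool_call", {"tool": "dns_lookup", "target": "example.com"})
append_entry(audit_log, "agent-01", "model_output", {"summary": "lookup complete"})
print(verify_chain(audit_log))
```

Chaining each record to the hash of the previous one means a later edit to any entry breaks verification, which is the property audits and incident reconstruction rely on. A production system would also need durable, access-controlled storage, which this sketch leaves out.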

The Evolving Regulatory and Compliance Landscape

The regulatory environment is evolving to address these advancements. The European Union's AI Act (Regulation (EU) 2024/1689) mandates that high-risk AI systems undergo documented risk assessments, maintain activity logs, and integrate robust cybersecurity measures. A new EU AI Office will provide centralized oversight for general-purpose AI, while the emerging United Nations Cybercrime Convention is expected to enhance cross-border cooperation on cybercrime investigations.

Standards adoption is also accelerating. Key frameworks include ISO/IEC 42001 for AI management systems, complemented by established standards like ISO 27001 and the NIST Cybersecurity Framework for technical controls. Regulatory analysis suggests a clear preference for continuous monitoring solutions over static, point-in-time certifications.

Key Industry Shifts and Market Trends

In response, the market is shifting toward AI-enabled detection, autonomous incident response, and managed security services. It's crucial to differentiate this autonomy - where learning systems can select actions without predefined rules - from simple automation. This trend is amplified by reports of median lateral movement times dropping from hours to minutes, forcing security operations centers (SOCs) to automate their containment procedures.

Consequently, investment is expected to concentrate on key areas like endpoint detection and response (EDR), identity security, and cloud-native application protection platforms (CNAPPs). Analysts also predict significant growth in the market for AI governance tools designed to monitor prompts, model drift, and potential misuse.

How fast is autonomous AI cyber capability really advancing?

The UK AI Safety Institute now pegs the doubling time at 4.7 months, down from an 8-month estimate in late 2025.

Claude Mythos Preview and GPT-5.5 already beat that pace, solving complex cyber-range tasks that would have stumped earlier models.

In practical terms, a 4.7-month doubling time compounds to roughly 5.9× growth in autonomous capability over 12 months (2^(12/4.7)), compared with about 2.8× under the earlier eight-month estimate.

Why does the 4.7-month figure matter to defenders?

AISI's benchmarks track the time horizon of cyber tasks that models can complete, with reported figures referencing 80% reliability and a 2.5M token limit. Some commentary reads the trend as a shrinking gap between basic demos and fully autonomous attacks, but that interpretation goes beyond what the benchmark itself states.

AISI warns benchmark saturation may hide true ceilings, so defensive roadmaps need to assume faster real-world gains than the lab curve shows.

Are frontier models already crossing red-line thresholds?

Inside AISI's cyber ranges, Claude Mythos Preview showed significant success on the multi-stage "The Last Ones" scenario; GPT-5.5 achieved meaningful results on it as well.

Both models required no human prompting after initial access, illustrating a shift toward end-to-end autonomy rather than isolated exploit suggestions.

What should organizations do before the next doubling?

- Shrink response time: move from hourly to sub-minute detection and containment

- Invest in identity-first zero trust; lateral-movement speed is now minutes, not hours

- Adopt continuous model-risk reviews as new checkpoints ship every few months

- Share telemetry via sector-wide exchanges; isolated views miss swarm-speed campaigns

- Budget for AI governance tooling - regulators are tightening audit and logging rules in parallel with capability jumps

Where can I follow the official AISI measurements?

Updated task-length curves and model scores are posted on the AISI Work blog at how-fast-is-autonomous-ai-cyber-capability-advancing.