OpenAI unveils disaggregated Realtime-2 voice models for enterprises

Serge Bulaev

OpenAI has introduced new voice models called Realtime-2, Realtime-Translate, and Realtime-Whisper, which may help enterprises by splitting tasks like reasoning, translation, and transcription into separate parts. This separation might let companies control costs and speed, and different industries are already testing the models for things like customer calls, global support, and medical notes. OpenAI's new Deployment Company may help customers use these voice tools in their daily work. The 128K token capacity of Realtime-2 is likely more than most calls need, and developers are advised to watch for higher costs and delays if they use too many tokens. These new models suggest companies can build flexible voice systems without having to rebuild everything from scratch.

OpenAI's launch of its disaggregated Realtime-2 voice models - Realtime-2, Realtime-Translate, and Realtime-Whisper - marks a significant shift in enterprise AI. With a 128K token context, these models separate reasoning, translation, and transcription, allowing developers to build highly efficient, task-specific voice applications. This release aligns with OpenAI's strategic move toward the "practical adoption" of AI in daily operations, supported by significant investment in its new OpenAI Deployment Company.

Why the split matters for enterprise

OpenAI's disaggregated voice models provide enterprises with modular components for reasoning (Realtime-2), translation (Realtime-Translate), and transcription (Realtime-Whisper). This separation allows teams to build custom voice pipelines, selecting the most cost-effective and performant model for each specific task, enhancing both flexibility and efficiency.

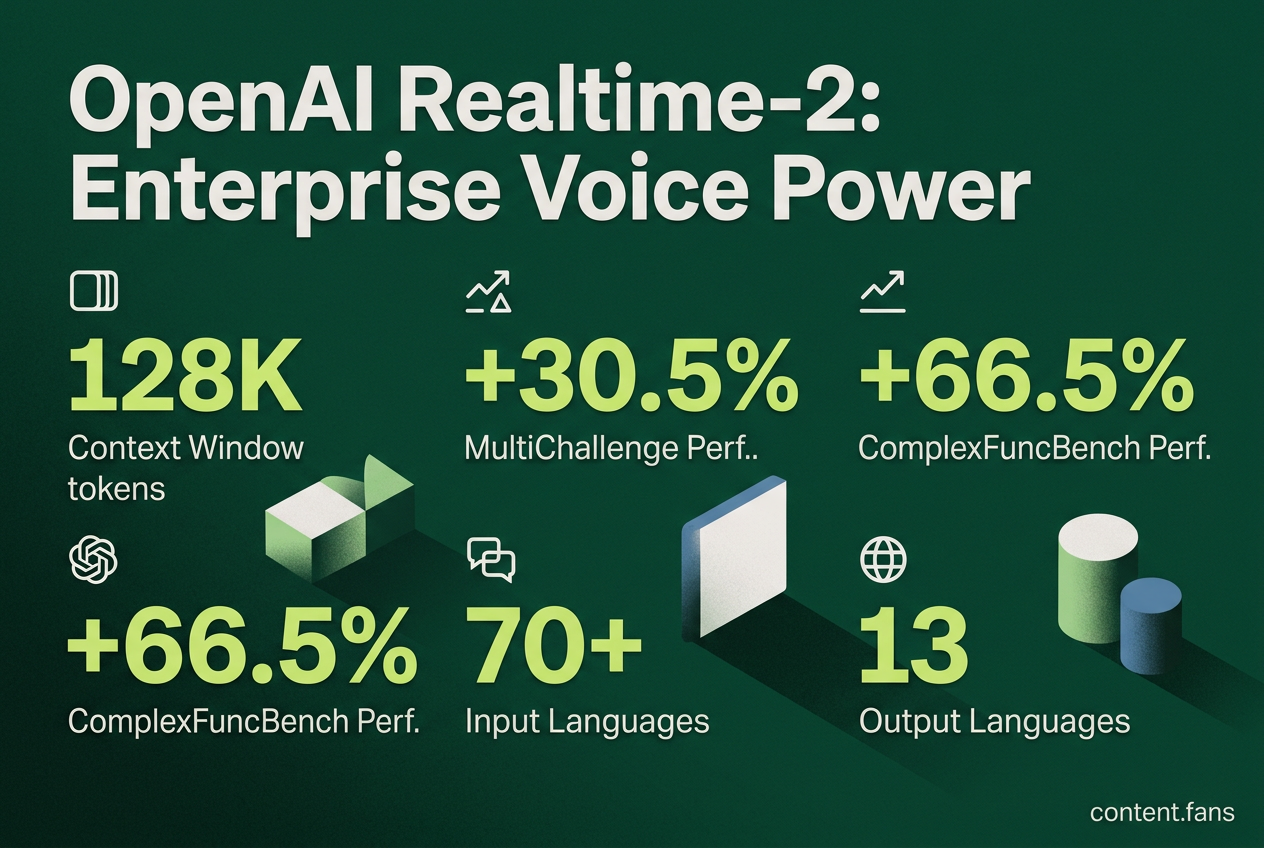

By splitting the voice stack into three distinct API endpoints, development teams gain granular control over application latency and cost. Realtime-2 manages complex dialog, Realtime-Whisper provides streaming transcription, and Realtime-Translate handles multilingual communication. According to available sources, OpenAI cited performance improvements showing 30.5% vs 20.6% results on MultiChallenge audio and 66.5% vs 49.7% on ComplexFuncBench compared to previous models.

Early adoption patterns

- Contact Centers: Deploying Realtime-2 agents to manage routine calls, complete with interruption handling and "preamble" fillers that improve the user experience by reducing perceived lag.

- Global Support: Embedding Realtime-Translate to facilitate real-time conversations across over 70 input languages and 13 output languages.

- Regulated Industries: Leveraging Realtime-Whisper to stream live transcripts for automated compliance monitoring dashboards.

- Healthcare: Piloting voice-driven triage systems where Realtime-2 interprets clinical terminology and generates structured notes for electronic health records.

- Field Services: Integrating translation and transcription into mobile apps, enabling technicians to log tasks hands-free.

Alignment with OpenAI Deployment Company

The new OpenAI Deployment Company supports this modular approach by embedding engineers directly within customer teams. This hands-on service model is designed to accelerate voice integration by helping enterprises refactor workflows around the three specialized models, rather than providing a one-size-fits-all bot.

128K context - useful but often overhead

While Realtime-2 features an impressive 128K token context window, most applications won't require its full capacity. For instance, a typical five-minute support call consumes only 4,000 to 5,500 tokens. The extended window serves as valuable headroom for complex scenarios, but developers must balance its use against potential increases in latency and cost, making techniques like rolling summaries essential.

Snapshot: model selection cheat sheet

- Realtime-2: Ideal for intelligent voice agents that can reason, interact with tools, and manage interruptions ("barge-in").

- Realtime-Translate: Best for live multilingual meetings, global customer support, and cross-language communication.

- Realtime-Whisper: The solution for ultra-low-latency transcription, live captions, and compliance logging.

By combining these models with existing Text-to-Speech (TTS) services, enterprises can construct powerful, modular voice pipelines without overhauling their entire tech stack. This focus on orchestration highlights OpenAI's broader strategy of moving AI from prototypes into production workflows across key sectors like healthcare, science, and large-scale customer operations.

What makes OpenAI's Realtime-2 family different from earlier bundled voice models?

OpenAI replaced the single "do-everything" voice stack with three specialized primitives:

- Realtime-2 handles live conversational reasoning

- Realtime-Translate performs streaming speech-to-speech translation across 70+ input and 13 output languages

- Realtime-Whisper supplies ultra-low-latency transcription

This disaggregation lets enterprises route each task to the model that is cheapest and fastest for that job, improving both cost and capability trade-offs instead of locking all features into one large, expensive endpoint.

How does the 128K-token context window change voice-agent design?

The 128K window lets a single call hold roughly five hours of spoken conversation or large documents alongside the dialogue, enabling:

- long technical-support or intake sessions without truncation

- real-time retrieval of policy PDFs, manuals, or knowledge-base articles during the call

- multi-issue troubleshooting that references earlier parts of the conversation

Most voice calls need only 2,000-5,000 tokens, so the extra space is optional headroom for complex enterprise workflows rather than a hard requirement for every deployment.

Which enterprise teams are already integrating Realtime-2, Translate, and Whisper?

According to industry reports, early adopters include:

- Global contact centers that embed Translate for live multilingual support

- Healthcare providers piloting Realtime-2 for clinical-documentation assistants that summarize doctor-patient dialogue in real time

- Field-service companies adding Whisper to mobile apps for hands-free note-taking during repairs

OpenAI's newly-formed Deployment Company is placing forward-deployed engineers inside customers' operations to accelerate these roll-outs and redesign workflows around voice-first agents.

How do the three models perform on public benchmarks?

According to available sources, OpenAI cited performance improvements showing 30.5% vs 20.6% results on MultiChallenge audio and 66.5% vs 49.7% on ComplexFuncBench compared to previous models. Industry reports suggest significant improvements in call-quality benchmarks after switching to Realtime-2, illustrating the practical impact of task-specific tuning.

When should a developer choose a competitor like Google or Deepgram over OpenAI's stack?

Choose OpenAI when you need reasoning-plus-voice in one tightly-integrated API - for example, an agent that answers questions, calls tools, and handles interruptions. Choose cloud rivals such as Google Speech-to-Text, Azure Translator, or Deepgram when you require:

- broader enterprise compliance certifications

- on-prem or single-tenant hosting

- heavy customization of acoustic models for niche vocabularies

For pure high-volume transcription without conversational logic, specialized ASR providers often deliver lower per-minute cost and richer diarization features than the general-purpose Whisper endpoint.