Palo Alto Networks: AI Speeds Cyberattacks 4X, Firms Have 5 Months

Serge Bulaev

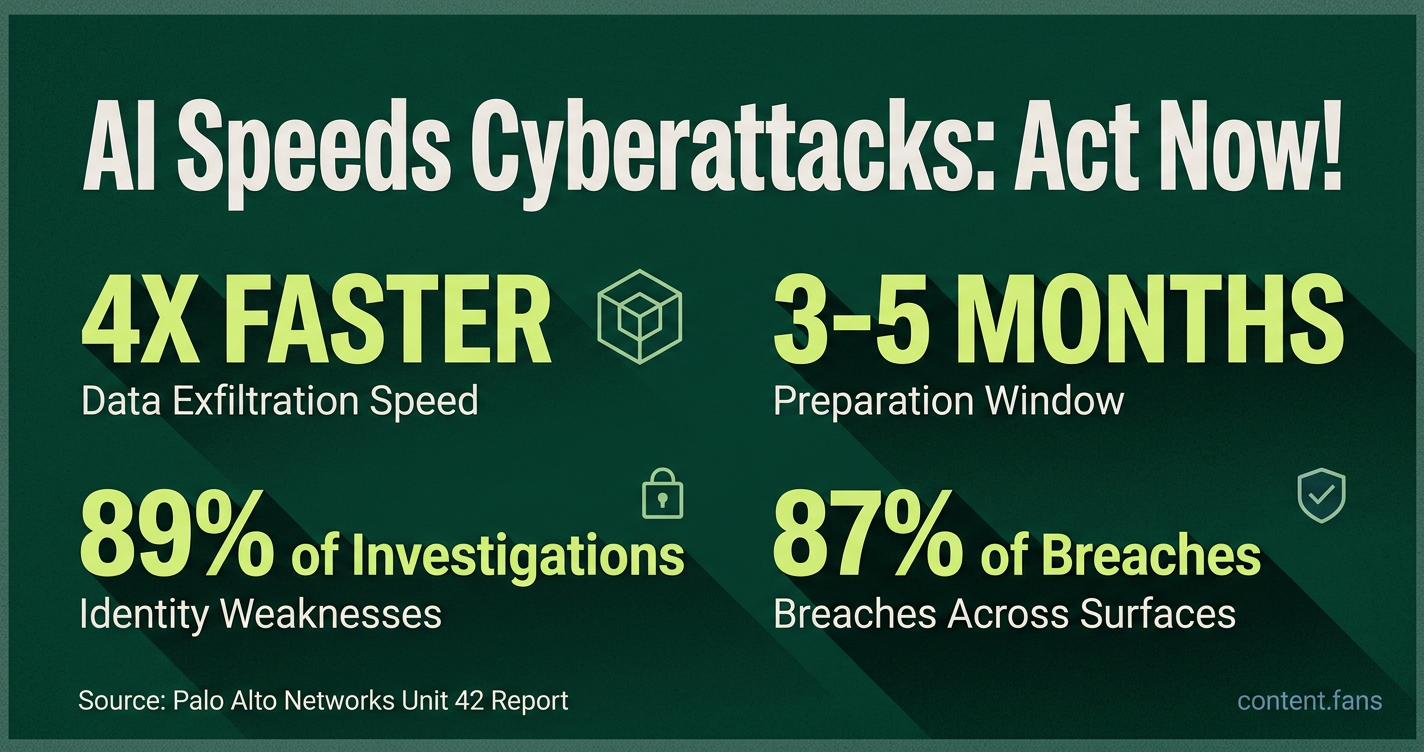

Palo Alto Networks warns that AI is making cyberattacks dramatically faster and that AI-accelerated tactics could soon become routine. Its research suggests attackers can now steal data four times faster than a year ago, and the company's technology chief says organizations may have only three to five months before these methods are commonplace. Large-scale, fully automated attacks still appear to be in testing, but the time to prepare is running out. Security teams that integrate their defenses and focus on identity protection may be best placed to stop these new attacks.

Palo Alto Networks warns that AI is rapidly reshaping the threat landscape. Its Unit 42 research shows attackers now exfiltrate data four times faster than a year ago. By pairing large language models (LLMs) with automated tooling, adversaries are compressing the entire attack lifecycle, and the company expects advanced, AI-driven attacks to become the new normal.

AI-Driven Attacks: The New Normal

According to the Unit 42 2026 Global Incident Response Report, generative AI lets adversaries compress the entire intrusion lifecycle, from reconnaissance to data theft.

In a televised interview, technology chief Lee Klarich cautioned that firms have "a three-to-five-month window" to prepare before these AI-accelerated tactics become routine and widespread. His warning draws on the same Unit 42 report, which found identity weaknesses in 89 percent of investigations and breaches spanning multiple attack surfaces in 87 percent (press release).

Frontier-scale models are at the center of this concern. According to CNBC, Klarich highlighted Anthropic's experimental "Mythos" system as a tool that can rapidly enumerate and chain exploits, although Anthropic has limited access to trusted testers. OpenAI's rumored "GPT-5.5-Cyber" has also drawn analyst discussion but remains unconfirmed.

Google's Threat Intelligence Group offered real-world corroboration, disclosing on May 11, 2026, that it had disrupted a zero-day exploit against a web administration tool. As reported by The Verge, investigators noted stylistic fingerprints suggesting an LLM drafted the Python payload, including a "hallucinated CVSS score" within the code. Google said the intervention stopped what it called a planned "mass exploitation event."

How Attackers Are Using AI Today

- Parallel reconnaissance across hundreds of targets.

- Rapid exploit writing and debugging with conversational agents.

- Scalable ransomware deployment that reduces operator workload.

- Insider misuse of corporate chatbots for denial-of-service scripts.

- Deepfake voice or video to defeat identity verification checks.

Defensive Priorities in a Compressed Timeline

Palo Alto Networks recommends shifting from siloed security products to an integrated platform with identity at its core. Industry guidance converges on four immediate actions:

- Roll out phishing-resistant multi-factor authentication for all privileged roles.

- Shorten emergency-patch service-level objectives and apply virtual patching where vendor updates cannot be deployed quickly.

- Build a living asset inventory that spans endpoints, cloud workloads, SaaS, and AI tools.

- Rehearse incidents involving deepfake fraud, token theft, and AI-generated malware, ensuring that legal, HR, and communications teams can respond at the same speed as technical staff.

The available evidence suggests that while large-scale autonomous attacks are still in early testing, the window for preparation is closing quickly. Security teams that unify detection, response, and identity controls into a single workflow will be better positioned to withstand the next wave of AI-enabled intrusions.

What does Palo Alto Networks mean by "AI speeds cyberattacks 4X"?

The Unit 42 2026 Global Incident Response Report shows the fastest attacks moved from initial access to data theft four times faster in 2025 than in 2024.

Key driver: criminals now run AI-powered reconnaissance, phishing, and code generation in parallel across hundreds of targets, then swarm the weakest link.

Median "breakout time" for AI-assisted intrusions has fallen to under 45 minutes, forcing defenders to measure response in minutes rather than days.

Which frontier models are raising concern?

Palo Alto calls out Anthropic's Mythos research preview as an example of the powerful code-generation capabilities now being tested, even though access is currently limited to trusted testers.

A rumored OpenAI "GPT-5.5-Cyber" variant, referenced in briefings but unconfirmed and not a commercial product, has reportedly appeared in dark-web tutorials showing how to auto-write exploits.

Google separately confirmed it disrupted a real-world zero-day exploit that investigators believe was drafted with an LLM and intended for a mass exploitation event, showing that the risk is current rather than theoretical.

How short is the "window to outpace adversaries"?

Palo Alto warns organizations have three-to-five months (counting from early 2026) before AI-driven attacks become the everyday norm.

After that, unpatched systems may be breached faster than patches can be tested.

CISOs are urged to move to weekly or daily patch cadences for internet-facing assets and adopt virtual patching when downtime is impossible.

What four defensive priorities buy the most time?

- Faster patching - aim for ≤ 7 days on critical CVEs; use exposure-management scanners to verify.

- Better asset visibility - forgotten and shadow-IT systems are easy footholds, because defenders cannot protect assets they have not inventoried.

- Stronger authentication - phishing-resistant MFA and just-in-time admin tokens blunt deepfake-assisted login attempts.

- Realistic incident rehearsal - tabletop exercises covering deepfake CEO fraud and ransomware scenarios cut mean time to contain by 35% in customer tests.

Where can teams find measurable goals for readiness?

The UK's AI Security Institute recommends setting service-level objectives (SLOs) such as:

- ≤ 24 h to patch critical vulnerabilities

- ≤ 60 min to revoke exposed credentials

- ≤ 15 min to quarantine a compromised endpoint

Track these weekly; share red-team results with the board to keep AI-risk funding data-driven, not fear-driven.
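Tracking these objectives weekly can be as simple as counting how many response actions met their target. The Python sketch below is one possible approach under stated assumptions: the record type `ResponseEvent`, the metric labels such as `patch_critical`, and the function `slo_compliance` are all hypothetical, not an AI Security Institute tool or format. It turns the three targets listed above into a per-metric compliance figure for one reporting period.

```python
from dataclasses import dataclass
from datetime import timedelta

# SLO targets from the list above; the metric keys are hypothetical labels.
SLO_TARGETS = {
    "patch_critical": timedelta(hours=24),
    "revoke_credentials": timedelta(minutes=60),
    "quarantine_endpoint": timedelta(minutes=15),
}


@dataclass
class ResponseEvent:
    """One measured response action (hypothetical record format)."""
    metric: str         # one of the SLO_TARGETS keys
    elapsed: timedelta  # time from detection to completed action


def slo_compliance(events: list[ResponseEvent]) -> dict[str, float]:
    """Return the fraction of events per metric that met their SLO target.

    Metrics with no events in the period are omitted rather than
    reported as 100% compliant.
    """
    met: dict[str, int] = {}
    total: dict[str, int] = {}
    for event in events:
        target = SLO_TARGETS[event.metric]
        total[event.metric] = total.get(event.metric, 0) + 1
        if event.elapsed <= target:
            met[event.metric] = met.get(event.metric, 0) + 1
    return {metric: met.get(metric, 0) / count for metric, count in total.items()}


if __name__ == "__main__":
    # Illustrative, made-up events for one week.
    week = [
        ResponseEvent("patch_critical", timedelta(hours=20)),
        ResponseEvent("patch_critical", timedelta(hours=30)),
        ResponseEvent("revoke_credentials", timedelta(minutes=45)),
        ResponseEvent("quarantine_endpoint", timedelta(minutes=22)),
    ]
    for metric, rate in slo_compliance(week).items():
        print(f"{metric}: {rate:.0%} within target")
```

Reporting the share of actions that met each target, rather than an average response time, maps directly onto a pass/fail SLO, and metrics with no events that week are left out instead of being shown as perfect.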