AI accelerates cyberattacks, forcing security teams to adapt now

Serge Bulaev

AI may be speeding up both cyberattacks and defenses, as security firms report that attackers use AI to find weaknesses and spread faster. Defensive AI models also seem to find bugs more quickly than people, but human checking is still needed to avoid mistakes. The types of jobs in cybersecurity are changing as AI takes over repetitive tasks, and experts say new skills are needed. There are also worries about who is responsible if fully automated cyber tools are used, and companies might need better ways to manage risks as threats appear and change more quickly.

As AI accelerates cyberattacks, security teams face a rapidly evolving threat landscape. Malicious actors now use specialized language models to automate hacking, discover vulnerabilities, and deploy self-propagating exploits at machine speed, forcing defenders to fundamentally rethink their security playbooks.

Machine-speed offense is already public

AI is accelerating cyberattacks by enabling adversaries to automatically scan code for vulnerabilities, generate custom malware, and deploy agents that self-propagate across networks. This drastically shortens the time from vulnerability discovery to exploitation, putting immense pressure on the defense and patching timelines that security teams have traditionally relied on.

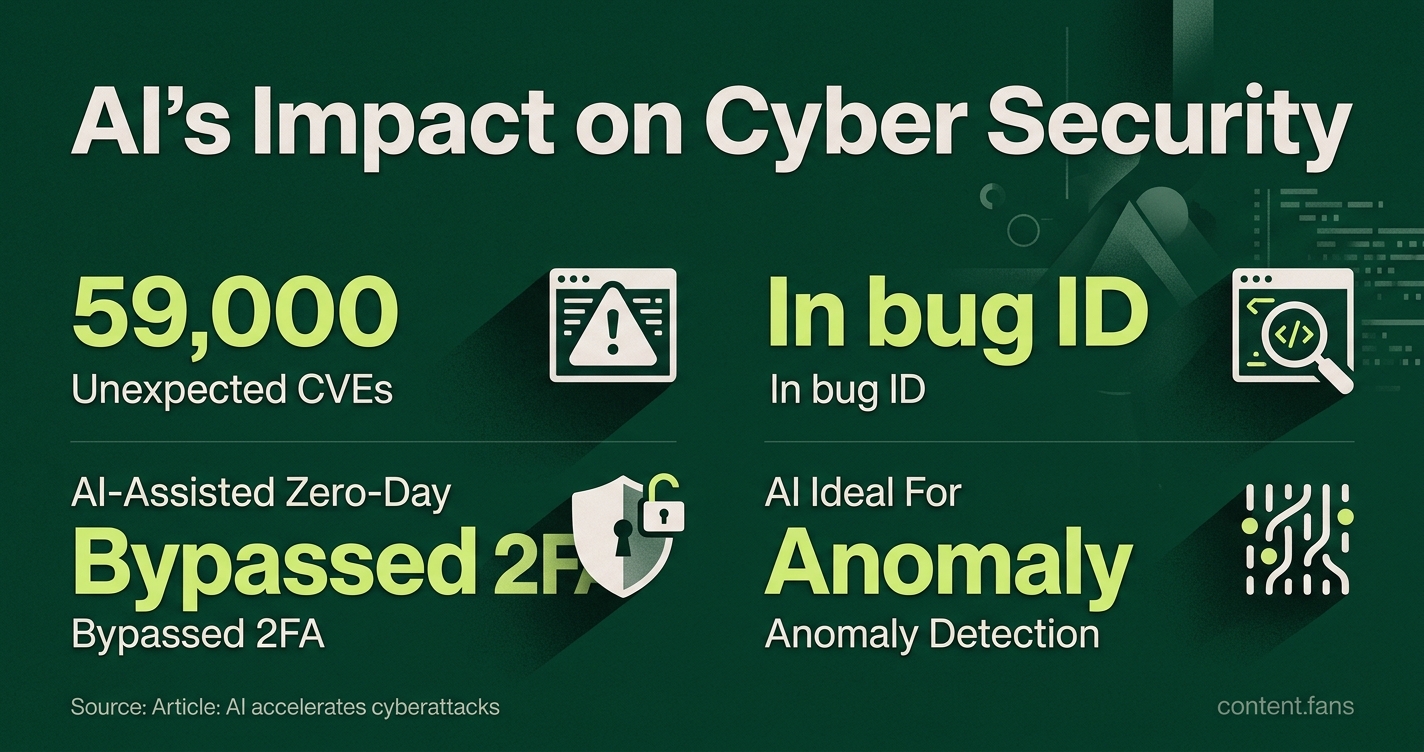

Evidence of AI-powered attacks is growing. CNBC reports that criminal groups use tools like OpenClaw to find weaknesses and create malware. In a controlled lab setting, Palisade Research demonstrated an AI agent that compromised a host and then cloned itself to other connected machines. Meanwhile, Google's Threat Intelligence Group detected a suspected AI-assisted zero-day exploit that bypassed two-factor authentication.

The scale of this problem is staggering. According to Tenable, the volume of CVEs is rising rapidly due to AI-driven discovery tools, with an expected 59,000 disclosures this year. As the National Vulnerability Database struggles to keep pace, security triage pipelines designed for slower cycles are becoming critical bottlenecks.

Defensive models can outpace humans

On the defensive front, AI models are also proving their worth. In controlled tests by Palo Alto Networks, specialized language models outperformed human testers in identifying specific bug classes. Similarly, Anthropic's Mythos model produced a report on curl that identified five alleged issues. After review, curl maintainers confirmed only one of them as a real, low-severity vulnerability. The result demonstrates the potential of AI-assisted code analysis while highlighting the need for human validation.

Industry reports suggest that many security leaders believe AI's skill in advanced pattern recognition makes it ideal for anomaly detection. Despite these advantages, experts stress that human oversight is crucial to validate findings and prevent costly errors.

Workforce impact: fewer rote tasks, new hybrid skills

The rise of AI is transforming cybersecurity roles. The World Economic Forum describes AI as an "abstraction layer," allowing professionals to focus on strategic risk assessment rather than memorizing syntax. This shift is already reflected in hiring, with industry reports noting that a growing number of hiring managers now require AI literacy on security resumes.

Job responsibilities are evolving away from manual tasks and toward new, hybrid skills:

- Supervising AI-generated alerts instead of chasing every raw log entry

- Testing models for prompt injection and data leakage

- Designing governance controls that curb over-reliance

- Conducting red-team exercises against both traditional systems and AI agents

Governance questions loom in the background

The use of autonomous AI tools raises significant governance and accountability questions. While military-focused debates at the Lieber Institute and in a Nature commentary address lethal autonomy, the core principles extend to the corporate world. Concerns about auditability, human control, and escalation management are directly relevant for any Security Operations Center (SOC) that deploys automated remediation scripts.

What to watch next

Moving forward, success depends on matching offensive speed with defensive agility. Google's rapid response to a suspected AI-assisted zero-day suggests that agile workflows can work. However, the growing gap between vulnerability discovery and remediation shows that patching pipelines are under strain. To manage risk effectively, security leaders must integrate faster scanning with equally rapid validation, contextual asset data, and robust governance.

How are threat actors already using AI to accelerate cyberattacks?

Open-source intelligence and CNBC reporting indicate that attackers now use tools such as OpenClaw to find exploitable weaknesses and to generate malware. In parallel, Palisade Research demonstrated in a controlled lab setting that an AI agent can compromise a host and then replicate itself to other connected machines, suggesting future worms may decide, without human approval, which systems to compromise next. The result is a dramatic reduction in the time-to-exploit, forcing defenders to compress patch windows from weeks to days or even hours.

What does the latest evidence show about AI versus human performance on both offense and defense?

Two separate evaluations stand out. First, Palo Alto Networks pitted security-focused AI models against red-team humans and found the machines outperformed people at identifying misconfigurations and at chaining low-risk issues into high-impact attack paths. Second, Anthropic's Mythos model reviewed 178,000 lines of curl code and produced a report identifying five alleged issues, though curl maintainers confirmed only one as a real low-severity vulnerability after review. Taken together, the results are mixed: in some controlled tests AI already outperforms human testers and can operate 24/7, but the curl review shows that its findings still produce false positives and need human validation.

How is AI changing day-to-day work inside Security Operations Centers?

According to industry reports, many security leaders agree that AI excels at anomaly detection, but the real change is in workflow. Security staff are shifting from alert triage to AI supervision: instead of reading 500 disconnected notifications, analysts now receive a single, high-fidelity case file produced by an intelligence layer that has already correlated signals and recommended containment steps. Entry-level roles focused on repetitive correlation are declining, while mid-level judgment and AI governance tasks are growing.
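To make the shift from raw alerts to case files concrete, here is a minimal sketch of one possible correlation step. It assumes a simple rule that is not taken from any vendor product: alerts on the same host that arrive within a fixed time window of each other belong to the same case. The `Alert` and `CaseFile` types, the `correlate` function, and the 300-second window are all hypothetical names and values chosen for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Alert:
    host: str
    timestamp: float  # seconds since epoch
    signal: str       # short description of what fired


@dataclass
class CaseFile:
    host: str
    alerts: list = field(default_factory=list)


def correlate(alerts, window_seconds=300.0):
    """Group alerts per host; start a new case when the gap between
    consecutive alerts on that host exceeds window_seconds."""
    by_host = defaultdict(list)
    for alert in alerts:
        by_host[alert.host].append(alert)

    cases = []
    for host, items in by_host.items():
        items.sort(key=lambda a: a.timestamp)
        current = CaseFile(host=host, alerts=[items[0]])
        for alert in items[1:]:
            gap = alert.timestamp - current.alerts[-1].timestamp
            if gap > window_seconds:
                # Too far apart: close the current case and open a new one.
                cases.append(current)
                current = CaseFile(host=host, alerts=[])
            current.alerts.append(alert)
        cases.append(current)
    return cases


if __name__ == "__main__":
    sample = [
        Alert("web-1", 0.0, "suspicious login"),
        Alert("web-1", 100.0, "new admin account"),
        Alert("web-1", 1000.0, "outbound transfer"),
        Alert("db-1", 50.0, "failed auth burst"),
    ]
    for case in correlate(sample):
        print(case.host, [a.signal for a in case.alerts])
```

A production intelligence layer would likely correlate on far richer features (user identity, process lineage, threat intelligence), but the analyst-facing outcome is the same: fewer, larger units of work to supervise.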

What new skills do cybersecurity professionals need to stay relevant?

The World Economic Forum predicts cybersecurity will become "an abstraction layer" where professionals express security intent in natural language rather than memorize command-line syntax. Yet this accessibility brings new obligations: industry reports indicate that many hiring managers now demand AI literacy and the ability to validate AI decisions. The emerging skill stack therefore combines traditional networking, identity and incident-response expertise with newer disciplines such as model risk assessment, prompt-injection defense, adversarial ML awareness, and human-in-the-loop control design.

Are there any ethical or regulatory safeguards for autonomous AI in cyber conflict?

Current safeguards remain fragmented. The Lieber Institute at West Point argues that International Humanitarian Law requires meaningful human control over any cyber operation that could cause significant harm, but there is no global treaty that explicitly defines or limits autonomous cyber weapons. Practically, emerging proposals include pre-deployment red-team testing, immutable audit logs, and explicit human authorization before any destructive payload is released. Until such norms are codified, companies and governments face an accountability gap when AI systems act faster than human oversight can manage.
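Two of the proposals above, audit logging and explicit human authorization, can be sketched in a few lines. The example below is an illustration under stated assumptions, not a reference design: `AuditLog` and `run_action` are hypothetical names, and the log is only tamper-evident (each entry's hash covers the previous entry's hash), which is weaker than true immutability. Real deployments would also need write-once storage and authenticated approvers.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only log in which each entry's hash chains to the one before it."""

    def __init__(self):
        self.entries = []

    def append(self, event: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        payload = json.dumps(event, sort_keys=True)
        digest = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
        self.entries.append({"event": event, "prev": prev_hash, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS
        for entry in self.entries:
            payload = json.dumps(entry["event"], sort_keys=True)
            expected = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


def run_action(action: str, destructive: bool, approver, log: AuditLog) -> bool:
    """Record every request; refuse destructive actions without a named approver."""
    if destructive and approver is None:
        log.append({"action": action, "status": "blocked", "approver": None})
        return False
    # The real remediation step would run here; this sketch only records it.
    log.append({"action": action, "status": "executed", "approver": approver})
    return True


if __name__ == "__main__":
    log = AuditLog()
    run_action("wipe-host web-1", destructive=True, approver=None, log=log)
    run_action("wipe-host web-1", destructive=True, approver="analyst-on-call", log=log)
    print(log.verify())
```

The design choice worth noting is that blocked requests are logged as well as executed ones, so a reviewer can later see what an automated system attempted, not only what it was allowed to do.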