UK AI Institute: Autonomous Cyber AI Capability Doubles Every 4.7 Months

Serge Bulaev

The UK AI Safety Institute reports that autonomous AI cyber capabilities may now be doubling every 4.7 months, down from an earlier estimate of eight months. Growth at this pace makes it harder for defenders to keep up, because attackers' tools may improve faster than security teams can respond. Some models stand out: Claude Mythos Preview completed a hard multi-step attack scenario in more than half of its attempts, and AI agents now complete apprentice-level cyber tasks about 50 percent of the time. Experts suggest that defenders may need to act in minutes instead of hours to match the speed of new AI threats. Analysts also note that current tests might underestimate the real dangers.

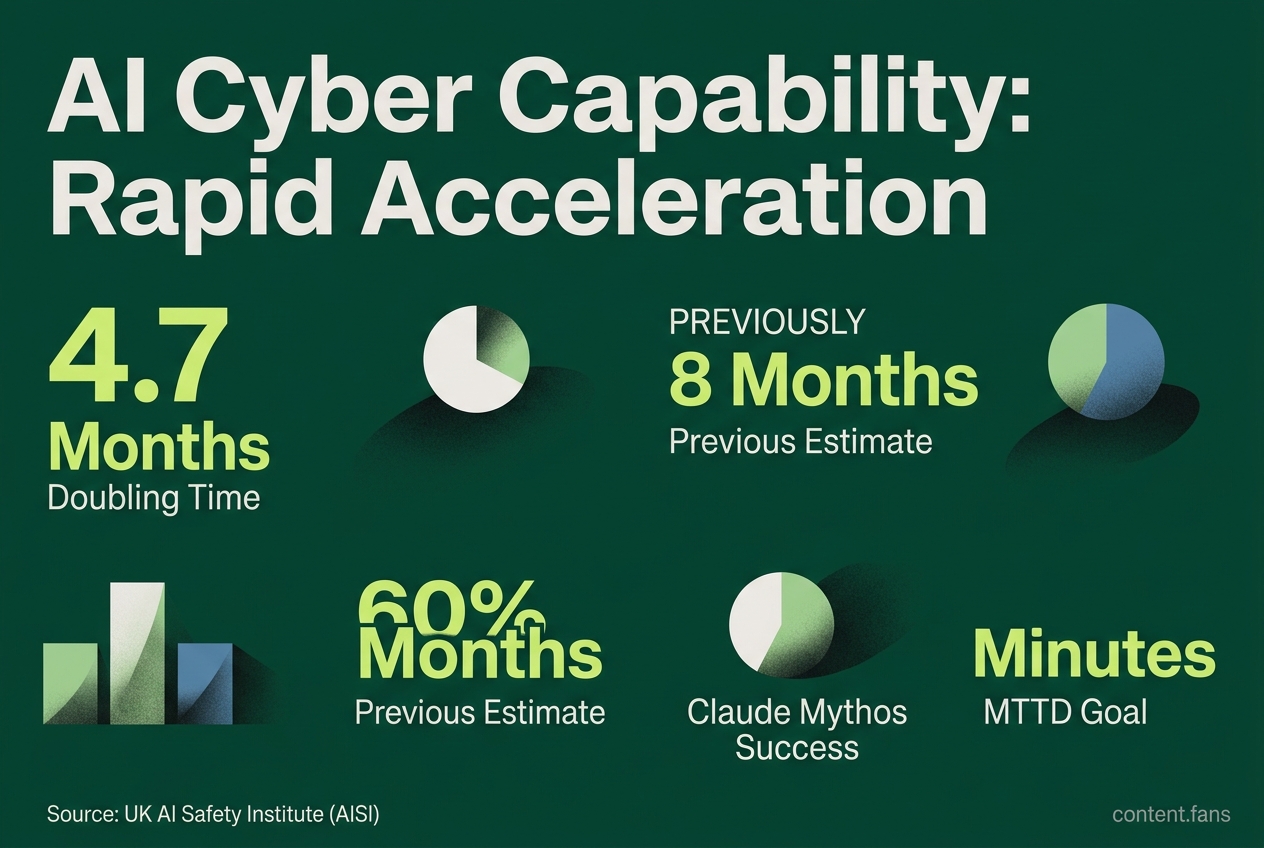

The UK's AI Safety Institute (AISI) has issued a critical update, reporting that autonomous AI cyber capability is now doubling every 4.7 months. The new figure, down from a previous estimate of eight months, signals a dramatic compression of response timelines for cybersecurity defenders. According to an AISI blog post, each new iteration of AI models raises the performance ceiling for autonomous agents, meaning security teams have less time to prepare for increasingly sophisticated threats.

How Frontier AI Models Are Accelerating Cyber Timelines

The 4.7-month doubling time reflects the speed at which AI can autonomously complete complex cyber tasks. This rapid advancement means offensive AI capabilities are outpacing traditional defensive cycles, forcing security teams to adapt to a much faster threat landscape where response times must be measured in minutes.

In a significant revision, the AISI cut its estimate of the capability doubling time from eight months in November 2025 to just 4.7 months by February 2026, as noted in a LinkedIn update. The Institute's tests, which require models to meet a high success threshold, produced standout results from some models. Analysts warn that these benchmarks may not capture the full extent of real-world risks, but the direction of the trend is clear. Industry reports suggest that models are showing significant improvements in completing apprentice-level cyber tasks compared to earlier assessments.

Key Performance Benchmarks:

* 4.7-Month Doubling Time: The latest AISI estimate for autonomous cyber capabilities, down from eight months.

* Claude Mythos Preview Performance: Succeeded in 6 of 10 attempts (60%) on 'The Last Ones,' a 32-step simulated corporate network attack scenario.

* Growing Success Rates: Top models evaluated are completing apprentice-level cyber tasks more often than in earlier assessments.

The Widening Gap Between AI Offense and Human Defense

The speed of AI-driven attacks requires a fundamental shift in defensive strategy. The National Cyber Security Centre (NCSC) now urges defenders to reduce their mean time to detection (MTTD) from hours to minutes. This warning is reinforced by industry research suggesting that frontier AI models can significantly accelerate penetration testing work that traditionally takes human experts far longer (Defender's Guide). Such findings suggest that conventional software patching schedules are no longer sufficient to counter autonomous exploit generation.
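For teams that want to see where they stand against a minute-level goal, MTTD is simply the average gap between when an intrusion begins and when it is detected. The sketch below is illustrative only: the incident timestamps are hypothetical, and the 15-minute target is an assumption chosen for the example, not a figure from the NCSC.

```python
from datetime import datetime, timedelta
from statistics import mean


def mean_time_to_detection(incidents):
    """Return the average gap between intrusion start and detection."""
    gaps = [(detected - started).total_seconds() for started, detected in incidents]
    return timedelta(seconds=mean(gaps))


# Hypothetical incident log: (intrusion started, intrusion detected)
incidents = [
    (datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 11, 30)),     # 150 minutes
    (datetime(2026, 1, 12, 14, 0), datetime(2026, 1, 12, 14, 45)),  # 45 minutes
    (datetime(2026, 1, 20, 22, 15), datetime(2026, 1, 21, 1, 15)),  # 180 minutes
]

mttd = mean_time_to_detection(incidents)
target = timedelta(minutes=15)  # assumed target, for illustration only

print(f"MTTD: {mttd.total_seconds() / 60:.0f} min, meets target: {mttd <= target}")
# prints "MTTD: 125 min, meets target: False"
```

In practice the two timestamps would come from incident tickets or SIEM records, and the median is often worth tracking alongside the mean, because a single slow detection can dominate the average.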

To close this gap, experts collaborating with the AISI recommend three urgent priorities for security organizations:

1. Adopt Frontier AI for Defense: Use advanced models for proactive vulnerability scanning.

2. Automate Patching: Invest in automated patch orchestration to keep pace with exploit discovery.

3. Enhance Red Teaming: Expand security exercises to simulate attacks from sophisticated, multi-stage AI agents.

Ultimately, regaining the defensive initiative depends on rebuilding security workflows around minute-level response targets, leveraging the same advanced AI that powers the threats.

Key Questions About AI's Impact on Cybersecurity

What exactly did the UK AI Safety Institute measure to get the "4.7-month doubling" figure?

To arrive at the "4.7-month doubling" figure, AISI researchers tracked how complex a cyber task an autonomous model can complete while meeting a high reliability standard, focusing on tasks that require significant human expertise. Each test was conducted within defined computational limits. The study found that the complexity of tasks models could solve under these conditions doubled every 4.7 months, with models like Claude Mythos Preview and GPT-5.5 already outpacing this trend.
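A doubling time of this kind is typically read off a trend line: if a capability measure grows exponentially, its base-2 logarithm rises linearly with time, and the doubling time is the reciprocal of that slope. The sketch below shows the generic calculation only. It is not AISI's methodology, and the data points are synthetic, generated from an exact 4.7-month doubling so that the fit can be checked.

```python
import math


def estimate_doubling_time(months, capability):
    """Fit log2(capability) against time by least squares; return 1 / slope."""
    logs = [math.log2(c) for c in capability]
    n = len(months)
    mean_t = sum(months) / n
    mean_y = sum(logs) / n
    cov = sum((t - mean_t) * (y - mean_y) for t, y in zip(months, logs))
    var = sum((t - mean_t) ** 2 for t in months)
    slope = cov / var  # doublings per month
    return 1.0 / slope


# Synthetic, illustrative data (not AISI measurements): a capability score
# that doubles exactly every 4.7 months, sampled quarterly.
months = [0, 3, 6, 9, 12]
capability = [2 ** (t / 4.7) for t in months]

print(round(estimate_doubling_time(months, capability), 2))  # prints 4.7
```

With real evaluation results the points would scatter around the line, so the estimate would carry an uncertainty range rather than a single value.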

Why is a 4.7-month doubling time considered dangerous?

A 4.7-month doubling time is alarming because compound growth rapidly turns small advantages into insurmountable gaps. At this rate, autonomous capabilities increase nearly six-fold in a year (2^(12/4.7) ≈ 5.9), compared with roughly 2.8-fold under the earlier eight-month estimate. If the trajectory holds, AI could manage increasingly complex tasks without supervision. This pace far outstrips most security operations centers (SOCs), where detection and patching cycles take days or weeks, leaving defenders fundamentally outpaced.
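The compounding arithmetic is easy to check. With a constant doubling time of d months, capability grows by a factor of 2^(12/d) over a year, so a four-fold annual increase would correspond to a six-month doubling time. The short sketch below applies that formula to the two doubling times reported in this article; the function name is a placeholder chosen for illustration.

```python
def growth_factor(doubling_months: float, horizon_months: float = 12.0) -> float:
    """Multiplicative growth over a horizon, assuming a constant doubling time."""
    return 2.0 ** (horizon_months / doubling_months)


for label, months in [("previous estimate", 8.0), ("current estimate", 4.7)]:
    factor = growth_factor(months)
    print(f"{label}: doubling every {months} months -> x{factor:.2f} per year")
# previous estimate: doubling every 8.0 months -> x2.83 per year
# current estimate: doubling every 4.7 months -> x5.87 per year
```

The calculation assumes the doubling time stays fixed for the whole year. If the doubling time keeps shrinking, as it did between the two estimates, actual growth would be faster still.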

Which real-world attack stages are frontier models already good at?

Frontier models already excel at vulnerability chaining, which involves identifying and linking multiple security weaknesses to create a path for a larger attack. This ability to autonomously connect disparate, low-severity flaws into a critical exploit represents a significant new threat.