OpenAI's o1-preview AI outperforms doctors in ER diagnosis

Serge Bulaev

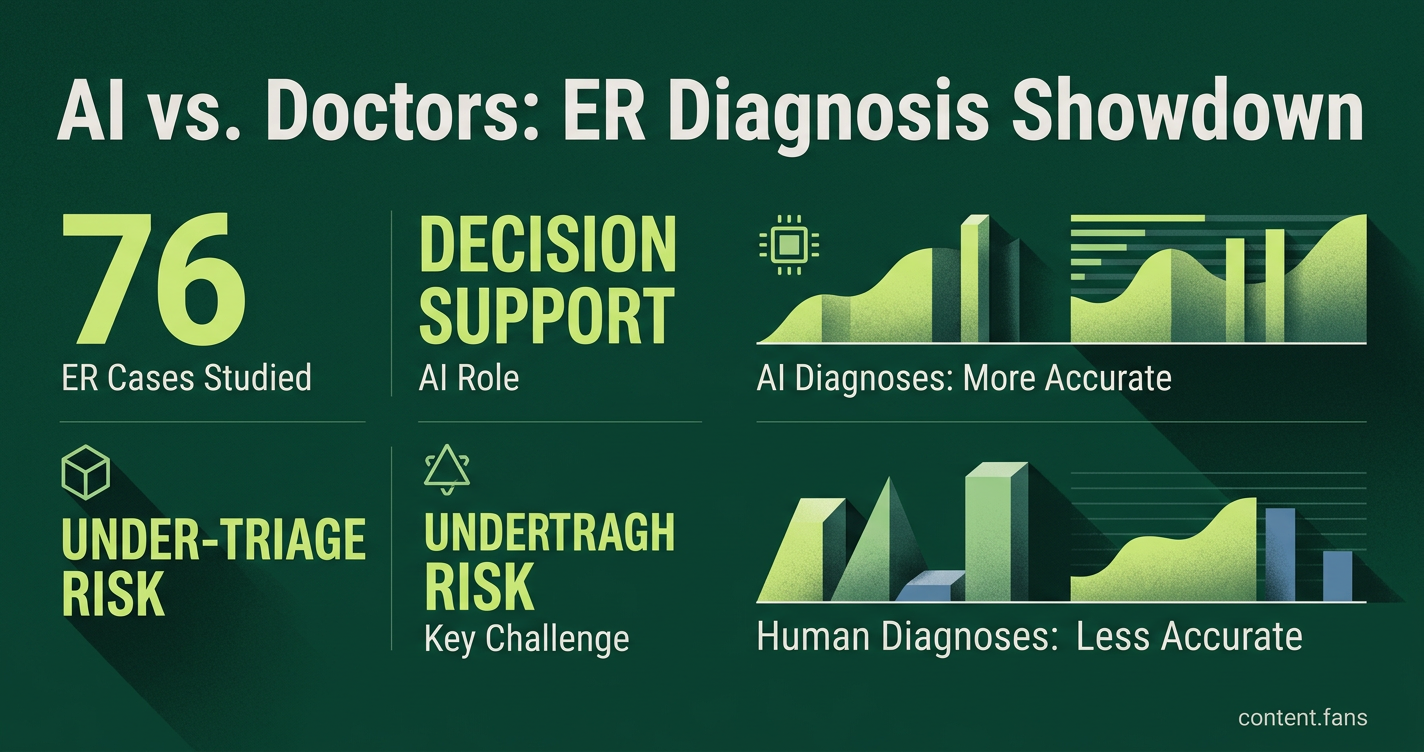

Recent research suggests that OpenAI's o1-preview AI may help doctors in emergency rooms by giving more accurate or similar diagnoses than human clinicians in some cases. Early tests in Boston showed the AI model included the right or very close diagnosis more often than doctors, especially when information was limited. However, studies also found the AI might under-triage high-risk patients and still faces challenges like bias and the need for doctor supervision. Experts warn that safety, fairness, and clear rules must be in place before using these systems widely in hospitals.

New research indicates OpenAI's o1-preview AI can outperform doctors in emergency room diagnoses, offering more accurate results with limited data. A pivotal study shows the large language model (LLM) can aid clinicians, but experts caution that significant challenges around safety, bias, and oversight must be addressed before widespread clinical adoption.

Early Clinical Evidence and Emerging Benchmarks

A pivotal study from Harvard and Beth Israel assessed OpenAI's o1-preview against real patient data from 76 emergency-room encounters. The model consistently included the correct or a very similar diagnosis in its differential list more often than human clinicians, signaling its potential as a powerful second-opinion tool.

A Harvard and Beth Israel team benchmarked o1-preview against established physician reasoning tasks. Their paper revealed the model surpassed clinicians in identifying diagnoses and next steps, particularly with the sparse data common in early ER visits link. However, complementary research highlighted a troubling trend: while models converge on facts, their reasoning diverges on complex cases, leading to potential under-triage of high-risk patients link. Another Oxford analysis confirmed stable accuracy using retrieval-augmented generation and called for larger trials.

Guardrails and Ethical Prerequisites

Before AI is deployed in diagnostics, regulators and ethicists insist on adherence to core principles including justice, transparency, and accountability, as outlined in a comprehensive NIH review link. Since AI can function as a "black box," explainability is crucial for gaining clinician trust and ensuring informed patient consent.

Key implementation challenges documented across recent literature include:

- Under-triage risk when acuity is high

- Variable performance during multi-stage reasoning

- Dataset bias that may disadvantage under-represented groups

- Integration of real-time laboratory and imaging updates

- Requirement for physician-in-the-loop oversight

To address these issues, experts recommend multidisciplinary oversight and frame LLMs strictly as decision-support tools, not autonomous agents. Any clinical rollout must be accompanied by transparent audit trails, fairness checks, and clear patient opt-out provisions. Wide adoption depends on resolving these safety and governance questions while proving tangible benefits during high-pressure triage.

What did the study actually show?

The Harvard Medical School and Beth Israel Deaconess team gave de-identified records from 76 real Boston emergency-room cases to OpenAI's o1-preview model.

The AI included the correct diagnosis or a very close alternative more often than 100-plus expert clinicians.

Median scores tell the story:

- AI: 89 % on complex management vignettes

- Physicians using conventional tools: 34 %

Despite the headline-ready numbers, the authors stress that the model is a second-opinion tool, not a replacement physician.

Why use ER data instead of textbook examples?

Real ED charts are noisy, incomplete and chaotic - exactly the setting where mistakes cost lives.

By testing on consecutive cases collected during a 24-hour peak influx, the study avoided the "laboratory illusion" of synthetic problems.

Result: 67 % of o1-preview's triage calls were spot-on with only the limited data available at the bedside, beating two senior doctors who scored 55 % and 50 %.

How has OpenAI improved since o1-preview?

The September 2024 chain-of-thought architecture is the headline upgrade.

The model now "thinks out loud" step-by-step, cutting hallucinations and mirroring clinician reasoning.

Benchmarks since then show:

- 97 % accuracy on landmark diagnostic cases

- 78 % correct on 70 New England Journal "mystery" cases (GPT-4 hit 73 %)

Yet the system still lags in probabilistic reasoning and critical-diagnosis flags, reminding us progress remains uneven.

What guardrails are being proposed before deployment?

Leading centres agree on four non-negotiable steps:

- Prospective, multicentre trials of at least 10 000 patients

- Human-in-the-loop override at every decision point

- Continuous fairness audits to flag under-triage in high-acuity cases (a known LLM weakness)

- Patient consent protocols covering AI involvement in their chart review

Industry experts suggest that comprehensive consent frameworks are being developed to address AI involvement in clinical decision-making.

When might patients actually see this tech?

Pilot programmes are already live.

Weill Cornell's emergency department is stress-testing retrieval-augmented versions of o1-preview on overnight shifts, Mount Sinai is mapping AI "blind spots", and Oxford has run 2 370 predictions against real charts in 79 consecutive patients.

Many regulatory experts suggest that extensive safety monitoring will be required before any broad rollout, with limited decision-support deployment being explored by various institutions.