CME, Silicon Data Launch Futures Market for GPU Compute Capacity

Serge Bulaev

CME Group and Silicon Data plan to launch a futures market for GPU computing capacity, pending regulatory approval. The contracts would let buyers and sellers lock in prices for renting specific GPU types, settled against daily indexes from Silicon Data. GPU rental prices have swung sharply over the past two years, which makes budgeting hard for companies building AI products. Experts say folding different GPU types into one price index is difficult, and final contract details are still under review. Demand for transparent GPU pricing appears to be growing as the market expands and becomes more concentrated.

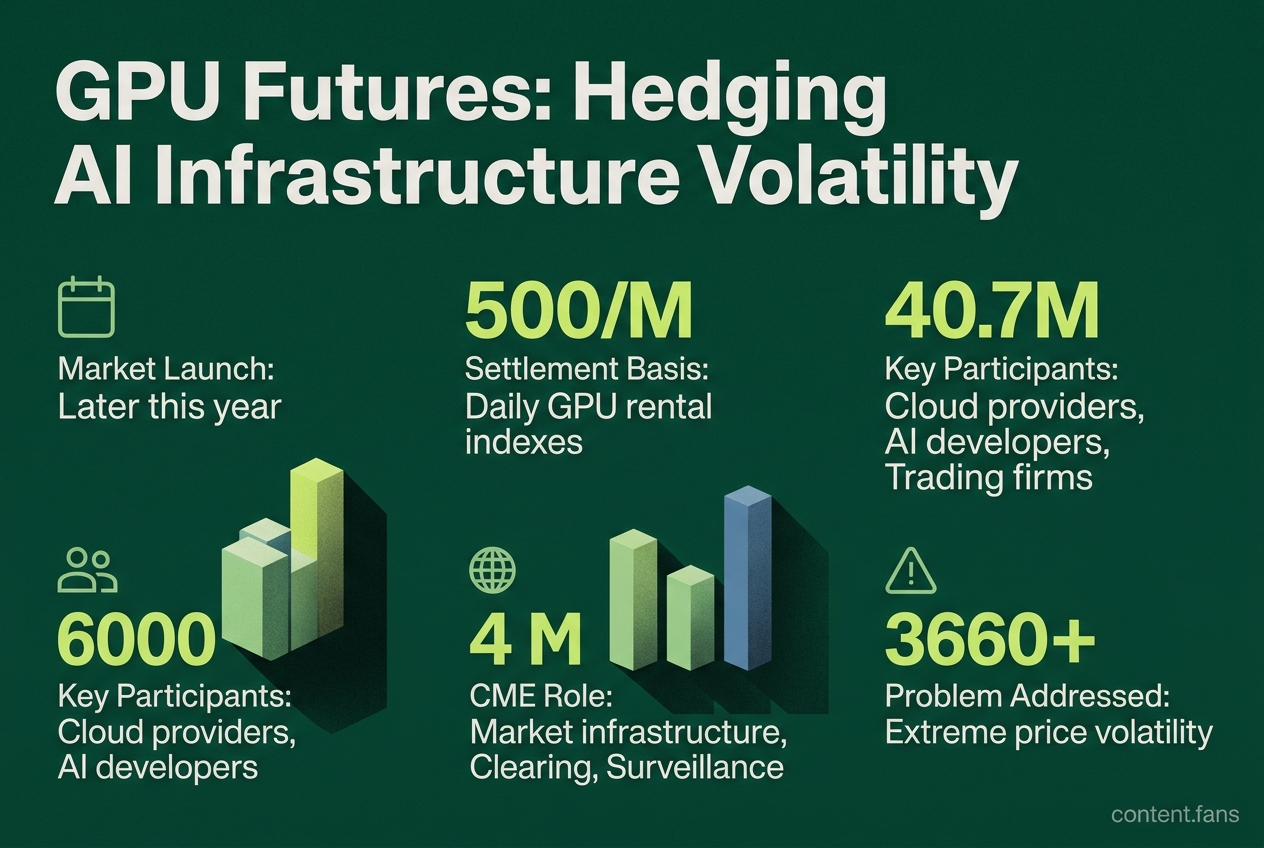

CME Group and Silicon Data have announced plans to launch a futures market for GPU compute capacity, a landmark move to bring financial hedging tools to the volatile world of AI infrastructure. Pending regulatory review, the exchange operator plans to list "compute futures" later this year. These contracts will be settled against daily GPU rental indexes from Silicon Data, allowing market participants to lock in future prices for processing power.

This initiative addresses the extreme price volatility in the GPU rental market over the last two years. The contracts are expected to use Silicon Data's GPU benchmark indices, which provide standardized reference pricing for on-demand GPU rental rates (CNBC). CME Group will provide the essential market infrastructure, including clearing and surveillance, modeled on its existing energy and metals futures markets (PR Newswire).

Why a hedge is timely

Extreme price swings in GPU rental costs have made financial planning difficult for AI companies. According to industry reports, on-demand rates for high-end GPUs have fallen significantly over recent years, creating substantial budget uncertainty. A futures market would give buyers and sellers a way to hedge against such swings and stabilize costs.

Industry data points to dramatic fluctuations driven by shifts in supply and provider discounting, disrupting budget planning for AI projects. Compounding the issue, prices for identical hardware can vary significantly from one provider to another.

Contract design

The proposed futures will be financially settled against Silicon Data's benchmark index. Each contract will represent a set amount of standardized compute time, with final details pending regulatory approval. Key market participants expected to use the product include:

- Cloud providers hedging to lock in rental revenue.

- AI developers locking in costs for long-term training budgets.

- Quantitative trading firms arbitraging price differences in the GPU spot market.

Further details on settlement and tick size will be released following the regulatory review.
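To make the hedging mechanics concrete, here is a minimal Python sketch of how an AI developer might use a cash-settled long futures position to lock in a rate for a training run. The contract size (`GPU_HOURS_PER_CONTRACT`), the function and class names, and all prices are hypothetical, since CME and Silicon Data have not published contract specifications. The sketch also assumes the developer's own rental rate matches the settlement index exactly.

```python
from dataclasses import dataclass

# Hypothetical contract size: no official specification has been published.
GPU_HOURS_PER_CONTRACT = 1_000


@dataclass
class HedgeResult:
    contracts: int         # number of futures contracts bought
    spot_cost: float       # rental bill at the settlement-day rate (USD)
    futures_pnl: float     # cash-settlement gain (+) or loss (-) on the futures (USD)
    effective_cost: float  # rental bill net of the futures gain or loss (USD)


def hedge_training_run(gpu_hours: float, futures_price: float,
                       settlement_index: float) -> HedgeResult:
    """Long-futures hedge for a buyer of GPU time (hypothetical contract terms).

    Prices are in USD per GPU-hour. The buyer goes long at `futures_price`;
    at expiry the position is cash-settled against `settlement_index`, and
    the buyer rents GPUs at that same rate (no basis risk is assumed).
    """
    # Round to whole contracts; any remainder is left unhedged.
    contracts = round(gpu_hours / GPU_HOURS_PER_CONTRACT)
    hedged_hours = contracts * GPU_HOURS_PER_CONTRACT
    spot_cost = gpu_hours * settlement_index
    futures_pnl = hedged_hours * (settlement_index - futures_price)
    return HedgeResult(contracts, spot_cost, futures_pnl, spot_cost - futures_pnl)


# Hypothetical run: 50,000 GPU-hours hedged at $2.50 per GPU-hour.
for index_at_expiry in (3.00, 2.00):
    result = hedge_training_run(50_000, 2.50, index_at_expiry)
    print(index_at_expiry, result.effective_cost)  # 125000.0 in both cases
```

Whether the index settles above or below the locked-in price, the futures gain or loss offsets the change in the rental bill, so the effective cost stays at 50,000 × $2.50. That offset is the budgeting certainty the product is meant to provide.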

Standardization hurdles

A key challenge is creating a single, reliable index across different GPU models and generations. Silicon Data plans to use performance-normalized metrics to weight prices, but factors like regional energy costs and network infrastructure can still create basis risk: a mismatch between the futures price and a local cash price. Analysts suggest that if the market gains traction, separate sub-indexes for different chip types, such as those optimized for AI inference, may be necessary.
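Basis risk is easiest to see with numbers. The minimal Python sketch below uses hypothetical function names and made-up prices. It assumes a buyer who hedges with a contract settled on a benchmark index but actually rents from a local provider whose rate differs. In that case the locked-in price holds only up to the basis.

```python
def basis(local_price: float, settlement_index: float) -> float:
    """Basis: local cash price minus the index the contract settles against."""
    return local_price - settlement_index


def hedged_rate(futures_price: float, settlement_index: float,
                local_price: float) -> float:
    """Effective USD per GPU-hour for a buyer who rents at `local_price` but
    hedges with a long futures position settled against `settlement_index`."""
    futures_gain = settlement_index - futures_price  # per hedged GPU-hour
    return local_price - futures_gain                 # = futures_price + basis


# Hypothetical prices: locked in at $2.50, index settles at $3.00,
# but the buyer's local provider charges $3.25.
print(hedged_rate(2.50, 3.00, 3.25))  # 2.75, i.e. 2.50 plus a 0.25 basis
```

If the local rate had matched the index at settlement, the basis would be zero and the effective rate would equal the futures price. Any divergence flows straight into the buyer's costs, which is why regional or chip-specific sub-indexes could matter.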

Broader market signals

The move comes as industry projections point to significant growth in the GPU-as-a-Service market. Market concentration is rising as well, with industry reports suggesting that neocloud providers are gaining substantial control over rentable GPU capacity. This consolidation strengthens the case for transparent pricing mechanisms. Meanwhile, easing supply-chain constraints have recently brought steadier component deliveries and less spot-price volatility, which could mean lower margin requirements for the futures contracts than initially anticipated. However, the official launch date remains unconfirmed as CME Group and Silicon Data await final regulatory clearance.
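The link between lower spot volatility and lower margins can be illustrated with a deliberately simplified model. Exchanges such as CME set margins with far richer risk models. The formula below is an illustrative assumption, as are the 2.33 multiplier (roughly a 99% one-sided normal quantile), the two-day holding period and the contract notional.

```python
import math


def toy_initial_margin(contract_notional: float, daily_vol: float,
                       holding_days: int = 2, z: float = 2.33) -> float:
    """Toy initial margin (USD): a roughly 99% one-sided price move over
    `holding_days`, assuming normally distributed, independent daily returns
    with standard deviation `daily_vol`. Not CME's actual methodology."""
    return contract_notional * z * daily_vol * math.sqrt(holding_days)


# Hypothetical contract notional: 1,000 GPU-hours at $2.50 per GPU-hour.
notional = 1_000 * 2.50
calm = toy_initial_margin(notional, daily_vol=0.02)
volatile = toy_initial_margin(notional, daily_vol=0.04)
print(calm / volatile)  # ~0.5: halving volatility halves this toy margin
```

Because the toy margin scales linearly with daily volatility, a calmer spot market translates directly into less collateral per contract. Real margin models add many more factors, but the direction of the effect is the same.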

What is the compute futures market that CME and Silicon Data are creating?

It is a regulated marketplace where standardized contracts tied to daily GPU benchmarks can be bought and sold, letting organizations lock in future compute prices instead of absorbing spot-market volatility. CME supplies the exchange, clearing and market structure, while Silicon Data's indices, billed as the world's first daily benchmarks for on-demand GPU rental rates, provide the reference price. The contracts are expected to launch after regulatory review, with formal rulemaking and broader implementation likely extending into 2026 and possibly 2027.

Who will use these GPU compute futures?

- AI developers who need price certainty for multi-month training runs

- Cloud providers that want to hedge inventory or lock in margins

- Data-center operators financing new GPU fleets

- Financial institutions seeking exposure to the growing GPU-as-a-Service market without buying hardware

Likely early adopters include neocloud specialists such as CoreWeave and Lambda Labs, which together with hyperscalers account for a significant portion of currently quoted H100 capacity.

How volatile has GPU pricing been without this tool?

Extremely. According to industry reports, GPU prices have swung dramatically enough to make long-term budgeting almost impossible. Futures contracts aim to address this volatility by giving buyers and sellers a transparent reference curve they can trade against.
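A common way to put a number on "how volatile" is annualized realized volatility computed from a daily index. The Python sketch below applies that standard calculation to made-up price series. It is not Silicon Data's methodology, and the 365-day annualization factor is an assumption.

```python
import math
import statistics


def realized_volatility(daily_index: list[float], periods_per_year: int = 365) -> float:
    """Annualized realized volatility of a daily price index.

    Uses the sample standard deviation of daily log returns. Annualizing with
    365 periods assumes the index is published every calendar day.
    """
    if len(daily_index) < 3:
        raise ValueError("need at least three daily observations")
    log_returns = [math.log(b / a) for a, b in zip(daily_index, daily_index[1:])]
    return statistics.stdev(log_returns) * math.sqrt(periods_per_year)


# Made-up index levels (USD per GPU-hour), for illustration only.
steady = [2.50, 2.51, 2.49, 2.50, 2.52]
choppy = [2.50, 2.80, 2.30, 2.70, 2.20]
print(realized_volatility(steady) < realized_volatility(choppy))  # True
```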

What problems still stand in the way of a single benchmark?

Different GPU architectures (Hopper vs. Ada vs. Blackwell), memory footprints and regional power costs all move the effective price per FLOP. Contracts are therefore expected to specify performance tiers rather than a single chip model, with regional variants so that a Singapore data-center operator is not forced to quote against U.S. Midwest rates. The exchange has not yet released the exact weighting methodology, but industry observers expect separate indices for training-grade and inference-grade GPUs.
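One way to read "performance tiers" and "regional variants" is to divide each rental quote by the chip's relative throughput and then aggregate within each (tier, region) bucket. The exchange has not released its weighting methodology, so the Python sketch below is only an illustration. The median aggregation, the `Quote` fields, the chip labels and all figures are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class Quote:
    gpu: str             # hypothetical model label, e.g. "gpu-a"
    tier: str            # hypothetical tier, e.g. "training" or "inference"
    region: str          # hypothetical region label, e.g. "us" or "sg"
    usd_per_hour: float  # quoted on-demand rental rate
    rel_perf: float      # throughput relative to a reference GPU (= 1.0)


def tier_region_indices(quotes: list[Quote]) -> dict[tuple[str, str], float]:
    """Median performance-normalized price (USD per reference GPU-hour)
    for each (tier, region) bucket."""
    buckets: dict[tuple[str, str], list[float]] = defaultdict(list)
    for q in quotes:
        # Dividing by relative performance puts different chips on one scale.
        buckets[(q.tier, q.region)].append(q.usd_per_hour / q.rel_perf)
    return {key: median(prices) for key, prices in buckets.items()}


quotes = [
    Quote("gpu-a", "training", "us", 2.00, 1.0),
    Quote("gpu-b", "training", "us", 5.00, 2.5),
    Quote("gpu-a", "training", "us", 3.00, 1.0),
    Quote("gpu-c", "inference", "sg", 1.50, 0.5),
]
print(tier_region_indices(quotes))
# {('training', 'us'): 2.0, ('inference', 'sg'): 3.0}
```

Using a median rather than a mean keeps a single outlier quote from moving a bucket's index. A production benchmark would also have to weight by volume and handle memory size, interconnect and other factors the sketch ignores.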

Why does commoditizing compute capacity matter?

Because it turns GPUs into a tradable asset class much like crude oil or memory chips. Once capacity can be hedged, CFOs can treat AI infrastructure as an opex line item with predictable cash flows, encouraging larger capital commitments. Industry reports suggest that GPU spend already consumes a significant portion of technical budgets at young AI companies; introducing financial risk controls could accelerate the sector's move from experimentation to production at scale.