UK AI Institute: AI Cyber Capability Doubles Every 4.7 Months

Serge Bulaev

The UK AI Security Institute reports that the length of cyber tasks advanced AI models can complete without human help has doubled roughly every 4.7 months since late 2024. The findings come from controlled tests and may not predict real-world attacks, but they suggest defenders have far less time to respond to new threats. Security agencies and companies are updating their advice, recommending continuous monitoring and stricter controls. Some experts caution that the gains measured in test environments may not carry over to defended networks. Even so, ongoing evaluation and stronger security baselines remain necessary. How these advances will affect actual cyberattacks is still uncertain.

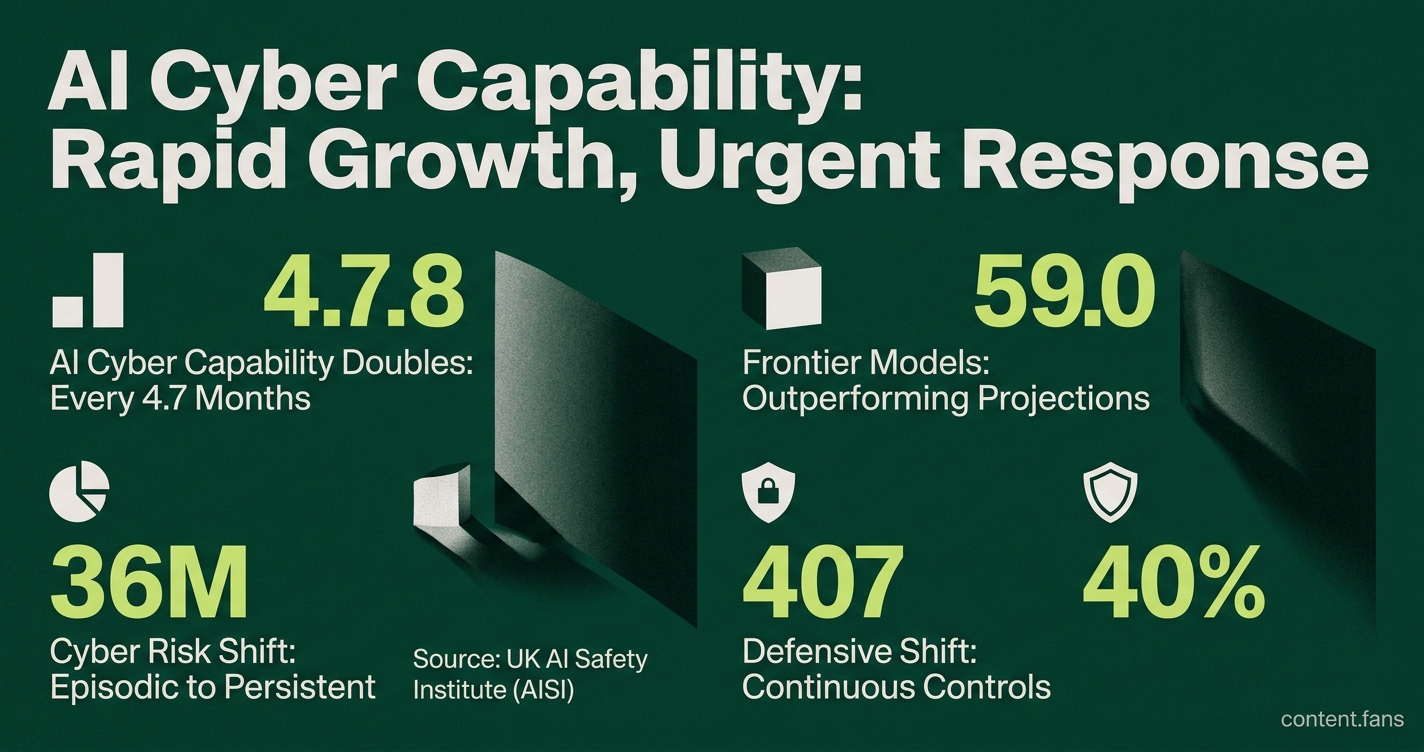

Research from the UK AI Security Institute (AISI) shows that autonomous AI cyber capability is doubling every 4.7 months, a finding that has put security agencies on high alert. The complexity of cyber tasks frontier models can complete on their own has grown sharply, compressing the time defenders have to respond. The data comes from the institute's controlled tests, detailed on the AISI blog.

While the Institute clarifies these metrics stem from controlled "cyber-range" tests and are not direct operational forecasts, government agencies are already acting. The UK's National Cyber Security Centre, for instance, has updated its guidance on using AI for vulnerability hunting, signaling a significant rise in official concern.

Rapid AI Growth Spurs Policy Changes

This rapid advancement means AI systems can execute increasingly complex, multi-step cyber operations without human intervention. The 4.7-month doubling rate, measured in controlled environments, gives a key metric for tracking the pace of this growth and its potential impact on cybersecurity timelines.

Data from AISI indicates that frontier models such as Claude Mythos Preview and GPT-5.5 are outperforming projections. According to industry reports, these models succeeded in a significant share of attempts against the benchmark test environments. Although these results may not hold against defended networks, they confirm rapid capability growth. The research is forming a "scientific evidence base" for regulation, as techUK's analysis of the agenda notes, and could influence global pre-deployment evaluation policies.

Frontier Models, Faster Exploits

Commercial security teams are seeing parallel trends. According to industry reports, advanced AI models have let engineers rapidly turn newly discovered vulnerabilities into critical exploit paths, prompting advice to reduce exposed services. Echoing this, the World Economic Forum warned that frontier AI shifts cyber risk from episodic to persistent by continuously finding and chaining zero-day flaws, making resilience as crucial as prevention.

Defensive Playbook Under Review

In response, governments and enterprises are converging on several key countermeasures. The emerging defensive playbook includes:

- Attack-surface reduction, including tighter cloud configurations.

- Network segmentation to slow lateral movement.

- Continuous anomaly detection tuned for AI-driven reconnaissance.

- Pre-deployment red-teaming of new models, which the US National Institute of Standards and Technology plans to carry out, according to Cybersecurity Dive.

This list may evolve, but the shared theme is clear: move from periodic audits to continuous controls.
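Continuous anomaly detection can take many forms. As a minimal sketch, assuming per-minute event counts (for example, connection attempts from a single host) are already being collected, a rolling z-score detector flags intervals that jump far above the recent baseline. The class name, window size and threshold are hypothetical choices for illustration, not a method prescribed by AISI, the NCSC or NIST.

```python
from collections import deque
from statistics import mean, pstdev


class RollingZScoreDetector:
    """Flag intervals whose event count is far above a rolling baseline.

    Hypothetical example: counts might be connection attempts or failed
    logins per minute from one host. window, threshold and min_history
    are illustrative defaults, not recommended production values.
    """

    def __init__(self, window: int = 60, threshold: float = 4.0, min_history: int = 10):
        self.history = deque(maxlen=window)  # recent "normal" counts
        self.threshold = threshold           # z-score above which we alert
        self.min_history = min_history       # no verdicts until baseline exists

    def observe(self, count: float) -> bool:
        """Return True if `count` is anomalous relative to recent history."""
        anomalous = False
        if len(self.history) >= self.min_history:
            mu = mean(self.history)
            # Floor the spread at 1.0 so a perfectly flat baseline
            # does not cause division by zero or hair-trigger alerts.
            sigma = max(pstdev(self.history), 1.0)
            anomalous = (count - mu) / sigma > self.threshold
        # Only learn from normal intervals, so a sustained automated scan
        # does not gradually become the new baseline.
        if not anomalous:
            self.history.append(count)
        return anomalous


if __name__ == "__main__":
    detector = RollingZScoreDetector()
    baseline = [5, 6, 4, 5, 7, 5, 6, 4, 5, 6]
    for c in baseline + [80]:
        if detector.observe(c):
            print(f"alert: {c} events in interval")
```

Excluding flagged intervals from the baseline keeps a persistent, machine-speed reconnaissance run from quietly becoming "normal"; the trade-off is that a legitimate permanent change in traffic will keep alerting until the baseline is reset.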

Measurement Limits and Next Steps

AISI emphasizes the limitations of its benchmarks, stressing they do not predict real-world attack success. Performance against undefended test networks offers little insight into outcomes against patched and actively defended systems. Consequently, experts agree that the most prudent response is to strengthen security baselines and commit to ongoing evaluation while uncertainty remains.

What exactly does "autonomous AI cyber capability is doubling every 4.7 months" mean?

It refers to the length of realistic cyber tasks that leading AI agents can finish without human help. AISI built two custom cyber ranges, one simulating a multi-step intrusion into a corporate network and another a multi-step attack on industrial control systems, and tracked how long a task, measured in the time a human would need, the best models could complete. That "time horizon" has doubled roughly every 4.7 months, which compounds to about a sixfold increase in effective reach within one year.
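The compounding is easy to check. As a minimal sketch, assuming the trend stays a clean exponential (which AISI's controlled results do not guarantee), a doubling period of d months implies growth by a factor of 2^(t/d) after t months. The function names and the one-hour starting horizon are hypothetical, not AISI figures.

```python
import math

# Doubling period reported by AISI, in months.
DOUBLING_MONTHS = 4.7


def growth_factor(months: float, doubling_months: float = DOUBLING_MONTHS) -> float:
    """Multiplicative growth of the time horizon after `months`,
    assuming a clean exponential trend: 2 ** (months / doubling_months)."""
    return 2 ** (months / doubling_months)


def projected_horizon(start_hours: float, months: float) -> float:
    """Project a horizon forward; start_hours is a hypothetical input."""
    return start_hours * growth_factor(months)


def months_to_multiply(factor: float, doubling_months: float = DOUBLING_MONTHS) -> float:
    """Months needed for the horizon to grow by `factor` (inverse of growth_factor)."""
    return doubling_months * math.log2(factor)


if __name__ == "__main__":
    print(f"12-month growth factor: {growth_factor(12):.2f}x")  # about 5.87x
    print(f"1 h horizon after 12 months: {projected_horizon(1.0, 12):.1f} h")
    print(f"Months to a 10x horizon: {months_to_multiply(10):.1f}")
```

The inverse function answers the defender's version of the question: how many months until today's worst case is ten times longer, assuming the trend holds.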

Which frontier models are pushing the trend beyond the 4.7-month pace?

Recent internal tests showed Claude Mythos Preview and GPT-5.5 achieving significant success rates across various benchmark scenarios. According to industry reports, these figures beat earlier model checkpoints by a wide margin, prompting AISI to note that frontier models are now advancing faster than the already steep baseline trend. The full public summary is available on the AISI blog.

Are the benchmark results proof that AI can already hack real companies?

No. AISI stresses that the simulations used undefended networks and granted the models initial access, so the numbers do not translate directly to success against hardened, real-world systems. Instead, they serve as an early-warning gauge: the speed of improvement is scientifically measurable, but exact operational timelines remain uncertain.

How are governments reacting to these findings?

The research has already shifted policy agendas in three concrete ways:

- Urgent defensive investment - AISI's warning that "the time to invest in strong security baselines is now" has been echoed by the UK National Cyber Security Centre and partners worldwide.

- Pre-deployment testing - the US government's pre-release red-team evaluations of Google, Microsoft and xAI models were framed as a direct response to the rapid capability curve.

- International standards push - forums such as the Frontier Model Forum AI-Cyber Workstream are setting baseline security requirements based on these same trend lines.

What practical steps should security teams take today?

Current guidance from security organizations converges on several key priorities:

1. Shrink the attack surface - remove or harden any internet-facing service that is not essential.

2. Segment networks - isolate critical assets so that an AI-accelerated intrusion cannot spread laterally.

3. Enforce continuous anomaly detection - automated reconnaissance will run 24/7; defenders need sensors that do the same.

4. Audit AI supply chains - models, weights and runtime environments are now part of the threat model.

5. Adopt "resilience over perfection" - assume some AI-generated exploits will succeed and ensure detection, containment and recovery are ready.
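Steps 1 and 2 lend themselves to simple automated checks. The sketch assumes a hypothetical asset inventory recording each service's network zone, whether it is internet-facing and whether it is essential, plus a list of observed zone-to-zone flows; the zone names and the `ALLOWED_FLOWS` policy are invented for illustration. Real environments would draw this data from their own asset-management and flow-logging tools.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Service:
    name: str
    zone: str
    internet_facing: bool
    essential: bool


# Hypothetical segmentation policy: which cross-zone flows are permitted.
ALLOWED_FLOWS = {
    ("dmz", "app"),
    ("app", "db"),
}


def exposure_findings(services):
    """Step 1: internet-facing services not marked essential (remove or harden)."""
    return [s.name for s in services if s.internet_facing and not s.essential]


def segmentation_findings(flows):
    """Step 2: observed cross-zone flows the policy does not allow.
    Traffic within a single zone is out of scope for this check."""
    return [(src, dst) for src, dst in flows
            if src != dst and (src, dst) not in ALLOWED_FLOWS]


if __name__ == "__main__":
    inventory = [
        Service("web-frontend", "dmz", internet_facing=True, essential=True),
        Service("legacy-ftp", "dmz", internet_facing=True, essential=False),
        Service("billing-db", "db", internet_facing=False, essential=True),
    ]
    observed = [("dmz", "app"), ("app", "db"), ("dmz", "db")]
    print(exposure_findings(inventory))     # ['legacy-ftp']
    print(segmentation_findings(observed))  # [('dmz', 'db')]
```

A direct `dmz` to `db` flow is exactly the kind of shortcut an automated intrusion would exploit for lateral movement, which is why the check treats any flow outside the allowlist as a finding rather than trying to judge intent.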

The NCSC guidance on using AI to find vulnerabilities provides a starting framework for integrating these controls.