OpenAI Debuts Realtime-2, Translate, Whisper for Enterprise Voice AI

Serge Bulaev

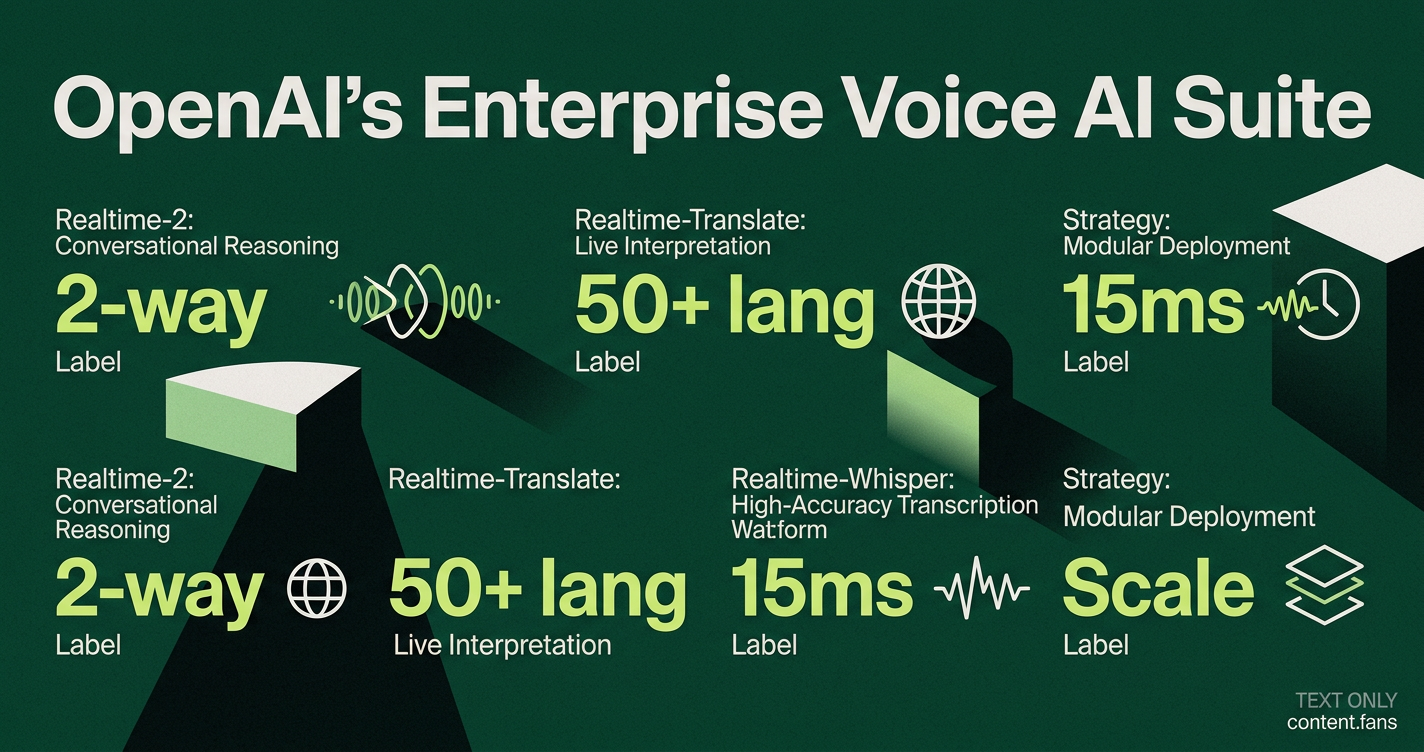

OpenAI has released three new voice AI models called Realtime-2, Realtime-Translate, and Realtime-Whisper that may help businesses handle reasoning, translation, and transcription separately. These models give companies more control by letting them use only the parts they need instead of one big system. Some experts suggest this change fits OpenAI's goal of making AI more useful in daily work, not just for showing off new technology. The models might lower costs and are designed to work well with current business tools, but savings and speed could depend on how they're used. Adoption appears to be growing as companies look for fast, practical benefits from these new tools.

OpenAI's new voice AI models - Realtime-2, Realtime-Translate, and Realtime-Whisper - are drawing enterprise attention for their disaggregated, modular design. Instead of a single monolithic stack, the trio divides voice AI into three core primitives: reasoning, translation, and transcription. Development teams get granular control over which component handles each task, which can make voice solutions more efficient and cost-effective.

Analysts suggest the release aligns with OpenAI's focus on driving practical adoption. In a January Business Insider interview, CFO Sarah Friar emphasized the need to "close the gap between what AI now makes possible and how people, companies, and countries are using it day to day." This strategy signals a shift toward delivering immediate workflow value over purely conceptual demonstrations.

What Each Model Targets

OpenAI's voice AI suite includes three distinct models designed for specific enterprise needs. Realtime-2 provides conversational reasoning for customer support, Realtime-Translate offers live language interpretation for global communications, and Realtime-Whisper focuses on high-accuracy, real-time transcription for searchable records and analysis.

- Realtime-2 is engineered for live, speech-to-speech reasoning. It is ideal for contact centers, internal help desks, and hands-free field support. A YouTube walkthrough demonstrates its ability to manage customer inquiries with a natural, conversational flow.

- Realtime-Translate provides on-the-fly interpretation, designed for global meetings and multilingual customer service interactions.

- Realtime-Whisper concentrates on high-fidelity, real-time transcription, serving as a low-risk starting point for organizations wanting searchable call logs before committing to full voice automation.

The Strategic Value of Disaggregation

Separating voice AI into distinct components prioritizes modular deployment over a rigid, all-in-one system. This unbundling allows developers to select the most efficient and cost-effective tool for each task. For instance, a support workflow can use Realtime-Whisper for basic transcription, escalate complex cases to Realtime-2 for reasoning, and only engage Realtime-Translate when language barriers exist. This can significantly lower per-minute costs, though final savings depend on call volume and latency requirements.
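The routing idea in that support workflow can be sketched in a few lines of Python. This is a minimal illustration, not OpenAI's API: the `Call` fields, the `needs_reasoning` flag (assumed to come from some upstream classifier), and the `route` function are hypothetical, and the enum values simply reuse the article's model names rather than confirmed API identifiers.

```python
from dataclasses import dataclass
from enum import Enum


class Model(Enum):
    # Labels mirror the article's model names; they are not confirmed API identifiers.
    WHISPER = "Realtime-Whisper"
    TRANSLATE = "Realtime-Translate"
    REASONING = "Realtime-2"


@dataclass
class Call:
    caller_language: str   # e.g. "en", "es"
    agent_language: str
    needs_reasoning: bool  # hypothetical flag set by an upstream classifier


def route(call: Call) -> list[Model]:
    """Return the models a call touches, in the order they are engaged."""
    models = [Model.WHISPER]                 # every call gets a transcript
    if call.caller_language != call.agent_language:
        models.append(Model.TRANSLATE)       # only when a language barrier exists
    if call.needs_reasoning:
        models.append(Model.REASONING)       # escalate complex cases
    return models


print(route(Call("es", "en", needs_reasoning=False)))
```

A same-language call that needs no reasoning touches only the transcription model here, which is where any per-minute savings would come from.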

Enhanced Context: A Powerful Tool with Trade-Offs

The updated API features an expanded context window, enabling a voice agent to hold more transcript and tool output within a single prompt. According to industry reports, this expanded capacity is a significant improvement for complex orchestrations. However, engineers must monitor time-to-first-token, because more context can increase response delays. With a three-second pause often perceived as a dropped call, developers continue to rely on aggressive summarization and external memory to keep responses fast.
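One way to picture the summarization trade-off is a simple history trimmer that keeps only the most recent turns within a token budget. This is a rough sketch under stated assumptions: `estimate_tokens` counts whitespace-separated words as a stand-in for a real tokenizer, `trim_history` and the budget value are hypothetical, and the bracketed placeholder marks where a real summary or external-memory lookup would go.

```python
def estimate_tokens(text: str) -> int:
    # Crude stand-in: one token per whitespace-separated word.
    # A production system would use the model's own tokenizer.
    return len(text.split())


def trim_history(turns: list[str], budget: int) -> list[str]:
    """Keep the most recent turns that fit within `budget` estimated tokens."""
    kept: list[str] = []
    used = 0
    for turn in reversed(turns):          # walk from newest to oldest
        cost = estimate_tokens(turn)
        if used + cost > budget:
            break                         # older turns no longer fit
        kept.append(turn)
        used += cost
    dropped = len(turns) - len(kept)
    kept.reverse()                        # restore chronological order
    if dropped:
        # Placeholder for a real summary or an external-memory reference;
        # it is not counted against the budget in this sketch.
        kept.insert(0, f"[{dropped} earlier turns summarized]")
    return kept


history = ["caller reports outage", "agent asks for account id", "caller gives id 4471"]
print(trim_history(history, budget=9))
```

Shrinking the budget sends less context with each request, which is the lever teams would pull when time-to-first-token starts to climb.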

Emerging Enterprise Use Cases

- Contact Center Modernization: Deploying voice agents for tier-1 support, offering instant translation for global customers, and using transcripts for automated quality assurance.

- Healthcare Intake: Capturing symptoms via voice, providing multilingual discharge instructions, and assisting clinicians with automated notes.

- Global Collaboration: Facilitating translated board meetings and creating searchable transcripts for compliance purposes.

- Field Operations: Enabling hands-free troubleshooting for technicians who are unable to use a keyboard.

Adoption is accelerating as businesses seek solutions that integrate seamlessly with existing tools. The success of Realtime-2, Realtime-Translate, and Realtime-Whisper will ultimately depend less on raw performance and more on their ease of integration into established phone systems, CRMs, and compliance pipelines.

What are the three new OpenAI voice models and how do they differ?

Realtime-2 is a speech-to-speech reasoning engine for natural conversation, Realtime-Translate handles live multilingual translation, and Realtime-Whisper delivers high-accuracy transcription. Instead of one bundled model, each focuses on a single primitive so enterprises can route every call to the best tool for the job.

Why did OpenAI split voice into separate models?

Disaggregation is intended to cut cost and latency. A support call that needs only transcription never touches the heavier reasoning model, while a complex troubleshooting flow can still invoke Realtime-2. Early testers report significant cost savings compared with monolithic voice stacks, though actual savings depend on call volume and latency requirements.

How does the expanded context window change voice agents?

Within a single session, the model can hold a substantial amount of spoken text, letting agents remember prior steps, policy excerpts, or CRM notes without forced summarization. OpenAI warns that time-to-first-token rises with token count, so production systems still compress or selectively retrieve history.

Where are enterprises deploying the trio first?

Healthcare chains use Realtime-Whisper for clinical dictation, airlines pair Realtime-Translate with English-only agents to serve Spanish and Mandarin callers, and SaaS support teams let Realtime-2 handle tier-1 tickets end to end. CFO Sarah Friar calls 2026 the year of "practical adoption," with voice cited as the fastest path from pilot to daily use.

Should I build on a bundled rival or adopt this disaggregated stack?

Choose the disaggregated stack if you need per-call cost visibility, best-of-breed accuracy, or strict compliance controls. Prefer a bundled platform when headcount is limited and speed to launch outweighs margin control. Industry data shows many voice AI platforms struggle with cost visibility - OpenAI's separate metering aims to fix that.
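To make "per-call cost visibility" concrete, here is a small sketch of how separately metered minutes could roll up into a per-call breakdown. The `call_cost` function is hypothetical, and the rates are made-up placeholders for illustration only, not published pricing.

```python
from decimal import Decimal


def call_cost(minutes: dict[str, Decimal], rates: dict[str, Decimal]) -> dict[str, Decimal]:
    """Per-model cost for one call, plus a "total" entry."""
    breakdown = {model: used * rates[model] for model, used in minutes.items()}
    breakdown["total"] = sum(breakdown.values(), Decimal("0"))
    return breakdown


# Made-up per-minute rates, for illustration only; not published pricing.
example_rates = {
    "Realtime-Whisper": Decimal("0.01"),
    "Realtime-Translate": Decimal("0.03"),
    "Realtime-2": Decimal("0.10"),
}

# A six-minute call that was transcribed throughout and escalated for two minutes.
example_call = {"Realtime-Whisper": Decimal("6"), "Realtime-2": Decimal("2")}

for model, cost in call_cost(example_call, example_rates).items():
    print(f"{model}: {cost}")
```

Because each model is metered on its own line, a finance or operations team could see which step of a call drives spend, which a single bundled rate would hide.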