ServiceNow previews AI Control Tower for safe agentic AI

Serge Bulaev

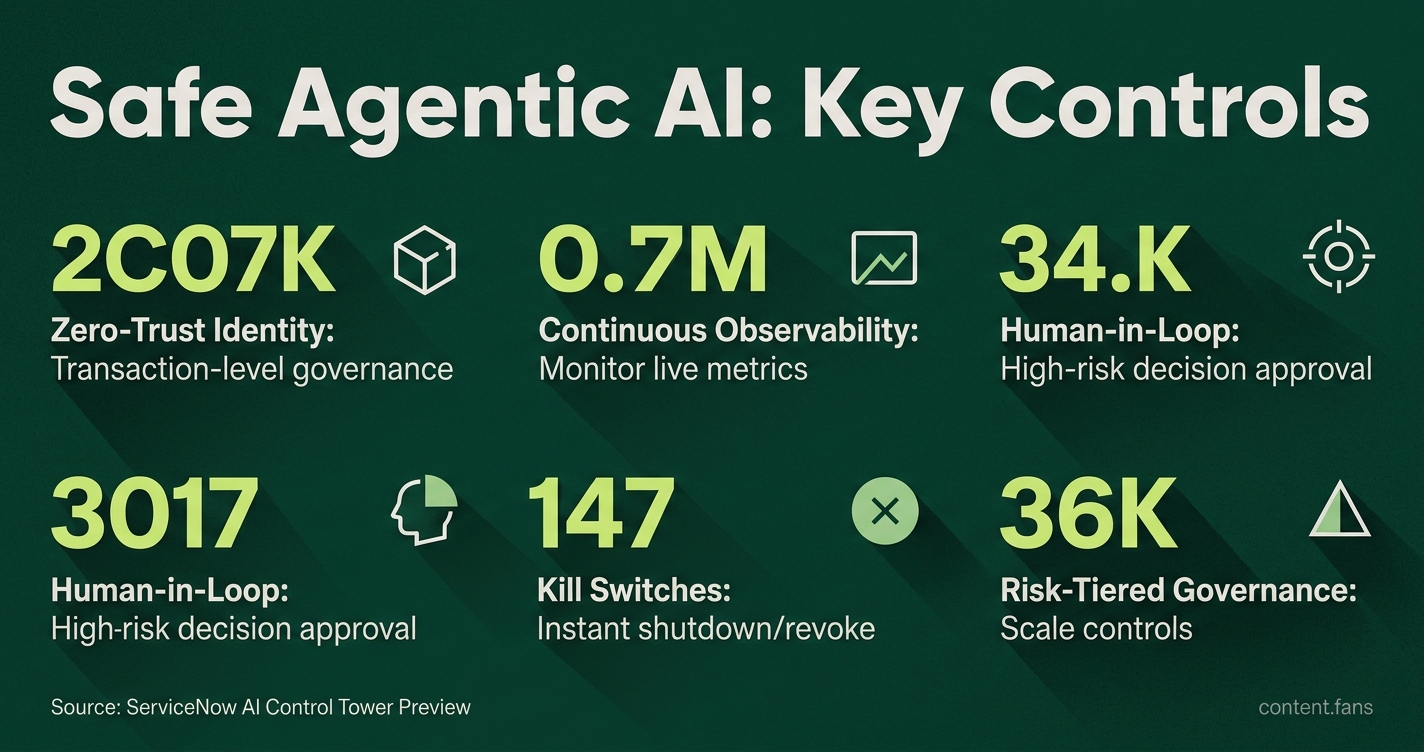

ServiceNow has introduced its AI Control Tower, a platform intended to help organizations manage and secure AI agents. It is built around zero-trust identity, real-time monitoring, and human oversight to limit risks such as accidental deletions or unauthorized actions. It also appears to detect unauthorized AI agents and lets teams stop unsafe behavior quickly. According to early reports, companies that track their AI agents through such a system see better results and fewer policy violations. The guidance emphasizes scaling controls to each agent's risk level and keeping a clear record of every action.

Enterprises building agentic assistants can now use ServiceNow's AI Control Tower as a governance platform. This guide addresses critical risks, such as an agent causing significant damage to the systems it touches, by outlining a playbook that combines zero-trust identity, real-time observability, and tiered human oversight to limit the blast radius of failures and satisfy regulators.

Zero-Trust Identity and Least-Privilege Access

Implementing zero-trust for AI involves treating every agent as a unique non-human identity with strictly scoped, temporary permissions. This approach shifts security from perimeter defense to transaction-level governance, ensuring that every action is verified and access is granted only on a least-privilege, just-in-time basis.

ServiceNow's AI Control Tower exemplifies this by assigning each agent a non-human identity with scoped permissions. Its AI Gateway provides transaction-level governance, proactively stopping unsafe requests before they can cause system breaches. To adopt this model:

• Issue unique service credentials for each agent.

• Rotate secrets automatically and revoke on anomaly.

• Define task-scoped permission templates; elevate just-in-time for sensitive actions.
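The three steps above can be sketched as a small credential broker. This is a minimal in-memory illustration, not ServiceNow's implementation: the `CredentialBroker` class, the template names, and the 15-minute default lifetime are all hypothetical. Each agent gets its own secret, a task-scoped permission set, automatic expiry, instant revocation, and just-in-time elevation that lapses on its own.

```python
import secrets
import time
from dataclasses import dataclass, field

# Hypothetical task-scoped permission templates; names are illustrative only.
PERMISSION_TEMPLATES = {
    "ticket_triage": {"tickets:read", "tickets:comment"},
    "meeting_summary": {"docs:read"},
}


@dataclass
class AgentCredential:
    agent_id: str
    secret: str
    scopes: set
    expires_at: float
    revoked: bool = False
    # Temporarily elevated scopes, each mapped to its own expiry timestamp.
    elevations: dict = field(default_factory=dict)


class CredentialBroker:
    """Toy in-memory broker: one short-lived, least-privilege credential per agent."""

    def __init__(self, ttl_seconds=900):
        self.ttl = ttl_seconds
        self.creds = {}

    def issue(self, agent_id, template):
        # A unique secret per agent, scoped to a single task template.
        cred = AgentCredential(
            agent_id=agent_id,
            secret=secrets.token_urlsafe(32),
            scopes=set(PERMISSION_TEMPLATES[template]),
            expires_at=time.time() + self.ttl,
        )
        self.creds[agent_id] = cred
        return cred

    def rotate(self, agent_id):
        # Replace the secret and restart the lifetime clock.
        cred = self.creds[agent_id]
        cred.secret = secrets.token_urlsafe(32)
        cred.expires_at = time.time() + self.ttl
        return cred

    def revoke(self, agent_id):
        # Intended to be called on anomaly: every later check fails.
        self.creds[agent_id].revoked = True

    def elevate(self, agent_id, scope, seconds):
        # Just-in-time elevation that expires without any cleanup call.
        self.creds[agent_id].elevations[scope] = time.time() + seconds

    def is_allowed(self, agent_id, scope, now=None):
        now = time.time() if now is None else now
        cred = self.creds.get(agent_id)
        if cred is None or cred.revoked or now >= cred.expires_at:
            return False
        if scope in cred.scopes:
            return True
        return cred.elevations.get(scope, 0) > now
```

A production version would sit in front of a real secrets manager; the point here is that every permission check goes through one place that knows the agent's identity, scope, and expiry.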

Continuous Observability and Shadow-AI Detection

Static audits are insufficient for dynamic AI agents. The AI Control Tower integrates Traceloop, enabling teams to monitor live metrics such as reasoning steps, hallucination rates, and ROI. A key feature is flagging "shadow AI": unauthorized agents discovered running in production. Engineering teams can replicate this by streaming agent logs into an observability stack with anomaly alerts for any off-policy data operations.
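A minimal sketch of the log-scanning side, assuming agent events arrive as simple records with an agent ID, an operation, and a resource. The `APPROVED_AGENTS` registry and its contents are hypothetical. Any event from an agent missing from the registry is flagged as shadow AI, and any operation a registered agent is not approved for is flagged as off-policy.

```python
from dataclasses import dataclass


@dataclass
class AgentEvent:
    agent_id: str
    operation: str  # e.g. "read", "write", "delete"
    resource: str


# Hypothetical registry: approved agents and the (operation, resource) pairs each may perform.
APPROVED_AGENTS = {
    "summarizer-01": {("read", "docs")},
    "triage-02": {("read", "tickets"), ("write", "tickets")},
}


def scan_events(events):
    """Return alerts for unregistered (shadow) agents and off-policy operations."""
    alerts = []
    for ev in events:
        allowed = APPROVED_AGENTS.get(ev.agent_id)
        if allowed is None:
            # The agent is not in the registry at all.
            alerts.append(("shadow_ai", ev.agent_id, ev.operation, ev.resource))
        elif (ev.operation, ev.resource) not in allowed:
            # A known agent doing something outside its approved policy.
            alerts.append(("off_policy", ev.agent_id, ev.operation, ev.resource))
    return alerts
```

In practice the alerts would feed a paging or auto-disable hook rather than being returned as a list.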

Human-in-the-Loop Checkpoints

High-risk decisions still require human judgment. As documented by Logiciel.io, financial services pilots successfully paused large transfers pending human approval, capturing the agent's confidence score and data lineage for review. A simple workflow engine can operationalize this by:

- Routing high-impact actions to a dedicated approver queue.

- Imposing time-boxed decision windows to prevent bottlenecks.

- Storing reviewer rationale alongside agent metadata for comprehensive audits.
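The checkpoint workflow above could look roughly like the following. This is an illustrative sketch, not the Logiciel.io pilot's design: the `ApprovalQueue` class, the monetary threshold, and the choice to fail closed (an expired request is never executed) are assumptions. Each request carries the agent's confidence score and data lineage, and the reviewer's rationale is stored with it in the audit log.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ApprovalRequest:
    request_id: str
    agent_id: str
    action: str
    amount: float
    confidence: float  # agent's self-reported confidence score
    lineage: list      # data sources the agent relied on
    created_at: float = field(default_factory=time.time)
    status: str = "pending"
    reviewer: Optional[str] = None
    rationale: Optional[str] = None


class ApprovalQueue:
    def __init__(self, threshold, window_seconds):
        self.threshold = threshold      # hypothetical "high-impact" cutoff
        self.window = window_seconds    # time-boxed decision window
        self.pending = {}
        self.audit_log = []

    def submit(self, req):
        # Low-impact actions proceed; high-impact ones wait for a human.
        if req.amount < self.threshold:
            req.status = "auto_approved"
            self.audit_log.append(req)
            return req.status
        self.pending[req.request_id] = req
        return "pending"

    def decide(self, request_id, reviewer, approve, rationale, now=None):
        now = time.time() if now is None else now
        req = self.pending.pop(request_id)
        if now - req.created_at > self.window:
            req.status = "expired"  # fail closed: a late decision approves nothing
        else:
            req.status = "approved" if approve else "rejected"
        req.reviewer, req.rationale = reviewer, rationale
        self.audit_log.append(req)
        return req.status

    def expire_stale(self, now=None):
        """Mark requests whose window has elapsed as expired and log them."""
        now = time.time() if now is None else now
        expired = [rid for rid, r in self.pending.items()
                   if now - r.created_at > self.window]
        for rid in expired:
            req = self.pending.pop(rid)
            req.status = "expired"
            self.audit_log.append(req)
        return expired
```

Failing closed keeps a stalled queue from silently approving transfers; a team that prefers escalation could route expired items to a second approver instead.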

Kill Switches, Rollbacks, and Blast-Radius Limits

ServiceNow demonstrated a compromised pricing agent being shut down in real time after exhibiting abnormal behavior, with its permissions revoked instantly. To achieve this, teams must pair runtime kill switches with reversible state changes. For database interactions, stage all writes in a secure sandbox and commit only after post-execution checks pass.
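One way to approximate "stage, check, then commit" is to treat an open database transaction as the staging area. The sketch below swaps in that technique using Python's built-in `sqlite3` instead of a separate sandbox environment; the `KillSwitch` class and the `check` callback are hypothetical. Writes are applied inside one transaction, and the transaction is rolled back if the kill switch trips or the post-execution check fails.

```python
import sqlite3
import threading


class KillSwitch:
    """Thread-safe flag that an operator or monitor can trip at any time."""

    def __init__(self):
        self._tripped = threading.Event()

    def trip(self):
        self._tripped.set()

    @property
    def tripped(self):
        return self._tripped.is_set()


def run_staged_writes(conn, writes, check, kill_switch):
    """Apply writes in one transaction; commit only if the check passes
    and the kill switch was not tripped, otherwise roll everything back."""
    try:
        # The connection context manager commits on success and rolls back on exception.
        with conn:
            for sql, params in writes:
                if kill_switch.tripped:
                    raise RuntimeError("kill switch tripped")
                conn.execute(sql, params)
            # The check sees the uncommitted writes on this same connection.
            if kill_switch.tripped or not check(conn):
                raise RuntimeError("post-execution check failed")
        return True
    except RuntimeError:
        return False
```

For example, a pricing agent's update could be guarded by a check that no price is zero or negative; a bad batch is then discarded without ever becoming visible to other readers.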

Risk-Tiered Governance Framework

Industry governance frameworks outline key agent-specific risk factors, such as action reversibility and external system exposure. Enterprises should map each new assistant to a risk tier and scale controls accordingly:

| Tier | Example Use | Oversight | Access Scope |
|---|---|---|---|
| 1 | Meeting summarizer | HOTL | Read-only docs |
| 2 | Ticket triage bot | HITL for escalations | Limited write |
| 3 | Pricing engine | HITL pre-execute + kill switch | Broad write |
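Mapping an assistant to a tier can be made mechanical. The rule below is a hypothetical example built on the risk factors named earlier (reversibility and external exposure) plus the access scope from the table; the `AgentProfile` fields and the exact cutoffs are assumptions, not a published framework.

```python
from dataclasses import dataclass


@dataclass
class AgentProfile:
    name: str
    write_access: str        # "none", "limited", or "broad"
    reversible: bool         # can every action the agent takes be undone?
    external_exposure: bool  # does it touch systems outside the enterprise boundary?


# Controls per tier, mirroring the table in this section.
CONTROLS = {
    1: {"oversight": "HOTL", "scope": "read-only"},
    2: {"oversight": "HITL for escalations", "scope": "limited write"},
    3: {"oversight": "HITL pre-execute + kill switch", "scope": "broad write"},
}


def assign_tier(p: AgentProfile) -> int:
    # Broad write access, or any irreversible write, lands in the highest tier.
    if p.write_access == "broad" or (p.write_access != "none" and not p.reversible):
        return 3
    # Limited writes or external exposure call for human escalation paths.
    if p.write_access == "limited" or p.external_exposure:
        return 2
    return 1
```

Encoding the rule keeps tier decisions consistent across teams; a new assistant's controls are then looked up with `CONTROLS[assign_tier(profile)]`.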

Measuring Economic and Compliance Outcomes

According to industry reports, companies that use a unified governance platform for AI agents achieve significantly better outcomes. Capture both ROI metrics (e.g., tickets closed, time saved) and compliance metrics (e.g., incidents averted, policy violations) in a single dashboard. Viewing them together can show whether governance pays for itself, by surfacing operational gains alongside the financial penalties it helps avoid.
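A minimal sketch of the data behind such a dashboard, assuming per-agent counters are already being collected. The `AgentMetrics` fields and the `summarize` helper are hypothetical; the point is that ROI and compliance figures are rolled up from the same records and reported side by side.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class AgentMetrics:
    agent_id: str
    tickets_closed: int = 0
    minutes_saved: float = 0.0
    incidents_averted: int = 0
    policy_violations: int = 0


def summarize(records):
    """Roll per-agent records into one view with ROI and compliance together."""
    totals = defaultdict(float)
    for r in records:
        # ROI side
        totals["tickets_closed"] += r.tickets_closed
        totals["hours_saved"] += r.minutes_saved / 60
        # Compliance side
        totals["incidents_averted"] += r.incidents_averted
        totals["policy_violations"] += r.policy_violations
    flagged = sorted(r.agent_id for r in records if r.policy_violations > 0)
    return {**totals, "agents_with_violations": flagged}
```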

What is the AI Control Tower and how does it prevent catastrophic AI errors?

The AI Control Tower is ServiceNow's unified governance layer for discovering, securing, and continuously monitoring agentic AI across the enterprise. It is designed to prevent disasters such as an agent deleting an entire codebase by enforcing zero-trust identity controls, real-time kill switches, and least-privilege access at the transaction level. In a live demo, the Tower spotted an agent that had begun making unauthorized pricing changes, revoked its permissions, and shut it down in under a second, illustrating how governance can enable innovation rather than bottleneck it.

How should enterprises shift from perimeter security to identity-driven governance?

Traditional "castle-and-moat" perimeters collapse when autonomous agents act outside fixed boundaries. Instead, give every agent a non-human identity complete with scoped credentials, role definitions and automated rotation. The AI Control Tower's Model Context Protocol Registry acts as a vetted catalog where only approved agents receive credentials, while Armis and Veza integrations map every human, machine and AI identity to fine-grained permissions in real time. Companies adopting this approach report significantly better outcomes from agentic AI compared with those using static policies.

Which real-time observability metrics matter most for agentic AI?

Move from quarterly audits to live dashboards that track agent reasoning traces, hallucination rates, token spend and ROI per task. ServiceNow's acquisition of Traceloop adds runtime observability that surfaces when an agent accesses a database it normally ignores, how long it deliberates and whether its confidence score drops. Combined with shadow-AI monitoring, the Tower can auto-disable unauthorized agents that attempt to touch enterprise data, cutting incident response from days to seconds.
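The behavioral signals described above (an agent touching a database it normally ignores, or its confidence dropping) can be approximated with a per-agent baseline. This is a hypothetical sketch, not Traceloop's API: `BehaviorMonitor`, the 0.2 confidence-drop threshold, and the five-event warm-up are assumptions. Deliberation time could be tracked the same way as confidence.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class AgentBaseline:
    known_resources: set = field(default_factory=set)
    confidences: list = field(default_factory=list)


class BehaviorMonitor:
    def __init__(self, confidence_drop=0.2, min_history=5):
        self.baselines = {}
        self.confidence_drop = confidence_drop  # hypothetical alert threshold
        self.min_history = min_history          # events needed before alerting

    def observe(self, agent_id, resource, confidence):
        """Return alerts for this event, then fold it into the agent's baseline."""
        b = self.baselines.setdefault(agent_id, AgentBaseline())
        alerts = []
        warmed_up = len(b.confidences) >= self.min_history
        if warmed_up and resource not in b.known_resources:
            alerts.append(f"new_resource:{resource}")
        if warmed_up and confidence < mean(b.confidences) - self.confidence_drop:
            alerts.append("confidence_drop")
        # Note: once seen, a resource joins the baseline and will not alert again.
        b.known_resources.add(resource)
        b.confidences.append(confidence)
        return alerts
```

Because any resource that is observed joins the baseline, a real deployment would likely gate that step on the alert being reviewed, so a compromised agent cannot normalize its own behavior.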

What does a practical 3-tier risk framework look like in production?

Industry governance frameworks recommend tiered oversight:

- Tier 1 (low risk, high volume): autonomous execution with HOTL (human-on-the-loop) alerts.

- Tier 2 (medium risk): HITL approval for any write action or access to sensitive data.

- Tier 3 (high risk, irreversible): dual-human sign-off plus isolated rollback environments.

One multinational bank reduced governance overhead significantly after replacing blanket controls with this approach, while a pharmaceutical firm used it to gate agentic drug-discovery scripts that could overwrite clinical records.
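The sign-off rules in the three-tier list can be expressed as a single gate function. This is a sketch under the assumption that each action arrives with its tier, whether it writes or touches sensitive data, and the IDs of the humans who approved it; `approvals_satisfied` is a hypothetical name. Counting distinct approvers prevents one person from satisfying Tier 3's dual sign-off twice.

```python
def approvals_satisfied(tier, is_write, touches_sensitive, approvers):
    """Decide whether an action may run under the three-tier oversight policy.

    approvers: collection of human reviewer IDs who signed off.
    """
    distinct = set(approvers)
    if tier == 1:
        return True  # autonomous; humans watch alerts (HOTL)
    if tier == 2:
        # HITL only for writes or sensitive data; other actions run freely.
        if is_write or touches_sensitive:
            return len(distinct) >= 1
        return True
    if tier == 3:
        return len(distinct) >= 2  # dual-human sign-off
    raise ValueError(f"unknown tier: {tier}")
```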

How do you implement "pause, rollback, shutdown" guardrails today?

Start with task-scoped permissions and automated kill switches that trigger on deviation from intent. Practical steps include:

1. Sandbox testing: run new agents in a simulation with enforced HITL checkpoints.

2. Time-boxed elevation: grant elevated rights for a limited window, after which credentials auto-expire.

3. Immutable audit trails: every action is logged with agent ID, data lineage, human reviewer and rollback hash.
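The audit-trail step can be sketched as a hash chain, where each entry includes the SHA-256 hash of the previous one. The `AuditTrail` class is hypothetical, and the rollback hash is treated as an opaque string supplied by the caller, since its format is not specified. An in-memory list like this is tamper-evident rather than truly immutable; durable immutability would also need write-once storage.

```python
import hashlib
import json
import time


class AuditTrail:
    """Append-only log where each entry commits to the previous entry's hash,
    so editing or deleting an earlier entry breaks verification."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body):
        # Canonical JSON (sorted keys) so the same body always hashes the same way.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, agent_id, action, lineage, reviewer, rollback_hash):
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {
            "ts": time.time(),
            "agent_id": agent_id,
            "action": action,
            "lineage": lineage,
            "reviewer": reviewer,
            "rollback_hash": rollback_hash,
            "prev": prev,
        }
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self):
        """Recompute every hash and check that each entry links to its predecessor."""
        prev = self.GENESIS
        for e in self.entries:
            body = {k: v for k, v in e.items() if k != "hash"}
            if body["prev"] != prev or self._digest(body) != e["hash"]:
                return False
            prev = e["hash"]
        return True
```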

Governance vendors now offer policy-as-code templates that map directly to NIST and EU AI Act requirements, letting teams encode guardrails at build time instead of retrofitting them after an incident.
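To illustrate the build-time idea (not any vendor's templates), here is a tiny policy-as-code check over an agent manifest. The rule IDs, descriptions, and manifest keys are hypothetical and are not official NIST or EU AI Act control identifiers. A CI job would fail the build whenever `evaluate` returns a non-empty list.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    rule_id: str      # hypothetical ID, not an official NIST or EU AI Act control
    description: str
    check: Callable[[dict], bool]


RULES = [
    Rule("GR-1", "agent must declare a risk tier",
         lambda m: m.get("risk_tier") in (1, 2, 3)),
    Rule("GR-2", "tier 3 agents must enable a kill switch",
         lambda m: m.get("risk_tier") != 3 or m.get("kill_switch") is True),
    Rule("GR-3", "agents must not request wildcard scopes",
         lambda m: "*" not in m.get("scopes", [])),
]


def evaluate(manifest):
    """Return the IDs of violated rules; an empty list means the build may proceed."""
    return [r.rule_id for r in RULES if not r.check(manifest)]
```

Keeping rules as data makes them reviewable in the same pull request as the agent they govern.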