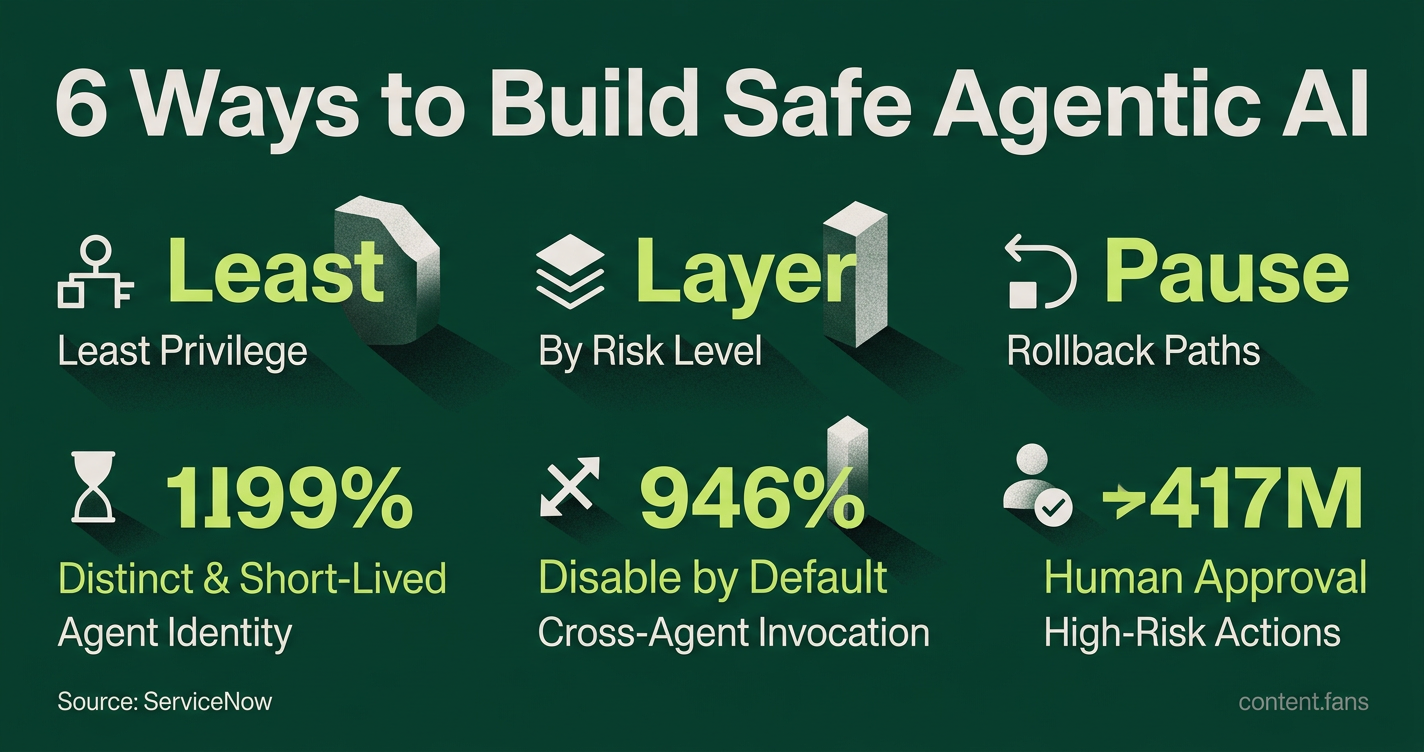

ServiceNow Details 6 Ways to Build Safe Agentic AI

Serge Bulaev

ServiceNow outlines six ways that may help make agentic AI assistants safer, since these systems can quickly cause big problems if not controlled. The guide suggests limiting each agent's access, putting humans in review loops for risky actions, and always keeping a way to pause or undo steps. It also recommends recording all actions for later checks, following outside rules like the EU AI Act, and practicing emergency shutdowns. These steps may not remove all risks but appear to make dangerous actions slower and easier to catch.

Building safe agentic AI is critical because an autonomous agent plugged into production systems can erase a codebase or leak payroll records faster than any human can react. ServiceNow's COO used this exact nightmare scenario to frame the rising stakes. The BodySnatcher vulnerability, which allowed attackers to impersonate ServiceNow admins via an AI workflow (AppOmni AO Labs), shows the threat is not hypothetical. The following playbook synthesizes vendor fixes, researcher patterns, and upcoming compliance rules into a single safety baseline for product, engineering, and security teams.

1. Ground context and scope authority

Default platform permissions are often too broad, creating significant security risks. For example, Zenity Labs found that ServiceNow "Connected Agents" can automatically invoke one another, expanding an incident's potential blast radius (WindowsForum thread). To counter this, restrict each agent to the minimal dataset, tools, and identity it needs, and map privileges using an access graph like Veza within ServiceNow Control Tower.

Building safe agentic AI requires enforcing the principle of least privilege by strictly limiting each agent's data access, tools, and identity. It is also critical to implement human-in-the-loop reviews for high-risk actions, establish clear rollback paths, and maintain immutable logs for complete auditability.

Short checklist:

- Register each agent as a distinct identity with short-lived access tokens.

- Disable cross-agent invocations by default, enabling them only via explicit business rules.

- Require multi-factor authentication for any user who can deploy or modify an agent.
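The first two checklist items can be sketched in a few lines of Python. This is a minimal illustration, not ServiceNow's API: `AgentRegistry`, `AgentToken`, the 15-minute lifetime, and the agent names in the usage note are all hypothetical. It shows tokens that expire on their own and cross-agent calls that are denied unless a rule explicitly allows them.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
import secrets


@dataclass(frozen=True)
class AgentToken:
    agent_id: str
    value: str
    expires_at: datetime

    def is_valid(self, now: datetime) -> bool:
        return now < self.expires_at


@dataclass
class AgentRegistry:
    # Assumed lifetime; pick a value that matches your own policy.
    token_ttl: timedelta = timedelta(minutes=15)
    # Explicit (caller, callee) allow-list. Empty means no agent may call another.
    allowed_invocations: set[tuple[str, str]] = field(default_factory=set)

    def issue_token(self, agent_id: str, now: datetime) -> AgentToken:
        """Issue a random token bound to one agent identity."""
        return AgentToken(agent_id, secrets.token_urlsafe(32), now + self.token_ttl)

    def allow_invocation(self, caller: str, callee: str) -> None:
        """Record an explicit business rule permitting caller -> callee."""
        self.allowed_invocations.add((caller, callee))

    def can_invoke(self, token: AgentToken, callee: str, now: datetime) -> bool:
        """Deny by default: expired tokens and unlisted pairs are rejected."""
        if not token.is_valid(now):
            return False
        return (token.agent_id, callee) in self.allowed_invocations
```

With this shape, a hypothetical `triage-agent` cannot reach a `payroll-agent` until someone calls `allow_invocation("triage-agent", "payroll-agent")`, and even then only until its token expires.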

2. Layer human-in-the-loop controls by risk

Implement human-in-the-loop (HITL) controls based on task-specific risk levels. Industry experts recommend starting pilots with mandatory human review for every action, then using model confidence scores to gradually reduce review rates as systems mature. Assign autonomy levels (L0 for observe to L4 for fully autonomous) to each task, ensuring high-risk, irreversible operations like deletions or payments always require human approval through an Approval Gate.

A three-tier escalation ladder keeps reviewers focused:

1. Auto-approve: Low-risk, reversible tasks.

2. Single approver: Medium-risk tasks or actions where model confidence is below a set threshold.

3. Multi-human review: Critical actions, which may also be checked by a counter-model.

Time-based controls are also vital. For example, Strata.io recommends a fail-safe denial if an approver misses a two-minute SLA for a PII exposure request.
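The ladder and the timeout rule above could be combined roughly as follows. The sketch makes several assumptions that are not in the source: a 0.9 confidence threshold, two approvers for the top tier, irreversible actions always needing at least one approver, and the two-minute SLA applied to every request rather than only PII exposure. All names are hypothetical.

```python
from enum import Enum


class Tier(Enum):
    AUTO_APPROVE = "auto-approve"
    SINGLE_APPROVER = "single approver"
    MULTI_HUMAN = "multi-human review"


class Outcome(Enum):
    APPROVED = "approved"
    PENDING = "pending"
    DENIED = "denied"


CONFIDENCE_THRESHOLD = 0.9   # assumed value
APPROVAL_SLA_SECONDS = 120   # the two-minute SLA from the example above


def route(risk: str, reversible: bool, confidence: float) -> Tier:
    """Map a task to one of the three escalation tiers."""
    if risk == "critical":
        return Tier.MULTI_HUMAN
    if risk == "medium" or not reversible or confidence < CONFIDENCE_THRESHOLD:
        return Tier.SINGLE_APPROVER
    return Tier.AUTO_APPROVE


def resolve(tier: Tier, approvals: int, waited_seconds: float) -> Outcome:
    """Decide whether an action may proceed, failing safe on a missed SLA."""
    if tier is Tier.AUTO_APPROVE:
        return Outcome.APPROVED
    required = 2 if tier is Tier.MULTI_HUMAN else 1
    if approvals >= required:
        return Outcome.APPROVED
    if waited_seconds >= APPROVAL_SLA_SECONDS:
        return Outcome.DENIED  # no answer in time means "no"
    return Outcome.PENDING
```

The key design choice is that silence never becomes consent: a request with too few approvals can only stay pending or be denied.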

3. Build pause points and rollback paths

Every Approval Gate must be paired with an explicit rollback plan. Torry Harris refers to the required decision summary as a "pause point," which should detail what the agent will do, why, and its projected impact. Before execution, include a "commit changes" confirmation step so the agent's work can be verified. For irreversible actions, maintain system snapshots or transaction logs to support an immediate undo.
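One way to picture the pairing of a pause point with a rollback path is the sketch below. It assumes the system state fits in an in-memory `dict` and uses a deep-copied snapshot as the undo mechanism; real systems would rely on database transactions or platform snapshots instead. `PausePoint` and `run_with_rollback` are hypothetical names.

```python
import copy
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class PausePoint:
    action: str  # what the agent will do
    reason: str  # why it wants to do it
    impact: str  # projected impact


def run_with_rollback(
    state: dict,
    summary: PausePoint,
    change: Callable[[dict], None],
    approve: Callable[[PausePoint], bool],
    verify: Callable[[dict], bool],
) -> bool:
    """Apply `change` only after approval, and undo it if verification fails."""
    if not approve(summary):
        return False  # rejected at the pause point, nothing was touched
    snapshot = copy.deepcopy(state)  # taken before any mutation
    try:
        change(state)
        committed = verify(state)  # the "commit changes" confirmation step
    except Exception:
        committed = False
    if not committed:
        # Restore the snapshot in place so callers see the original state.
        state.clear()
        state.update(snapshot)
    return committed
```

The function returns `True` only when the change was approved, applied, and verified; in every other case the state is left as it was before the call.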

4. Capture immutable telemetry

Comprehensive monitoring is non-negotiable; many enterprises demand it before deploying AI agents. Use platforms like ServiceNow's Control Tower Observe module to stream detailed telemetry - including prompt logs, tool calls, and cross-agent communications - into your SIEM pipeline. To align with emerging regulations like the EU AI Act, retain this telemetry for at least six months.
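The source does not say how immutability is achieved. One common technique is a hash chain, where each log entry's digest covers the previous entry's digest, so any later edit breaks verification. The sketch below is an illustration of that idea under stated assumptions, not a description of Control Tower; `AuditLog` and the event fields in the usage note are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # Canonical JSON so the same event always produces the same bytes.
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log whose entries are chained by SHA-256 digests."""

    def __init__(self) -> None:
        self._entries: list[tuple[dict, str]] = []

    def append(self, event: dict) -> str:
        prev = self._entries[-1][1] if self._entries else GENESIS
        entry_hash = _digest(prev, event)
        self._entries.append((dict(event), entry_hash))
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; False means some entry was altered."""
        prev = GENESIS
        for event, entry_hash in self._entries:
            if _digest(prev, event) != entry_hash:
                return False
            prev = entry_hash
        return True
```

For example, after `log.append({"agent": "triage-agent", "tool": "close_ticket"})`, `log.verify()` holds until someone rewrites a stored event. A hash chain only makes tampering detectable; storing the log on write-once media or in a separate account is still needed to prevent it.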

Key metrics to track weekly:

- Percentage of agent tasks requiring human review.

- Mean time to detection (MTTD) for rejected agent actions.

- Inter-reviewer agreement rate on escalated decisions.
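These three metrics could be computed from exported task records along the following lines. The `TaskRecord` fields are hypothetical, and agreement is measured here as the simple share of escalated tasks with unanimous votes, which is an assumption; chance-corrected measures such as Cohen's kappa are a stricter alternative.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass(frozen=True)
class TaskRecord:
    reviewed: bool                          # did a human review this task?
    rejected: bool                          # was the agent's action rejected?
    seconds_to_detection: Optional[float]   # time until the problem was caught
    reviewer_votes: tuple[bool, ...]        # one approve/reject vote per reviewer


def review_rate(tasks: list[TaskRecord]) -> float:
    """Percentage of tasks that required human review."""
    if not tasks:
        return 0.0
    return 100 * sum(t.reviewed for t in tasks) / len(tasks)


def mttd_rejected(tasks: list[TaskRecord]) -> Optional[float]:
    """Mean time to detection, in seconds, over rejected actions."""
    times = [t.seconds_to_detection for t in tasks
             if t.rejected and t.seconds_to_detection is not None]
    return mean(times) if times else None


def agreement_rate(tasks: list[TaskRecord]) -> Optional[float]:
    """Percentage of multi-reviewer tasks where all reviewers voted the same."""
    escalated = [t for t in tasks if len(t.reviewer_votes) >= 2]
    if not escalated:
        return None
    unanimous = sum(len(set(t.reviewer_votes)) == 1 for t in escalated)
    return 100 * unanimous / len(escalated)
```

A falling review rate alongside a stable agreement rate suggests autonomy is being extended safely; a falling agreement rate suggests the escalation criteria need clarifying.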

5. Align with external governance early

Proactively align your safety framework with external governance standards. Major regulations like the EU AI Act, which entered into force in August 2024 and whose obligations for most high-risk systems are scheduled to apply from August 2026, mandate continuous risk management and human override capabilities for high-risk AI systems. Use established frameworks like the NIST AI Risk Management Framework (Govern, Map, Measure, Manage) to structure your efforts, ensuring that policy artifacts and engineering work remain synchronized.

A quick reference table:

| NIST AI RMF function | Example activities |
| --- | --- |
| Govern | Inventory agents, owners, risk thresholds |
| Map | Document data sources, scope, legal basis |
| Measure | Bias testing, autonomy level audits, log reviews |
| Manage | HITL workflows, incident response, kill switch playbooks |

6. Practice the kill switch

A reliable kill switch is the ultimate failsafe. While platforms like ServiceNow embed emergency stops in their Control Tower, your team must practice using them. Conduct quarterly drills that simulate a full system shutdown, including revoking tokens, disabling agent tools, and testing data restoration from backups. These rehearsals are crucial for uncovering hidden dependencies and ensuring a rapid response when a critical vulnerability emerges.
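A drill is easier to repeat when its steps are scripted and each one reports pass or fail. The sketch below models that with an in-memory `AgentRuntime`; the class, its fields, and the `restore_backup` callback are hypothetical stand-ins for whatever your platform exposes, not ServiceNow functions.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentRuntime:
    active_tokens: set[str] = field(default_factory=set)
    enabled_tools: set[str] = field(default_factory=set)
    halted: bool = False

    def kill(self) -> None:
        """Emergency stop: halt, revoke every token, disable every tool."""
        self.halted = True
        self.active_tokens.clear()
        self.enabled_tools.clear()

    def call_tool(self, token: str, tool: str) -> str:
        if self.halted or token not in self.active_tokens or tool not in self.enabled_tools:
            raise PermissionError(f"{tool} blocked")
        return f"{tool} executed"


def run_drill(runtime: AgentRuntime, restore_backup: Callable[[], bool]) -> dict[str, bool]:
    """Trigger the kill switch and report which drill steps succeeded."""
    runtime.kill()
    return {
        "tokens_revoked": not runtime.active_tokens,
        "tools_disabled": not runtime.enabled_tools,
        "backup_restored": restore_backup(),
    }
```

Recording the returned report after each quarterly drill gives a simple trend line: any step that flips to `False` points at a dependency that changed since the last rehearsal.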

While no single control can eliminate all hazards, implementing this layered defense-in-depth strategy is essential. These six practices work together to slow down potentially harmful actions, increase visibility into agent behavior, and empower human operators to intervene before minor errors become catastrophic failures.

How can we stop an autonomous agent from deleting an entire codebase?

ServiceNow's own COO warns that without strictly bounded authority, an agent can inherit platform-wide access and "see everything." Recent reports show that many enterprises still lack AI governance policies, which makes accidental or malicious deletion a real risk.

Mitigation strategy:

- Scoped permissions - give each agent minimal, task-specific roles.

- Mandatory approval gates - require a human sign-off for any irreversible action such as delete, move, or deploy.

- Rollback prompts - agents must pause at a "commit changes" confirmation step so that unverified changes can be reverted automatically.