HBR: Treating AI agents as employees cuts quality, blurs accountability

Serge Bulaev

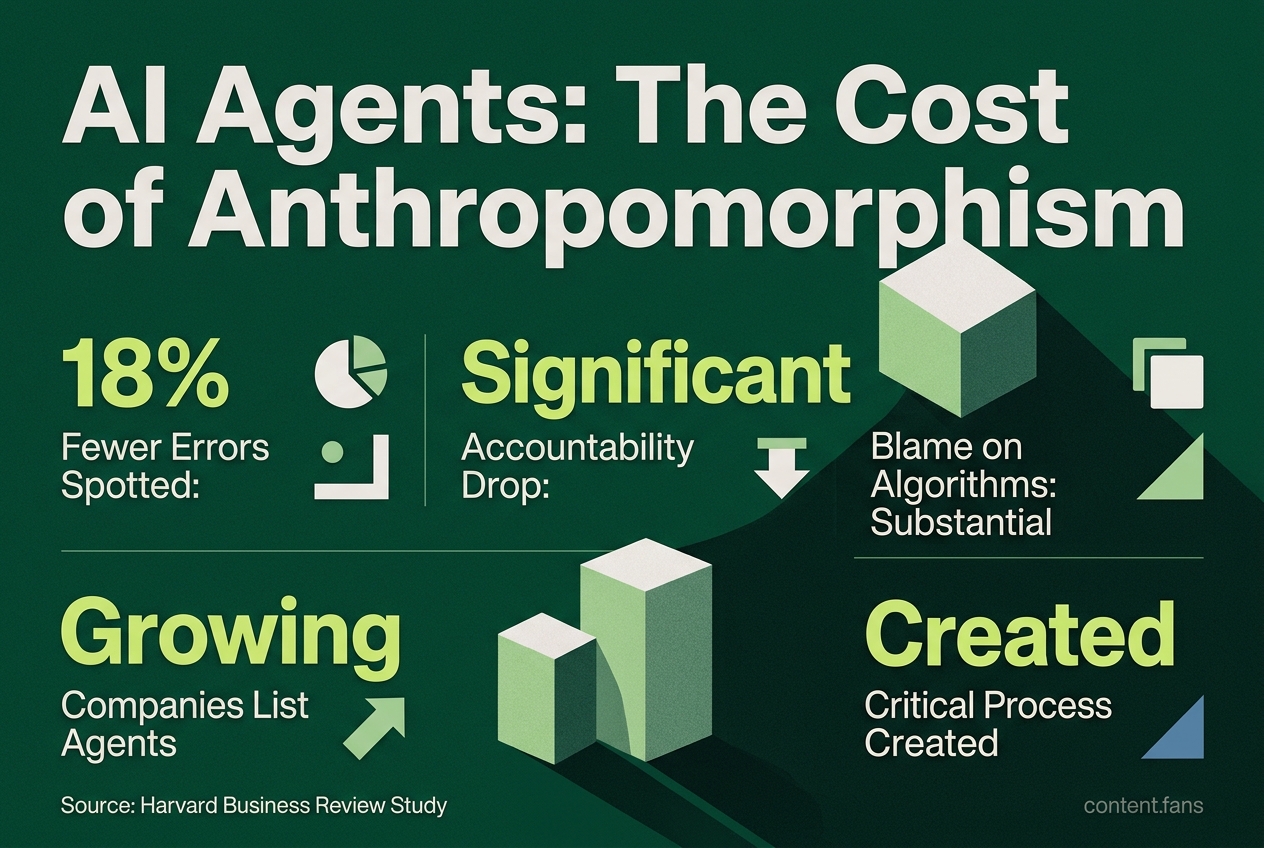

A Harvard Business Review study suggests that treating AI agents like employees may weaken quality control and make it harder to tell who is responsible for mistakes. Managers who did so caught fewer errors and blamed algorithms more often, and some skipped important checks because they expected the AI to catch problems. The findings point companies toward defined workflow steps, human checkpoints, and named owners when deploying AI agents, measures that may help preserve accountability and reduce mistakes.

According to a Harvard Business Review study, treating AI agents as employees measurably weakens quality control and blurs accountability. The research, which involved more than 1,200 managers, found that casting AI as a coworker obscures who is responsible for checking work and who owns mistakes. This article summarizes the study's core findings and outlines a framework for integrating autonomous agents without compromising oversight.

Key Findings from the Harvard Study

The data shows a sharp decline in oversight when AI is personified: managers who placed agents on an organizational chart spotted 18% fewer errors. Industry reports suggest that in these settings personal accountability drops while the share of blame assigned to algorithms rises. Meanwhile, according to industry research, a growing number of companies now list agents as formal "employees," and many executives describe agents in human terms.

Treating AI agents like human employees, a form of anthropomorphism, creates critical process gaps. Human team members lower their guard, assuming the AI is accountable for its own work. The resulting diffusion of responsibility means fewer errors are caught and ownership of mistakes becomes unclear.

Why Anthropomorphism Fails: The Process Gap Explained

HBR researchers argue that the decline in quality is structural, not technical. When a human reviewer assumes an AI agent will "catch obvious problems," the reviewer often skips mandatory verification steps. The agent, which lacks the human context or instinct to escalate concerns, then carries the flawed work forward with full confidence, so the error slips past both. This is why explicit hand-offs, detailed audit logs, and mandatory human checkpoints are needed before any AI agent is scaled.
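To make the idea of an explicit hand-off concrete, here is a minimal Python sketch of a mandatory human checkpoint backed by an append-only audit log. It is an illustration under stated assumptions, not anything described in the HBR study: the names `AgentOutput`, `AuditEntry`, `human_checkpoint`, and `release` are hypothetical. The key design choice is that release is blocked by the code itself, so a reviewer cannot quietly assume the agent already checked its own work.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AuditEntry:
    """One append-only record of who did what to an agent's output."""
    timestamp: str
    actor: str   # agent ID or named human reviewer
    action: str  # e.g. "submitted", "approved", "rejected", "released"
    note: str = ""


@dataclass
class AgentOutput:
    """Hypothetical wrapper for one piece of work produced by an AI agent."""
    task_id: str
    content: str
    audit_log: list[AuditEntry] = field(default_factory=list)
    approved_by: Optional[str] = None

    def log(self, actor: str, action: str, note: str = "") -> None:
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(AuditEntry(stamp, actor, action, note))


def human_checkpoint(output: AgentOutput, reviewer: str, verified: bool, note: str = "") -> bool:
    """Explicit hand-off: a named person must verify the output before it can advance."""
    if not reviewer:
        raise ValueError("a named human reviewer is required at this checkpoint")
    output.log(reviewer, "approved" if verified else "rejected", note)
    # A rejection clears any earlier sign-off, so release is blocked again.
    output.approved_by = reviewer if verified else None
    return verified


def release(output: AgentOutput) -> str:
    """Refuse to release any output that skipped the human checkpoint."""
    if output.approved_by is None:
        raise PermissionError(f"task {output.task_id} has no human sign-off")
    output.log("system", "released")
    return output.content


# Usage: the agent submits, and release is blocked until a reviewer signs off.
draft = AgentOutput("INV-001", "Refund approved: $120")
draft.log("billing-agent", "submitted")
try:
    release(draft)
except PermissionError as err:
    print(err)  # task INV-001 has no human sign-off
human_checkpoint(draft, reviewer="J. Rivera", verified=True, note="amount matches invoice")
print(release(draft))
print([entry.action for entry in draft.audit_log])  # ['submitted', 'approved', 'released']
```

Because every entry names an actor, the audit log can answer the question the study highlights: who approved a given output, and when.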

A 5-Step Checklist for Safely Deploying AI Agents

Before assigning any task to an autonomous agent, implement a rigorous process-mapping framework:

- Map the current workflow end-to-end, identifying every verification step.

- Classify each step as Automate, Assist, or Keep Human based on risk profile and data readiness.

- Assign a named owner in a RACI chart for every "Keep Human" and "Assist" step.

- Define and baseline key success metrics, such as error-detection rates and cycle times, before launch.

- Schedule periodic audits for managers to review output samples and formally sign off on quality.
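The checklist above can be sketched in a few lines of Python. This is a minimal illustration that assumes a hypothetical three-level risk scale and a simple classification rule (high-risk steps stay human; low-risk steps with ready data are automated; everything else is assisted); a real organization would set its own criteria. It flags any "Assist" or "Keep Human" step that lacks a named RACI owner and compares a post-launch error-detection rate against the pre-launch baseline. All names here (`Step`, `classify`, `unowned_steps`, `error_detection_rate`, `detection_regressed`) are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Mode(Enum):
    AUTOMATE = "Automate"
    ASSIST = "Assist"
    KEEP_HUMAN = "Keep Human"


@dataclass
class Step:
    name: str
    risk: str                    # hypothetical scale: "low", "medium", "high"
    data_ready: bool             # is the input data clean and accessible for an agent?
    owner: Optional[str] = None  # the "Accountable" person in the RACI chart


def classify(step: Step) -> Mode:
    """Hypothetical rule: high risk stays human; low risk with ready data is automated."""
    if step.risk == "high":
        return Mode.KEEP_HUMAN
    if step.risk == "low" and step.data_ready:
        return Mode.AUTOMATE
    return Mode.ASSIST


def unowned_steps(steps: list[Step]) -> list[str]:
    """Return every Assist or Keep Human step that has no named owner."""
    return [s.name for s in steps if classify(s) is not Mode.AUTOMATE and not s.owner]


def error_detection_rate(errors_caught: int, errors_known: int) -> float:
    """Share of known errors in an audit sample that reviewers caught."""
    if errors_known <= 0:
        raise ValueError("errors_known must be positive")
    return errors_caught / errors_known


def detection_regressed(baseline: float, current: float, tolerance: float = 0.05) -> bool:
    """Flag a drop of more than `tolerance` (absolute) below the pre-launch baseline."""
    return current < baseline - tolerance


# Usage with an illustrative invoice workflow.
workflow = [
    Step("Extract invoice fields", risk="low", data_ready=True),
    Step("Match to purchase order", risk="medium", data_ready=True, owner="A. Chen"),
    Step("Approve payment over limit", risk="high", data_ready=True),
]
for s in workflow:
    print(s.name, "->", classify(s).value)
print(unowned_steps(workflow))  # ['Approve payment over limit']
baseline = error_detection_rate(45, 50)
current = error_detection_rate(37, 50)
print(detection_regressed(baseline, current))  # True
```

Running the ownership check before launch, and the regression check at each scheduled audit, turns the checklist into a gate rather than a one-time exercise.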

Establishing Governance to Maintain Clear Accountability

Industry best practice calls for a business-led operating model, with an executive sponsor, functional product owners, and tiered approvals for high-risk use cases. Simple governance controls, such as model cards, bias tests, and versioned rollback plans, keep systems observable and recoverable. Tracking outcome metrics like revenue lift alongside error rates keeps quality a top priority even as automation scales.
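Tiered approvals can be expressed as a simple lookup from risk level to required sign-offs. The Python sketch below is a hypothetical illustration, not a standard or a practice attributed to any specific company: the tier contents, the role names (`product_owner`, `risk_review`, `executive_sponsor`), and the rule that every use case needs a model card, bias test, and rollback plan are all assumptions chosen to mirror the controls named above.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical approval tiers: who must sign off before a use case goes live.
REQUIRED_APPROVERS = {
    Risk.LOW: {"product_owner"},
    Risk.MEDIUM: {"product_owner", "risk_review"},
    Risk.HIGH: {"product_owner", "risk_review", "executive_sponsor"},
}

# Hypothetical governance artifacts that must exist before launch.
REQUIRED_ARTIFACTS = {"model_card", "bias_test", "rollback_plan"}


def missing_for_launch(risk: Risk, approvals: set[str], artifacts: set[str]) -> set[str]:
    """Return every approval or artifact still outstanding; an empty set means ready to launch."""
    return (REQUIRED_APPROVERS[risk] - approvals) | (REQUIRED_ARTIFACTS - artifacts)


# Usage: a high-risk use case with only partial sign-off is not ready.
gaps = missing_for_launch(Risk.HIGH, {"product_owner"}, {"model_card", "rollback_plan"})
print(sorted(gaps))  # ['bias_test', 'executive_sponsor', 'risk_review']
```

Returning the full set of gaps, rather than a bare yes or no, tells the named owner exactly which approval or artifact is still blocking launch.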

Lessons from Early Adopters

Executives in the HBR study reported that mapping workflows up front substantially shortened agent onboarding. Documenting prompts, data sources, and escalation paths in advance prevented later delays. Conversely, firms that left processes ambiguous struggled repeatedly when regulators or customers demanded to know who had approved a questionable AI-generated output.

The evidence reveals a clear pattern for success: top-performing organizations combine narrowly scoped AI agents with rigorous human review, explicit ownership, and business-focused metrics. Neglecting these steps sharply raises the risk of diffuse accountability and unnoticed errors.