Anthropic Launches Claude Managed Agents in Public Beta

Serge Bulaev

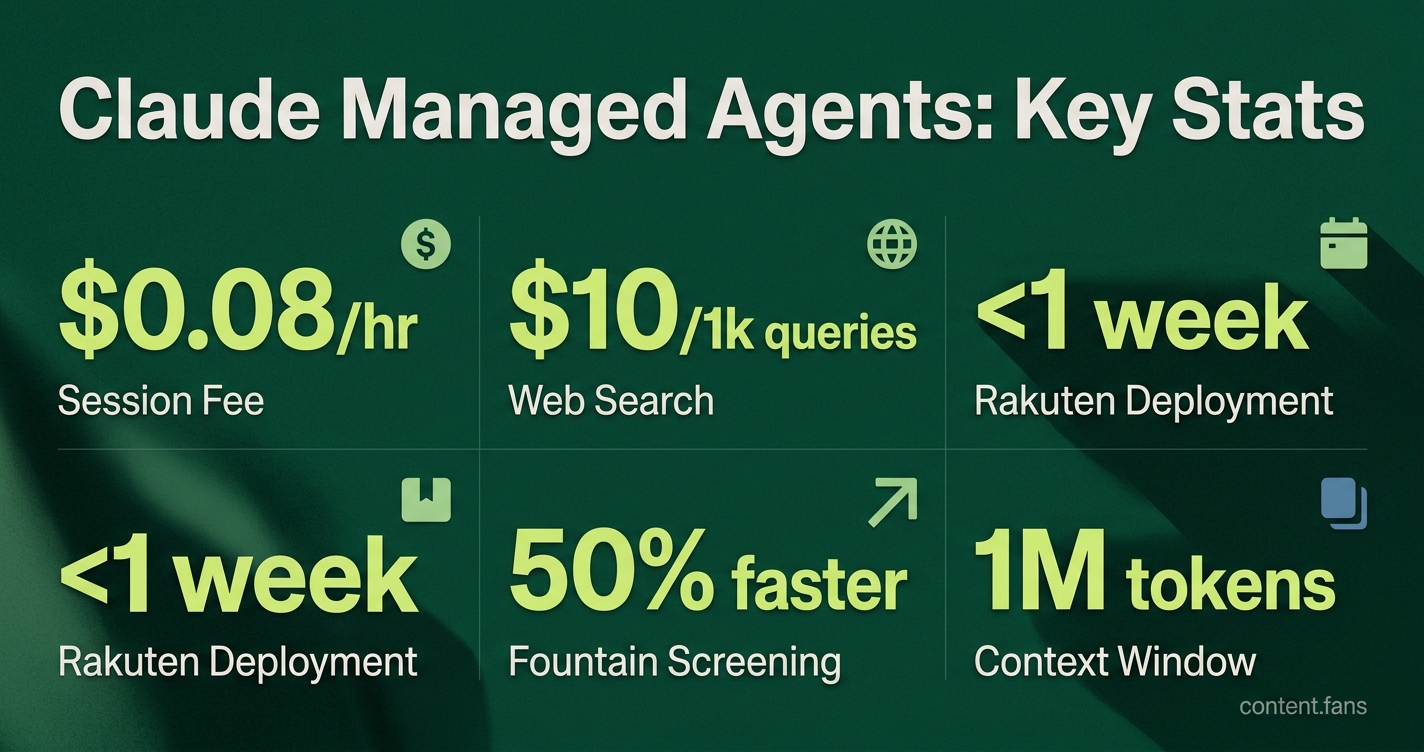

Anthropic launched Claude Managed Agents in public beta in April 2026. The service runs agents on Anthropic's servers and handles session memory and orchestration, so teams can focus on designing prompts rather than managing infrastructure. Pricing is pay-as-you-go: standard token rates plus a fee per active session hour, with monthly costs that might range from about $10 to $150 depending on workload. Early reports suggest that companies such as Rakuten quickly set up agents for several departments, and that some legal and financial teams have sped up their work with these agents. Tests suggest Claude Managed Agents may cost more than some competitors, but it offers fine-grained billing and a large context window.

Anthropic's Claude Managed Agents recently entered public beta, offering a powerful, hosted agent platform. This service transforms the Claude API from a simple text completion tool into a turnkey agent runtime. By managing agent state, session memory, and orchestration on its own servers, Anthropic frees development teams from complex container operations, allowing them to concentrate on high-value prompt design. Industry analysts view this as a significant move from raw model access toward managed cognitive services.

Consumption Pricing and Session Runtime

Claude Managed Agents is a fully hosted service by Anthropic that allows teams to deploy persistent AI agents. It handles all backend infrastructure, including session memory and orchestration, enabling developers to build complex, stateful applications by focusing solely on prompt design and business logic without managing servers.

Claude Managed Agents uses a pay-as-you-go pricing structure. A breakdown on the Finout.io blog confirms that users pay standard token prices plus a fee for active runtime, so monthly costs vary significantly with usage patterns. According to industry reports, there are no per-agent licenses, and access is enabled through an API header.

Key pricing details include:

- Token Rates: Identical to the standard Claude API.

- Session Fee: $0.08 per active session hour, metered to the millisecond.

- Idle Time: Free of charge while waiting for user input or tool confirmations.

- Web Search Add-on: $10 per 1,000 queries.
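The pricing rules above can be turned into a quick estimator. This is a minimal sketch that assumes only the rates listed in this article: it sums the milliseconds a session spends actively running, skips idle intervals, and adds the web search charge. The `Interval` type and its `"running"`/`"idle"` labels are hypothetical bookkeeping, not fields from Anthropic's billing data, and token charges are left out because they follow the standard API rates.

```python
from dataclasses import dataclass

# Rates as quoted in this article; beta pricing may change.
SESSION_FEE_PER_HOUR = 0.08      # USD per active session hour
WEB_SEARCH_FEE_PER_1000 = 10.00  # USD per 1,000 web search queries
MS_PER_HOUR = 3_600_000


@dataclass
class Interval:
    state: str        # hypothetical labels: "running" or "idle"
    duration_ms: int  # length of the interval in milliseconds


def billable_ms(intervals: list[Interval]) -> int:
    """Sum only the time the agent is actively running; idle time is free."""
    return sum(i.duration_ms for i in intervals if i.state == "running")


def session_cost(intervals: list[Interval], web_searches: int = 0) -> float:
    """Runtime fee metered per millisecond, plus the optional web search add-on.

    Token charges are not included here.
    """
    runtime = billable_ms(intervals) / MS_PER_HOUR * SESSION_FEE_PER_HOUR
    search = web_searches / 1000 * WEB_SEARCH_FEE_PER_1000
    return runtime + search


# Example: 90 minutes running, 30 minutes idle, 250 web searches.
example = [Interval("running", 90 * 60 * 1000), Interval("idle", 30 * 60 * 1000)]
print(round(session_cost(example, web_searches=250), 2))  # 2.62
```

Here the 90 active minutes cost about $0.12 and the searches $2.50, while the 30 idle minutes add nothing, which is why idle-heavy, human-in-the-loop agents can stay cheap on runtime.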

Early Enterprise Deployments

Early enterprise adoption has been swift, with impressive results emerging just weeks after the beta launch. According to industry reports, e-commerce giant Rakuten deployed specialized agents across its product, sales, marketing, and finance teams in under a week. Another case study showed Fountain reducing candidate screening time by half and doubling conversions using a hierarchical agent system.

The platform is also gaining traction in regulated industries. A legal department cut marketing contract review times from days to hours, while a financial services firm significantly accelerated regulatory document audits, leveraging the platform's built-in memory and policy enforcement.

Competitive Context

Against its competitors, Claude Managed Agents holds up well. Industry benchmarks suggest it performs competitively with Google's Vertex AI Agent Engine on standard evaluation tasks. Although Claude may cost more in some scenarios, Anthropic points to features such as millisecond-level billing precision and a one-million-token context window to justify the premium. These capabilities let customers deploy governed agents quickly without managing infrastructure, a key factor that allowed teams such as Every's Spiral to move from setup to production in a single day.

What exactly are Claude Managed Agents, and how do they differ from a normal Claude API call?

Claude Managed Agents is a fully hosted agent layer that Anthropic runs on its own servers.

Instead of sending a single prompt and getting a single reply, you deploy a persistent agent that can remember past turns, call your custom tools, and keep working while your laptop is closed.

The bundle includes the Claude model, a control harness tuned for that model, and elastic infrastructure. You never see a container or VM; you POST a configuration and receive a secure agent URL.
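To make the contrast concrete, here is a toy, purely in-memory model of the two interaction styles. Nothing in it is Anthropic's API: `stateless_call`, `AgentSession`, and `fake_model` are hypothetical stand-ins. The only point is that a stateless call sees just the prompt it is handed, while a persistent session carries its history from turn to turn.

```python
from dataclasses import dataclass, field


def fake_model(messages: list[str]) -> str:
    """Hypothetical stand-in for a model: reports how much context it received."""
    return f"seen {len(messages)} message(s); last: {messages[-1]!r}"


def stateless_call(prompt: str) -> str:
    # A plain completion call: the model sees only this one prompt.
    return fake_model([prompt])


@dataclass
class AgentSession:
    # Hypothetical persistent session. In the managed service, this state
    # would live on the provider's servers rather than in your process.
    history: list[str] = field(default_factory=list)

    def send(self, user_message: str) -> str:
        self.history.append(user_message)
        reply = fake_model(self.history)
        self.history.append(reply)  # the agent's own turns are remembered too
        return reply


session = AgentSession()
session.send("Summarize the Q3 contract.")
print(session.send("Now flag any renewal clauses."))
print(stateless_call("Now flag any renewal clauses."))
```

The second session turn sees three messages (the first question, the first reply, and the follow-up), whereas the stateless call sees only one.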

How does pricing work - are there hidden fees for memory, tools, or idle time?

Billing is purely pay-as-you-go:

- Tokens - charged at the same rates as the standard Claude API (cache reads cost 10% of the base input price)

- Session runtime - $0.08 per active hour, metered to the millisecond. Time spent waiting for user input or tool callbacks, or sitting in a queue, is not billed

- Add-on tools - web search, for example, costs $10 per 1,000 queries, but only if you invoke it

There are no per-agent licenses, monthly platform fees, or surcharges for memory storage.
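The token side of the bill can be sketched the same way. The per-million-token prices in the example are placeholders, not quoted Anthropic rates; substitute the rates for whichever Claude model you use. The only rule taken from this article is that cache reads are billed at 10% of the input price. Cache-write surcharges and other modifiers are deliberately left out.

```python
CACHE_READ_MULTIPLIER = 0.10  # cache reads cost 10% of the base input price


def token_cost(input_tokens: int, cached_input_tokens: int, output_tokens: int,
               input_price_per_mtok: float, output_price_per_mtok: float) -> float:
    """USD cost of a workload's tokens, with prices given per million tokens.

    `input_tokens` counts uncached input only; `cached_input_tokens` counts
    cache reads. Cache-write pricing is not modelled in this sketch.
    """
    per_tok_in = input_price_per_mtok / 1_000_000
    per_tok_out = output_price_per_mtok / 1_000_000
    return (input_tokens * per_tok_in
            + cached_input_tokens * per_tok_in * CACHE_READ_MULTIPLIER
            + output_tokens * per_tok_out)


# Placeholder prices: $3 (input) and $15 (output) per million tokens.
cost = token_cost(1_000_000, 2_000_000, 200_000, 3.0, 15.0)
print(round(cost, 2))  # 6.6
```

In this example the 2 million cached tokens add only $0.60, versus $6.00 if they had been billed as fresh input, which is why agents that repeatedly re-read a long shared context benefit from caching.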

How quickly can a company roll an agent into production?

Speed is emerging as a core selling point.

According to industry reports, Rakuten had separate agents for product, sales, marketing, and finance live within a week each.

Every's Spiral configured a managed agent in one afternoon and pushed it to users the next day, suggesting that the hosted model removes the usual DevOps lead time.

Which real-world results have early customers published?

- Fountain - cut fulfillment-center staffing time from weeks to under 72 hours and doubled applicant conversions

- CRED - doubled development velocity across a 15-million-user fintech stack while maintaining production quality

- Enterprise legal team - shrank marketing-contract turnaround from 2-3 days to 24 hours and freed 14 lawyer-hours per week

- Artemis - reduced security-incident resolution time by 96%

These examples span retail, staffing, fintech, legal tech, and cybersecurity, illustrating cross-industry utility.

How do Managed Agents compare with rival platforms from Google, OpenAI and Microsoft?

According to industry benchmarks, Claude and Google Vertex AI Agent Engine both perform strongly on standard evaluation tasks, and OpenAI's offering is also competitive.

Cost-wise, Vertex AI Agent Engine appears more cost-effective than Claude on many standard tasks, meaning Claude carries a price premium.

Differentiators that may justify the gap include mid-stream steering, disconnect/reconnect sessions, automatic memory compaction, and millisecond-level billing precision.

Google remains cost-competitive and Microsoft offers free orchestration, but Anthropic leads on autonomous-agent features and keeps the stack model-agnostic for multi-cloud deployments.