Regulators Eye AI Pricing Power, Consider New Policy Levers

Serge Bulaev

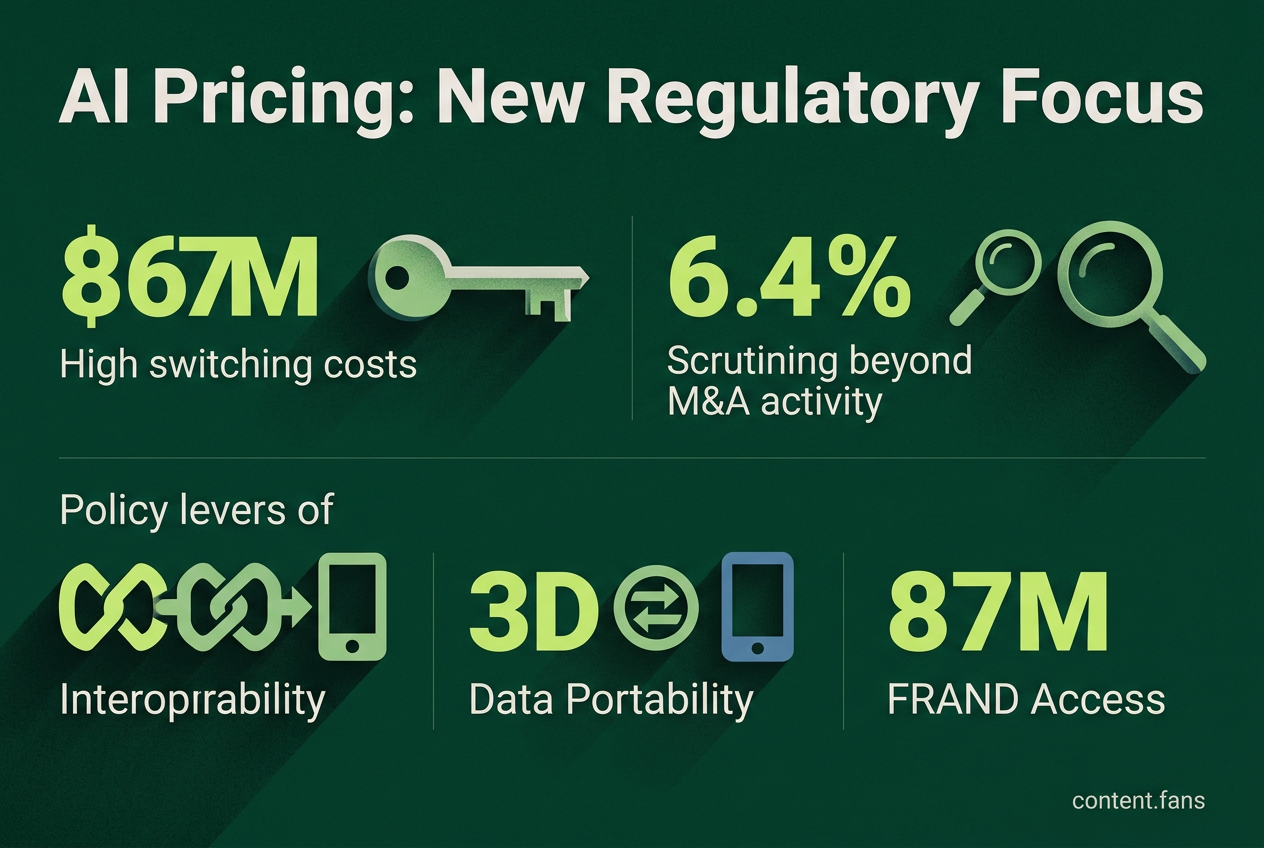

Regulators are paying closer attention to how AI vendors, like Anthropic, may be able to raise prices or change billing terms without losing customers. Some reports suggest that pricing models with surcharges and higher switching costs make it hard for users to move to other providers. New policy ideas being discussed include rules for easier switching, more price transparency, and fair access to important resources. There is debate about how much data scarcity really limits competition. Regulators may introduce new rules if they find strong evidence that current pricing practices hurt competition and consumers.

Regulatory and competition risks surrounding AI vendor pricing power are now at the center of antitrust discussions. This focus intensified after Anthropic ended flat-rate access to its Claude model, pushing heavy users to metered billing. A Dapta AI report noted that the move demonstrated the vendor's ability to change terms without losing customers.

AI pricing models often feature complex structures with significant premium surcharges, as analyzed by Kingy AI. Even when headline token prices do not change, vendors can increase value density, which raises switching costs. Developers who optimize their entire workflow, from prompts to financial controls, around one API find it expensive and difficult to migrate later.

Regulatory Thresholds for Antitrust Scrutiny

Antitrust enforcers are expanding their oversight beyond traditional merger reviews to address AI pricing power. While Hart-Scott-Rodino filing thresholds exclude many minority AI investments from automatic review (FTC Competition Matters), regulators are adapting. For instance, European authorities now use "call-in powers" to investigate smaller tech deals, and concerns are growing over vertical foreclosure related to essential resources like cloud compute and energy-efficient data centers. These actions signal a move toward scrutinizing market power even without a formal merger.

Regulators are scrutinizing AI pricing power by looking beyond M&A activity. They now focus on vendor lock-in, high switching costs, and control over key resources like compute and data. This allows them to investigate potentially anti-competitive pricing strategies even when deals fall below standard reporting thresholds.

Policy Tools Under Discussion

To address concerns about market concentration, policymakers are considering several new regulatory levers:

- Interoperability Mandates: Requiring open APIs for models and messaging gateways.

- Data Portability: Extending rights under the EU Data Act to simplify switching between providers and using several providers at once (multi-homing).

- Usage Transparency: Mandating standardized cost disclosures for better comparability.

- Advance Notification: Requiring advance notice of exclusive contracts related to chips or cloud infrastructure.

- FRAND Access: Ensuring premium features (like context length or speed) are available on fair, reasonable, and non-discriminatory terms.

These tools aim to reshape the structure of the market rather than penalize conduct after the fact. For example, the Digital Markets Act (DMA) already prohibits gatekeepers from self-preferencing and requires them to let third-party AI assistants compete fairly. Similarly, the European Commission is considering compelling Google to share search data with rival AI tools, a shift from reactive fines to proactive structural remedies.

The role of data as a competitive barrier is still heavily debated. While industry reports identify significant barriers across the AI value chain, from training data to GPU access, others argue that data scarcity concerns are exaggerated and warn against premature regulation. This debate highlights the challenge for regulators: they must find clear evidence that market concentration harms consumers before intervening.

Vendor pricing strategies for enterprises reflect this tension. Anthropic's move from per-seat licenses to mandatory consumption pledges, for instance, helps stabilize its revenue but can increase the total cost for customers who misjudge their usage. Although tools like spend caps can mitigate this risk, they ultimately keep customers locked into a single vendor's ecosystem.
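The arithmetic behind that risk can be sketched in a few lines. The example below is a minimal illustration, assuming a hypothetical contract in which a fixed monthly commitment is billed whether or not it is used, and usage beyond the commitment is billed at the same list price. The function name and all figures are invented for illustration and do not reflect any vendor's actual terms.

```python
def effective_price_per_mtok(commit_usd: float,
                             list_price_per_mtok: float,
                             used_mtok: float) -> float:
    """Effective cost per million tokens under a minimum-spend commitment.

    Hypothetical contract model (an assumption, not any vendor's real terms):
    the customer pays the committed amount even if usage falls short, and
    pays list price once metered usage exceeds the commitment.
    """
    if used_mtok <= 0:
        raise ValueError("used_mtok must be positive")
    metered_cost = used_mtok * list_price_per_mtok
    billed = max(commit_usd, metered_cost)  # the commitment acts as a floor
    return billed / used_mtok


if __name__ == "__main__":
    # Illustrative figures only: $10,000 monthly commitment, $10 per million tokens.
    for used in (400, 1000, 1500):
        price = effective_price_per_mtok(10_000, 10.0, used)
        print(f"{used} Mtok used -> ${price:.2f} per Mtok")
```

With these assumed numbers, a customer who uses only 400 million tokens pays an effective $25 per million, 2.5 times the list price, even though the list price itself never changed.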

Precedents from the cloud computing industry offer a roadmap for potential regulatory action. When cloud providers charged high data egress fees, regulators proposed rules to enhance portability and simplify switching. Similar concepts are now being considered for large language models. The adoption of such rules will likely depend on whether regulators can prove that complex pricing schemes are designed to block competitors, rather than just to recover the substantial costs of training frontier AI models.

What makes AI pricing power different from traditional software monopolies?

AI pricing power stems from a combination of unique model performance and extremely high switching costs, creating a lock-in that traditional antitrust tools struggle to address. Unlike with standard software, a business cannot easily migrate its investment in a fine-tuned model; custom weights, prompt libraries, and filters are not portable. This allows vendors to raise the effective cost through mechanisms like mandatory consumption commitments, even if per-token prices seem stable.

Which factors could trigger US or EU intervention?

Intervention is no longer tied solely to high-value mergers. In the US, regulators are investigating below-threshold minority stakes, cloud credit deals, and exclusive data access as key areas of concern. In the EU, authorities are using "call-in" powers to review transactions of any size that could consolidate control over compute, data, or model distribution. A key red flag is any single entity controlling a significant portion of critical inputs, such as GPUs or specialized training data.

How can policy preserve innovation while curbing extraction?

Regulators are focusing on policies that lower switching friction rather than imposing direct price caps. Three key levers are:

- Interoperability Mandates: Requiring open APIs to allow services to work together.

- Data Portability & Escrow: Enabling customers to move their fine-tuned models and access base datasets on fair terms.

- Transparent Metering: Mandating clear, audited reporting of effective costs so customers can accurately compare vendors.

These measures are designed to foster competition on merit, similar to how interoperability helped drive down prices in the cloud infrastructure market.
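To make the transparent-metering idea concrete, here is a rough sketch of how a standardized disclosure could let a customer compare vendors on one reference workload. The schema, field names, and prices are all assumptions made for illustration; no regulator or vendor has published this format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceSheet:
    """Hypothetical disclosure schema; every field name is an assumption."""
    vendor: str
    input_per_mtok: float           # USD per million input tokens
    output_per_mtok: float          # USD per million output tokens
    long_context_multiplier: float  # surcharge factor for long-context requests


@dataclass(frozen=True)
class Workload:
    """Reference workload used to compare vendors on equal terms."""
    input_mtok: float
    output_mtok: float
    long_context_share: float  # fraction of traffic billed at the surcharge rate


def monthly_cost(sheet: PriceSheet, w: Workload) -> float:
    """Cost of the workload, blending base and surcharged traffic."""
    base = (w.input_mtok * sheet.input_per_mtok
            + w.output_mtok * sheet.output_per_mtok)
    blend = (1 - w.long_context_share) \
        + w.long_context_share * sheet.long_context_multiplier
    return base * blend


if __name__ == "__main__":
    # Invented prices: vendor A has the lower headline rate but a 2x surcharge.
    vendor_a = PriceSheet("A", 3.0, 15.0, 2.0)
    vendor_b = PriceSheet("B", 4.0, 16.0, 1.0)
    workload = Workload(input_mtok=100, output_mtok=20, long_context_share=0.5)
    for sheet in (vendor_a, vendor_b):
        print(sheet.vendor, monthly_cost(sheet, workload))
```

In this invented scenario the vendor with the lower headline price costs $900 for the workload against $720 for its rival, which is the kind of gap a standardized disclosure would expose.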

Are there ready-made analogies from cloud or platform regulation?

Yes, several regulatory precedents exist. The EU's Data Act provides a framework for cloud provider switching that is now being adapted for AI. German interoperability orders for AWS and Azure serve as a model for ensuring equal API access. Furthermore, remedies like those in the Google Shopping case, which mandated non-discriminatory access, are seen as a template for creating "model gardens" where third-party LLMs can compete fairly. The key is translating these ideas into specific technical metrics.

What should boards do before raising the next AI price list?

Boards should take proactive steps to mitigate regulatory risk. This includes mapping dependencies on critical inputs like compute and data to identify any concerning partner concentration levels. It is also wise to prepare a public technical appendix for data portability, run effective-price simulations to avoid billing surprises, and grandfather existing customers when retiring pricing tiers. Engaging early with regulators and offering voluntary interoperability commitments can also significantly de-risk pricing changes.
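One way to start the dependency-mapping exercise is to score how concentrated spending on a critical input is. The sketch below uses the Herfindahl-Hirschman Index (HHI), the sum of squared percentage shares, which competition authorities use to measure market concentration. Applying it to a single company's supplier spend is an assumption made here for illustration, not a regulatory test, and the supplier names and figures are invented.

```python
def concentration_report(spend_by_supplier: dict[str, float]) -> tuple[float, str, float]:
    """Return (HHI, largest supplier, largest supplier's share in percent).

    HHI is the sum of squared percentage shares and ranges from near 0
    (many small suppliers) to 10,000 (a single supplier).
    """
    total = sum(spend_by_supplier.values())
    if total <= 0:
        raise ValueError("total spend must be positive")
    shares = {name: 100 * spend / total
              for name, spend in spend_by_supplier.items()}
    hhi = sum(share ** 2 for share in shares.values())
    top = max(shares, key=shares.get)
    return hhi, top, shares[top]


if __name__ == "__main__":
    # Invented compute spend, in thousands of USD per year.
    compute_spend = {"cloud_a": 70, "cloud_b": 20, "cloud_c": 10}
    hhi, top, top_share = concentration_report(compute_spend)
    print(f"HHI={hhi:.0f}, largest dependency: {top} ({top_share:.0f}%)")
```

Here one supplier accounts for 70% of spend and the index comes to 5,400. The same function can be run separately for compute, data, and model-distribution partners to see where dependence is heaviest.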