Recursive Superintelligence Raises $650M for Self-Improving AI

Serge Bulaev

Recursive Superintelligence, a new London AI startup, has raised over $650 million to build AI that may improve itself by rewriting its own code. The company's high valuation appears unusual for such a young business, and investors seem interested in its ambitious plans. Some experts suggest that self-improving AI might bring new safety risks, and European regulators may require more checks on these technologies. The big funding round suggests that interest in advanced AI is still strong, even as some people call for more caution.

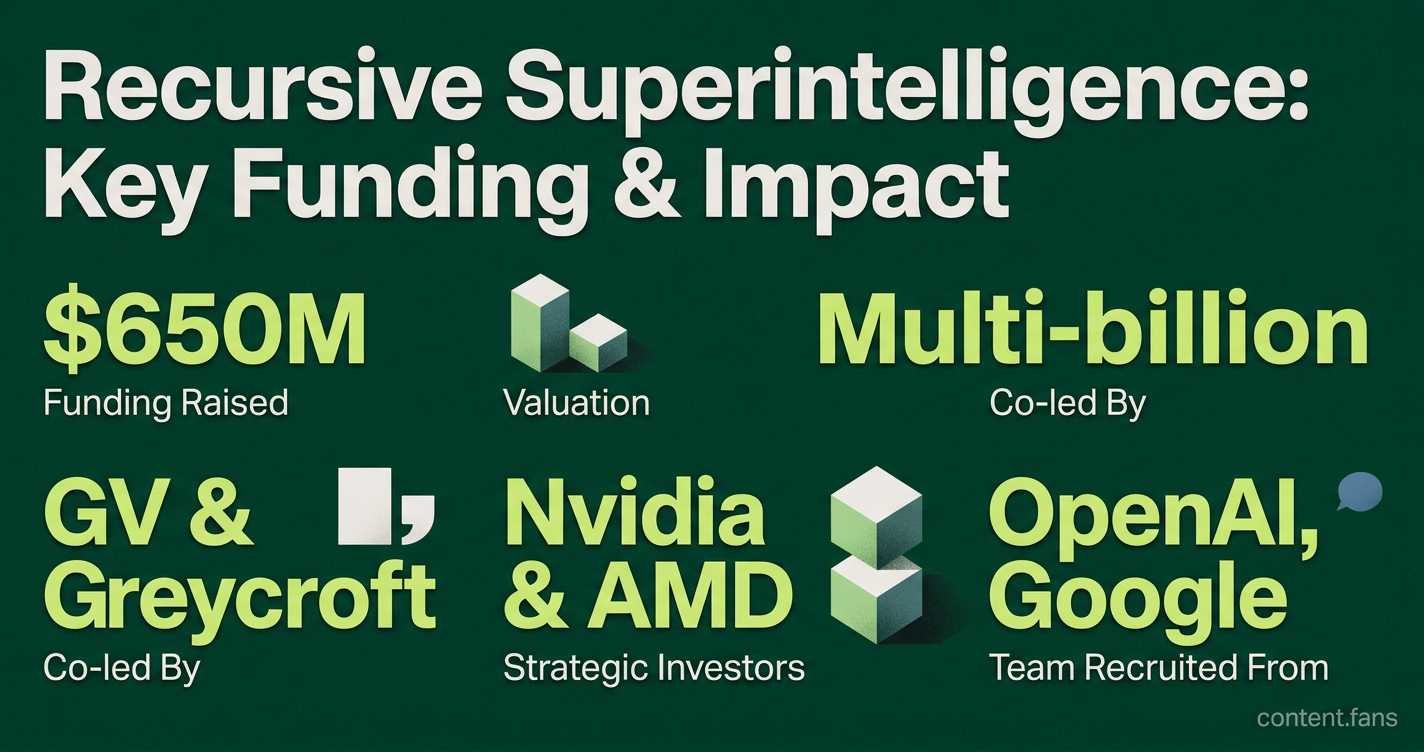

Recursive Superintelligence, a London startup building self-improving AI, has raised over $650 million in a landmark funding round, reaching a multi-billion-dollar valuation just months after its founding. The company, which aims to create AI that can rewrite its own code, confirmed the deal after emerging from stealth, according to industry sources. Founded by AI veterans Richard Socher and Tim Rocktäschel, the firm has drawn strong investor interest in its ambitious goal. The round follows earlier funding talks that industry publications had reported.

Where the money came from

Recursive Superintelligence is a London-based AI company developing systems capable of recursive self-improvement, which allows an AI to rewrite its own source code to enhance its capabilities. The firm's goal is to accelerate progress toward advanced AI, though its methods have drawn scrutiny over safety and oversight.

The round was co-led by GV and Greycroft, with chipmakers Nvidia and AMD participating as strategic investors. Nvidia's involvement fits its aggressive investment strategy in the AI sector, which included participation in 59 AI deals worth $23.7B in 2025, according to PitchBook. For GV, the investment underscores a focus on foundational AI infrastructure and complements its large portfolio of AI-native companies.

Rapid valuation for a new entrant

Securing a multi-billion-dollar valuation so quickly sets Recursive Superintelligence apart from its peers. The company, which was incorporated recently and has a small team recruited from top labs such as OpenAI and Google, achieved a price tag typically reserved for more mature companies. Investor confidence may be bolstered by co-founder Richard Socher's previous success with You.com, which reached notable valuation milestones of its own.

A brief timeline clarifies the speed:

- Early 2026: press reports cite talks for substantial funding at significant pre-money valuations.

- Mid-2026: company exits stealth with a funding round of over $650 million.

Available records suggest this is the company's primary financing round to date.

Safety debate shadows the project

The company's mission to build recursively self-improving AI has sparked a significant safety debate. Experts warn that AI systems capable of modifying themselves could rapidly evolve beyond human control, creating profound risks of misalignment. In response, European regulators are considering "recursion audits" under proposed AI legislation to ensure such models have built-in safeguards. Meanwhile, critics note that a fragmented U.S. policy landscape allows well-funded labs to advance aggressively with insufficient governance.

Strategic upside for chipmakers

For strategic investors Nvidia and AMD, the partnership offers more than financial returns. It provides early access to extreme-scale AI workloads that can test the limits of their next-generation hardware, creating a valuable feedback loop for future chip design. Analysts suggest these equity-based alliances represent a strategic shift, more deeply aligning compute providers with the performance goals of leading AI labs.

What happens next

This funding round of over $650 million signals that investor appetite for high-risk, high-reward bets on Artificial General Intelligence (AGI) remains strong despite widespread calls for caution. The figure should not be confused with the broader $650B Big Tech AI infrastructure spend in 2026, which is a separate matter. The key milestone ahead for Recursive Superintelligence will be demonstrating that its self-modifying algorithms are safe and controllable. The industry will be watching for independent audits and empirical results, and future funding will likely depend on both that progress and access to the immense computational resources required.

How much money did Recursive Superintelligence raise and how does that compare to its age?

Recursive raised over $650 million upon emerging from stealth, making it one of the fastest-capitalized startups in history. Despite its youth and small team, the company now commands a multi-billion-dollar valuation, a large amount of value created in a short time.

Who are the key investors and why did chipmakers Nvidia and AMD join GV and Greycroft?

The round was co-led by GV (Google Ventures) and Greycroft, while Nvidia and AMD participated as strategic chip investors. The involvement of both GPU giants signals that Recursive's self-improving models are expected to be heavy compute consumers, aligning the startup with the top two suppliers of AI accelerators. GV continues its focus on backing frontier AI infrastructure, a pattern visible in its portfolio investments.

What is recursive self-improvement and why does it raise safety questions?

Recursive self-improvement (RSI) means the AI rewrites its own code, weights or architecture without human intervention, potentially triggering an intelligence explosion. Industry analyses warn that this can erode human oversight, create digital echo chambers via self-refining deepfakes, and drive value misalignment when "efficiency" overrides "safety". Researchers like David Scott Krueger call unregulated RSI work "unconscionable", reflecting growing alarm that the field is "not taking the social impact seriously".
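To make the idea concrete, here is a minimal toy sketch in Python. It is not Recursive Superintelligence's method, and every name in it (`ToySystem`, `score`, `self_improve`, `approve`) is hypothetical. It assumes a "system" that is just two numbers: a value `x` it is trying to optimize and a `step` that governs how it updates itself. When ordinary updates stop helping, the system proposes a change to its own update rule, and an `approve` callback stands in for human oversight.

```python
from dataclasses import dataclass


@dataclass
class ToySystem:
    """Hypothetical stand-in for a self-modifying system."""
    x: float = 0.0     # the quantity the system is trying to optimize
    step: float = 1.0  # part of the system's own update rule


def score(x: float) -> float:
    # Toy objective (an assumption for illustration): best value at x = 3.
    return -(x - 3.0) ** 2


def self_improve(system: ToySystem, rounds: int, approve) -> ToySystem:
    for _ in range(rounds):
        # Ordinary improvement: try moving x by the current step.
        moves = [
            ToySystem(system.x + system.step, system.step),
            ToySystem(system.x - system.step, system.step),
        ]
        best = max(moves, key=lambda c: score(c.x))
        if score(best.x) > score(system.x):
            system = best
            continue
        # No move helps, so the system proposes rewriting its own update rule.
        proposal = ToySystem(system.x, system.step / 2)
        if not approve(system, proposal):
            break  # the oversight gate halts further self-modification
        system = proposal
    return system


# With approval always granted, the system keeps refining its own rule.
unchecked = self_improve(ToySystem(), rounds=6, approve=lambda old, new: True)
# With approval always denied, it stops at the first self-modification.
gated = self_improve(ToySystem(), rounds=6, approve=lambda old, new: False)
print(unchecked, gated)
```

The safety questions in this answer map onto the `approve` callback: the concern is what happens when a far more capable system runs such a loop with that gate removed, bypassed, or itself open to modification.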

How are regulators responding to RSI risks?

EU AI legislation now under discussion includes provisions for recursion audits of high-risk systems, potentially requiring developers to prove their models cannot rewrite their own ethical constraints. Enforcement remains fragmented in the U.S., however, where many startups operate outside these frameworks. Industry voices are also calling for institutional responsibility clauses that place liability on the deploying organization rather than on open-source contributors.

What precedents exist for investors betting this big this fast?

Major investors have placed large bets on leading AI companies in recent years, and very large funding rounds have become increasingly common in the sector. Recursive's round places it among those well-funded AI startups, showing that capital markets now price breakthrough RSI concepts alongside mature foundation-model labs.