New AI Compliance Checklist Secures Regulated Workflows for Hospitals, Brokerages

Serge Bulaev

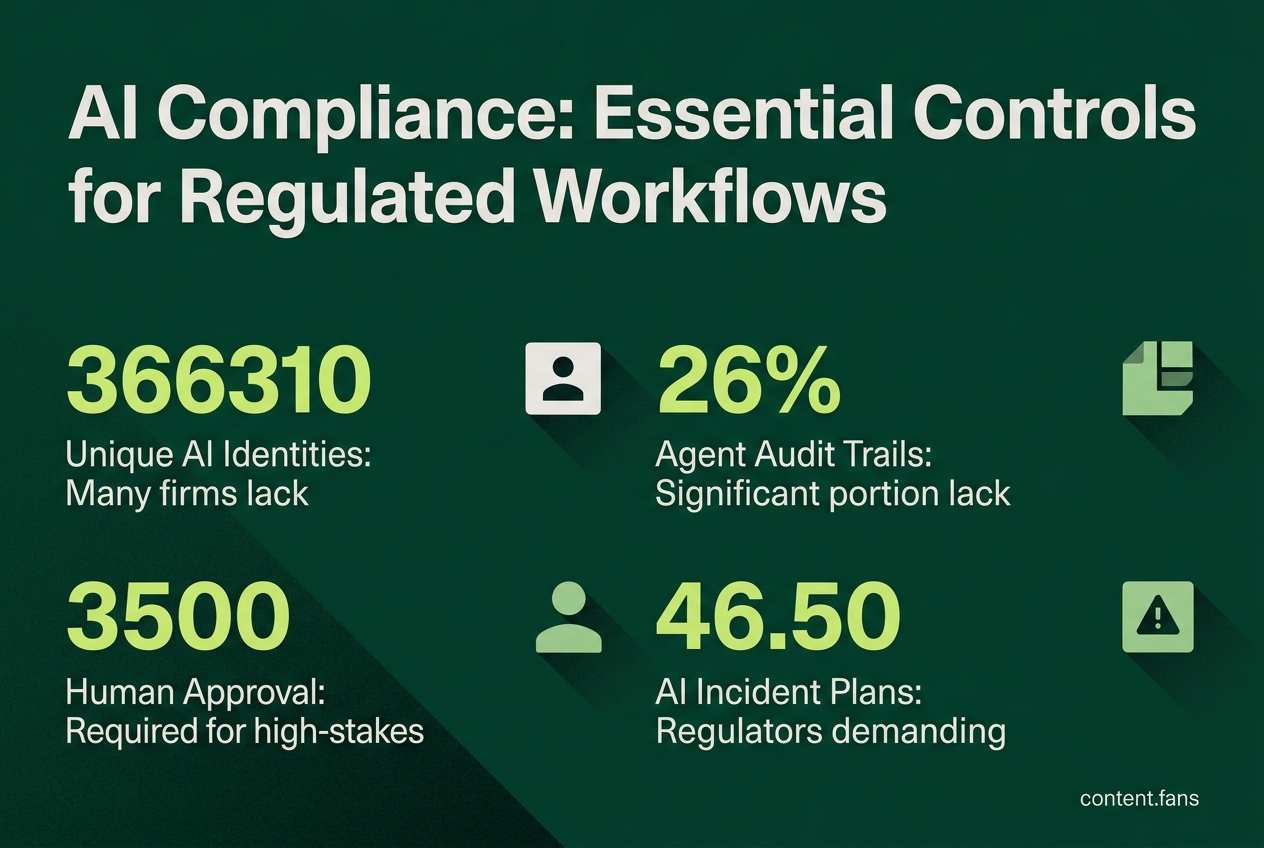

The new AI compliance checklist may help hospitals, brokerages, and insurers meet security and privacy rules when deploying AI. It recommends giving each AI agent its own identity, keeping detailed records of what agents do, and limiting the data they can access. It also calls for human review of risky actions and for incident response plans that let companies contain AI problems quickly. The playbook appears to offer a useful starting point, but it may not address every risk regulators are examining.

Using an AI compliance checklist is now essential for deploying artificial intelligence in regulated workflows. Hospitals, brokerages, and insurers must navigate a complex web of federal, state, and industry-specific security and privacy rules that can halt deployments if not managed proactively. The controls below, cited in sector guidance and emerging standards, help teams satisfy auditors before code reaches production.

Identity, Privilege, and Audit

An effective AI compliance checklist outlines key controls for AI agents in regulated fields. It emphasizes unique agent identities, strict access permissions, comprehensive audit logs, data minimization practices, clear consent mechanisms, and mandatory human review for critical decisions to satisfy auditors and regulators.

Every autonomous agent must be treated as a unique identity. Industry reports indicate that many firms do not issue unique credentials to AI agents, so agents often run on shared or inherited credentials and end up over-permissioned. To mitigate this, apply granular role-based or attribute-based access control (RBAC/ABAC), restrict tool access with allowlists, scope tokens to the task at hand, and expire them when the task ends. Every action an agent performs must be recorded in an immutable audit trail detailing who, what, when, where, and why. Industry reports indicate that a significant portion of organizations lack any agent audit trail, creating a compliance gap for SOC 2 or HIPAA.
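The identity and least-privilege controls can be illustrated with a minimal Python sketch. It assumes a hypothetical in-process role-to-tool map (`ROLE_TOOLS`), and the names `AgentCredential` and `issue_credential` are invented for this example. The sketch shows three things: one credential per agent, a tool allowlist derived from an RBAC role, and a token whose lifetime is capped at the task duration. A production system would delegate this to an identity provider or secrets manager rather than mint tokens itself.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import FrozenSet, Optional

# Hypothetical role-to-tool map; a real system would load this from its IAM or policy store.
ROLE_TOOLS = {
    "claims-reader": frozenset({"read_claim", "read_policy"}),
    "claims-adjuster": frozenset({"read_claim", "read_policy", "draft_decision"}),
}


@dataclass(frozen=True)
class AgentCredential:
    agent_id: str                  # unique per agent; never a shared human account
    role: str                      # RBAC role that determines the tool allowlist
    allowed_tools: FrozenSet[str]
    expires_at: float              # epoch seconds; the token dies with the task
    token: str = field(default_factory=lambda: secrets.token_urlsafe(32), repr=False)

    def permits(self, tool: str, now: Optional[float] = None) -> bool:
        """True only if the token is unexpired and the tool is on the allowlist."""
        now = time.time() if now is None else now
        return now < self.expires_at and tool in self.allowed_tools


def issue_credential(agent_id: str, role: str, task_seconds: int,
                     now: Optional[float] = None) -> AgentCredential:
    """Mint a credential scoped to the role's tools and limited to the task duration."""
    if role not in ROLE_TOOLS:
        raise ValueError(f"unknown role: {role}")
    now = time.time() if now is None else now
    return AgentCredential(agent_id, role, ROLE_TOOLS[role], now + task_seconds)
```

Passing `now` explicitly keeps the checks testable. In practice, the agent runtime would call `permits` before every tool invocation.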

Data Minimization and Consent Controls

Healthcare agents handling protected health information (PHI) are bound by HIPAA's minimum necessary standard. In practice, this means limiting the data columns, rows, and retention periods an agent can access by default. State law adds further constraints. Several states mandate real-time disclosure when AI interacts with patients, and some require disclaimers on AI-generated messages. Others prohibit therapeutic decisions without licensed professional oversight. To comply with TCPA and FTC rules, embed dynamic consent prompts and provide clear opt-out paths.
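Data minimization and consent can be enforced at the point where records reach the agent. The sketch below assumes a hypothetical purpose-to-field map (`MINIMUM_FIELDS`), placeholder disclaimer wording, and an illustrative `Consent` record. The field lists and message text do not state what HIPAA, state disclosure laws, or the TCPA actually require. They show one pattern: an agent gets no data and sends no outreach unless a rule explicitly allows it. Only column-level minimization is shown; row filters and retention limits would follow the same default-deny idea.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical purpose-to-field map: each task sees only the columns it needs.
MINIMUM_FIELDS = {
    "appointment_reminder": {"patient_id", "first_name", "appointment_time"},
    "billing_inquiry": {"patient_id", "balance_due"},
}

# Placeholder wording; the required text depends on the applicable state law.
AI_DISCLAIMER = "This message was generated with the help of AI."


def minimize(record: dict, purpose: str) -> dict:
    """Keep only fields approved for this purpose; an unknown purpose gets nothing."""
    allowed = MINIMUM_FIELDS.get(purpose, set())
    return {k: v for k, v in record.items() if k in allowed}


@dataclass
class Consent:
    messaging_opt_in: bool
    opted_out: bool = False


def prepare_message(body: str, consent: Consent) -> Optional[str]:
    """Return None (do not send) without consent; otherwise append disclosure and opt-out path."""
    if not consent.messaging_opt_in or consent.opted_out:
        return None
    return f"{body}\n\n{AI_DISCLAIMER} Reply STOP to opt out."
```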

Human Approval Gates and Validation

High-stakes actions, such as clinical recommendations, trade executions, or insurance coverage denials, demand human-in-the-loop checkpoints. Industry standards treat containment boundaries and action logging as foundational pillars. Risk teams must implement pre-dispatch policy checks that validate every tool call against an approved capabilities list before execution.
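A human-in-the-loop checkpoint can be as simple as a queue that holds high-stakes actions until a named reviewer approves or rejects them. This Python sketch assumes a hypothetical `HIGH_STAKES` set of action types and an in-memory queue. A real deployment would persist the queue, authenticate reviewers, and write each decision to the audit trail.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Status(Enum):
    AUTO_APPROVED = "auto_approved"    # low-risk: may proceed without review
    PENDING_REVIEW = "pending_review"  # held until a human decides
    APPROVED = "approved"
    REJECTED = "rejected"


# Hypothetical set of action types that always require a human decision.
HIGH_STAKES = {"clinical_recommendation", "trade_execution", "coverage_denial"}


@dataclass
class Action:
    kind: str
    payload: dict
    status: Optional[Status] = None
    approver: Optional[str] = None


@dataclass
class ApprovalGate:
    queue: List[Action] = field(default_factory=list)

    def submit(self, action: Action) -> Status:
        if action.kind in HIGH_STAKES:
            action.status = Status.PENDING_REVIEW
            self.queue.append(action)
        else:
            action.status = Status.AUTO_APPROVED
        return action.status

    def review(self, action: Action, approver: str, approve: bool) -> Status:
        # Compare by identity so two look-alike actions are never confused.
        if not any(a is action for a in self.queue):
            raise ValueError("action is not awaiting review")
        self.queue = [a for a in self.queue if a is not action]
        action.approver = approver  # record who made the call, for the audit trail
        action.status = Status.APPROVED if approve else Status.REJECTED
        return action.status
```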

Incident Response Readiness

During audits, regulators increasingly ask to see AI-specific incident response plans. The IAPP outlines traditional incident response stages adapted for AI (preparation, identification, containment, etc.). It also calls for clear roles across engineering and legal teams and for jurisdiction-specific notification timelines. Industry experts recommend regular tabletop exercises to rehearse detection, containment, and recovery. Comprehensive logs speed up forensic analysis and help ensure timely disclosures.

Security & Compliance Checklist for Agent-Driven Regulated Workflows

- Unique identity per agent with granular least-privilege scopes

- Immutable audit logging of inputs, tool calls, reasoning, and outputs

- Data minimization aligned with sector rules like HIPAA and GLBA

- Real-time consent and disclosure mechanisms for patient or consumer interactions

- Human approval gates for high-risk actions plus pre-dispatch policy enforcement

- Tested incident response playbook with kill switch procedures and regulator-ready templates
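The audit-logging item in the checklist above can be approximated with a hash chain. In a hash chain, each entry stores the SHA-256 digest of the previous entry, so later edits become detectable. This is a minimal Python sketch using only the standard library. The field names mirror the "who, what, when, where, and why" framing and are otherwise assumptions, not a standard schema.

```python
import hashlib
import json
import time
from typing import List, Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so identical entries always hash identically.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained log: each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries: List[dict] = []

    def append(self, who: str, what: str, where: str, why: str,
               inputs: dict, output: str, when: Optional[float] = None) -> dict:
        body = {
            "who": who, "what": what, "where": where, "why": why,
            "when": time.time() if when is None else when,
            "inputs": inputs, "output": output,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; an edited, reordered, or removed middle entry fails."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

Hash chaining makes the log tamper-evident, not immutable. Truncating the tail still verifies, so a real deployment would also anchor the latest hash outside the system and write entries to write-once (WORM) storage.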

Mapping to Finance and Healthcare Stacks

Finance teams must align AI deployments with SEC, FINRA, GLBA, and UDAAP requirements, while healthcare operators contend with HIPAA, FDA SaMD guidance, and numerous state laws. A field report from Callsphere advocates a vertical-first approach: build for the strictest rule set, then reuse components. As AI agents become more autonomous, continuous behavioral monitoring is replacing static annual audits - a trend highlighted in a LinkedIn governance analysis.
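Continuous behavioral monitoring can start with something simple: comparing each new reading of a metric, such as an agent's hourly claim-denial rate, against a rolling baseline. The sketch below is one illustration under stated assumptions. The window size, the z-score threshold of 3, the minimum history of 8 readings, and the choice to keep flagged values out of the baseline are all hypothetical tuning choices. A z-score is only one of many possible drift detectors.

```python
from collections import deque
from statistics import mean, pstdev


class BehaviorMonitor:
    """Flags a metric reading that drifts far from its rolling baseline."""

    def __init__(self, window: int = 24, z_threshold: float = 3.0, min_history: int = 8):
        self.history = deque(maxlen=window)  # most recent "normal" readings
        self.z_threshold = z_threshold
        self.min_history = min_history       # no verdicts until the baseline exists

    def observe(self, value: float) -> bool:
        """Return True if value is anomalous; only normal values join the baseline."""
        anomalous = False
        if len(self.history) >= self.min_history:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma == 0:
                anomalous = value != mu  # flat baseline: any change is a deviation
            else:
                anomalous = abs(value - mu) / sigma > self.z_threshold
        if not anomalous:
            self.history.append(value)
        return anomalous
```

Keeping anomalies out of the baseline stops a misbehaving agent from normalizing its own behavior. The trade-off is that a legitimate, permanent shift will keep alerting until someone resets the baseline.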

While this playbook may not address every potential regulatory concern, it provides a crucial shared baseline for engineering and compliance teams. Implementing these controls early minimizes rework, streamlines vendor reviews, and prevents promising AI initiatives from being derailed by regulatory scrutiny.

What minimum controls must be in place before a hospital or brokerage turns an AI agent loose on live data?

Deploying any autonomous agent requires five non-negotiables:

1. Immutable audit trail that captures every input, tool call and reasoning chain

2. Least-privilege identity unique to the agent (no shared human credentials)

3. Human-in-the-loop approval gate for clinical, trading or coverage decisions

4. Pre-dispatch policy engine that blocks actions exceeding the agent's scope

5. Vendor SLA with rapid containment capabilities for incident response

Miss any one of these and regulators now treat the system as uncontrolled AI, exposing the organization to standalone violations for the missing control itself.
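The five non-negotiables above can be checked mechanically before a deployment is promoted. This Python sketch assumes a hypothetical deployment manifest (`AgentDeployment`) whose field names are illustrative, not an industry schema. It returns every missing control, so a CI/CD pipeline could refuse to ship whenever the list is non-empty.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class AgentDeployment:
    """Hypothetical deployment manifest; the field names are illustrative, not a standard."""
    agent_id: str
    audit_log_immutable: bool
    uses_shared_human_credentials: bool
    policy_engine_enabled: bool
    vendor_containment_sla_hours: Optional[float]  # None means no SLA commitment
    high_stakes_actions: Set[str] = field(default_factory=set)
    human_gated_actions: Set[str] = field(default_factory=set)


def readiness_gaps(d: AgentDeployment) -> List[str]:
    """List every missing non-negotiable; an empty list means the manifest passes."""
    gaps = []
    if not d.audit_log_immutable:
        gaps.append("immutable audit trail")
    if d.uses_shared_human_credentials or not d.agent_id:
        gaps.append("unique agent identity (no shared human credentials)")
    ungated = d.high_stakes_actions - d.human_gated_actions
    if ungated:
        gaps.append("human approval gate for: " + ", ".join(sorted(ungated)))
    if not d.policy_engine_enabled:
        gaps.append("pre-dispatch policy engine")
    if d.vendor_containment_sla_hours is None:
        gaps.append("vendor SLA with containment commitment")
    return gaps
```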

Which regulators actually audit AI agents in healthcare and finance today?

- Healthcare: FDA (numerous AI devices cleared), FTC ("AI-washing" claims), FCC (TCPA on patient messages) plus many states with use-case-specific statutes

- Finance: SEC (investment-advice algorithms), FINRA (suitability), CFPB (UDAAP), state insurance commissioners (anti-discrimination)

States have enacted numerous new AI laws, many of them in healthcare, and many require real-time disclosure to patients or investors. Waiting for "federal pre-emption" is no longer a viable strategy.

How do you stop an agent that suddenly starts calling the wrong APIs or denying legitimate claims?

Runtime pre-dispatch guards are the highest-leverage control. Before the agent's request leaves the container, the guard checks the action against an allow-list, the user's entitlements, and a risk score. If the call is outside policy, the guard returns a hard stop and logs the attempt. Industry reports suggest this single control prevents the majority of over-privilege incidents without hurting latency.
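The three checks described above can be sketched as a small guard object in Python. The allow-list and entitlement maps, the `risk_score` supplied by an upstream scorer, and the 0.7 threshold are all assumptions for illustration. `evaluate` returns a reason string so the policy is easy to test. `check` is the hard stop the dispatcher would call before any request leaves the container.

```python
import logging
from dataclasses import dataclass
from typing import Dict, Optional, Set

logger = logging.getLogger("predispatch")


@dataclass(frozen=True)
class ToolCall:
    agent_id: str
    tool: str
    on_behalf_of: str   # end user whose entitlements bound the call
    risk_score: float   # 0.0-1.0 from an upstream scorer (assumed to exist)


class PolicyViolation(Exception):
    """Hard stop: the call must not leave the container."""


class PreDispatchGuard:
    def __init__(self, allowlist: Dict[str, Set[str]],
                 entitlements: Dict[str, Set[str]], max_risk: float = 0.7):
        self.allowlist = allowlist        # agent_id -> tools the agent may call
        self.entitlements = entitlements  # user -> tools that user may trigger
        self.max_risk = max_risk

    def evaluate(self, call: ToolCall) -> Optional[str]:
        """Return the reason a call is out of policy, or None if it may proceed."""
        if call.tool not in self.allowlist.get(call.agent_id, set()):
            return "tool not on agent allow-list"
        if call.tool not in self.entitlements.get(call.on_behalf_of, set()):
            return "user lacks entitlement"
        if call.risk_score > self.max_risk:
            return f"risk score {call.risk_score:.2f} exceeds {self.max_risk:.2f}"
        return None

    def check(self, call: ToolCall) -> None:
        """Call before dispatch; logs and raises on any violation."""
        reason = self.evaluate(call)
        if reason is not None:
            logger.warning("blocked %s -> %s: %s", call.agent_id, call.tool, reason)
            raise PolicyViolation(reason)
```

Unknown agents and unknown users fall back to an empty set, so the guard fails closed rather than open.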

What belongs in an AI-specific incident-response playbook for regulated entities?

Regulators typically look for these sections in audits:

- Preparation - defined harm tiers, rapid response capabilities, regular tabletop drills

- Detection - behavioral baselines, automated flagging, customer report channel

- Containment - isolate agent, revoke tokens, freeze downstream workflows

- Investigation - immutable logs, model version snapshot, prompt history

- Recovery - clean baseline redeploy, phased rollout with gates

- Notification - clock starts at detection, not confirmation; include vendor chain

Firms without this playbook risk missing short state-level breach-notification windows and may face separate fines for lacking an AI incident plan.
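The containment and notification stages can be wired together so that pulling the kill switch also starts the notification clock at the detection time. This Python sketch uses hypothetical names throughout (`AgentRuntime`, `kill_switch`, `NOTIFICATION_WINDOWS`). The two notification windows are placeholders, not real regulatory deadlines, which vary by jurisdiction and regime.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional, Set

# Placeholder windows only: real deadlines vary by jurisdiction and regime.
NOTIFICATION_WINDOWS = {
    "regulator_A": timedelta(hours=72),
    "state_B": timedelta(days=30),
}


@dataclass
class AgentRuntime:
    agent_id: str
    active: bool = True
    tokens: Set[str] = field(default_factory=set)
    frozen_workflows: Set[str] = field(default_factory=set)


@dataclass
class Incident:
    agent_id: str
    detected_at: datetime  # the notification clock starts here, not at confirmation
    actions: List[str] = field(default_factory=list)

    def deadlines(self) -> Dict[str, datetime]:
        return {name: self.detected_at + window
                for name, window in NOTIFICATION_WINDOWS.items()}


def kill_switch(rt: AgentRuntime, downstream: Set[str],
                detected_at: Optional[datetime] = None) -> Incident:
    """Containment first: isolate the agent, revoke its tokens, freeze downstream workflows."""
    if detected_at is None:
        detected_at = datetime.now(timezone.utc)
    incident = Incident(rt.agent_id, detected_at)
    rt.active = False
    incident.actions.append("isolated agent")
    revoked = len(rt.tokens)
    rt.tokens.clear()
    incident.actions.append(f"revoked {revoked} token(s)")
    rt.frozen_workflows |= downstream
    incident.actions.append(f"froze {len(downstream)} workflow(s)")
    return incident
```

The `actions` list records what containment did. It gives investigators and regulators a timeline to check against the audit trail.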

Can we rely on generic AI governance templates, or do we need vertical-specific policies?

Vertical-first is the recommended approach. Industry experts note that horizontal products force customers to map HIPAA, GLBA, SEC and state rules themselves, adding significant time to procurement. Instead:

- Pick one vertical (health, wealth, insurance)

- Embed the strictest data-flow controls at design time

- Ship with ready-to-sign BAAs, FINRA/SEC-compliant logging and state-mandated disclaimers

Products that ship compliant by default move through vendor risk reviews more efficiently and help organizations stay aligned with compliance requirements and governance best practices.
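"Build for the strictest rule set" can be made concrete by merging per-regime settings and keeping the most restrictive value for each control. The `RuleSet` fields and any values passed to it are hypothetical placeholders, not a summary of HIPAA, GLBA, or SEC requirements. The sketch also illustrates one design choice: when strictest-wins produces a contradiction, the merge raises an error rather than silently picking a side. One example is a record-keeping floor that exceeds a data-minimization ceiling.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class RuleSet:
    """Hypothetical per-regime settings; the values used with it are placeholders."""
    name: str
    min_retention_days: int        # record-keeping floor
    max_retention_days: int        # data-minimization ceiling
    ai_disclosure_required: bool
    human_review_required: bool
    allowed_fields: FrozenSet[str]


def strictest(*rules: RuleSet) -> RuleSet:
    """Merge rule sets by taking the most restrictive value for every control."""
    if not rules:
        raise ValueError("need at least one rule set")
    merged = RuleSet(
        name="+".join(r.name for r in rules),
        min_retention_days=max(r.min_retention_days for r in rules),
        max_retention_days=min(r.max_retention_days for r in rules),
        ai_disclosure_required=any(r.ai_disclosure_required for r in rules),
        human_review_required=any(r.human_review_required for r in rules),
        allowed_fields=frozenset.intersection(*(r.allowed_fields for r in rules)),
    )
    # Strictest-wins can contradict itself: a retention floor above the ceiling
    # is a conflict for counsel to resolve, not something code should guess at.
    if merged.min_retention_days > merged.max_retention_days:
        raise ValueError(f"retention conflict in {merged.name}")
    return merged
```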