DeepMind unveils 'Magic Pointer,' a Gemini-powered intent-aware cursor

Serge Bulaev

DeepMind has introduced 'Magic Pointer', a Gemini-powered cursor designed to infer what users want to do from what they point at and say. Early demos show it turning a date into a calendar invite, making a chart from a table, and finding an address in a paused video with a single click or a short command. The tool is still in limited testing and is expected to appear on Googlebook laptops later in 2026. Studies of similar AI tools suggest it could help people work faster by reducing the need to switch between apps. DeepMind has not announced a broader release date and is still gathering feedback and refining the system.

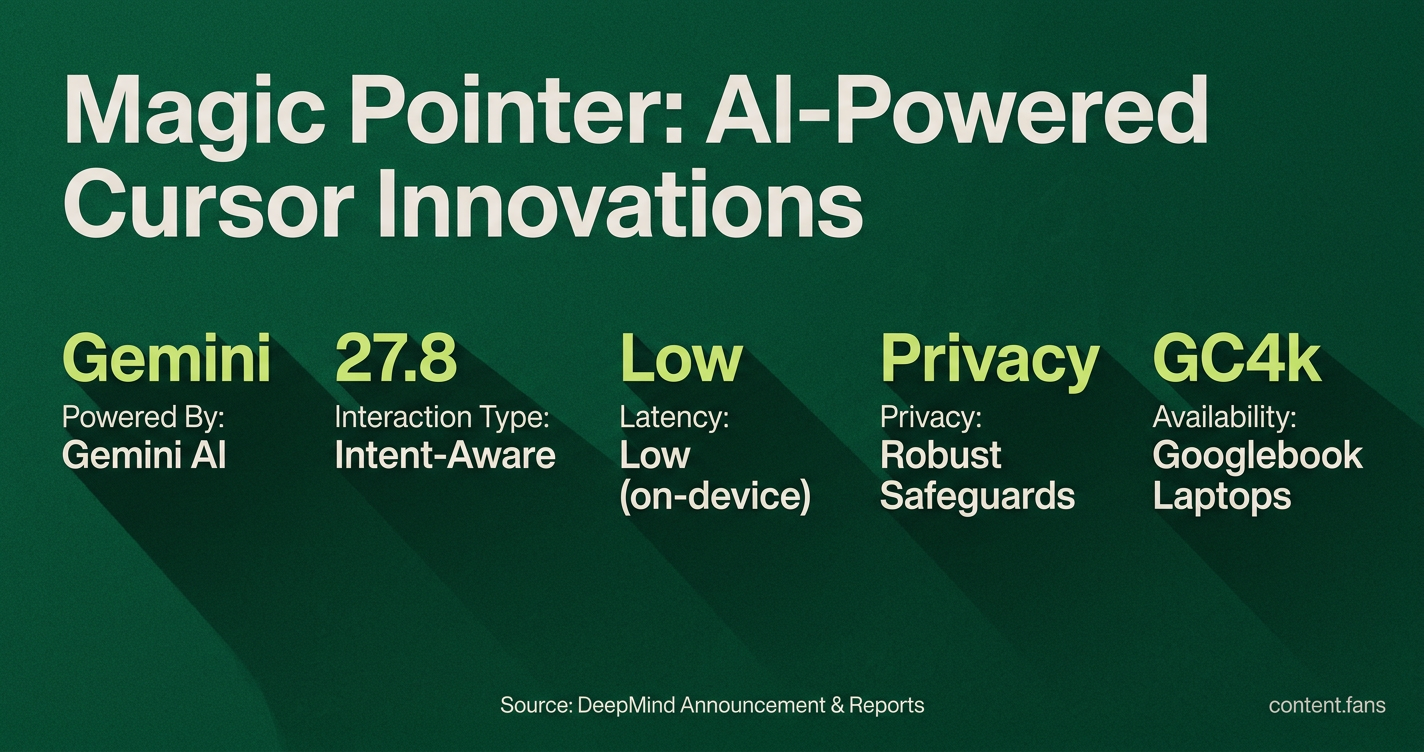

Google DeepMind has introduced the 'Magic Pointer,' a Gemini-powered, intent-aware cursor designed to infer what a user wants from visual and semantic context. Announced in May 2026, the prototype lets users trigger complex actions with a single click or voice command by interpreting on-screen elements, according to a 9to5Google report. By embedding AI at the cursor level, DeepMind aims to eliminate the friction of switching between applications. The project's research blog describes the goal as bringing the power of Gemini directly into any workflow.

How the Intent-Aware Pointer Works

The Magic Pointer is an experimental AI cursor that analyzes on-screen content like text, images, and layout. It combines this visual data with a user's verbal or typed command to create a context-rich prompt for the Gemini model, enabling complex, single-click actions within any application.
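To make the idea of a "context-rich prompt" concrete, here is a minimal Python sketch of how on-screen elements near the pointer might be combined with a user's command. It is not DeepMind's implementation: the `ScreenElement` type, the `build_prompt` function, the pixel `radius`, the `max_items` cap, and the prompt wording are all hypothetical, and the sketch assumes on-screen content has already been extracted into text with screen coordinates.

```python
from dataclasses import dataclass
from math import hypot


@dataclass
class ScreenElement:
    """Hypothetical on-screen element: its kind, extracted text, and center in pixels."""
    kind: str  # e.g. "text", "image", "table"
    text: str
    x: float
    y: float


def build_prompt(elements, cursor_x, cursor_y, hint, radius=200.0, max_items=5):
    """Combine elements near the cursor with the user's hint into one prompt string.

    The radius, max_items, and prompt layout are illustrative assumptions only.
    """
    # Score every element by its distance from the pointer.
    scored = [(hypot(e.x - cursor_x, e.y - cursor_y), e) for e in elements]
    # Keep elements within the radius, closest first, so the pointed-at item leads.
    nearby = sorted((p for p in scored if p[0] <= radius), key=lambda p: p[0])
    lines = [f"[{e.kind}] {e.text}" for _, e in nearby[:max_items]]
    context = "\n".join(lines) if lines else "(no nearby content)"
    return f"On-screen context near the pointer:\n{context}\n\nUser request: {hint}"


elements = [
    ScreenElement("text", "Dinner with Sam on 14 June 2026, 19:00", 120, 80),
    ScreenElement("image", "Company logo", 900, 40),
]
print(build_prompt(elements, 110, 90, "add this to my calendar"))
```

Ranking by distance is just one simple way to approximate "what the user is pointing at"; a real system would more likely use layout structure and the model's own visual understanding.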

The system operates by capturing pixels, text, and layout information near the cursor's position. This data is paired with a spoken or typed user hint to form a dynamic, internal prompt for Gemini. Early demonstrations in Google AI Studio showcase its ability to perform several common tasks:

- Turning a date inside an email into a calendar invitation

- Converting a pasted table into a bar chart

- Finding the street address for a restaurant shown in a paused video frame
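As an illustration of the first task, the sketch below turns a date found in email text into a minimal calendar event in the standard iCalendar (RFC 5545) format. It is a deliberately simplified stand-in: it uses a regular expression for ISO-style dates ("2026-06-14 at 12:30") rather than a Gemini model, and the function names, the 09:00 default time, the one-hour duration, and the `PRODID`/`UID` values are hypothetical. Nothing here reflects how Magic Pointer actually formats invitations.

```python
import re
import uuid
from datetime import datetime, timedelta, timezone

# Hypothetical pattern: an ISO date, optionally followed by "at HH:MM".
DATE_RE = re.compile(r"(\d{4})-(\d{2})-(\d{2})(?:\s+at\s+(\d{1,2}):(\d{2}))?")
ICS_FMT = "%Y%m%dT%H%M%S"


def escape_text(value):
    """Escape an iCalendar TEXT value (backslash, semicolon, comma, newline)."""
    return (value.replace("\\", "\\\\").replace(";", "\\;")
                 .replace(",", "\\,").replace("\n", "\\n"))


def email_date_to_ics(text, summary, duration_minutes=60):
    """Build a minimal iCalendar event from the first ISO date in `text`.

    Returns None if no valid date is found. Times are "floating" local times
    (no time zone), and 09:00 is assumed when the text gives no time.
    """
    m = DATE_RE.search(text)
    if m is None:
        return None
    hour = int(m[4]) if m[4] else 9
    minute = int(m[5]) if m[5] else 0
    try:
        start = datetime(int(m[1]), int(m[2]), int(m[3]), hour, minute)
    except ValueError:  # e.g. month 13 or hour 25
        return None
    end = start + timedelta(minutes=duration_minutes)
    lines = [
        "BEGIN:VCALENDAR",
        "VERSION:2.0",
        "PRODID:-//example//magic-pointer-sketch//EN",
        "BEGIN:VEVENT",
        f"UID:{uuid.uuid4()}@example.invalid",
        "DTSTAMP:" + datetime.now(timezone.utc).strftime(ICS_FMT) + "Z",
        "DTSTART:" + start.strftime(ICS_FMT),
        "DTEND:" + end.strftime(ICS_FMT),
        "SUMMARY:" + escape_text(summary),
        "END:VEVENT",
        "END:VCALENDAR",
    ]
    # RFC 5545 content lines are terminated by CRLF.
    return "\r\n".join(lines) + "\r\n"


ics = email_date_to_ics("Team lunch on 2026-06-14 at 12:30", "Team lunch")
print(ics)
```

The hard part in practice is the step this sketch skips: recognizing dates written in free-form language, which is where a model like Gemini would come in. Long summaries would also need RFC 5545 line folding, omitted here for brevity.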

These actions rely on on-device Gemini inference to keep latency low. Because the pointer can read whatever is on screen, engineers have emphasized that robust privacy safeguards and granular user permissions are central to the design.
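DeepMind has not published how its permission model works. The Python sketch below shows one hypothetical shape that "granular user permissions" could take: a per-application grant, checked before any capture, that separates reading text from capturing pixels and denies apps the user has not explicitly approved. The `Scope` levels, the `allowed_capture` function, and the app identifiers are all illustrative assumptions.

```python
from enum import Enum


class Scope(Enum):
    """Hypothetical capture scopes a user could grant per application."""
    NONE = 0             # pointer may not read this app at all
    TEXT = 1             # may read text under the pointer
    TEXT_AND_PIXELS = 2  # may also capture an image of the region


def allowed_capture(grants, app_id, wants_pixels):
    """Return True only if the user's grant for `app_id` covers the requested capture.

    Apps missing from `grants` default to Scope.NONE, so access is denied by default.
    """
    scope = grants.get(app_id, Scope.NONE)
    if wants_pixels:
        return scope is Scope.TEXT_AND_PIXELS
    return scope in (Scope.TEXT, Scope.TEXT_AND_PIXELS)


grants = {"mail": Scope.TEXT, "video": Scope.TEXT_AND_PIXELS}
print(allowed_capture(grants, "mail", wants_pixels=True))
```

Denying by default means a newly installed or sensitive app (a banking client, say) exposes nothing to the pointer until the user opts in.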

Availability and Release Timeline

The Magic Pointer prototype is currently in limited testing. Two public demos are available in AI Studio, and a controlled rollout has begun for "Gemini in Chrome," letting testers query highlighted webpage text directly. According to DeepMind's timeline, broader availability will come with the launch of new Googlebook laptops, which are expected to ship with Magic Pointer pre-installed "later this fall 2026."

A Shift Away From Prompt Boxes

The technology marks a significant shift away from traditional prompt boxes. Researchers describe this kind of interface as an "AI-mediated interaction surface," in which the cursor can "anticipate, suggest, and automate" actions by understanding its surrounding context. The Magic Pointer aims to bring this academic concept to mainstream users, replacing lengthy chat queries with short, direct commands such as "chart this" or "book dinner here."

Possible Productivity Effects

Enterprise studies of similar agentic tools hint at the size of the possible gains. A Larridin guide reported an average productivity increase of 30% for engineers using AI coding assistants, with some power users reporting gains of up to 80%. A Monday.com analysis of its work-management AI calculated a 346% ROI. Although these figures come from different products, they point to the value of reducing context-switching, the core problem Magic Pointer is designed to solve.
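The reported percentages are easier to read when translated into concrete terms. The arithmetic below assumes that ROI uses the common definition of net gain divided by cost, and that a "productivity increase" $p$ means more output per hour, so a fixed task takes $1/(1+p)$ as long; neither study's exact method is stated here. $G$ is the gross return, $C$ the cost, and the 10-hour task is a hypothetical example.

```latex
\text{ROI} = \frac{G - C}{C} = 3.46 \quad\Longrightarrow\quad G = 4.46\,C

t_{\text{new}} = \frac{t_{\text{old}}}{1 + p}:\qquad
\frac{10\ \text{h}}{1.30} \approx 7.7\ \text{h}\ (p = 0.30),\qquad
\frac{10\ \text{h}}{1.80} \approx 5.6\ \text{h}\ (p = 0.80)
```

Under these assumptions, a 346% ROI means every unit spent returned about 4.46 units in total, and even the average 30% gain trims roughly a quarter of the time from a fixed task.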

What Happens Next

While DeepMind has not announced a public release date beyond the Googlebook launch this fall, development continues. Current testers are providing crucial feedback on latency, privacy, and performance to help refine the on-screen parsing engine. Future research will likely focus on the model's ability to scale across multilingual content, complex data sets, and mixed-media environments.