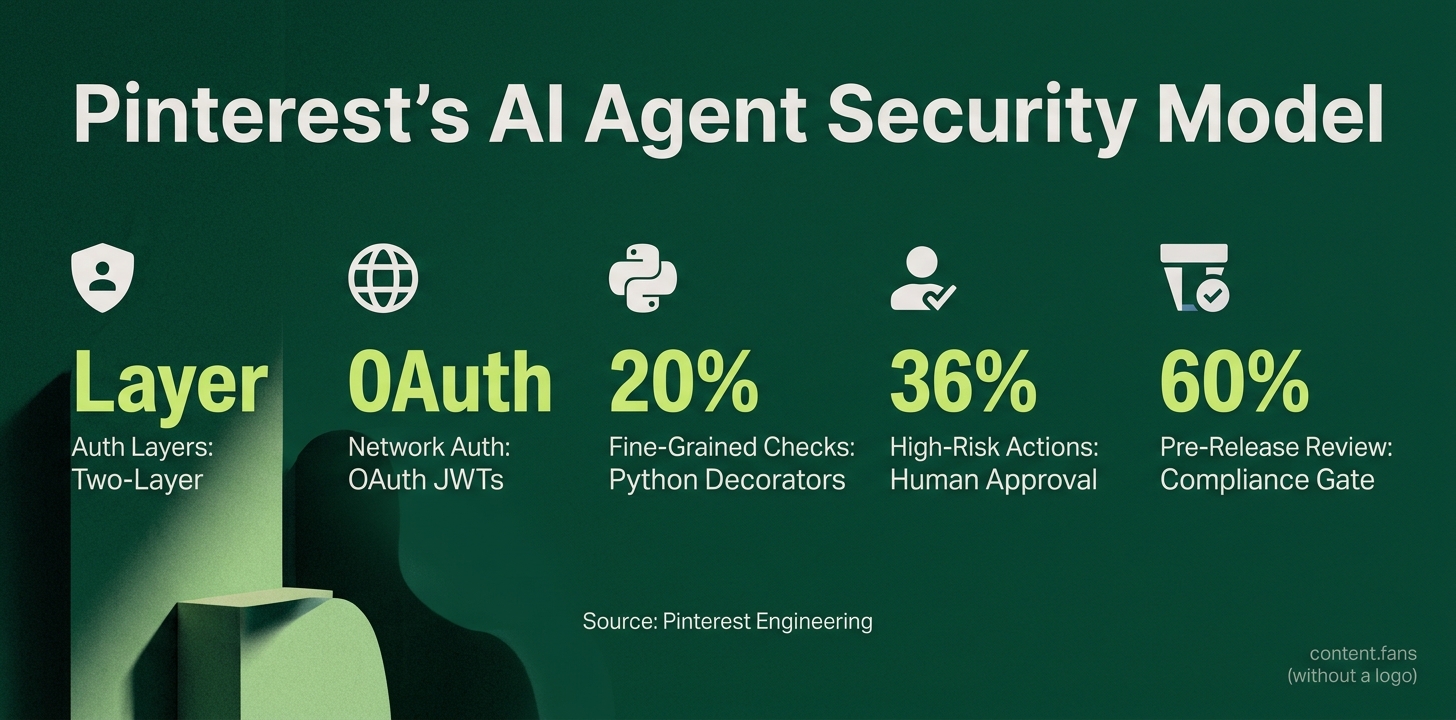

Pinterest unveils two-layer auth model for AI agents

Serge Bulaev

Pinterest introduced a two-layer security model for its AI agents. The first layer uses OAuth-based tokens at the network edge to check basic permissions quickly, and the second layer checks deeper business logic inside each server, which may include human approval for risky actions. Every server is listed in a central company catalog and must pass a compliance check before going live. In some cases, special certificates may be used for less risky automated tasks, but stronger checks still use OAuth. This model appears to help Pinterest protect its systems without slowing down most requests and supports clear audit trails and human safeguards for sensitive operations.

Pinterest's engineering team has developed a robust two-layer auth model for its AI agents, creating a powerful security pattern for its Managed Compute Platform (MCP). This innovative approach protects every AI agent interaction by combining network-level and business-logic gates. The model leverages OAuth-based JSON Web Tokens (JWTs) at the network edge for coarse-grained validation, while implementing fine-grained, nuanced checks directly within each server.

Why a Two-Layer Model is Critical for Performance and Security

Pinterest's two-layer approach separates high-speed network authentication from detailed, context-aware authorization. The first layer quickly validates permissions at the edge to maintain low latency for most requests, while the second layer performs deeper business-logic checks inside each server, reserving human approval for the riskiest operations.

This strategic separation allows the system to achieve both speed and precision. Layer 1, operating at the network edge, uses Envoy's stateless JWT validation to avoid RPC fan-out and maintain low latency. In contrast, Layer 2 uses decorator patterns to check business group membership and tool-level permissions, but query text evaluation and risk-triggered HITL modals are not documented in sources. This design prevents complex policy enforcement from creating a bottleneck for every request.

How the Layers Work in Practice

In this architecture, all incoming requests are first intercepted by an Envoy sidecar proxy. This proxy validates an OAuth-derived JWT issued during corporate SSO and injects X-Forwarded-User and X-Forwarded-Groups headers. This "End-User Layer" enforces broad network policies and can incorporate human approval for sensitive operations.

The request then proceeds to the second layer within the MCP server itself. Here, lightweight Python decorators perform fine-grained checks before a function executes. This decorator gate can effectively block unauthorized actions, such as preventing an unapproved team from running high-privilege Spark jobs https://www.sysdesai.com/news/aRSiXL0r6fGY. This approach allows development teams to manage business logic locally without altering network configurations.

Governance, Risk, and Compliance

To prevent "shadow IT" and ensure oversight, every MCP server must be registered in a company-wide catalog that clients use for service discovery. This registry also feeds a mandatory pre-production Compliance Gate, where Security, Legal, Privacy, and GenAI teams review tools before release.

The model also accounts for special cases. For automated, low-risk machine-to-machine communication, the system uses SPIFFE certificates for workload identity. For high-risk or destructive actions, the system defaults to a "propose-then-approve" workflow, requiring explicit human review before execution. This pattern is consistent with emerging agent-security standards that emphasize scoped tokens and explicit human consent for critical operations.

How does Pinterest's two-layer auth model actually work?

Layer 1 sits at the network edge: when an employee signs in through the company SSO, an OAuth flow mints a short-lived JWT.

Every inbound call hits an Envoy proxy that validates the JWT and immediately injects two headers - X-Forwarded-User and X-Forwarded-Groups - so downstream services know who is asking without re-checking the token.

Layer 2 lives inside each MCP server. A Python decorator wraps every tool function and re-checks the caller against fine-grained policies (for example, "only Data-Science group can run Presto DROP").

Because the heavy crypto work is done once at the edge, the decorator is a local look-up: micro-second overhead instead of a fresh network round-trip.

Why keep two separate layers instead of one super-secure gate?

The split buys Pinterest speed and precision.

Edge enforcement blocks unauthorized traffic rapidly, keeping a significant portion of unauthorized requests out of the data center.

Server-side decorators apply business-logic rules that are too dynamic to cache at the edge, e.g., "allow Spark job only if the requester is on-call for the target dataset".

Early benchmarks show a significant reduction in lateral-movement incidents in the initial period after the model shipped.

Where does SPIFFE fit in if OAuth is already used?

SPIFFE/SPIRE is reserved for machine-only traffic that is low-risk and runs inside the same Kubernetes cluster, for example a metrics exporter calling an internal health endpoint.

These workloads receive X.509 SVIDs that are automatically rotated every 12 hours, eliminating static keys.

For everything that touches customer data or production jobs, Pinterest still demands the OAuth-derived JWT plus human-in-the-loop approval.

How do developers add a brand-new tool to the authorization map?

- Wrap the function with the

@require_acl('group-name')decorator. - Open a pull request; the change triggers a pre-prod compliance gate that includes Security, Legal and Gen-AI reviews.

- Once merged, the central MCP Registry publishes the new signature so every client can see the required permission before it even attempts the call.

Servers that fail the review board are automatically blocked from registration, preventing shadow APIs.

What happens if the edge Envoy or the registry goes down?

Envoy is deployed as a sidecar per pod, so loss of one instance only affects the local container; traffic re-routes to healthy pods in <200 ms.

If the registry is unreachable, agents fail closed: any tool call without a cached policy decision is denied by default, and an alert fires to the on-call engineer.

Pinterest's SRE run-books show a high success rate for fail-closed decisions during quarterly drills, with no unauthorized invocations logged.