CFOs Adopt New Frameworks to Balance AI Costs and Productivity

Serge Bulaev

CFOs are adopting new rules to watch AI costs and keep productivity high. Surveys suggest that most finance leaders see AI as very important, but there is uncertainty about how to control spending and measure returns. Experts recommend clear policies, named owners for each AI system, and regular checks to avoid extra costs from unused models. Many companies now track AI spending closely and may use special profit and loss statements to understand the real costs. Ongoing monitoring and regular reviews appear to help avoid hidden expenses and keep AI projects useful.

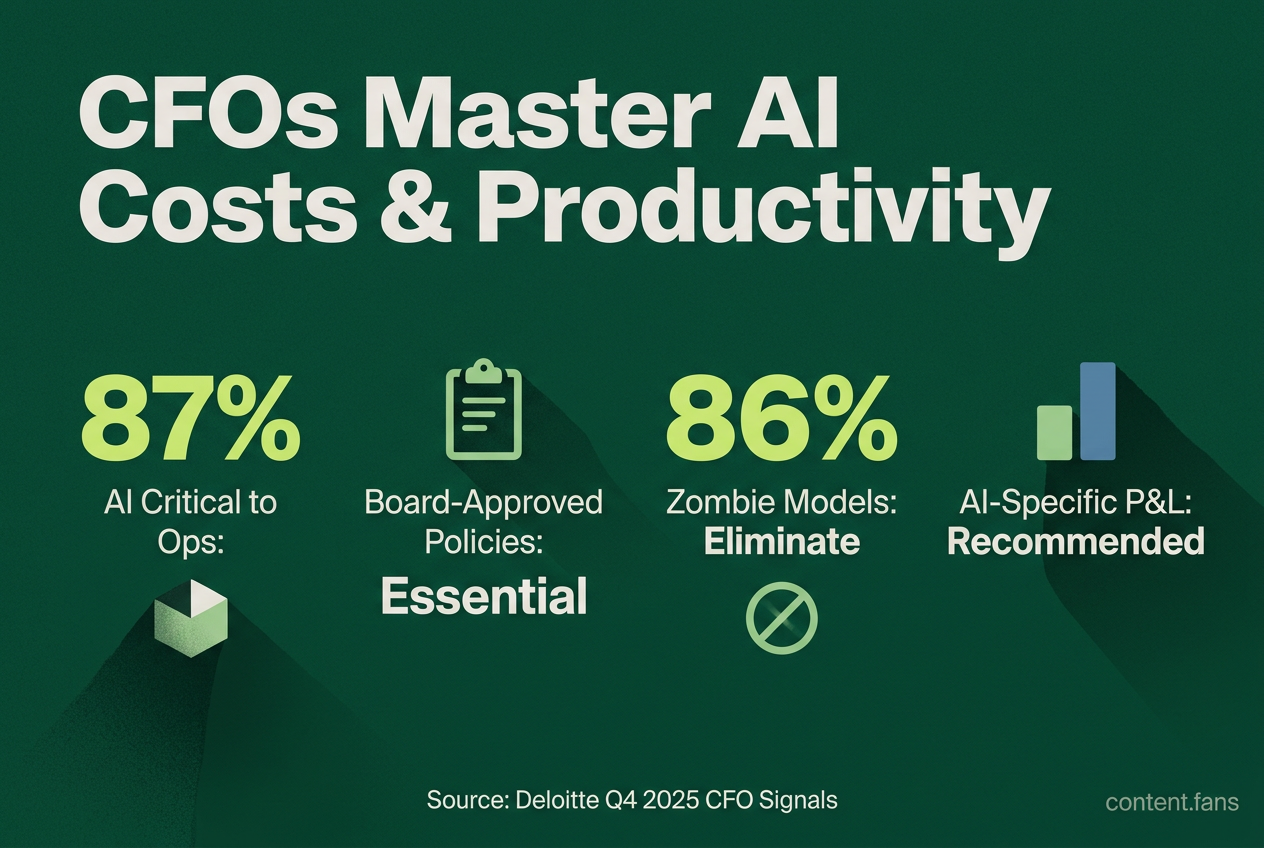

As AI transitions from experimental technology to a core business function, CFOs are adopting new frameworks to balance AI costs and productivity. Finance leaders now track AI model expenses with the same rigor as capital assets. This shift is driven by rising optimism about efficiency gains and an urgent need to formalize spending, measure returns, and govern AI projects effectively. Deloitte's Q4 2025 CFO Signals survey confirms this trend, with 87% of finance leaders rating AI as critical to operations.

Establish board-level guardrails before scaling

To manage AI spending, chief financial officers are implementing robust governance structures. This involves creating board-approved policies, assigning clear ownership for each AI system, inventorying all models to eliminate waste, and converting technical performance data into financial KPIs to accurately track ROI and control costs.

Effective AI governance begins with a concise, one-page policy outlining prohibited uses and escalation paths, as recommended by ClearPoint Strategy. This clarity prevents scope creep and simplifies audits. The next step is a thorough inventory of all AI systems; industry reports suggest that initial reviews often uncover significantly more models than expected, revealing substantial opportunities to retire "zombie" models that generate costs without value. Finally, assign a primary and backup owner to every model to ensure accountability for costs, compliance, and performance, preventing tasks from being orphaned.
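The inventory and ownership checks described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `ModelRecord` fields, the 100-inference "zombie" threshold, and the sample records are hypothetical and do not come from any cited framework.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModelRecord:
    """One row of a hypothetical AI model inventory."""
    name: str
    primary_owner: Optional[str]
    backup_owner: Optional[str]
    monthly_cost: float        # USD, illustrative
    monthly_inferences: int


def audit_inventory(models, min_inferences=100):
    """Return (names missing an owner, names that look like zombie models)."""
    unowned = [m.name for m in models
               if not m.primary_owner or not m.backup_owner]
    # Assumption: a "zombie" is any model that still costs money
    # but serves fewer than min_inferences requests per month.
    zombies = [m.name for m in models
               if m.monthly_cost > 0 and m.monthly_inferences < min_inferences]
    return unowned, zombies


# Made-up sample data for illustration only.
inventory = [
    ModelRecord("invoice-ocr", "a.chen", "b.ortiz", 4200.0, 310_000),
    ModelRecord("churn-v1", "c.patel", None, 1800.0, 12),
    ModelRecord("faq-bot", "d.kim", "e.ross", 950.0, 45_000),
]

unowned, zombies = audit_inventory(inventory)
print(unowned, zombies)  # ['churn-v1'] ['churn-v1']
```

In practice the threshold would be set per use case, since a low-volume model can still be valuable if each inference supports a large decision.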

Translate technical metrics into P&L language

After cataloging AI assets, finance and engineering must collaborate to define KPIs that translate technical performance into financial impact. Key metrics include:

- Cost per inference and its quarterly trend

- Incremental revenue or savings per automated task

- Margin delta per employee after deployment

These metrics should feed into dashboards linked to core financial statements like COGS or SG&A. Because AI spending is often distributed, many firms now create AI-specific profit and loss (P&L) statements to understand its true unit economics. Strategic procurement is also critical. While many CFOs are increasing tech budgets, they are also seeking targeted cost reductions by negotiating cloud committed-use discounts and embedding cost controls in vendor contracts.
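As a rough sketch of how the three KPIs listed above might be computed before they reach a dashboard, consider the following Python helpers. The function names and all figures are hypothetical, and the formulas are the simplest plausible definitions rather than a standard.

```python
def cost_per_inference(total_cost, inferences):
    """Total model spend for a period divided by inferences served."""
    if inferences <= 0:
        raise ValueError("inferences must be positive")
    return total_cost / inferences


def quarterly_trend(values):
    """Quarter-over-quarter fractional change for a series of KPI values."""
    return [(curr - prev) / prev for prev, curr in zip(values, values[1:])]


def savings_per_task(total_savings, automated_tasks):
    """Incremental revenue or savings attributed to each automated task."""
    if automated_tasks <= 0:
        raise ValueError("automated_tasks must be positive")
    return total_savings / automated_tasks


def margin_delta_per_employee(margin_before, margin_after, headcount):
    """Change in margin (USD) after deployment, spread across headcount."""
    if headcount <= 0:
        raise ValueError("headcount must be positive")
    return (margin_after - margin_before) / headcount


# Illustrative inputs: (quarterly spend in USD, inferences served).
quarters = [(12_000.0, 1_000_000), (13_500.0, 1_500_000), (14_000.0, 2_000_000)]
cpi = [cost_per_inference(cost, n) for cost, n in quarters]
trend = quarterly_trend(cpi)

print(cpi)    # cost per inference for each quarter
print(trend)  # negative values mean unit cost is falling
print(margin_delta_per_employee(2_000_000, 2_300_000, 150))  # 2000.0
```

Note that total spend rises every quarter in this example while cost per inference falls, which is why the unit metric and its trend matter more than the raw invoice.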

Gate scale decisions with scenario tests

To control expansion, every scaling decision should pass through a gated review with three potential outcomes:

- Optimize: Reduce model size, batch inferences, or use lower-cost GPUs if costs are rising without a performance benefit.

- Scale: Approve expansion only when the return on investment per headcount remains above a predetermined threshold.

- Hybrid: Pursue limited scaling while simultaneously investing in efficiency engineering.

This disciplined approach aligns with research showing that CFOs increasingly measure AI's value through long-term productivity gains, not just immediate cash returns. For example, industry reports indicate that companies are achieving significant savings by automating sourcing transactions, as noted by The CFO. Similarly, according to BCG, organizations are developing GenAI tools that deliver substantial value in short timeframes.

Monitor, retire, repeat

AI governance is a continuous cycle, not a one-time setup. Ongoing monitoring with automated drift detection and detailed audit logs is essential for protecting margins from hidden costs. When a model's performance degrades or usage patterns change unexpectedly, its designated owner must trigger a formal retraining or retirement workflow. This process is reinforced by recurring inventory reviews, held at least semi-annually, which use structured sunset checklists to decommission underperforming models and prevent indefinite costs that erode ROI.
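A minimal sketch of the trigger described above, assuming the model owner has a baseline and a recent window of some quality score (accuracy here). The `needs_review` name and the 0.05 tolerance are hypothetical, and comparing window means is a deliberately simplified stand-in for production drift detection, which typically relies on statistical tests over input and output distributions.

```python
from statistics import mean


def needs_review(baseline_scores, recent_scores, max_drop=0.05):
    """Flag a model when its mean recent score falls more than
    max_drop below its mean baseline score."""
    return mean(baseline_scores) - mean(recent_scores) > max_drop


# Illustrative accuracy readings, not real measurements.
baseline = [0.92, 0.91, 0.93]
degraded = [0.85, 0.84, 0.86]
stable = [0.91, 0.92, 0.90]

print(needs_review(baseline, degraded))  # True
print(needs_review(baseline, stable))    # False
```

A flagged model would then enter the retraining or retirement workflow, with the result written to the audit log.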

How can CFOs prevent AI budgets from eroding net margin instead of improving it?

Tag every AI dollar before it is spent.

- Create cost centers for each model or use-case so finance can run a mini-P&L.

- Pair the tags with showback/chargeback rules that bill business units for the inferences they consume.

- Require a cross-functional approval gate (finance, engineering, and risk) before any new model moves from pilot to production.

These steps stop "shadow AI" bills and turn usage-based cloud invoices into negotiable, forecastable spend.
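The showback rule in the list above could look something like this in Python: a shared invoice is split across business units in proportion to the inferences each one consumed. The `allocate_showback` function, the tag names, and the amounts are assumptions made for illustration.

```python
from collections import defaultdict


def allocate_showback(invoice_total, usage_log):
    """Split an invoice across business units by share of inferences.

    usage_log is an iterable of (business_unit, model_tag, inferences).
    """
    per_unit = defaultdict(int)
    for unit, _model_tag, inferences in usage_log:
        per_unit[unit] += inferences

    total = sum(per_unit.values())
    if total == 0:
        raise ValueError("no usage recorded for this invoice")

    return {unit: round(invoice_total * n / total, 2)
            for unit, n in per_unit.items()}


# Hypothetical tagged usage for one monthly cloud invoice.
usage = [
    ("sales", "lead-scoring", 600_000),
    ("support", "faq-bot", 300_000),
    ("sales", "faq-bot", 100_000),
]

charges = allocate_showback(10_000.0, usage)
print(charges)  # {'sales': 7000.0, 'support': 3000.0}
```

Proportional allocation is the simplest choice; a real chargeback scheme might weight by model, since inferences on a large model cost more than those on a small one.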

Which KPIs show whether AI is creating or destroying economic value?

Replace vanity metrics with three hard numbers:

1. Cost per inference - tracked daily to catch price creep.

2. Incremental revenue per inference - links each API call to booked sales or savings.

3. Revenue per employee delta - isolates the productivity lift after headcount changes.

According to industry surveys, a growing number of CFOs now judge AI ROI on efficiency gains rather than short-term profit, because the revenue-per-employee delta shows whether a margin improvement is sustainable.

What procurement levers cut AI operating costs without throttling innovation?

Lock in economics before models scale.

- Negotiate cloud committed-use discounts early; list prices can double an annual bill once GPU hours exceed free tiers.

- Insert AI-specific clauses into vendor contracts: the right to audit model cards, 48-hour incident alerts, and caps on annual price escalators.

- Model the capex vs. opex trade-off; for stable workloads, on-prem GPU racks can pay back in 14-18 months versus pay-per-use.

Industry reports suggest that companies applying similar tactics have achieved significant reductions in contract time and spending.
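The capex versus opex trade-off mentioned above reduces to a simple payback calculation. The sketch below assumes a stable workload and ignores financing costs, depreciation, and hardware resale value; the function name and every figure are hypothetical.

```python
def payback_months(capex, monthly_cloud_cost, monthly_onprem_opex):
    """Months until on-prem hardware pays for itself versus pay-per-use.

    Returns None when on-prem running costs are not lower than cloud,
    because the purchase would then never pay back.
    """
    monthly_saving = monthly_cloud_cost - monthly_onprem_opex
    if monthly_saving <= 0:
        return None
    return capex / monthly_saving


# Illustrative: $400k of GPU racks, $40k/month cloud bill avoided,
# $15k/month for power, space, and staff to run the racks.
print(payback_months(400_000, 40_000, 15_000))  # 16.0
```

With these example inputs the payback lands inside the 14-18 month range cited above, but the result is very sensitive to utilization: if the workload shrinks, the avoided cloud bill shrinks with it and the payback period lengthens.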

When should a company optimize inference efficiency versus scale the model?

Run the three-scenario gate at every quarterly review:

1. Optimize - if cost per inference is rising but accuracy is flat, distill the model, prune parameters or switch to a smaller specialized LLM.

2. Scale - if incremental revenue per inference stays above the finance-set hurdle rate, raise token and user caps.

3. Hybrid - keep the large model for edge cases and route routine queries to a cheaper micro-model; gate the switch on real-time cost telemetry.

Without this gate, engineering teams often scale by default and AI bills can erase the productivity gains they just delivered.
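One way to make the three-scenario gate concrete is a small decision function. The sketch below is an interpretation, not a prescribed rule: the `scale_gate` name, the accuracy tolerance, and the choice to fall back to "optimize" when no scenario clearly applies are all assumptions.

```python
from enum import Enum


class Decision(Enum):
    OPTIMIZE = "optimize"
    SCALE = "scale"
    HYBRID = "hybrid"


def scale_gate(cpi_change, accuracy_change, revenue_per_inference,
               hurdle_rate, accuracy_tolerance=0.005):
    """Pick a review outcome from quarterly telemetry.

    cpi_change: fractional change in cost per inference vs. last quarter.
    accuracy_change: absolute change in accuracy vs. last quarter.
    revenue_per_inference, hurdle_rate: USD per inference.
    """
    cost_rising_without_benefit = (
        cpi_change > 0 and abs(accuracy_change) <= accuracy_tolerance
    )
    clears_hurdle = revenue_per_inference >= hurdle_rate

    if cost_rising_without_benefit and clears_hurdle:
        # Still profitable, but unit costs are creeping up: do both.
        return Decision.HYBRID
    if clears_hurdle:
        return Decision.SCALE
    # Assumption: anything below the hurdle defaults to optimization
    # rather than expansion.
    return Decision.OPTIMIZE


print(scale_gate(0.08, 0.001, 0.01, 0.02))  # Decision.OPTIMIZE
print(scale_gate(-0.05, 0.02, 0.03, 0.02))  # Decision.SCALE
print(scale_gate(0.08, 0.0, 0.03, 0.02))    # Decision.HYBRID
```

Encoding the gate this way forces finance and engineering to agree on the thresholds up front, which is the point of the review.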

Why is finance-engineering collaboration the single biggest cost control?

Because modeling choices become recurring cloud invoices.

- Finance shares the ROI hurdle rate before training starts so engineers can pick data sets and model sizes that stay under the ceiling.

- Engineers expose the latency-vs-cost curve so finance can decide whether faster response times are worth premium pricing.

Case evidence: industry reports suggest that once finance sets clear savings targets, organizations can build GenAI solutions in short timeframes, and that these tools can recover substantial costs.