OpenAI's o1-preview AI Outperforms Doctors in ER Diagnosis Study

Serge Bulaev

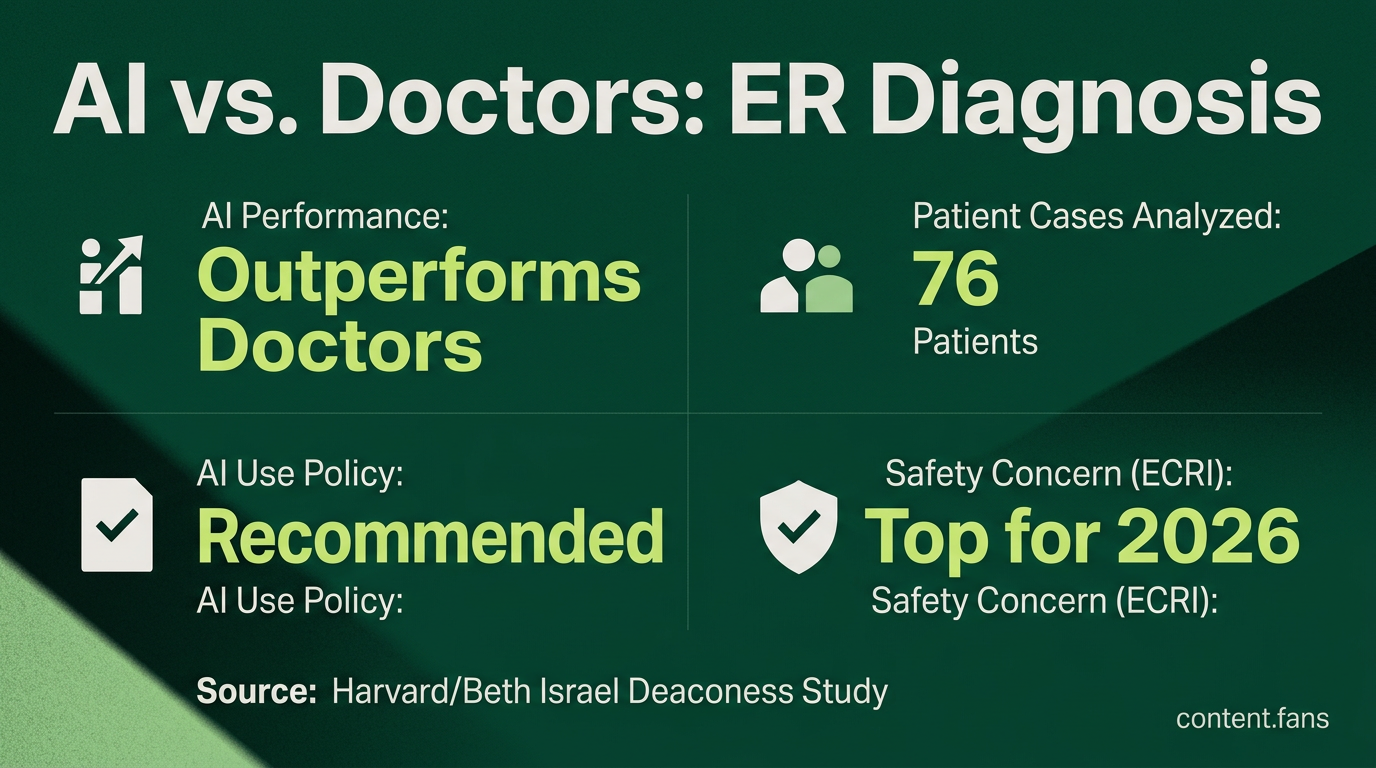

A study suggests that OpenAI's o1-preview AI may list the correct or near-correct diagnosis more often than doctors in a Boston emergency room. However, the advantage did not extend to every task, and emergency teams still need to weigh the AI's uncertainty before acting on its advice. Patient-safety groups and regulators are watching AI in healthcare closely and recommend strict use policies and ongoing monitoring. Newer AI models appear to do even better in some high-stakes cases, but more studies are needed to show whether these tools lead to safer and faster care in real hospitals.

A landmark study suggests OpenAI's o1-preview AI can aid emergency room diagnosis, outperforming attending physicians in some key measures. Research from Harvard and Beth Israel Deaconess, published in Science, found the model provided a correct or near-correct diagnosis more frequently after analyzing 76 de-identified patient records from a Boston ER.

These findings are accelerating the debate on integrating AI into clinical workflows. Experts emphasize that current models like o1-preview should serve as a diagnostic aid under clinician supervision, not a replacement. In response, regulators are developing safety guardrails to ensure a secure and effective collaboration between humans and AI.

How LLMs Can Aid Emergency-Room Diagnosis: Study Details

The study evaluated OpenAI's o1-preview against ER doctors using 76 real patient cases. When scored by senior clinicians, the AI's list of potential diagnoses was more likely to include the correct one. This suggests AI can be a powerful tool for augmenting a physician's diagnostic process.

However, the AI's performance gains were not uniform. The study noted no significant improvement in areas like probabilistic reasoning or triage recommendations. This highlights the ongoing need for emergency teams to critically evaluate AI-generated advice and its confidence levels before making clinical decisions.

Guardrails and Regulatory Momentum

The potential for diagnostic AI has drawn scrutiny from patient-safety advocates. Citing concerns over inconsistent real-world performance, the ECRI Institute listed AI-assisted diagnosis as a top patient safety concern for 2026. Following this guidance, the American Hospital Association advises hospitals to:

- define formal AI use policies

- document every instance of AI-assisted diagnosis

- monitor outcome metrics for drift and bias
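One way the monitoring recommendation could be put into practice is a rolling accuracy check over audited cases. The sketch below is illustrative only: the class name `AccuracyDriftMonitor`, the window size, and the tolerance are assumptions for the example, not values taken from the AHA or ECRI guidance.

```python
from collections import deque


class AccuracyDriftMonitor:
    """Hypothetical rolling check of AI diagnostic accuracy against a baseline."""

    def __init__(self, baseline_accuracy: float, window_size: int = 50,
                 tolerance: float = 0.10) -> None:
        self.baseline_accuracy = baseline_accuracy  # accuracy seen at validation
        self.tolerance = tolerance                  # allowed drop before alerting
        self.window = deque(maxlen=window_size)     # most recent audited cases

    def record(self, ai_was_correct: bool) -> bool:
        """Log one audited case and return True if a drift alert should fire."""
        self.window.append(ai_was_correct)
        if len(self.window) < self.window.maxlen:
            return False  # not enough audited cases yet to judge
        rolling_accuracy = sum(self.window) / len(self.window)
        return (self.baseline_accuracy - rolling_accuracy) > self.tolerance


# Example: the alert fires once recent accuracy falls well below the baseline.
monitor = AccuracyDriftMonitor(baseline_accuracy=0.9, window_size=10, tolerance=0.1)
for _ in range(10):
    monitor.record(True)
for _ in range(4):
    drifted = monitor.record(False)
print("drift alert:", drifted)
```

Running one such monitor per patient subgroup would be a simple way to watch for bias as well as drift, under the same assumptions.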

Regulatory bodies are also responding. The FDA and EU regulators now emphasize the need for continuous post-market surveillance of adaptive AI systems. A Bipartisan Policy Center brief highlights that U.S. reviewers are scrutinizing whether AI tools perform consistently across diverse patient populations and maintain their integrity through software updates.

Successor Models and Ongoing Evidence Generation

Development has continued beyond o1-preview. Successor models are reportedly achieving performance on par with PhD-level experts on biology benchmarks. A Healthcare IT News report indicates that newer models have improved on the earlier GPT-4 in additional high-stakes scenarios, though these findings await full peer review.

Experts agree the next crucial step involves pragmatic clinical trials. These studies must measure tangible results such as patient outcomes, effects on clinical workflow speed, and the rate of error detection. This research will determine if the diagnostic accuracy seen in controlled studies translates to measurably safer and more efficient care in real-world hospital environments.

How did the study test OpenAI o1-preview against doctors?

Researchers at Harvard Medical School and Beth Israel Deaconess Medical Center conducted a blinded evaluation with 76 real emergency-room cases. These de-identified charts were provided to both the o1-preview model and human physicians. Senior clinicians, who were unaware of the source, then scored the resulting differential diagnoses and reasoning chains. The study found that o1-preview listed the correct or near-correct diagnosis more often than the doctors.
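The blinding step described above can be illustrated with a short sketch. This is not the study's actual procedure: the function `blind_responses`, the ID format, and the sample data are all hypothetical, and the snippet only shows the general idea of hiding the source from graders while keeping a key for later unblinding.

```python
import random


def blind_responses(responses, seed=None):
    """Shuffle (source, text) pairs and replace each source with a neutral ID.

    Returns the blinded list shown to graders and a separate key, kept away
    from graders, that maps each ID back to its source.
    """
    rng = random.Random(seed)
    shuffled = list(responses)
    rng.shuffle(shuffled)  # order must not hint at who wrote what

    blinded, key = [], {}
    for index, (source, text) in enumerate(shuffled, start=1):
        blind_id = f"R{index:03d}"
        blinded.append((blind_id, text))
        key[blind_id] = source
    return blinded, key


# Hypothetical differentials for a single case.
sample = [
    ("model", "1. Pulmonary embolism 2. Pneumonia"),
    ("physician", "1. Pneumonia 2. Heart failure"),
]
blinded, key = blind_responses(sample, seed=0)
for blind_id, text in blinded:
    print(blind_id, text)  # graders see only the ID and the text
```

Scores recorded against the neutral IDs can be joined back to `key` once grading is complete.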

How large was the performance gap?

A sub-analysis of 70 cases revealed a notable difference: o1-preview achieved 88.6% diagnostic accuracy, compared to 72.9% for physicians. Regarding the quality of diagnostic reasoning, the model received a perfect score in 78 of 80 evaluations, whereas doctors achieved 28 perfect scores and residents just 16.
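The arithmetic behind these figures can be checked in a few lines. The whole-number case counts below are inferred from the rounded percentages quoted above and are an assumption, since the counts themselves are not stated here.

```python
# Figures as quoted in the answer above.
CASES = 70
MODEL_ACCURACY_PCT = 88.6
COMPARISON_ACCURACY_PCT = 72.9  # the comparison group as quoted in the text


def implied_correct(accuracy_pct: float, n_cases: int) -> int:
    """Nearest whole number of correct cases consistent with a rounded percentage."""
    return round(accuracy_pct / 100 * n_cases)


model_correct = implied_correct(MODEL_ACCURACY_PCT, CASES)            # inferred count
comparison_correct = implied_correct(COMPARISON_ACCURACY_PCT, CASES)  # inferred count

# Gap in percentage points, not a relative percentage change.
gap_points = round(MODEL_ACCURACY_PCT - COMPARISON_ACCURACY_PCT, 1)

# Share of reasoning evaluations in which the model scored perfectly (78 of 80).
perfect_reasoning_pct = round(78 / 80 * 100, 1)

print(model_correct, comparison_correct, gap_points, perfect_reasoning_pct)
```

On these assumptions the gap is about 11 cases out of 70, which is a useful reminder that the sub-analysis rests on a small sample.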

Is OpenAI o1-preview ready to replace ER physicians?

No. The study's authors, OpenAI, and other experts agree the model is intended as a second-opinion tool, not an autonomous replacement for clinicians. The model showed no significant improvement in probabilistic reasoning or triage recommendations. Furthermore, guidance from patient-safety organizations such as ECRI and regulators such as the FDA calls for rigorous post-market surveillance of such AI systems.

What makes o1-preview better than earlier models?

The o1 series was designed by OpenAI to "spend more time thinking before responding," a process known as chain-of-thought reasoning. According to NIH/PMC commentary, this deliberate, step-by-step approach may explain why o1-preview outperforms instant-response models like GPT-4, especially in complex cases involving multiple organ systems.

When will clinical trials with medical AI begin?

Early evidence gathering is already underway, though large trials are still ahead. Initial studies, including the "Preliminary Study of o1 in Medicine" (arXiv 2024) and subsequent Stanford/Harvard benchmarks, represent early-phase evidence rather than clinical trials. Regulators and healthcare institutions anticipate larger prospective trials during 2025-2026 before broader clinical integration, which will require continuous revalidation after deployment.