HR adopts checklist for safe AI use with employee data

Serge Bulaev

HR leaders are rapidly adding AI tools to their work, which risks exposing personal employee data. A checklist can help HR teams use AI safely by first reviewing policies and legal issues, then mapping data, limiting data use, informing workers, testing for bias, and controlling vendors. Legal reviews may be required under upcoming laws and existing regulations. The checklist offers steps to create records showing that HR considered risks before using AI, but it does not guarantee full compliance. Following these steps carefully may help build trust and provide evidence if questions arise later.

Safe AI use with employee data matters more than ever as HR leaders race to adopt generative AI for recruiting and performance management. In that rush, sensitive personnel data can be exposed before anyone pauses for a policy and legal review. A practical safeguard, the Responsible AI Adoption Checklist for HR, is echoed in guidance from AI Law Tracker and privacy consultant Jeff Arnold's 2025 compliance article.

This checklist treats AI adoption not as a side project but as a regulated data processing activity. It asks HR teams to handle every dataset, model, and vendor with the diligence they would apply if an audit by regulators or a challenge from employees were imminent.

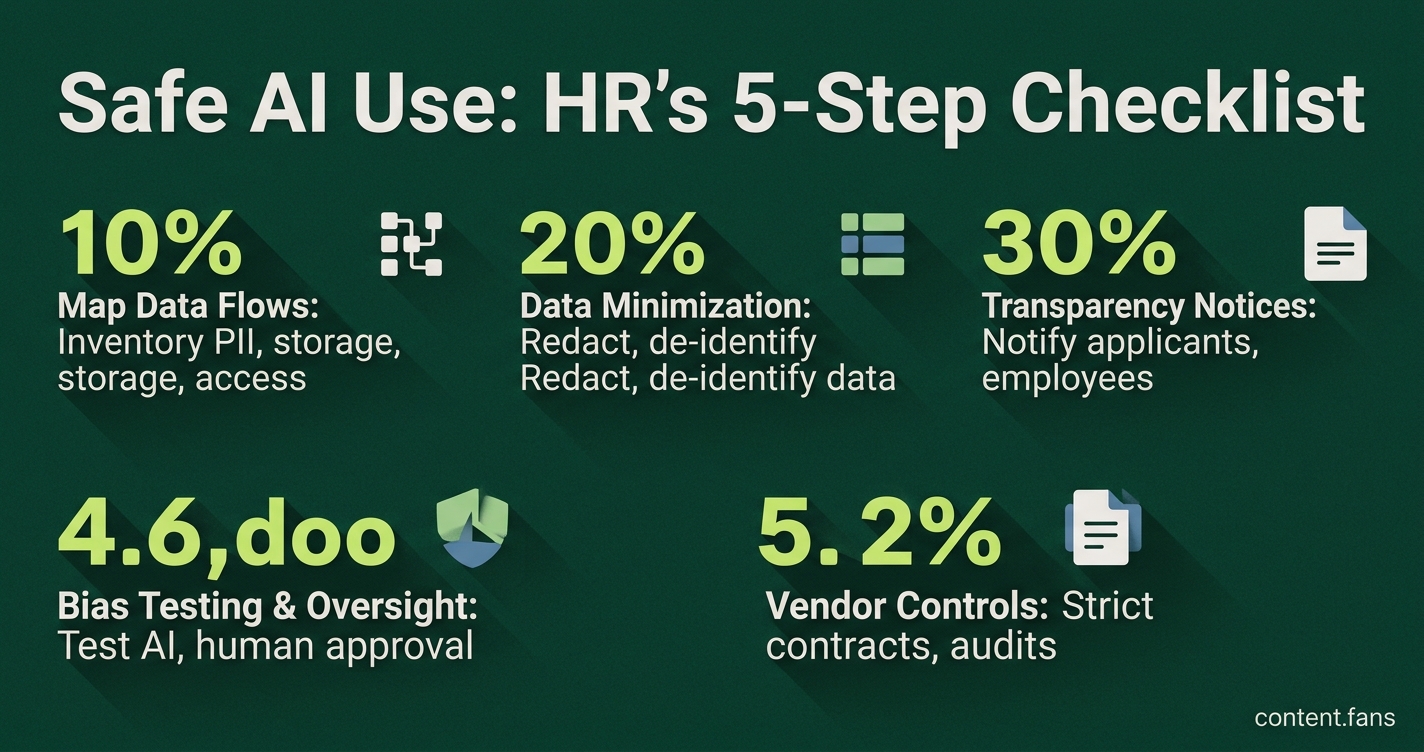

Responsible AI Adoption Checklist for HR

Safely using AI with employee data requires a structured approach. HR teams must first map all data flows, minimize the data collected, and provide clear transparency notices to employees. This process also includes conducting rigorous bias testing, ensuring human oversight, and enforcing strict data protection controls with all vendors.

- Map data flows - Conduct a comprehensive data inventory for every AI-driven workflow. Document all personally identifiable information (PII) it processes, its storage location, and access controls. Privacy consultant Jeff Arnold calls this foundational mapping exercise the essential first step.

- Data minimization - Limit data exposure by redacting unnecessary fields and de-identifying personal data whenever possible before it is fed into an AI model, a key recommendation from AI Law Tracker.

- Transparency notices - Provide clear, upfront notifications to applicants and employees when AI is used in hiring or other workplace decisions. As noted by WorkTango, these notices must explain what data is processed and detail the process for appealing automated outcomes.

- Bias testing and human oversight - Systematically test all AI tools used for recruitment, promotion, and termination to identify and mitigate disparate impact. Require that a trained human reviewer approve any adverse decision generated by an AI system.

- Vendor controls - Enforce strict vendor management through contracts. As detailed in the AI Law Tracker's vendor guidance, these agreements must prohibit vendors from training their models on your employee data, require breach notifications within 72 hours, and grant full access to audit logs.
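The bias testing item in the checklist above can be made concrete with a simple selection-rate comparison. The sketch below is one possible check, not a method the checklist prescribes: it computes each group's selection rate, divides it by the highest group's rate, and flags ratios below 0.8, the "four-fifths" rule of thumb from the US Uniform Guidelines on Employee Selection Procedures. The function name, group labels, and sample counts are hypothetical.

```python
from collections import Counter

def adverse_impact_ratios(outcomes, threshold=0.8):
    """outcomes: iterable of (group, was_selected) pairs.

    Returns {group: (selection_rate, ratio_to_highest_rate, flagged)}.
    """
    applied = Counter()
    selected = Counter()
    for group, was_selected in outcomes:
        applied[group] += 1
        if was_selected:
            selected[group] += 1

    rates = {group: selected[group] / applied[group] for group in applied}
    best = max(rates.values())
    if best == 0:
        # Nobody was selected in any group, so no ratio can be computed.
        return {group: (0.0, None, False) for group in rates}
    return {
        group: (rate, rate / best, rate / best < threshold)
        for group, rate in rates.items()
    }

# Hypothetical screening results: group A 30 of 50 selected, group B 12 of 40.
sample = (
    [("A", True)] * 30 + [("A", False)] * 20
    + [("B", True)] * 12 + [("B", False)] * 28
)
report = adverse_impact_ratios(sample)
for group, (rate, ratio, flagged) in sorted(report.items()):
    print(group, round(rate, 2), ratio, "REVIEW" if flagged else "ok")
```

A flagged ratio is a prompt for human and legal review, not proof of discrimination; small samples in particular call for a proper statistical test.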

Why legal review comes first

Impending regulations underscore the urgency of legal review. For example, new regulations under California's CCPA/CPRA, taking effect in 2026, will require privacy risk assessments for high-risk automated decision-making, as highlighted in a Littler legal alert. HR teams using AI for candidate screening or employee scoring should therefore consult legal counsel before exporting any data to a third-party model. Similarly, GDPR's Article 22 gives individuals the right not to be subject to decisions based solely on automated processing that significantly affect them and, where such processing is permitted, the right to obtain human intervention, a point summarized by Moveworks.

Operational guardrails

- Retention rules - Establish clear data retention policies. AI Law Tracker advises retaining AI decision logs for a minimum of three years or the applicable statute of limitations, while promptly deleting the raw input data.

- Incident response - Develop an AI-specific incident response plan. Following Jeff Arnold's recommendation, create a dedicated escalation path for flagging model failures, biased outcomes, or data exposure events.

- Training and ownership - Assign clear accountability by designating a cross-functional AI governance committee. This body should be tasked with conducting and documenting quarterly reviews of every AI system in production.
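The retention rule above can be expressed as a small schedule that a cleanup job consults. This is a minimal sketch under stated assumptions: the three-year window for decision logs follows the guidance quoted above (approximated as 3 x 365 days), while the 30-day window for raw inputs is a hypothetical stand-in for "promptly". The record layout and function name are also illustrative.

```python
from datetime import date, timedelta

# Assumed retention windows. Three years for decision logs follows the text;
# 30 days for raw inputs is a hypothetical value chosen for illustration.
RETENTION = {
    "decision_log": timedelta(days=3 * 365),
    "raw_input": timedelta(days=30),
}

def due_for_deletion(records, today):
    """Return the ids of records whose retention window has elapsed."""
    return [
        rec["id"]
        for rec in records
        if today - rec["created"] > RETENTION[rec["kind"]]
    ]

records = [
    {"id": "log-1", "kind": "decision_log", "created": date(2024, 1, 10)},
    {"id": "raw-1", "kind": "raw_input", "created": date(2024, 1, 10)},
    {"id": "raw-2", "kind": "raw_input", "created": date(2024, 3, 1)},
]
expired = due_for_deletion(records, today=date(2024, 3, 15))
print(expired)
```

Because three years is a minimum, a real schedule would extend the decision-log window wherever the applicable statute of limitations is longer, and would pause deletion for records under a legal hold.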

Before a full-scale rollout, pilot AI tools in an anonymized sandbox environment to test workflows against these checkpoints without using live HRIS or applicant data. According to WorkTango, building trust during implementation is best achieved through independent bias audits and clear, plain-language communication with employees.

While this checklist does not guarantee absolute compliance, it establishes a crucial, auditable trail. Following these steps creates a defensible record, demonstrating that the organization performed due diligence and addressed key risks before deploying AI to process employee data.

What is the first step before connecting any AI tool to employee data?

Mapping all data flows and identifying sensitive fields is the non-negotiable first step, and it should be completed before signing any vendor contract. Create a data inventory that specifies:

- What data the AI will touch (payroll, medical notes, disciplinary records)

- Where it is stored and who can access it

- How long it must be kept to satisfy local labor law

This inventory must be a living document, updated immediately when a new model, purpose, or integration is introduced. Regulators may view an outdated data map as a sign of negligence, so a detailed version log is essential.
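One way to keep such an inventory "living" is to make every change go through a single function that also writes a version log entry. The sketch below assumes a simple in-memory structure; the class names, field names, and sample values are hypothetical, and a real inventory would live in a governed system of record.

```python
from dataclasses import dataclass, field
from datetime import date

@dataclass
class InventoryEntry:
    dataset: str          # e.g. "payroll"
    fields: list          # data elements the AI will touch
    storage: str          # where the data is stored
    access_roles: list    # who can access it
    retention_note: str   # how long it must be kept, per local labor law

@dataclass
class DataInventory:
    entries: dict = field(default_factory=dict)
    version_log: list = field(default_factory=list)

    def record(self, entry, changed_on, reason):
        """Add or update an entry and append a numbered line to the version log."""
        self.entries[entry.dataset] = entry
        version = len(self.version_log) + 1
        self.version_log.append((version, changed_on, entry.dataset, reason))

inventory = DataInventory()
inventory.record(
    InventoryEntry("payroll", ["salary", "bank_account"], "HRIS (EU region)",
                   ["payroll_admin"], "per local labor law"),
    changed_on=date(2025, 1, 15),
    reason="initial mapping",
)
inventory.record(
    InventoryEntry("payroll", ["salary"], "HRIS (EU region)",
                   ["payroll_admin", "ai_pilot_reviewer"], "per local labor law"),
    changed_on=date(2025, 3, 2),
    reason="new integration: compensation analysis model",
)
for line in inventory.version_log:
    print(line)
```

Routing every update through `record` means the log cannot silently fall behind the inventory, which is the property the version log is meant to demonstrate.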

Which internal partners must HR involve before purchasing AI software?

Involve legal, privacy, security, and key HR business partners in the evaluation process from the very beginning. Secure written sign-off from each stakeholder confirming:

- The processing purpose is lawful under current statutes

- Vendor data use is limited to that purpose

- Employees will receive a plain-language notice of the AI's role

Treat this legal review as mandatory, not optional. Proactive consultation with counsel is far less costly than facing regulatory fines or employee litigation.

What vendor contract clauses protect employee data in 2026?

To protect employee data, insist on these five non-negotiables in every vendor agreement:

1. No secondary use - vendor may not train foundation models on your data unless you give explicit, revocable consent

2. Deletion on demand - all personal data must be erased or returned within an agreed number of days after contract end, with written certification

3. Audit logging - vendor must keep tamper-evident logs of access, exports, and deletions for at least the statutory period

4. Incident notice - alert your team within 24-72 hours of any confirmed or suspected breach

5. Sub-processor transparency - provide an up-to-date list of sub-vendors and flow down the same obligations

If a vendor resists these terms, be prepared to walk away. The competitive HR technology market of 2025 offers safer, more compliant alternatives.
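The five clauses above lend themselves to a simple pre-signature check. The following sketch assumes contract terms have already been summarized by a reviewer into a set of clause labels; the labels, function name, and the 72-hour ceiling (the upper end of the 24-72 hour range above) are illustrative choices, not a legal standard.

```python
# Hypothetical labels for the five non-negotiable clauses listed above.
REQUIRED_CLAUSES = {
    "no_secondary_use",
    "deletion_on_demand",
    "audit_logging",
    "incident_notice",
    "subprocessor_transparency",
}

def review_contract(clauses_present, breach_notice_hours=None, max_notice_hours=72):
    """Return a list of issues to raise with the vendor; empty means none found."""
    missing = sorted(REQUIRED_CLAUSES - set(clauses_present))
    issues = [f"missing clause: {name}" for name in missing]
    if "incident_notice" in clauses_present:
        if breach_notice_hours is None or breach_notice_hours > max_notice_hours:
            issues.append(
                f"breach notice window exceeds {max_notice_hours} hours or is unspecified"
            )
    return issues

issues = review_contract(
    {"no_secondary_use", "audit_logging", "incident_notice"},
    breach_notice_hours=96,
)
for issue in issues:
    print(issue)
```

A check like this only tracks whether a clause exists; whether its wording is adequate remains a question for counsel.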

Which data types should never reach an AI model without extra safeguards?

Categorize the following data types as high-risk or "red flag" data. Implement masking, hashing, or segregation before allowing any AI model to process them:

- Health or biometric records

- Union membership or protected concerted activity notes

- Criminal or disciplinary files

- Compensation details beyond aggregated ranges

GDPR and California's privacy rules treat most of these categories as sensitive or high-risk. Processing this data with an AI without a comprehensive privacy impact assessment can lead to severe penalties and, if the data is exposed, mandatory breach notifications.
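The masking, hashing, and segregation step can be sketched as a small pre-processing function that runs before any record is sent to a model. This is an illustration under assumptions: the field names, the band width, and the demo key are hypothetical, and the keyed hash (HMAC-SHA256 from Python's standard library) is one common pseudonymization technique rather than the one the checklist requires.

```python
import hashlib
import hmac

# Hypothetical names for fields that are kept away from the model entirely.
RED_FLAG_FIELDS = {"health_notes", "union_membership", "disciplinary_file"}

def pseudonymize(value, secret_key):
    """Keyed hash: the same ID always maps to the same token, without exposing the ID."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()

def salary_band(amount, width=10_000):
    """Replace an exact salary with an aggregated range."""
    low = (amount // width) * width
    return f"{low}-{low + width - 1}"

def prepare_for_model(record, secret_key):
    """Drop red-flag fields, pseudonymize the ID, and band the salary."""
    safe = {k: v for k, v in record.items() if k not in RED_FLAG_FIELDS}
    safe["employee_id"] = pseudonymize(record["employee_id"], secret_key)
    safe["salary"] = salary_band(record["salary"])
    return safe

key = b"demo-key-not-for-production"  # assumption: a real key comes from a secrets manager
record = {
    "employee_id": "E1001",
    "role": "Analyst",
    "salary": 73500,
    "health_notes": "example text",
    "union_membership": True,
}
cleaned = prepare_for_model(record, key)
print(sorted(cleaned))
print(cleaned["salary"])
```

Keyed hashing is pseudonymization, not anonymization: anyone holding the key can re-link tokens to employees, so under GDPR the output is still personal data and the key needs its own access controls.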

How can HR pilot AI while proving good faith compliance?

Demonstrate good faith and mitigate risk by first piloting the AI in an anonymized sandbox environment:

- Strip direct identifiers and run a small, time-boxed trial

- Enable full audit logging so every prompt and output is traceable

- Publish a short summary of fairness metrics and error rates for staff review

- Document lessons learned before scaling to the full workforce

This approach creates evidence of a "progressive, risk-based deployment," a factor that courts and regulators value. A well-documented pilot demonstrates measured, incremental adoption rather than a rushed, untested implementation.
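The audit logging step in the pilot can be made tamper-evident by chaining entries with hashes, so that editing an earlier entry breaks every later link. The sketch below is a minimal in-memory illustration with hypothetical class and field names; a production log would also record timestamps and store the latest hash somewhere the log's operators cannot rewrite.

```python
import hashlib
import json

class AuditLog:
    """Append-only log in which each entry's hash covers the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body):
        encoded = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def append(self, actor, prompt, output):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"actor": actor, "prompt": prompt, "output": output, "prev": prev_hash}
        self.entries.append({**body, "hash": self._digest(body)})

    def verify(self):
        """Recompute every hash and check that each entry links to the one before it."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("actor", "prompt", "output", "prev")}
            if entry["prev"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True

log = AuditLog()
log.append("reviewer-01", "Summarize anonymized candidate 7f3a", "Summary text")
log.append("reviewer-02", "Rank anonymized shortlist", "Ranking text")
intact = log.verify()

# Simulate someone quietly editing an earlier output.
log.entries[0]["output"] = "edited after the fact"
tamper_detected = not log.verify()
print(intact, tamper_detected)
```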