OpenAI Says 80% of Its Code Is Now AI-Written

Serge Bulaev

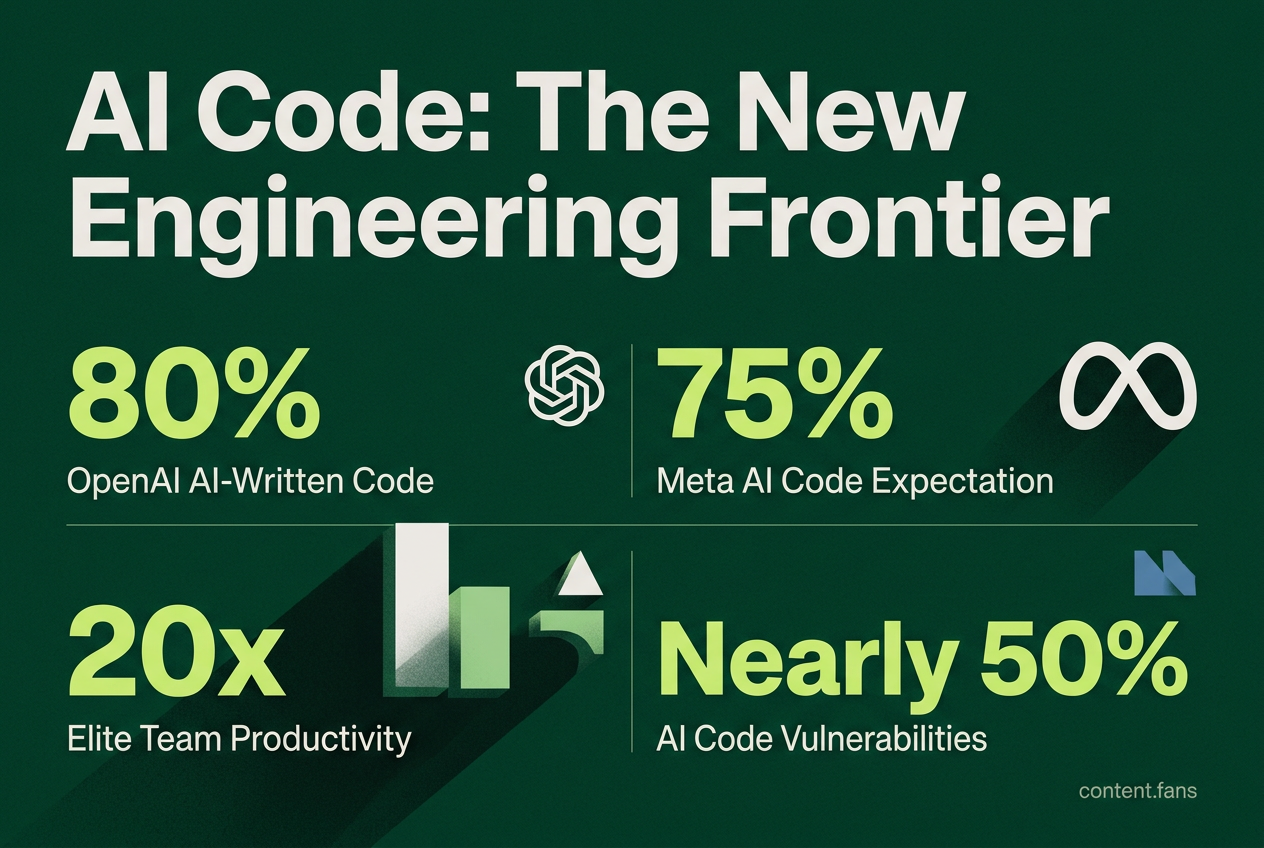

OpenAI president Greg Brockman says that AI now writes about 70-80% of the company's code, though it is unclear whether the figure counts lines of code or completed tasks. The claim points to a rapid shift toward AI-assisted programming at OpenAI and at other large tech companies such as Google and Meta. Studies suggest AI tools may help some teams work much faster, but most firms do not yet see clear productivity gains. Code quality and security remain concerns, as some tests suggest AI-written code often contains vulnerabilities. For now, human engineers still review, test, and take responsibility for the final code, even when AI produces most of it.

Recent reports suggest that OpenAI has dramatically increased its use of AI-generated code, highlighting a massive internal shift toward automated software development. The statement from President Greg Brockman points to a profound change in engineering workflows at the influential AI lab, but also raises critical questions about productivity, code quality, and security.

Deconstructing the AI Code Claims

At Sequoia Capital's AI Ascent 2026 conference, OpenAI President Greg Brockman said that internal "agentic coding tools" now write roughly 70-80% of the company's code, a sharp rise from the previous month. He presented the jump as evidence that AI coding has crossed a significant productivity threshold.

Brockman's statement, first reported by Business Insider and later analyzed by The Next Web, left some ambiguity as to whether the metric refers to lines of code or completed engineering tasks. However, he emphasized that every merge request still requires a human engineer to take responsibility.
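To see why the choice of metric matters, here is a minimal sketch in Python. The `Change` record and every number in it are invented for illustration and do not come from OpenAI; the point is only that the same set of merged changes can yield very different "AI share" figures depending on whether lines or tasks are counted.

```python
from dataclasses import dataclass


@dataclass
class Change:
    """One merged change. All values below are hypothetical illustration data."""
    lines: int         # lines added by the change
    ai_written: bool   # True if an AI tool produced the change


def ai_share_by_lines(changes):
    """Fraction of all added lines that came from AI-written changes."""
    total = sum(c.lines for c in changes)
    ai = sum(c.lines for c in changes if c.ai_written)
    return ai / total if total else 0.0


def ai_share_by_tasks(changes):
    """Fraction of changes (tasks) that were AI-written, regardless of size."""
    if not changes:
        return 0.0
    return sum(1 for c in changes if c.ai_written) / len(changes)


# One large AI-generated change and three small human-written ones.
changes = [
    Change(lines=800, ai_written=True),
    Change(lines=50, ai_written=False),
    Change(lines=50, ai_written=False),
    Change(lines=100, ai_written=False),
]

print(f"by lines: {ai_share_by_lines(changes):.0%}")  # by lines: 80%
print(f"by tasks: {ai_share_by_tasks(changes):.0%}")  # by tasks: 25%
```

In this made-up sample a single bulky AI-generated change pushes the line-based share to 80% while the task-based share is only 25%, which is why a headline percentage is hard to interpret without knowing the unit.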

An Industry-Wide Shift to AI Coders

OpenAI's metric is part of a broader trend among tech giants. Google CEO Sundar Pichai stated that a significant portion of his company's new code is AI-generated. Similarly, Meta expects 65% of engineers in its creation organization to write more than 75% of their committed code using AI, according to Business Insider. Meanwhile, Anthropic CEO Dario Amodei predicted AI could be writing the vast majority of code within months.

The Impact on Engineering Teams

The rapid integration of AI coding assistants has led to several key outcomes:

- Productivity Disparity: While McKinsey research suggests elite teams can achieve up to 20x leverage with agentic tools, industry reports indicate that many firms using AI report no measurable productivity gains, highlighting a wide gap between top performers and the average company.

- Increased Review Load: Data from JetBrains shows that as junior developers generate more code with AI, the demand for code reviews from senior engineers has surged.

- Stagnant Security: According to industry reports, the security pass rate for AI-generated code remains concerning, indicating that quality and security are not keeping pace with generation speed.

Governance and Security in the Age of AI Code

The rapid adoption of AI coding tools has prompted significant security concerns, with many application security (AppSec) experts advising teams to treat all AI-generated code as untrusted until it has been thoroughly audited. Veracode warns that nearly half of AI-generated code produced without security-specific prompting contains known vulnerabilities. Others describe the output as a "black box" that can inherit and replicate security flaws from the public code it was trained on.

Recommended safeguards include:

- Mandate Automated Scanning: Enforce both static (SAST) and dynamic (DAST) analysis before any code is merged.

- Ensure Full Traceability: Require auditable prompts and explicit human sign-off for every AI-assisted contribution.

- Isolate Critical Systems: Limit or prohibit the use of AI-generated code in high-risk areas like authentication and payment processing.

- Implement Secure Prompting: Train developers to use security-focused prompting techniques to improve the quality of generated code.

- Educate on AI Limitations: Provide ongoing training on the contextual limits and potential biases of large language models.
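Several of the safeguards above can be enforced mechanically before merge. The following Python sketch shows one possible shape for such a gate. It is an assumption-laden illustration, not any vendor's actual tooling: the `MergeRequest` fields, the `RESTRICTED_GLOBS` paths, and the `merge_blockers` helper are all hypothetical names.

```python
from dataclasses import dataclass
from fnmatch import fnmatch
from typing import List, Optional

# Hypothetical high-risk areas where AI-generated code is not allowed.
RESTRICTED_GLOBS = ["services/auth/*", "services/payments/*"]


@dataclass
class MergeRequest:
    """Hypothetical merge request metadata a CI system might expose."""
    changed_files: List[str]
    ai_assisted: bool
    sast_passed: bool
    dast_passed: bool
    human_approver: Optional[str] = None   # explicit human sign-off
    prompt_log_id: Optional[str] = None    # pointer to the auditable prompt record


def merge_blockers(mr: MergeRequest) -> List[str]:
    """Return the reasons a merge request must not be merged (empty = OK)."""
    blockers = []
    # Automated scanning applies to every change, AI-assisted or not.
    if not (mr.sast_passed and mr.dast_passed):
        blockers.append("scans: SAST and DAST must both pass")
    if mr.ai_assisted:
        # Traceability: a named human and a stored prompt record.
        if not mr.human_approver:
            blockers.append("traceability: missing human sign-off")
        if not mr.prompt_log_id:
            blockers.append("traceability: missing prompt log")
        # Isolation: no AI-assisted changes in restricted paths.
        restricted = [
            f for f in mr.changed_files
            if any(fnmatch(f, g) for g in RESTRICTED_GLOBS)
        ]
        if restricted:
            blockers.append("isolation: AI-assisted change touches " + ", ".join(restricted))
    return blockers


risky = MergeRequest(
    changed_files=["services/auth/login.py", "docs/readme.md"],
    ai_assisted=True,
    sast_passed=True,
    dast_passed=False,
)
for reason in merge_blockers(risky):
    print(reason)
```

The example request is blocked for four reasons: a failed DAST scan, no approver, no prompt log, and a touched authentication file. Returning a list of reasons rather than a single boolean keeps the gate auditable, since a reviewer can see exactly which rule stopped the merge.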

The Bottom Line: Human Oversight is Non-Negotiable

Brockman's claims signal a new era of software development, but the lack of detailed supporting data and troubling security metrics paint a complex picture. The distinction between lines of code generated and tasks assisted remains a key point of ambiguity. Ultimately, functionality gains do not guarantee safer software. For the foreseeable future, human engineers remain the essential element for review, testing, and final accountability - even when AI writes the vast majority of the initial code.

What exactly did Greg Brockman say about OpenAI's use of AI to write code?

At Sequoia Capital's AI Ascent 2026 conference, OpenAI President Greg Brockman stated that agentic coding tools inside OpenAI had dramatically increased their share of code generation, representing a significant jump from the previous month. He framed this jump as proof that AI coding has crossed a "productivity threshold" and added, "it's hard to know what percent is not being written by AI," hinting the real share could be even higher.

How does OpenAI's AI code usage compare with other tech giants?

Google CEO Sundar Pichai revealed that a significant portion of new Google code is now AI-generated (still reviewed by humans), while Meta expects 65% of engineers in its creation organization to write more than 75% of their committed code using AI. Anthropic CEO Dario Amodei predicts AI will write the vast majority of all code within 3-6 months and "nearly all" within a year.

What new engineering workflow has emerged from heavy AI-code use?

Teams have moved from sprint-based cycles to continuous, high-speed loops where:

- Developers define "what" to build, not "how"

- Humans act as reviewers and approvers - every merged line still requires human sign-off

- Junior engineers generate more code, creating a surge in senior-level review demand

- Entire repositories can be designed collaboratively by AI agents handling tests, docs and refactoring

What are the biggest risks when most code is machine-written?

- Security pass rates lag far behind syntax pass rates: industry reports indicate that AI-generated code often compiles and runs yet fails basic security checks, with certain vulnerability types showing particularly poor pass rates

- Silent technical debt rises as engineers accept suggestions they do not fully understand

- Governance gaps appear when authorship and risk ownership are unclear

- Black-box training data can replicate old vulnerabilities into fresh commits

Which best practices help keep AI-written code safe and reliable?

- Treat AI output as untrusted until proven otherwise - run SAST, DAST and behavioral tests

- Use security-focused prompts - they can significantly improve secure-code rates

- Introduce AI-specific review gates for privilege scope, data handling and auth flows

- Layer defenses: pair static analysis with runtime monitoring and dependency scanning

- Preserve human accountability - tag every AI contribution and require explicit human approval before merge
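One lightweight way to tag AI contributions is a trailer line in the commit message. The sketch below assumes a hypothetical `AI-Assisted:` trailer (not an established standard) alongside the widely used `Reviewed-by:` convention, and checks that any commit tagged as AI-assisted also names a human reviewer. The function names are illustrative only.

```python
def parse_trailers(message: str) -> dict:
    """Collect 'Key: value' lines from the last paragraph of a commit message."""
    paragraphs = [p for p in message.strip().split("\n\n") if p.strip()]
    trailers = {}
    if len(paragraphs) < 2:
        return trailers  # subject line only, so there is no trailer block
    for line in paragraphs[-1].splitlines():
        key, sep, value = line.partition(":")
        if sep and value.strip():
            trailers[key.strip().lower()] = value.strip()
    return trailers


def has_required_approval(message: str) -> bool:
    """AI-assisted commits need a Reviewed-by trailer; other commits pass."""
    trailers = parse_trailers(message)
    ai_assisted = trailers.get("ai-assisted", "no").lower() in {"yes", "true"}
    return (not ai_assisted) or ("reviewed-by" in trailers)


tagged_and_reviewed = (
    "Add rate limiter\n\n"
    "Generated with an agent, then edited by hand.\n\n"
    "AI-Assisted: yes\n"
    "Reviewed-by: Jane Doe <jane@example.com>"
)
tagged_only = "Add rate limiter\n\nAI-Assisted: yes"

print(has_required_approval(tagged_and_reviewed))  # True
print(has_required_approval(tagged_only))          # False
```

Note that this sketch treats untagged commits as human-written, so it only works if tagging itself is enforced elsewhere, for example by the tool that generates the commit.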