OpenAI expands enterprise push with new Frontier Alliance, targets 50% of revenue from enterprise customers

Serge Bulaev

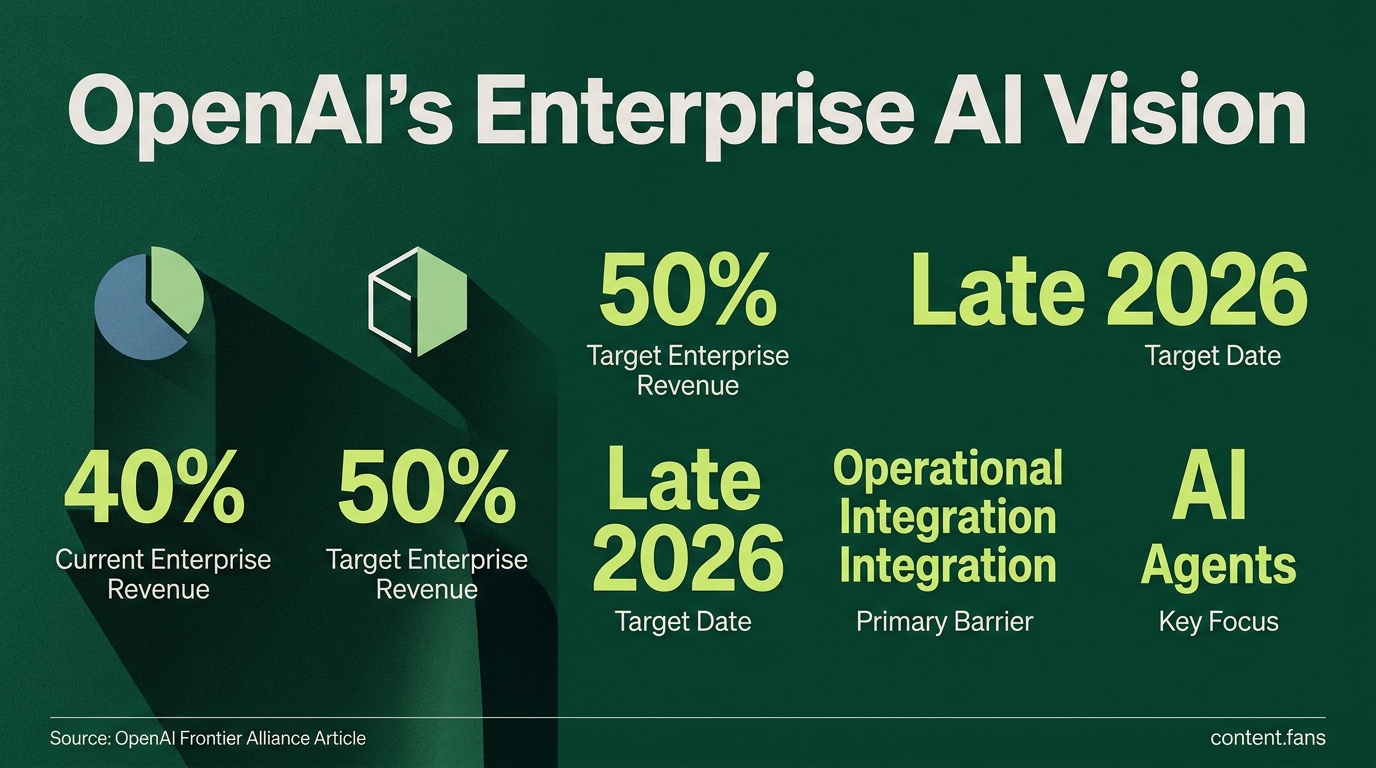

OpenAI has started the Frontier Alliance to help big companies use AI agents in their main work processes. The main challenge for companies appears to be making AI fit into their existing systems, not making the AI smarter. Some reports suggest that businesses may pay for ongoing support and monitoring instead of just trying one-time AI prototypes. There are risks, such as data leaks, over-reliance on one vendor, and mistakes in AI use, but suggested solutions include regular audits and using more than one AI provider. OpenAI says that about 40% of its revenue now comes from enterprise customers and this may rise to 50% by late 2026, though it is unclear if these projects will lead to full-scale use.

OpenAI's Frontier Alliance signals a major strategic pivot towards enterprise services, embedding its engineers directly with customers to accelerate the deployment of AI agents. The initiative rests on the premise that the primary barrier for large companies is operational integration, not raw model intelligence, so it prioritizes the practical engineering needed to move AI workflows from pilot programs to full-scale production.

How the Alliance Works

The Frontier Alliance pairs OpenAI's technical specialists with an enterprise's internal teams and major consulting partners. This collaborative model focuses on integrating AI agents into core business operations by handling workflow redesign, governance, context wiring, and memory management, thereby streamlining the path from development to deployment.

In this structure, consulting partners like BCG, McKinsey, and Accenture manage workflow redesign and establish governance playbooks. OpenAI engineers focus on the technical stack, including context layer wiring and observability dashboards, while customer teams contribute essential domain logic and manage data stewardship for frontline adoption.

Barriers, Risks, and Mitigation

Enterprises joining the Alliance must navigate significant risks. The first is data security: warnings center on customer data leaking into model training and on ungoverned "shadow AI" tools. The second is vendor dependency, and many companies are actively creating strategies to avoid over-reliance on a single AI provider. The third is accountability: model hallucinations or misconfigurations leave gaps that create compliance challenges. To mitigate these issues, experts recommend regular portability audits, multi-vendor stacks that include models from other providers such as Anthropic, and strict data usage opt-out policies.

Competitive Landscape

While OpenAI's Alliance is a branded, high-touch initiative, competitors are also embedding resources. Analysts note that Anthropic provides engineering support through cloud marketplace channels like AWS Bedrock, though without a formal alliance structure. OpenAI's strategy mirrors established cloud co-sell programs but places a unique emphasis on agent observability and shared memory infrastructure.

Revenue Impact and Future Outlook

The enterprise push is critical to OpenAI's financial strategy. The company reports that enterprise clients already account for approximately 40% of its revenue, with a target to increase this to 50% by late 2026. Hitting that target would put enterprise revenue on par with everything else OpenAI sells, and it suggests that consulting-led agent deployments could become as important to the business as core API consumption. However, with no public ROI case studies yet available, the industry is watching to see if these initial pilot programs will successfully scale into full production systems.
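The share target above implies a growth gap that is easy to underestimate. The sketch below works through the arithmetic using only the 40% and 50% figures from the article; the function name and the `other_growth` scenario values are assumptions for illustration, not reported numbers.

```python
def required_enterprise_growth(current_share: float,
                               target_share: float,
                               other_growth: float = 0.0) -> float:
    """Growth rate enterprise revenue needs in order to reach target_share.

    Total revenue today is normalized to 1. `other_growth` is the assumed
    growth rate of all non-enterprise revenue over the same period.
    """
    if not (0 < current_share < 1 and 0 < target_share < 1):
        raise ValueError("shares must be strictly between 0 and 1")
    enterprise_now = current_share
    other_later = (1 - current_share) * (1 + other_growth)
    # target = E / (E + other_later)  =>  E = target * other_later / (1 - target)
    enterprise_later = target_share * other_later / (1 - target_share)
    return enterprise_later / enterprise_now - 1


if __name__ == "__main__":
    # Scenario values for non-enterprise growth are hypothetical.
    for other in (0.0, 0.2):
        growth = required_enterprise_growth(0.40, 0.50, other_growth=other)
        print(f"other revenue +{other:.0%} -> enterprise must grow {growth:.0%}")
```

Under these assumptions, moving from a 40% to a 50% share requires enterprise revenue to grow by half even if all other revenue stays flat, and by more if other revenue keeps growing.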

What is OpenAI's Frontier Alliance, and how does it reshape enterprise AI adoption?

The Frontier Alliance represents OpenAI's strategic shift from pure model provision to hands-on implementation services. The initiative pairs OpenAI engineers directly with enterprise customers, alongside major consulting partners including Boston Consulting Group (BCG), McKinsey & Company, Accenture, and Capgemini, to deploy AI agents into core business workflows. This embedded approach addresses what OpenAI identifies as the critical bottleneck in enterprise AI: the engineering and operational work required to build, run, and integrate agents, rather than raw model capabilities. Organizations receive support for agent engineering, permissions design, context plumbing, and evaluation frameworks through multi-year partnerships designed to move deployments from pilot to production scale.

Which enterprises are participating in Frontier initiatives, and what are OpenAI's revenue targets?

Early adopters of the Frontier platform include major organizations such as HP, Intuit, Oracle, State Farm, Thermo Fisher Scientific, and Uber, with additional pilots underway at BBVA, Cisco, and T-Mobile. State Farm's Chief Digital Information Officer Joe Park notes that combining OpenAI's deployment expertise with existing capabilities accelerates AI adoption and creates new ways to serve customers. Financially, OpenAI currently derives approximately 40% of its revenue from enterprise customers and is targeting 50% by the end of 2026, signaling a major bet that organizations will pay premium rates for operational expertise rather than just API access.

What risks should enterprises evaluate before embedding OpenAI engineers into their operations?

Deep integration with an AI model provider introduces strategic risks that many organizations actively try to avoid, and a significant portion of enterprises report that existing vendor lock-in has already hindered their ability to adopt better tools. Key concerns include data security vulnerabilities, both from training-data usage that customers cannot verify and from shadow AI, where unsanctioned tools create uncontrolled data flows. Lock-in risk compounds when agents run on proprietary orchestration layers, because the resulting dependence on provider-specific model behavior does not transfer cleanly to another provider. Additionally, governance fragmentation and unclear accountability structures raise compliance concerns, particularly when AI systems influence regulated decisions without formal contractual frameworks defining liability.

How does OpenAI's Frontier Alliance compare to Anthropic's enterprise strategy?

While OpenAI pursues breadth through consulting ecosystems, Anthropic competes through depth in agentic workflows and multi-cloud flexibility. Anthropic offers direct engineering access for enterprise tiers and emphasizes safety-critical applications through partnerships with AWS Bedrock and Google Vertex AI, without locking customers into a single cloud. Where OpenAI emphasizes custom fine-tuning capabilities, Anthropic focuses on long-context reasoning and transparent safety measures. Industry reports suggest that a growing number of organizations with mature AI programs now use multiple providers simultaneously, with the OpenAI-Anthropic pairing becoming a common pattern as enterprises seek to balance capability breadth with operational resilience.

Why is OpenAI transitioning from model provider to implementation partner?

The strategic pivot reflects a fundamental industry realization: the primary barrier to capturing AI value is not model intelligence but the operational work required to deploy agents effectively. Enterprises struggle with the context plumbing, permissions architecture, and evaluation frameworks needed to turn promising pilots into production systems. By embedding engineers directly into client organizations, OpenAI is betting that enterprises will pay model providers for deployment and operational expertise. This services-led approach accelerates time-to-value while creating tighter customer relationships, though it requires careful management of the inherent tension between vendor support and long-term strategic independence.