OpenAI: AI Writes 80% of Production Code, Up From 20%

Serge Bulaev

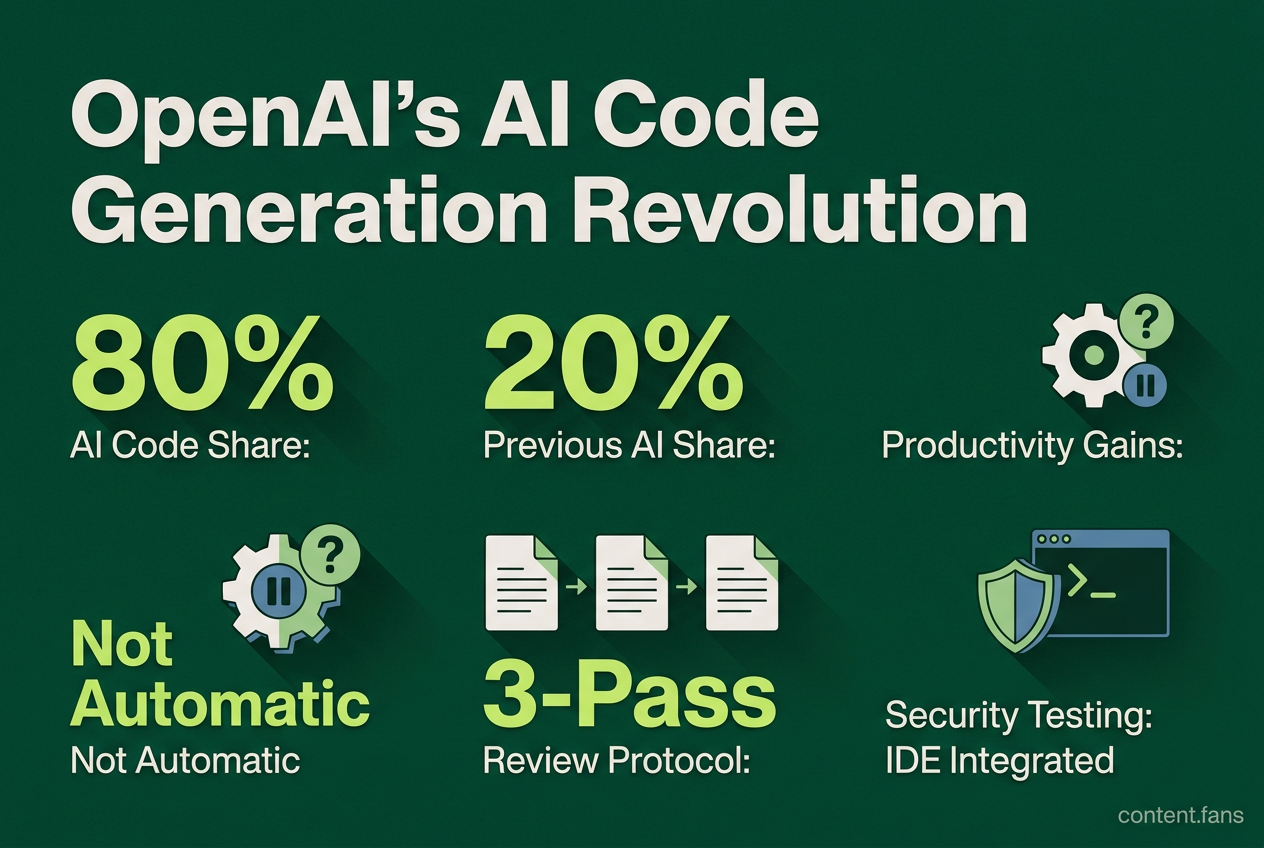

OpenAI President Greg Brockman said in May 2026 that AI now writes about 80 percent of the company's production code, up from 20 percent five months earlier, though the exact meaning of this figure is unclear. This shift means engineers spend more time on designing prompts and reviewing code rather than writing it from scratch, and senior engineers now supervise AI work while fewer junior roles are needed. Some studies suggest most companies using AI in software have not seen big productivity gains, and there are concerns about risks like bugs or accidents from less oversight. Experts recommend strong review processes and clear rules to manage these risks, and there are worries that fewer entry-level jobs may hurt long-term skills development. Overall, while AI may help produce more code, it also requires new ways to ensure quality, safety, and good team structure.

At OpenAI, AI now writes about 80 percent of production code, up from roughly 20 percent five months earlier, according to President Greg Brockman. The Next Web points out that the metric is ambiguous (it could count lines of code or tasks in which AI was involved), but the direction is clear: engineers are moving from writing code to reviewing it.

Inside OpenAI, developers now focus on high-level prompt engineering, system architecture, and rigorous review cycles instead of manual coding. This evolution transforms programming into a supervisory role, presenting a new paradigm for engineering teams adopting advanced agentic tools.

The New Engineering Team: AI's Impact on Roles

Industry reports reflect a dramatic restructuring of engineering teams. Senior engineers are shifting into "orchestrator" roles, tasked with vetting AI-generated logic, scalability, and security, while opportunities for junior developers diminish. An MIT Technology Review analysis noted teams replacing many junior developers with fewer senior engineers and AI agents.

AI code generation is reshaping software engineering by elevating senior developers into review and architectural roles while reducing the need for entry-level coders. This model requires a new focus on prompt engineering and system oversight, turning the traditional development process into a human-AI collaborative workflow.

However, broader industry data presents a more nuanced picture. A February 2026 NBER survey found that most firms using generative AI for software saw no significant productivity gains. Similarly, industry research indicates typical software development life cycle (SDLC) improvements are more modest than initially expected. These findings suggest that productivity benefits are not automatic; they are realized only when organizations fundamentally redesign their workflows around AI-driven output, rather than simply adding AI tools to existing processes.

Redefining Quality Assurance for AI-Generated Code

The surge in AI-generated code volume introduces a parallel surge in potential defects. A "database wipeout incident" cited by India Today, reportedly caused by an under-supervised AI script, underscores the risks. To mitigate this, a new consensus on quality assurance is emerging. Experts advocate for a rigorous three-pass review protocol targeting architecture, logic, and security. Furthermore, Veracode's 2026 guidance recommends integrating static application security testing (SAST) directly into the IDE, enabling developers to catch vulnerabilities at the point of code generation, long before a merge request is created.

Practical Safeguards for AI Code Integration

To safely integrate AI coding assistants, engineering teams are adopting a new set of best practices for governance and security:

- Establish Clear Guardrails: Document coding styles, anti-patterns, and tool-specific rules in shared files like `CLAUDE.md` or `.cursorrules` (GroovyWeb).

- Enhance Pull Request Transparency: Mandate that every pull request include the prompts used to generate code and a rationale for the approach (Exceeds.ai).

- Ensure Traceability: Tag all AI-generated code blocks to maintain clear audit trails and simplify debugging (Exceeds.ai).

- Automate Security Gates: Integrate mandatory SAST, Software Composition Analysis (SCA), and secret scanning into the CI/CD pipeline to block vulnerabilities before they reach production (Growexx, Veracode).

- Combat Technical Debt: Require AI tools to generate corresponding edge-case tests alongside new features to prevent the accumulation of silent bugs (GroovyWeb).
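The pull request transparency rule above lends itself to an automated check. What follows is a minimal Python sketch, assuming the prompts and rationale live under markdown headings named "Prompts" and "Rationale" in the PR description. Those heading names and the function `missing_pr_sections` are hypothetical; the cited sources do not prescribe a layout.

```python
import re

# Hypothetical section names: the guidance only says prompts and a rationale
# must be present, not how they are laid out in the description.
REQUIRED_SECTIONS = ("Prompts", "Rationale")


def missing_pr_sections(description: str) -> list[str]:
    """Return the required sections that are absent or empty in a PR description."""
    missing = []
    for name in REQUIRED_SECTIONS:
        # Match a markdown heading such as "## Prompts" and capture everything
        # up to the next heading or the end of the text.
        pattern = rf"^#+[ \t]*{re.escape(name)}[ \t]*$(.*?)(?=^#+\s|\Z)"
        match = re.search(
            pattern, description, flags=re.MULTILINE | re.DOTALL | re.IGNORECASE
        )
        if match is None or not match.group(1).strip():
            missing.append(name)
    return missing


if __name__ == "__main__":
    description = (
        "## Prompts\nAdd retry logic to the uploader\n\n"
        "## Rationale\nTransient network errors were failing uploads\n"
    )
    # A CI job could fail the build whenever this list is non-empty.
    print(missing_pr_sections(description))
```

A check like this only confirms that the sections exist and are not blank; whether the recorded prompts are accurate still depends on reviewer judgment.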

The Impact on Workforce and Education

The efficiency gains from AI are restructuring the tech workforce. The Pragmatic Engineer newsletter notes that a team of four developers using agentic tools can match the output of a traditional ten-person team. This shift prioritizes high-level skills like system design, complex debugging, and deep domain expertise over basic coding. In response, educational programs are rapidly adapting. Bootcamps are replacing syntax drills with curricula focused on AI fluency, code review, and prompt engineering, preparing junior candidates for a new set of day-one expectations. However, industry observers warn that the decline in entry-level positions could create a long-term skills gap, as fewer engineers will have the opportunity to learn complex systems from the ground up.

The Future: Security, Governance, and Human Oversight

As AI's role expands, robust governance becomes as critical as the AI's capability itself, a lesson learned from OpenAI's own experiments with AI-generated codebases. Industry best practices emphasize the need for centralized toolchain approval, baseline performance measurement before AI adoption, and regular audits of tool usage. Critics like Gary Marcus rightly argue that simply passing unit tests is not a guarantee of software safety. Separately, studies showing only modest productivity gains in many enterprises suggest that the benefits are not universal. Consequently, engineering leaders must balance the drive for increased throughput with the critical need for expanded human oversight, including rigorous code reviews, comprehensive security scanning, and strong architectural guardrails. This ensures that automated code generation enhances, rather than compromises, system integrity.

What exactly did OpenAI disclose about AI-written code, and how reliable are the figures?

During a May 2026 talk at Sequoia's AI Ascent conference (reported by Business Insider), OpenAI President Greg Brockman stated that AI now writes about 80 percent of the company's production code, up from 20 percent five months earlier.

- The company describes the jump as an inflection point where AI moves from "sideshow" to "main thing".

- Ambiguity persists: the figures may mean lines of code, commits, or tasks touched by AI, not necessarily full replacement.

- No court testimony currently ties these figures to the ongoing OpenAI-Elon Musk litigation.

How are software engineering roles changing because of AI code generation?

- Junior roles are shrinking fast: teams that once hired many juniors now operate with fewer senior engineers plus AI agents.

- Senior engineers become orchestrators, focusing on prompt engineering, architecture, and final review rather than keystrokes.

- Oversight becomes critical: OpenAI runs internal experiments where AI-generated codebases are only allowed if humans refine prompts and documentation.

- Career paths shift toward systems thinking, domain expertise, and AI-tool governance.

What new QA processes are teams adopting for AI-generated code?

Leading teams treat AI output as third-party code and layer extra gates around it:

| Traditional QA Step | AI-Specific Addition |
|---|---|
| Code review | Mandatory three-pass protocol: architecture fit, logic trace, security scan |
| Unit testing | AI must generate edge-case tests together with the implementation |
| CI/CD | Extra pipeline stage: IDE-integrated SAST before merge (Veracode 2026 guide) |
| Documentation | AI code annotation tags every generated block for traceability |

Result: review volume rises, but depth concentrates on high-level correctness and security rather than style or typos.
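One way to picture the three-pass protocol is as a merge gate that stays closed until each pass has a named reviewer. The Python sketch below is illustrative only: the `ReviewRecord` class, its methods, and the PR identifier are assumptions made for this example, not a description of any team's actual tooling.

```python
from dataclasses import dataclass, field

# The three passes named in the protocol, in the order they are listed.
PASSES = ("architecture", "logic", "security")


@dataclass
class ReviewRecord:
    """Tracks which review passes a pull request has cleared (hypothetical model)."""

    pr_id: str
    approvals: dict[str, str] = field(default_factory=dict)  # pass name -> reviewer

    def approve(self, pass_name: str, reviewer: str) -> None:
        if pass_name not in PASSES:
            raise ValueError(f"unknown review pass: {pass_name}")
        self.approvals[pass_name] = reviewer

    def pending(self) -> list[str]:
        # Passes that still lack a sign-off, in protocol order.
        return [p for p in PASSES if p not in self.approvals]

    def can_merge(self) -> bool:
        return not self.pending()


if __name__ == "__main__":
    record = ReviewRecord("PR-EXAMPLE")
    record.approve("architecture", "senior-a")
    record.approve("logic", "senior-b")
    print(record.pending(), record.can_merge())
```

Keeping the passes as separate sign-offs makes it visible when a change was merged after only a style-level look, which is the failure this protocol is meant to prevent.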

What security pitfalls appear when machines write most of the codebase?

- Silent debt: without rigorous review, repositories fill with "unnecessary abstractions" that later become attack surfaces (Rootstack 2026).

- Secret sprawl: a significant portion of developers were found using unsanctioned AI tools with personal accounts, risking credential leaks (Growexx 2026).

- Validation illusion: code that compiles and passes unit tests can still harbor SQL-injection or XSS vectors missed by naive AI tests.
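The validation illusion is easy to reproduce. The following Python sketch uses the standard-library `sqlite3` module with an in-memory database; the table, the function names, and the payload are invented for illustration. Both lookup functions pass the same happy-path test, yet only one of them resists a classic injection string.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database with two example users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "admin"), ("bob", "viewer")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Vulnerable: user input is spliced directly into the SQL text.
    query = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Parameterized: the value is bound separately from the SQL text.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = make_db()

# A naive unit test: both implementations pass.
assert find_user_unsafe(conn, "bob") == [("bob", "viewer")]
assert find_user_safe(conn, "bob") == [("bob", "viewer")]

# A hostile input shows the difference the naive test missed.
payload = "x' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # 2: every row is returned
print(len(find_user_safe(conn, payload)))    # 0: payload is treated as a literal name
```

A test suite generated alongside the implementation tends to cover inputs like "bob" and not inputs like the payload, which is why a separate security pass remains necessary.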

Mitigation checklist applied at each PR:

- OWASP Top-10 scan

- Automated secret detection

- SBOM publication to track AI-introduced dependencies
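As a rough illustration of the automated secret detection item, the sketch below scans text line by line with a few regular expressions. The rule names and patterns are assumptions chosen for this example and are far from exhaustive; a production pipeline would rely on a maintained scanner rather than a hand-written list.

```python
import re

# Illustrative patterns only; real scanners ship much larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(
        r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"
    ),
    "hardcoded_credential": re.compile(
        r"(?i)\b(?:api[_-]?key|secret|token|password)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line number, rule name) for every line that matches a pattern."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, rule))
    return findings


if __name__ == "__main__":
    sample = (
        'name = "demo"\n'
        'api_key = "abcd1234efgh5678"\n'
        'key = "AKIAIOSFODNN7EXAMPLE"\n'
    )
    for lineno, rule in scan_text(sample):
        print(f"line {lineno}: {rule}")
```

Run against the diff of each pull request, a check of this kind can block a merge when the findings list is non-empty, at the cost of occasional false positives that reviewers must dismiss.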

How should universities and bootcamps adapt their curricula for an AI-first era?

Bootcamps are pivoting away from syntax drills to prompt engineering, code-review labs, and domain storytelling.

- New core skills: AI-fluency, systems design, performance tuning, and security review.

- Compression of learning: instead of 12-week "from scratch" courses, programs teach "from prompt to production" in 4-6 weeks.

- Experiment tracks: students practice controlling AI projects under senior supervision to understand failure modes early.

Early data from Stanford shows that employment among developers aged 22-25 has dropped significantly since 2022 as AI absorbed entry-level tasks, underscoring the urgency for curriculum change (MIT Technology Review 2025).