OpenAI: AI Writes 80% of Our Code, Humans Still Review Every Line

Serge Bulaev

OpenAI says that AI now writes about 70-80% of its code, but humans still review and approve every change. Other tech companies, including Google and Meta, also report high shares of AI-generated code, which points to a big shift in how software is created. Some studies suggest that AI-generated code may contain more security issues and defects, so human checks remain important. European rules are expected to require companies to keep records and manage risks for AI-made code. Developer jobs may change, with more focus on planning and checking code while AI does more of the actual writing.

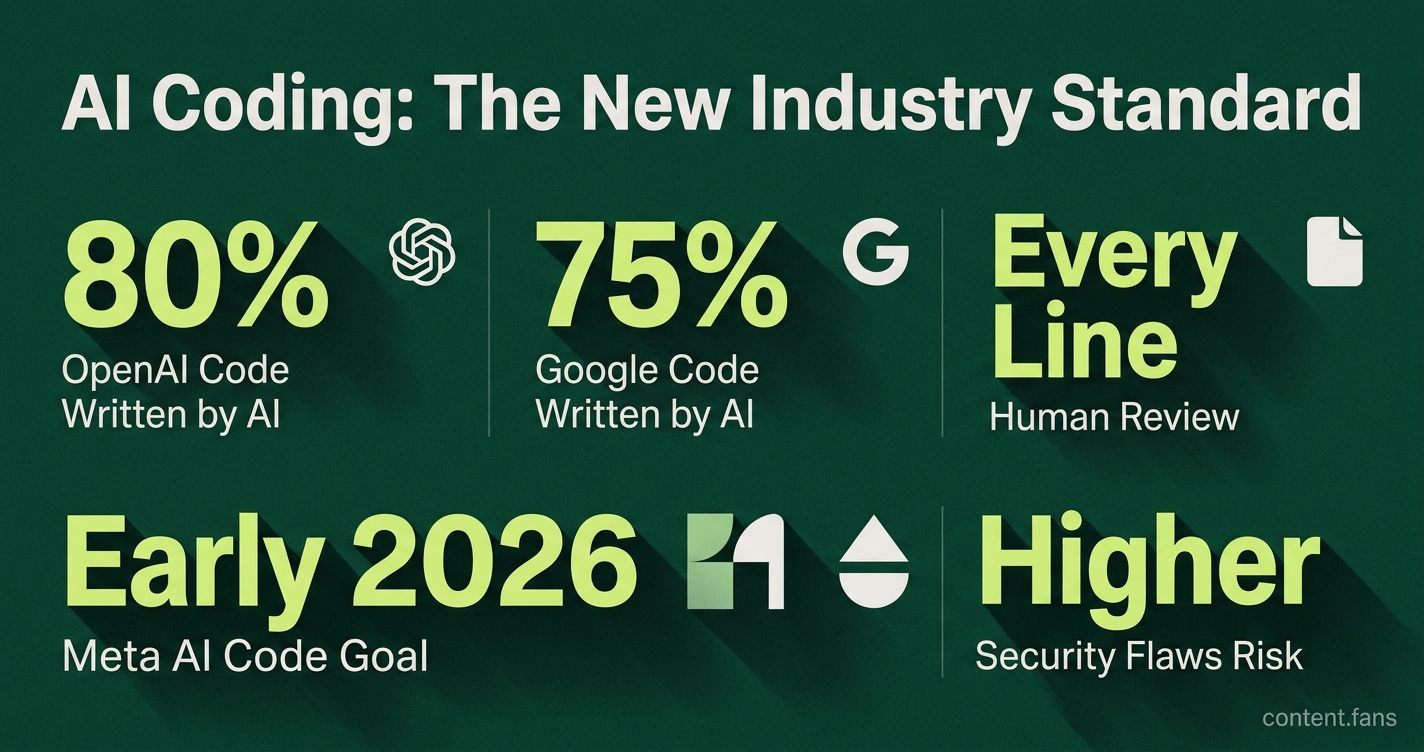

At OpenAI, AI writes most of the code, but human engineers still review every line before it is merged. OpenAI President Greg Brockman described the jump in AI-assisted development, saying the company's tools went from writing a small portion of the code to the vast majority of it in a single month, according to a Business Insider report. Despite this automation, he stressed that a human remains accountable for every change, ensuring quality and oversight.

OpenAI's announcement reflects a broader industry shift toward AI-first development. Google reports that AI generates approximately three-quarters of its new code, and Meta anticipates that a significant portion of its engineers will primarily commit AI-written code by early 2026. This trend signals that automated coding is rapidly becoming the industry standard, not just an experiment.

Why heavy AI code generation worries security teams

The speed gain comes with a condition. At this volume of AI-generated code, stringent human oversight is essential, which is why every line is still reviewed and approved by an engineer before it ships.

While OpenAI has not released internal defect data, independent research highlights potential quality concerns. Industry reports suggest AI-generated code can introduce significantly more security flaws and defects than human-written code. Unmonitored, this can lead to increased static analysis warnings and substantial technical debt accumulation, underscoring the necessity of the mandatory human review Brockman emphasized.

Regulators are also taking notice. The EU AI Act, whose obligations are being phased in, requires detailed logging, risk management, and documented human oversight for high-risk AI systems. To stay compliant and avoid significant fines, experts recommend adopting agent registries and policy-as-code controls proactively.

Emerging guardrails inside engineering workflows

To manage these risks, many enterprises are adopting structured rollout playbooks for AI coding tools. Industry sources describe several 90-day structures:

- Visibility → Policy → Enforcement

- Baseline audit → Agent-readable files → Session hooks → Classification and routing → Secrets gating → Audit telemetry

- Readiness → Stakeholder alignment → Tool deployment → Integration → Measurement

These frameworks help organizations roll out AI coding tools systematically while maintaining security and quality standards.

While OpenAI hasn't detailed its specific internal workflows, Brockman's focus on accountability - "we still want a human to be accountable" - is consistent with the critical final review step in these emerging frameworks.

Shifting roles for developers

The rise of AI code generation is fundamentally reshaping the role of the software developer. Industry analysts predict that a significant portion of developers will shift their focus from writing code to higher-level tasks like system architecture, integration, and product strategy. This creates new career paths where engineering judgment and oversight are valued more than raw coding output.

The job market is already reflecting this evolution. Postings for machine learning and MLOps roles are increasing while generic software engineering positions decline. Companies are prioritizing talent that can manage AI quality and security, suggesting that human-led quality assurance is becoming a critical function, potentially replacing some traditional entry-level coding tasks.

Ultimately, OpenAI's substantial reliance on AI-generated code is more than just a statistic; it marks a pivotal moment in software development. As AI transitions from a helpful assistant to the primary author of code, the role of human engineers is becoming more crucial than ever - not as typists, but as the architects, guardians, and final arbiters of machine-generated systems.

What exactly did Greg Brockman say about OpenAI's AI-generated code share?

At a Sequoia Capital event, Brockman shared that "over the course of December we went from these agentic coding tools writing a small portion of your code to writing the vast majority of your code", turning AI from a "sideshow" into the "main thing" developers work with. He also emphasized that every merged line is still reviewed and signed off by a human to keep accountability intact.

How does OpenAI's AI-code ratio compare with other tech leaders?

OpenAI is now in the same bracket as Google, whose CEO reported that a significant majority of new code is AI-generated. Meta's Creation Org expects that a substantial portion of its engineers will commit primarily AI-written code by H1 2026. The numbers suggest that AI-first development has quietly become the norm, not the exception, among large AI-focused companies.

What new risks appear when most code is written by models?

Industry reports suggest that AI-generated code carries significantly more security flaws and is more likely to contain correctness bugs than human-written code. A growing number of breaches in production systems have been traced back to model-suggested snippets. Because models can produce large diffs in seconds, review bandwidth becomes the new bottleneck, and technical debt can accumulate much faster if governance lags behind adoption.

How are human engineers reshaping their day-to-day work?

Developers are shifting from typing syntax to orchestrating multi-agent workflows: one model writes, another critiques, a third generates tests. Many developers expect their role to be re-defined, moving toward system design, integration and AI oversight. Junior hiring has declined while senior headcount is steady, illustrating that judgment-heavy tasks are gaining value and routine implementation is being automated away.
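The write, critique, and test hand-off described above can be pictured with a small sketch. This is an illustration under assumptions, not any company's actual workflow: the `Draft` type, `run_pipeline`, `sign_off`, and `is_mergeable` are hypothetical names, and the three "agents" are plain callables standing in for model calls.

```python
from dataclasses import dataclass, replace
from typing import Callable

@dataclass(frozen=True)
class Draft:
    """One proposed change moving through the pipeline (illustrative fields)."""
    code: str
    critique: str = ""
    tests: str = ""
    approved_by: str = ""  # stays empty until a human signs off

Agent = Callable[[str], str]  # hypothetical stand-in for a model call

def run_pipeline(task: str, writer: Agent, critic: Agent, test_writer: Agent) -> Draft:
    """One agent writes, a second critiques, a third generates tests."""
    code = writer(task)
    return Draft(code=code, critique=critic(code), tests=test_writer(code))

def sign_off(draft: Draft, reviewer: str) -> Draft:
    """Record the accountable human reviewer on a copy of the draft."""
    return replace(draft, approved_by=reviewer)

def is_mergeable(draft: Draft) -> bool:
    """Nothing merges without a named human approver."""
    return bool(draft.approved_by)
```

In this sketch the agents never set `approved_by` themselves, so the human sign-off remains a separate, explicit step no matter how many models touch the change.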

Which concrete guardrails help teams keep quality high?

Leading teams combine IDE-integrated security scanners, multi-agent review loops and policy-as-code gates inside CI/CD. One common implementation structure has three phases:

- Visibility phase: audit tool usage, tag AI vs human commits, block shadow AI accounts

- Policy phase: enroll only approved models, require human sign-off, log agent decisions

- Enforcement phase: run automated remediation, simulate audits and publish documentation for every release

These steps help maintain code quality even as output volume grows significantly, and they prepare companies for evolving regulatory requirements around AI system documentation.
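As a rough illustration of a policy-as-code gate, the sketch below checks a change's metadata against three rules drawn from the phases above: approved models only, mandatory human sign-off, and no open scanner findings. Everything here is an assumption made for the example, including the `Change` fields, the `APPROVED_MODELS` allow-list, and the model names; a real gate would read this data from the CI system and a versioned policy file.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical allow-list; the model names are placeholders.
APPROVED_MODELS = {"model-a", "model-b"}

@dataclass
class Change:
    """Metadata for one proposed merge (all field names are illustrative)."""
    author_kind: str                      # "ai" or "human"
    model: Optional[str] = None           # model that produced the change, if any
    human_approvers: List[str] = field(default_factory=list)
    scanner_findings: int = 0             # open findings from a security scanner

def evaluate(change: Change) -> List[str]:
    """Return policy violations; an empty list means the gate passes."""
    violations = []
    if change.author_kind == "ai" and change.model not in APPROVED_MODELS:
        violations.append("unapproved model")
    if not change.human_approvers:
        violations.append("missing human sign-off")
    if change.scanner_findings > 0:
        violations.append("open security findings")
    return violations

def log_decision(change: Change) -> dict:
    """Build an audit record of the gate decision for later review."""
    violations = evaluate(change)
    return {
        "author_kind": change.author_kind,
        "model": change.model,
        "approvers": list(change.human_approvers),
        "allowed": not violations,
        "violations": violations,
    }
```

Returning the full list of violations, instead of stopping at the first one, gives reviewers a complete picture in a single CI run, and the audit record supports the kind of documentation that regulators may ask for.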