New FinOps Tools Track AI Token Spend Like Payroll

Serge Bulaev

Finance teams are starting to use special tools to track every AI request, a process that may soon look like tracking payroll. Analysts suggest these tools are needed because current cloud billing does not show costs per feature or user. New products offer dashboards, alerts, and ways to see which teams use the most tokens and where the money goes. Experts say these tools might help prevent surprise bills and show which AI features bring in revenue. There are still some gaps in the tools, but early users report that being able to see costs clearly already helps manage spending.

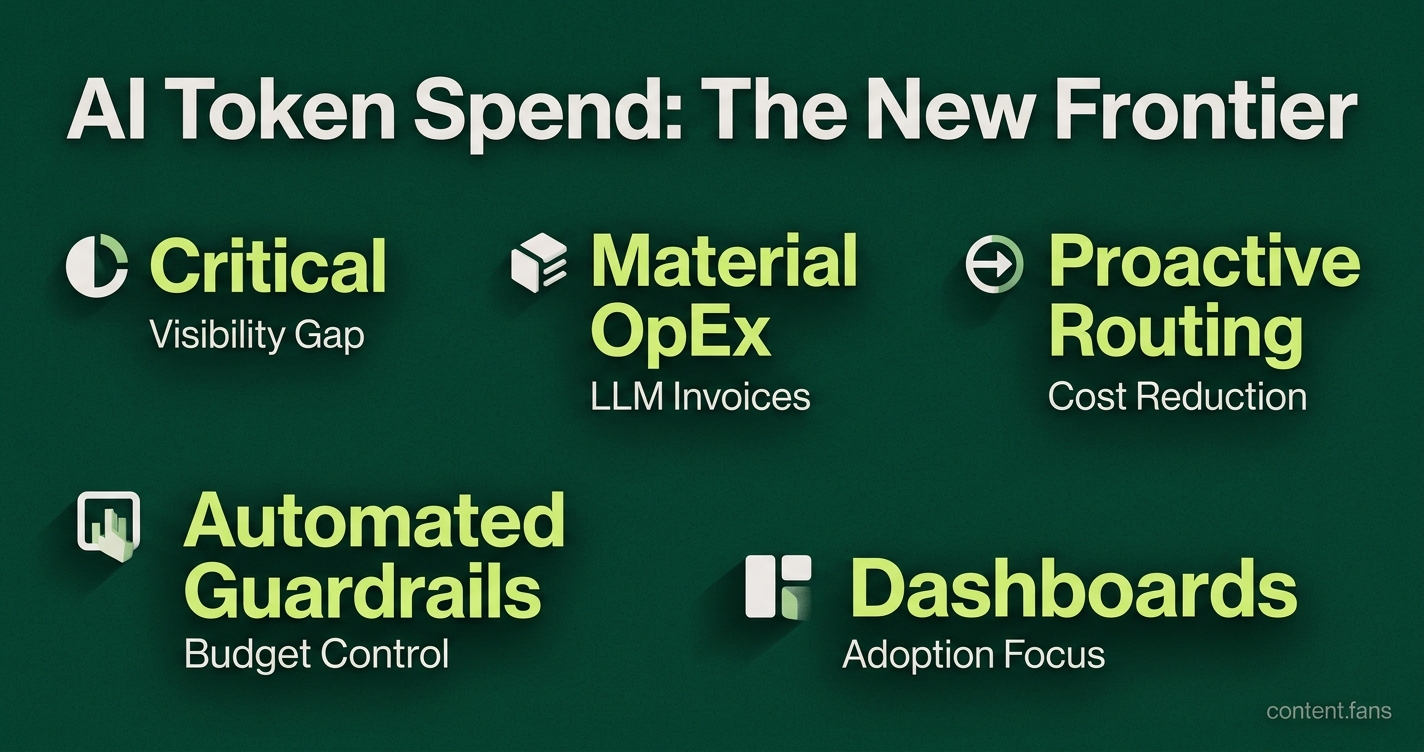

New FinOps tools help teams track AI token spend with payroll-like precision, solving a critical visibility gap in cloud cost management. As AI usage evolves from isolated experiments into core business functions, LLM invoices are becoming a material operating expenditure. The first wave of dedicated products provides dashboards, allocation, and unit economics, allowing managers to govern this new category of compute spend as a measurable resource.

Demand for these tools is surging because native cloud consoles do not offer granular cost breakdowns per feature or user. For example, Vantage allows customers to connect their OpenAI and Anthropic accounts and see detailed token-based metrics across different models and requests. Similarly, Finout emphasizes virtual tagging across complex environments, noting that conventional FinOps platforms face challenges with token-based API billing models.

Token-Finance Dashboards Reach Production

These token-finance platforms connect directly to AI provider accounts, like OpenAI and Anthropic, to ingest billing data. They then display token burn rates, attribute costs to specific teams or features, and send automated alerts to prevent budget overruns, offering previously unavailable granular visibility into AI expenditure.

Initial enterprise adoption focuses on dashboards for monitoring daily token consumption and detecting cost spikes. While smaller teams may still use native provider billing pages, mid-market organizations are adopting unified platforms to consolidate multi-vendor data. These tools can send variance alerts directly to Slack and enable automated guardrails that freeze runaway processes when spending exceeds preset thresholds.
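The alert-then-freeze behavior described above can be sketched as a simple threshold check. This is a minimal illustration, not any vendor's implementation: the `Budget` type, the 80% alert fraction, and the dollar figures are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    OK = "ok"
    ALERT = "alert"    # e.g. post a variance notice to a chat channel
    FREEZE = "freeze"  # e.g. suspend the process or API key


@dataclass(frozen=True)
class Budget:
    daily_limit_usd: float        # hypothetical preset threshold
    alert_fraction: float = 0.8   # assumed: warn at 80% of the limit


def check_budget(spent_usd: float, budget: Budget) -> Action:
    """Return the guardrail action for the spend accumulated so far today."""
    if spent_usd >= budget.daily_limit_usd:
        return Action.FREEZE
    if spent_usd >= budget.daily_limit_usd * budget.alert_fraction:
        return Action.ALERT
    return Action.OK


budget = Budget(daily_limit_usd=500.0)
for spent in (120.0, 430.0, 510.0):
    print(f"${spent:.2f} spent -> {check_budget(spent, budget).value}")
```

In practice the `ALERT` and `FREEZE` branches would call whatever notification and key-management hooks the chosen platform exposes; those integrations are left out here because they differ by vendor.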

A second layer of tooling focuses on optimizing efficiency. Dashboards identify which applications or teams are using expensive models for simple tasks, nudging developers toward more cost-effective model versions or context windows. According to industry reports, proactive model routing can reduce LLM spending by directing routine tasks like summarization to more appropriate model tiers.
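To make the routing idea concrete, the sketch below compares a workload sent entirely to a premium tier against the same workload with routine tasks routed to a cheaper tier. The model names, the per-1,000-token prices, and the list of "routine" task types are hypothetical placeholders, not real vendor rates.

```python
from typing import Dict, List, Tuple

# Hypothetical prices in USD per 1,000 tokens (not real vendor rates).
PRICE_PER_1K: Dict[str, float] = {
    "premium-model": 0.03,
    "economy-model": 0.002,
}

# Assumed set of task types considered safe for the cheaper tier.
ROUTINE_TASKS = {"summarization", "classification", "extraction"}


def route(task_type: str) -> str:
    """Pick a model tier based on the task type."""
    return "economy-model" if task_type in ROUTINE_TASKS else "premium-model"


def cost_usd(model: str, tokens: int) -> float:
    """Cost of a request given its total token count."""
    return tokens / 1000 * PRICE_PER_1K[model]


# (task type, tokens consumed) for an illustrative workload.
workload: List[Tuple[str, int]] = [
    ("summarization", 40_000),
    ("code_review", 10_000),
    ("classification", 50_000),
]

baseline = sum(cost_usd("premium-model", tokens) for _, tokens in workload)
routed = sum(cost_usd(route(task), tokens) for task, tokens in workload)

print(f"all premium: ${baseline:.2f}")
print(f"with routing: ${routed:.2f}")
```

The size of the saving depends entirely on the price gap and on how much of the workload is routine, so the numbers here should be read as an illustration of the mechanism rather than an expected result.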

From Dashboards to Value Attribution

Beyond cost control, executive boards now demand to know which AI initiatives are driving revenue. Advanced tools provide allocation engines that map token spend back to specific products or SKUs, even without native provider tags, offering a clear view of gross margins. As AI budgets shift from central IT to line-of-business operating expenses, these FinOps tools must integrate with ERP and BI systems to give CFOs a complete picture of AI's impact on financial performance.
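A rough sketch of such an allocation step follows. It assumes spend can be attributed by API key, uses a hypothetical key-to-product mapping in place of native provider tags, and computes a margin that counts token cost only. The keys, products, and revenue figures are invented for illustration.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical "virtual tags": API key -> product.
KEY_TO_PRODUCT: Dict[str, str] = {
    "key-a": "search",
    "key-b": "support-bot",
}


def allocate(usage: List[Tuple[str, float]]) -> Dict[str, float]:
    """Sum cost per product; unknown keys land in an 'unallocated' bucket."""
    totals: Dict[str, float] = defaultdict(float)
    for api_key, cost in usage:
        totals[KEY_TO_PRODUCT.get(api_key, "unallocated")] += cost
    return dict(totals)


def gross_margin(revenue_usd: float, cost_usd: float) -> float:
    """Margin as a fraction of revenue, counting token cost only."""
    return (revenue_usd - cost_usd) / revenue_usd


# (API key, cost in USD) rows as they might appear in a billing export.
usage = [("key-a", 120.0), ("key-b", 300.0), ("key-a", 80.0), ("key-z", 25.0)]
revenue = {"search": 1000.0, "support-bot": 400.0}  # illustrative figures

spend = allocate(usage)
for product, rev in revenue.items():
    margin = gross_margin(rev, spend.get(product, 0.0))
    print(f"{product}: spend ${spend.get(product, 0.0):.2f}, margin {margin:.0%}")
print(f"unallocated: ${spend.get('unallocated', 0.0):.2f}")
```

Keeping an explicit `unallocated` bucket is a deliberate choice: it makes untagged spend visible instead of silently spreading it across products.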

An emerging approach involves structured investment allocation: dedicating the majority of AI spend to proven workflows, a significant portion to promising candidates for scaling, and a smaller allocation to pure experimentation. This framework helps leaders instrument ROI with precision and prevents budget fragmentation across unproven initiatives.

Core Features for AI Financial Governance

Finance leaders are prioritizing a core set of features for managing AI spend:

- Token Dashboards: Visualizing hourly consumption against budget caps.

- Efficiency Tracking: Ranking teams by cost per token relative to the output they produce.

- Anomaly Detection: Providing sub-hourly alerts via Slack or other platforms.

- Cost Allocation: Mapping spend directly to products, features, or customers.
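As one illustration of the anomaly-detection item, the sketch below flags any hour whose spend exceeds a multiple of the trailing median. The window size, the multiplier, and the sample data are assumptions; commercial tools may use different and more sophisticated methods.

```python
from statistics import median
from typing import List


def find_spikes(hourly_usd: List[float], window: int = 6, factor: float = 3.0) -> List[int]:
    """Return indexes of hours whose spend exceeds factor x the trailing median."""
    spikes: List[int] = []
    for i in range(window, len(hourly_usd)):
        baseline = median(hourly_usd[i - window:i])
        # Skip hours with no baseline spend to avoid flagging everything.
        if baseline > 0 and hourly_usd[i] > factor * baseline:
            spikes.append(i)
    return spikes


# Illustrative hourly spend in USD, with one runaway hour at index 7.
hourly = [10.0, 12.0, 11.0, 9.0, 10.0, 11.0, 12.0, 95.0, 11.0, 10.0]
print(find_spikes(hourly))
```

A median is used instead of a mean so that a single spike does not inflate the baseline and mask the hours that follow it.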

Current products still have limitations, such as weak support for complex contract data and agent-to-agent payments, but early adopters confirm that even basic visibility is enough to prevent major invoice shocks. As AI adoption accelerates, these dashboards and attribution modules are expected to become as standard as cloud cost explorers.

What makes AI token spend harder to control than traditional cloud costs?

AI token charges are usage-based and scale with every prompt, retry, and larger context window. Unlike provisioned VMs, whose cost is set when capacity is reserved, token spend is not bounded by provisioned capacity: a single developer can burn thousands of dollars in a weekend by accidentally looping calls to a premium model.

- Enterprise LLM spend is growing rapidly across organizations, with many companies reporting significant increases in AI-related expenses.

- Because engineering teams self-serve without finance visibility, invoice shocks surface 60-90 days later.
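The arithmetic behind a runaway loop is simple enough to sketch. The example assumes a hypothetical flat price per 1,000 tokens and a loop whose context may grow by a fixed amount on each call; real pricing usually distinguishes input and output tokens and varies by model.

```python
# Hypothetical flat price in USD per 1,000 tokens (not a real vendor rate).
PRICE_PER_1K_USD = 0.03


def loop_cost(base_tokens: int, growth_per_call: int, calls: int) -> float:
    """Total cost of `calls` requests where call i uses base + i * growth tokens."""
    total_tokens = sum(base_tokens + growth_per_call * i for i in range(calls))
    return total_tokens / 1000 * PRICE_PER_1K_USD


# A stuck job re-sending an 8,000-token prompt 10,000 times over a weekend.
print(f"fixed context: ${loop_cost(8_000, 0, 10_000):,.2f}")

# Four calls where each retry appends 500 tokens of history to the context.
print(f"growing context: ${loop_cost(1_000, 500, 4):.2f}")
```

Under these assumed numbers the fixed-context loop alone reaches a four-figure bill, which is why per-request cost looks negligible until it is multiplied by call volume.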

Which early tools already give finance teams token-level visibility?

Vantage and Finout have moved fastest, ingesting native bills from OpenAI and Anthropic side-by-side with AWS, Azure, and GCP.

- Vantage creates unit metrics such as cost per token per user session and surfaces per-model dashboards.

- Finout adds virtual tagging to map spend back to teams when providers lack native labels, plus sub-hourly anomaly alerts that catch runaway agents before they blow the monthly budget.
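A unit metric such as cost per user session can be derived from request-level records along the lines below. The record layout, session IDs, model names, and costs are invented for the example and do not reflect any platform's actual export schema.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

# (session id, model, cost in USD) per request; illustrative data only.
records: List[Tuple[str, str, float]] = [
    ("s1", "premium-model", 0.30),
    ("s1", "premium-model", 0.10),
    ("s2", "premium-model", 0.20),
    ("s3", "economy-model", 0.02),
    ("s4", "economy-model", 0.04),
]


def cost_per_session(rows: List[Tuple[str, str, float]]) -> Dict[str, float]:
    """Average cost per distinct session, broken down by model."""
    totals: Dict[str, float] = defaultdict(float)
    sessions: Dict[str, Set[str]] = defaultdict(set)
    for session_id, model, cost in rows:
        totals[model] += cost
        sessions[model].add(session_id)
    return {model: totals[model] / len(sessions[model]) for model in totals}


for model, value in cost_per_session(records).items():
    print(f"{model}: ${value:.2f} per session")
```

Dividing by distinct sessions, not by request count, keeps the metric comparable between chatty and terse features.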

What features should startups build to turn token spend into "payroll-like" governance?

Box CEO Aaron Levie predicts the next wave of startups will ship token dashboards, ROI-attribution modules, and budget guardrails that feel as familiar as an HRIS dashboard.

- Dashboards that show which product features burn the most tokens.

- Automatic routing that pushes low-risk tasks to cheaper models, locking premium usage behind approval queues.

- Weekly variance digests pushed to Slack or Teams, so every manager sees their "AI head-count" cost on a regular cadence.

Why are AgenticOps budgets becoming a priority?

Enterprises are shifting token budgets out of IT and into line-of-business OpEx, with many departments allocating significant portions of their budgets for autonomous agents.

- Global AI spend is forecast to grow substantially over the coming decade, with tech budgets representing an increasing share of company revenue.

- Boards now require ROI per agent the same way they ask for head-count productivity, making governance tooling a purchase priority, not a "nice-to-have".

How can companies get started in the next 30 days without building custom software?

- Connect OpenAI/Anthropic admin APIs to Vantage or Finout within your existing cloud FinOps workspace.

- Tag each API key by team, product, and environment; create a daily budget with automatic anomaly alerts.

- Export the cost data to Snowflake or BigQuery, then layer on Tableau or Grafana for quick dashboards and tracking.

- Review the first unit-economics report in finance stand-up; adjust guardrails before the next sprint ships.