New AI Engineering Model Organizes Project Work Into Five Layers by Speed

Serge Bulaev

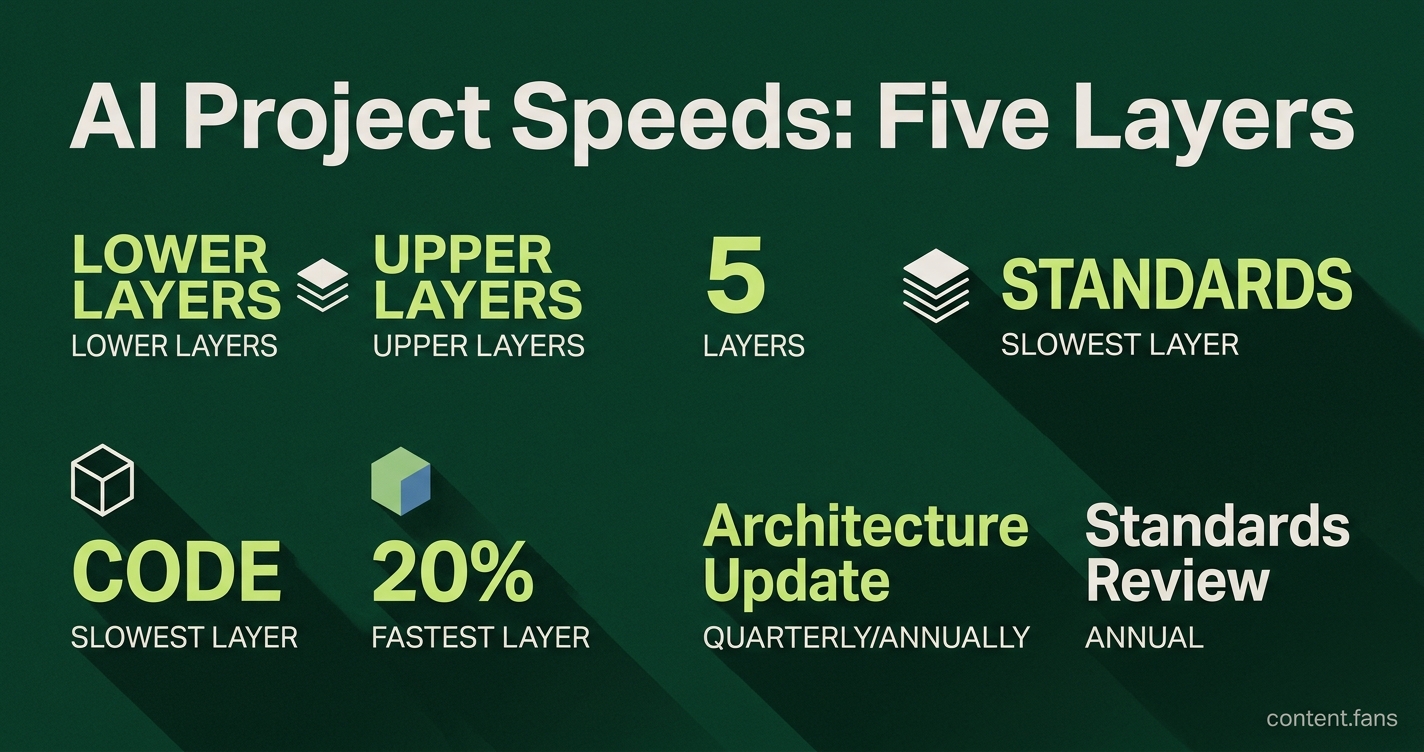

The new AI engineering model sorts project activities into five layers that change at different speeds: standards, architecture, specs, plans, and code. Matching the pace of decisions and updates to each layer may reduce friction and help teams work together. A pattern or rule is promoted to a slower layer only after it proves stable over time, and each layer has its own governance checkpoints to keep projects on track. The aim is to let fast-changing code coexist with slower-changing rules and structures, which might make long AI projects more manageable.

This new AI engineering model, called the Pace Layers of AI Engineering, addresses a chronic project management issue: mismatched decision speeds. By adapting Stewart Brand's pace layer concept, the model gives AI teams a five-tier framework for aligning governance and work cadences, with the aim of reducing churn and improving project outcomes.

Why Mismatched Project Speeds Create Friction

In many AI projects, foundational guidelines, system architecture, and daily code commits all live in one repository but evolve at vastly different rates. Ignoring these distinct rhythms causes friction, leading to constant code churn while deeper architectural problems go unaddressed (The Culture of AI Engineering). According to industry reports, effective policy automation requires stable data and governance foundations.

The model organizes project work into five distinct layers, each operating at a different speed: standards, architecture, specs, plans, and code. This layered approach allows teams to match their governance and decision-making cadence to the appropriate layer, reducing friction between fast-moving code and slow-changing foundations.

The Five Layers of AI Engineering

- Standards: Foundational norms, coding conventions, and ethical principles that change very rarely.

- Architecture: System diagrams and dependency maps that embody the standards, evolving quarterly or annually.

- Specs: Detailed technical contracts, API schemas, and acceptance criteria that are updated periodically.

- Plans: Short-term goals, task boards, and agent prompts valid for a sprint or a few weeks.

- Code: The fastest-moving layer, where daily commits and experiments occur.

The model's core principle is that lower, foundational layers move slowly, while upper layers move quickly. Promoting a pattern from a faster layer to a slower one - such as elevating a local coding practice to a global standard - is a deliberate process that requires proven stability over time.
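The ordering described above can be captured in a small data structure. The following is a minimal sketch, not part of the model itself: the `Layer` enum, its numeric values, and the helper names `is_promotion` and `next_slower` are assumptions chosen for illustration.

```python
from enum import IntEnum
from typing import Optional


class Layer(IntEnum):
    """Pace layers ordered from slowest (lowest value) to fastest."""
    STANDARDS = 0
    ARCHITECTURE = 1
    SPECS = 2
    PLANS = 3
    CODE = 4


def is_promotion(source: Layer, target: Layer) -> bool:
    """A promotion moves a pattern into a slower, more foundational layer."""
    return target < source


def next_slower(layer: Layer) -> Optional[Layer]:
    """Return the adjacent slower layer, or None if already at the slowest."""
    return Layer(layer - 1) if layer > Layer.STANDARDS else None


if __name__ == "__main__":
    print(is_promotion(Layer.CODE, Layer.STANDARDS))
    print(next_slower(Layer.CODE))
```

Using an integer-backed enum makes "slower than" a plain comparison, so tooling can reject any change that tries to skip the deliberate promotion step.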

How to Promote Patterns Through the Layers

The framework provides a clear upgrade path for successful innovations. An experiment begins as code and only "graduates" to a slower, more foundational layer after demonstrating repeated success and stability. This process can be reinforced with tooling: a linter enforces a pattern at the code level, a CI/CD policy promotes it to a 'spec,' a design template solidifies it in 'architecture,' and a company handbook cements it as a 'standard.'
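One way to picture the graduation rule is as a gate that a pattern must pass before moving one layer slower. This is a sketch under stated assumptions: the `Pattern` record, the thresholds `min_uses` and `min_stable_days`, and the choice to reset the evidence after each promotion are all hypothetical, since the model only says that a pattern must show repeated success and stability.

```python
from dataclasses import dataclass

# Layers ordered from fastest to slowest.
LAYERS = ["code", "plans", "specs", "architecture", "standards"]


@dataclass
class Pattern:
    name: str
    layer: str = "code"
    successful_uses: int = 0
    days_unchanged: int = 0


def try_promote(pattern: Pattern, min_uses: int = 5, min_stable_days: int = 90) -> bool:
    """Move the pattern one layer slower if it meets both (hypothetical) thresholds."""
    index = LAYERS.index(pattern.layer)
    if index == len(LAYERS) - 1:
        return False  # already a standard, nowhere slower to go
    if pattern.successful_uses < min_uses or pattern.days_unchanged < min_stable_days:
        return False  # not enough evidence yet
    pattern.layer = LAYERS[index + 1]
    # Assumption: evidence resets, so the pattern must prove itself again
    # before it can move to the next slower layer.
    pattern.successful_uses = 0
    pattern.days_unchanged = 0
    return True
```

A pattern with six successful uses and 120 unchanged days would move from `code` to `plans` on the first call, then be refused on the second until it accumulates fresh evidence.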

For example, in multi-model orchestration, routing policies can be set at the architecture layer to ensure stability, while code-level agents remain free to optimize prompts on a daily basis.
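The split between a stable routing policy and freely changing prompts might look like the sketch below. The task names, model names, and the `build_request` helper are hypothetical and do not refer to any real orchestration library.

```python
from types import MappingProxyType

# Architecture layer: a read-only routing table (model names are hypothetical).
ROUTING_POLICY = MappingProxyType({
    "summarize": "small-model",
    "code-review": "large-model",
})

# Code layer: prompts that agents may rewrite daily.
prompts = {
    "summarize": "Summarize the text in three bullet points.",
    "code-review": "List the bugs in this diff.",
}


def build_request(task: str, text: str) -> dict:
    """Combine the fixed route with the current prompt for a task."""
    return {"model": ROUTING_POLICY[task], "prompt": f"{prompts[task]}\n\n{text}"}
```

Wrapping the routing table in `MappingProxyType` means an attempt to reassign a route at runtime raises `TypeError`, while the `prompts` dictionary stays open to edits.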

Governance Checkpoints for Each Layer

To maintain discipline, teams can implement specific governance checkpoints for each layer:

- Standards: Conduct an annual review and measure adoption with compliance dashboards.

- Architecture: Perform quarterly threat model updates and verify against performance benchmarks.

- Specs: Manage API versions and link them to traceable test cases.

- Plans: Hold sprint retrospectives and continuously capture feedback from AI agents.

- Code: Use continuous integration and flag generative AI changes for human review.

This checklist helps teams decide at which layer a problem should be fixed. For instance, a team that classifies an observability gap as an architectural issue would build a shared telemetry library rather than scatter log statements through the code.
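The checkpoint cadences can also be written down as configuration so that overdue reviews are easy to spot. In this sketch, the annual and quarterly intervals follow the checklist above, while the intervals for specs, plans, and code, and the `overdue_layers` helper, are assumptions for illustration.

```python
from datetime import date, timedelta

# Review intervals in days. Annual (standards) and quarterly (architecture)
# come from the checklist; the other three values are assumed.
REVIEW_INTERVAL_DAYS = {
    "standards": 365,
    "architecture": 90,
    "specs": 30,
    "plans": 14,
    "code": 1,
}


def overdue_layers(last_reviewed: dict, today: date) -> list:
    """Return the layers whose last review is older than their interval."""
    return [
        layer
        for layer, interval in REVIEW_INTERVAL_DAYS.items()
        if today - last_reviewed[layer] > timedelta(days=interval)
    ]
```

Calling `overdue_layers` with every layer last reviewed 31 days ago flags only the three faster layers, which reflects the idea that slow layers should not be reviewed on a fast layer's schedule.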

Aligning with External Governance and Compliance

This layered model is not just an internal tool; it aligns with external governance frameworks from regulatory bodies and academia. For example, its slow-to-fast gradient mirrors academic models that flow from laws to standards to certification. This allows compliance officers to trace a high-level regulation, like an article in the EU AI Act, all the way down to a specific unit test in the code layer.
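The traceability described here can be sketched as a chain of links, each pointing from an item to the slower-layer item that justifies it. All identifiers below are hypothetical placeholders, not real regulation articles or file paths, and the sketch walks the chain from the test up to the regulation; the reverse lookup would invert the same mapping.

```python
# Each entry links an item to the item one layer slower that justifies it.
# All identifiers are hypothetical placeholders.
TRACES = {
    "tests/test_logging.py::test_event_is_recorded": "spec:audit-log-schema",
    "spec:audit-log-schema": "arch:shared-telemetry-library",
    "arch:shared-telemetry-library": "std:record-keeping",
    "std:record-keeping": "regulation:example-article",
}


def trace_to_root(item: str) -> list:
    """Follow the links from an item up to the regulation it supports."""
    chain = [item]
    while chain[-1] in TRACES:
        chain.append(TRACES[chain[-1]])
    return chain


if __name__ == "__main__":
    for step in trace_to_root("tests/test_logging.py::test_event_is_recorded"):
        print(step)
```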

Crucially, this approach separates revision cycles, not development stages. It enables agile, daily code pivots while ensuring foundational standards evolve with caution, maintaining long-term project coherence for teams of humans and AI agents.