Microsoft's Azure GPU Policy Shifts, Pressures OpenAI's Hardware Plans

Serge Bulaev

Microsoft appears to be making it harder for smaller customers, including OpenAI, to get high-end GPUs on its Azure cloud due to supply shortages. The company now gives priority to its top spenders and internal projects, meaning many smaller firms face long waits or quota denials. OpenAI still depends on Azure for much of its AI work, but may need to speed up building its own chips and data centers or look at other cloud options. These challenges may continue through at least late 2026, and could slow OpenAI's plans to become less reliant on Microsoft and Nvidia.

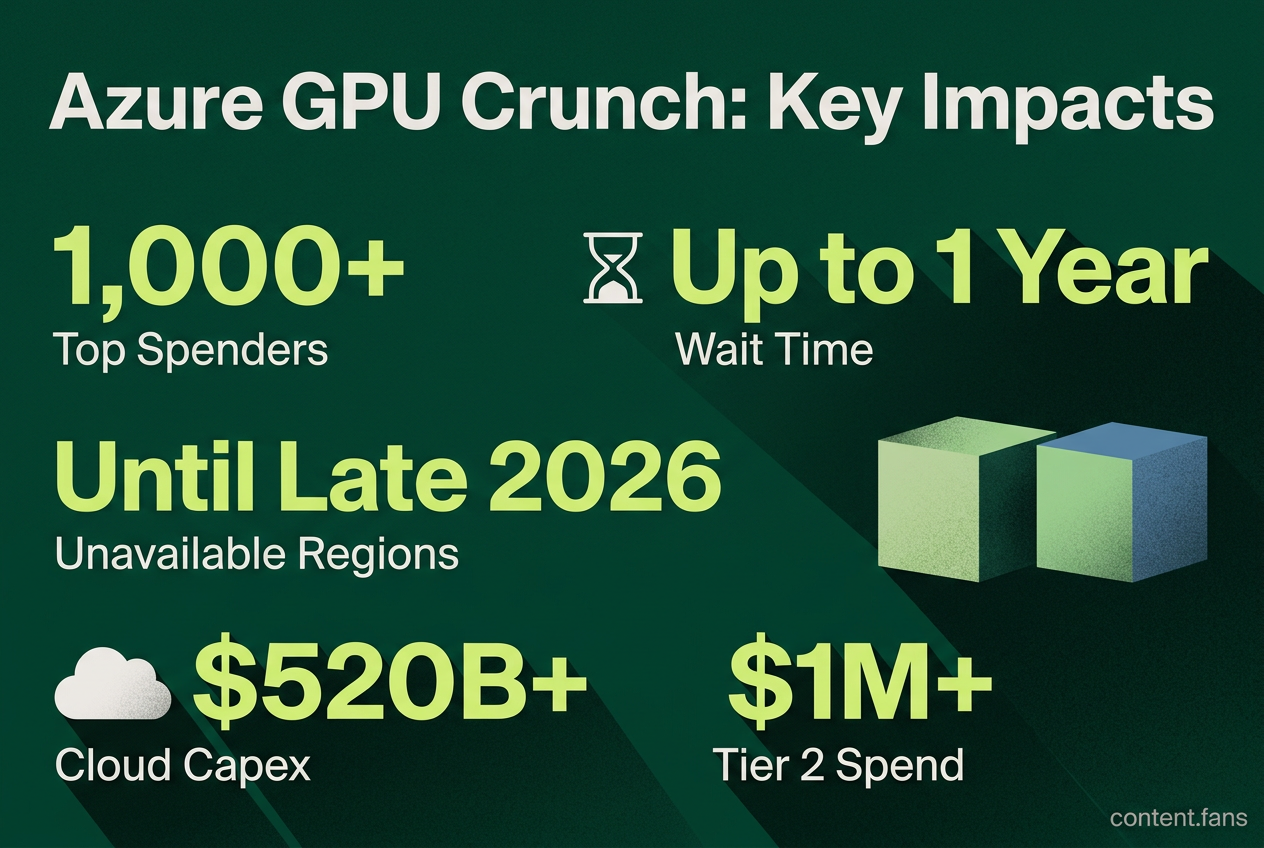

Microsoft's Azure GPU policy is tightening, shifting from a background concern to a daily operational challenge for partners like OpenAI. Amid a global GPU supply crunch, Microsoft has implemented a tiered customer system on Azure that prioritizes its top 1,000 spenders. Smaller firms now face automated quota denials and waits of up to a year, a trend highlighted in a LinkedIn post by Grep AI. This policy shift is compounded by severe capacity constraints in key data center regions like UK South, which is unavailable for new high-end GPU deployments until late 2026.

These constraints directly impact OpenAI, which relies heavily on Azure for its AI workloads as it develops custom silicon and expands its data center footprint. With Microsoft prioritizing its internal products like Copilot for the limited GPU supply, external partners must contend with an allocation queue dictated by Microsoft's strategic roadmap. Industry analysts predict combined cloud provider capital expenditures will surpass $520 billion in 2026, underscoring the immense scale of investment and competition for resources.

Azure's three-tier allocation rules

Microsoft's strict new GPU allocation rules on Azure create significant hurdles for AI firms. By prioritizing top spenders and internal projects, the policy forces smaller companies and even major partners like OpenAI to confront long hardware wait times, potential service disruptions, and strategic pivots to other cloud providers.

- Tier 1 - Commit to 1,000+ Nvidia Blackwell chips for at least one year.

- Tier 2 - Hold a US$1 million or greater Enterprise Agreement.

- Tier 3 - Smaller customers handled by partners such as CDW, often facing quota denials.

Sources report that a strict "use it or lose it" policy compounds these tiers, allowing Microsoft to revoke access to idle instances within hours, a risk that extends even to startups using program credits. Furthermore, new H100 or H200 GPU subscriptions are currently blocked in high-demand regions, including UK South, East Asia, and several U.S. hubs.

Pressure on OpenAI's diversification timetable

Azure's GPU rationing directly pressures OpenAI's hardware diversification roadmap. The company is actively pursuing a multi-vendor strategy, including Broadcom-designed ASICs, a 10-gigawatt "Stargate" data center, and evaluating alternatives like AMD Instinct and Cerebras' wafer-scale engines due to performance issues with some new Nvidia GPUs.

However, Microsoft's policies may complicate this pivot. With Azure capacity constraints expected to last until at least late 2026, OpenAI faces a strategic trilemma: secure top-tier Azure status, accelerate its independent data center construction, or migrate significant workloads to other cloud providers - each with substantial cost and timing implications.

Cloud leverage reshapes chip procurement

This dynamic reflects a broader industry trend where cloud providers, not just chipmakers, dictate hardware access. Hyperscalers like Google, AWS, and Meta are increasingly developing custom ASICs to gain cost control and supply chain stability. While Microsoft is developing its own Maia 2 chip, its heavy reliance on Nvidia gives it immense leverage. With hyperscaler spending on GPUs and servers projected in the hundreds of billions for 2026, these cloud giants can set demanding contract terms, minimum commitments, and regional availability for all customers.

A table of illustrative impacts:

| Impact area | Reported effect on AI firms |

|---|---|

| Wait times | Weeks for legacy A100s, up to a year for Blackwell class |

| Cost | KuCoin cites 32 percent hourly price increase for some startups |

| Utilization | Idle instances risk instant revocation under "use it or lose it" |

| Strategy | Shift to multi-cloud or colocation to bypass Azure queues |

Snapshot of immediate risk factors

- Long queues in constrained regions (UK South, East Asia)

- High commitment thresholds for Tier 1 access

- Revocation penalties for low utilization

- Internal Microsoft product priority over external customers

These operational details frame the current bargaining space between Microsoft and OpenAI. As long as Azure keeps strict GPU rationing, OpenAI's timeline for custom silicon and alternative suppliers remains exposed to Microsoft's internal scheduling and regional capacity decisions.

What specific allocation rules is Microsoft Azure applying that most constrain OpenAI?

Microsoft now enforces a tiered GPU caste system plus "use-it-or-lose-it" utilization watchdogs. Tier 1 customers must pre-commit to 1,000+ Blackwell chips for at least one year and spend tens of millions annually. Idle instances are tracked hour-by-hour and can be revoked even if paid for through Azure credits. OpenAI qualifies for Tier 1 today, yet every reserved chip that is not drawing power within a rolling four-hour window can be pulled back by Microsoft and reassigned to Copilot or another Tier 1 client, making it risky for OpenAI to stage future architectures it does not yet need non-stop.

How does Microsoft's control alter the timeline for OpenAI's in-house chips?

Azure's capacity outlook shows that general relief is not expected before the end of 2026. Because Microsoft also controls the physical regions where OpenAI's reserved clusters live (U.S. West and East, Japan, and Korea Central), any delay in Microsoft's data-center power or cooling build-out directly pushes back OpenAI's own custom "Titan" ASIC deployment schedule. A one-month slip in Azure's North-American builds can translate into a one-month slip for Stargate, since the new chips must first be validated inside Microsoft-controlled racks.

What options does OpenAI have to bypass Microsoft and still hit volume?

OpenAI has already diversified GPU purchasing and committed massive capital elsewhere:

- AWS EC2 UltraServers: A November 2025 agreement gives OpenAI immediate access to hundreds of thousands of GB200/GB300 GPUs on AWS, letting it run parallel training while Azure quotas stabilize.

- Cerebras wafer-scale contract: Signed January 2026, the multi-billion-dollar deal supplies alternative accelerators optimized for memory-intensive training jobs.

- AMD Instinct commitment: OpenAI has pledged to buy 6 gigawatts of AMD Instinct GPUs over the decade, equivalent to roughly $90 billion in hardware revenue.

Despite these options, OpenAI still estimates it will need roughly 10 gigawatts of custom inference silicon by 2035, and only Azure today has the global fiber mesh and PLC (private line circuits) required to stitch those clusters into one contiguous "AI factory." So bypassing Microsoft entirely would require an equal build-out of dark-fiber and regional substations, a task OpenAI now budgets at well over $100 billion.

How do startups feel downstream of this Azure-OpenAI dynamic?

Tier 3 Azure clients face a 32 % price hike ($3.70 per hour vs. $2.80 a year ago) and year-long waits, according to KuCoin's April 2026 survey. Demand exceeds supply by a factor of ten, prompting many founders to pivot to multi-cloud or colocation. This vacuum indirectly strengthens OpenAI's bargaining power: Microsoft cannot afford to push OpenAI toward AWS in the same way it can squeeze smaller startups.

What does this power shift signal for the broader AI-hardware supply chain?

The cloud provider is becoming the gatekeeper, not the chip maker. In 2026, combined hyperscaler capex is projected to exceed $520 billion, up 61 % YoY, and more than half of that will flow into custom ASICs (Trainium 3, TPU v7p, Maia 2, MTIA v3). For any AI lab that lacks a direct pipe to those ASICs or reserved racks, the window to negotiate is closing fast. OpenAI's dilemma is therefore every AI lab's dilemma, only at a scale 10-100× larger.