Governments now audit AI's 'invisible' political realities

Serge Bulaev

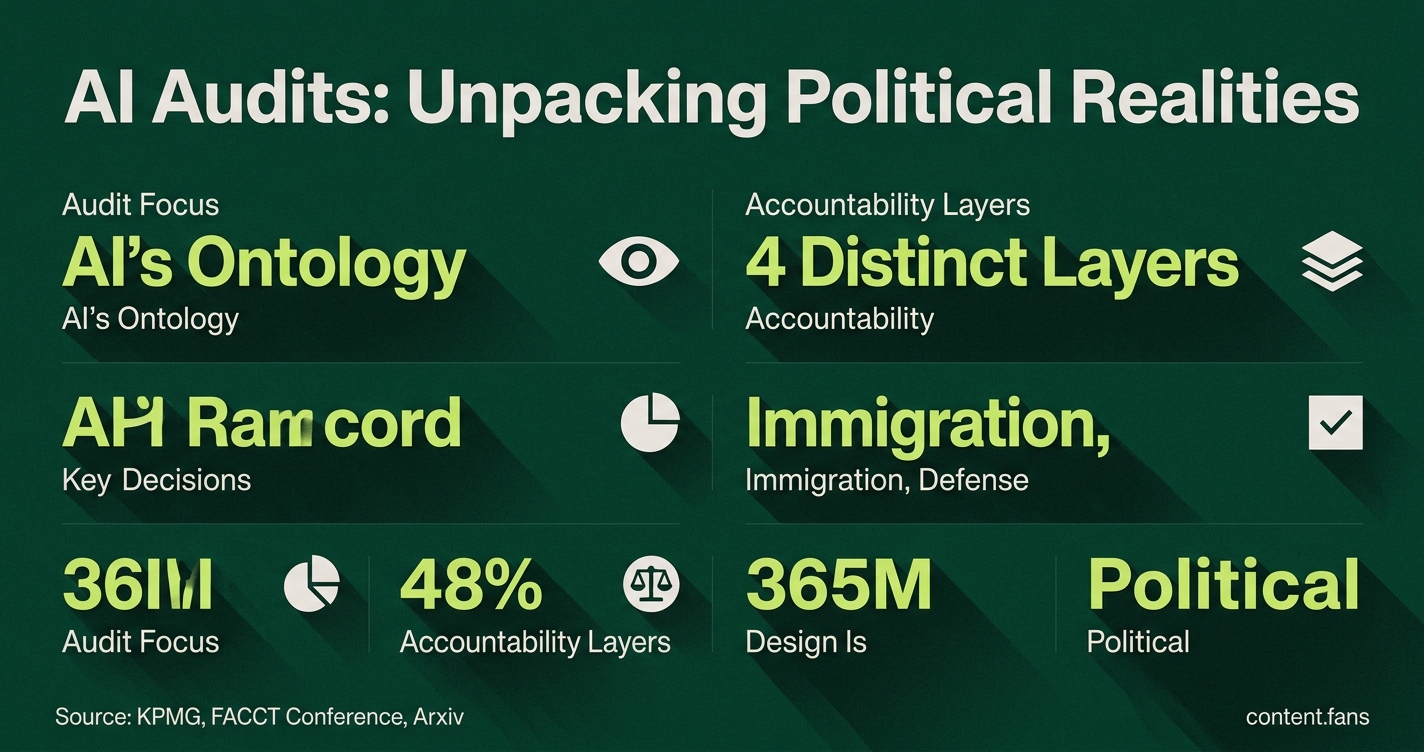

Governments have started to audit the technical decisions inside AI systems that can shape policy and society. Since 2024, agencies may examine how categories, data labels, and thresholds are chosen, because these choices can quietly influence political outcomes. Audits now often cover four layers (governance, data, performance, and monitoring), and laws such as New York City's Local Law 144 require bias audits. Studies suggest that problems remain, including missing bias metrics and weak monitoring after launch. Experts argue that ongoing audits, clear rules, and proof of fixes are needed, and that real accountability might come from careful oversight, not promises of perfect fairness.

Governments are now examining how algorithms' technical choices can steer policy and society. Oversight bodies treat design choices - like data labels and risk thresholds - as auditable political decisions, a major shift in public sector accountability.

From Theory to Practice: A New Audit Checklist

The idea that "design is political" has moved from an academic slogan to an auditor's checklist. A growing number of oversight bodies use structured examinations to trace how an AI system's core assumptions are made. According to industry reports, KPMG research exemplifies this shift by treating these foundational choices as documented items that can be tested and verified.

AI audits now focus on the system's 'ontology' - the categories, labels, and priorities it uses to interpret the world. Instead of just testing for accuracy, auditors check what the model considers important, who it includes or excludes, and how it defines concepts like 'risk' or 'match'.

The Four Layers of Algorithmic Accountability

Auditors now trace four distinct layers to ensure comprehensive oversight, moving far beyond simple performance testing:

- Governance: The stated purpose, named executive oversight, and paths for human appeal.

- Data: Quality checks, data lineage logs, and transparent model cards.

- Performance: Disparate impact testing across protected classes and their intersections.

- Monitoring: Post-launch drift checks and periodic re-certification.

Some jurisdictions, such as New York City under Local Law 144, now require independent bias audits for automated hiring tools. Academic frameworks add detailed methods for scoring each layer and emphasize intersectional analysis, which examines combined factors such as race and gender together instead of in isolation.
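To make the intersectional point concrete, here is a minimal sketch of an impact-ratio check: each group's selection rate divided by the rate of the most-selected group. The function name, the toy records, and the 0.8 flag level (the commonly cited four-fifths rule of thumb) are assumptions for illustration, not the method of any specific audit framework mentioned here.

```python
from collections import defaultdict

def impact_ratios(records, keys):
    """Return each group's selection rate divided by the highest group rate.

    `keys` lists the attributes that define a group; passing more than one
    key produces intersectional groups such as ("A", "F").
    """
    selected = defaultdict(int)
    total = defaultdict(int)
    for rec in records:
        group = tuple(rec[k] for k in keys)
        total[group] += 1
        if rec["selected"]:
            selected[group] += 1
    rates = {g: selected[g] / total[g] for g in total}
    best = max(rates.values())
    if best == 0:
        # Nobody was selected, so ratios are undefined.
        return {g: None for g in rates}
    return {g: rate / best for g, rate in rates.items()}

def make_group(race, gender, n_selected, n_total):
    """Build hypothetical applicant records for one group (toy data only)."""
    return [
        {"race": race, "gender": gender, "selected": i < n_selected}
        for i in range(n_total)
    ]

# Hypothetical data: selection rates differ only at the intersections.
records = (
    make_group("A", "M", 8, 10)
    + make_group("A", "F", 4, 10)
    + make_group("B", "M", 4, 10)
    + make_group("B", "F", 8, 10)
)

FLAG_BELOW = 0.8  # assumption: four-fifths rule of thumb

by_race = impact_ratios(records, ["race"])
by_gender = impact_ratios(records, ["gender"])
by_both = impact_ratios(records, ["race", "gender"])

flagged = sorted(g for g, r in by_both.items() if r is not None and r < FLAG_BELOW)
print("race only:", by_race)
print("gender only:", by_gender)
print("flagged intersections:", flagged)
```

In this toy data, the race-only and gender-only ratios all equal 1.0, while two intersectional groups fall to 0.5. A single-attribute audit would miss that gap.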

High-Stakes Decisions: Audits in Immigration and Defense

The real-world impact is clear in immigration technology. Research on Customs and Border Protection's Traveler Verification Service describes it as "permanent infrastructure of border governance." According to industry reports, recent rules have formalized mandatory biometric collection practices. The system's simple labels - "match," "non-match," or "exception" - directly determine who can move freely and who faces secondary inspection, embedding policy into a facial recognition algorithm.
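The way a threshold embeds policy can be shown with a small sketch. Everything here is hypothetical: the similarity scores, the two cutoffs, and the treatment of "exception" as a middle band sent to human review are assumptions for illustration, not a description of how the Traveler Verification Service works.

```python
from collections import Counter

def label(score, match_threshold, review_threshold):
    """Map a similarity score in [0, 1] to one of three labels.

    Assumption: "exception" is modeled as an uncertain middle band.
    """
    if score >= match_threshold:
        return "match"
    if score >= review_threshold:
        return "exception"
    return "non-match"

# Hypothetical similarity scores for six travelers.
scores = [0.95, 0.91, 0.88, 0.72, 0.61, 0.40]

def outcome_counts(match_threshold, review_threshold=0.60):
    """Count how many travelers receive each label under the given cutoffs."""
    return Counter(label(s, match_threshold, review_threshold) for s in scores)

strict = outcome_counts(match_threshold=0.90)
lenient = outcome_counts(match_threshold=0.85)

print("strict :", dict(strict))
print("lenient:", dict(lenient))
```

Moving the match cutoff from 0.90 to 0.85 shifts one traveler from "exception" to "match" without any change to the underlying model. This is why auditors treat the threshold itself as a documented, reviewable decision.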

Defense auditors face parallel challenges. With the Joint Warfighting Cloud Capability aggregating data for automated targeting, reviewers now routinely verify the ontology that classifies entities as "hostile" versus "unknown." Guidance from the National Telecommunications and Information Administration stresses that independent red-teaming is critical for such high-risk systems.

Common Weaknesses Exposed by New Frameworks

These rigorous audits consistently expose three critical institutional weak spots:

- Sparse lineage records make it difficult to prove data provenance.

- Intersectional bias metrics that analyze combined groups are often missing.

- Continuous monitoring plans frequently lapse after launch, leaving model drift unchecked.
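A post-launch drift check can be as simple as comparing the distribution of model scores at certification time with the distribution seen in production. The sketch below uses the Population Stability Index (PSI), one common drift statistic, swapped in here as an example. The bin proportions and the 0.2 alert level are assumptions, not values taken from any framework cited in this article.

```python
import math

def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two binned distributions.

    Both inputs are lists of bin proportions that each sum to 1.
    `eps` guards against empty bins, which would make the log undefined.
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must have the same number of bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e = max(e, eps)
        a = max(a, eps)
        total += (a - e) * math.log(a / e)
    return total

# Hypothetical share of model scores falling in four bins.
baseline = [0.25, 0.25, 0.25, 0.25]   # at certification time
current = [0.10, 0.20, 0.30, 0.40]    # in production, some months later

ALERT_LEVEL = 0.2  # assumption: illustrative alert threshold

drift = psi(baseline, current)
needs_review = drift > ALERT_LEVEL
print(f"PSI = {drift:.3f}, re-certification review needed: {needs_review}")
```

Running a check like this on a schedule, and logging the result, gives auditors evidence that monitoring continued after launch.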

The Emerging Consensus on Remedies and Oversight

While no single methodology has become a universal standard, a consensus on best practices is emerging. Experts point to algorithm registers, modeled on the system in the Netherlands, which require public disclosure of an AI's purpose, datasets, and human appeal channels. Industry reports also recommend mandatory filings for systems impacting benefits, taxes, or sanctions.
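As a rough illustration of what a register entry might capture, the sketch below models the three disclosures named above (purpose, datasets, and a human appeal channel) and reports which ones are missing. The class, field names, and example system are hypothetical and do not reflect the actual schema of the Dutch register.

```python
from dataclasses import dataclass, field

@dataclass
class RegisterEntry:
    """Hypothetical public register entry for one AI system."""
    system_name: str
    purpose: str = ""
    datasets: list[str] = field(default_factory=list)
    appeal_channel: str = ""

    def missing_disclosures(self) -> list[str]:
        """Return the names of required disclosures that are still empty."""
        missing = []
        if not self.purpose.strip():
            missing.append("purpose")
        if not self.datasets:
            missing.append("datasets")
        if not self.appeal_channel.strip():
            missing.append("appeal_channel")
        return missing

# Hypothetical entry that omits the appeal channel.
entry = RegisterEntry(
    system_name="benefit-eligibility-screener",
    purpose="Prioritize benefit applications for manual review",
    datasets=["applications-2023"],
)

gaps = entry.missing_disclosures()
print("missing disclosures:", gaps)
```

A filing pipeline could reject or flag any entry whose list of gaps is not empty, turning the disclosure requirement into a checkable rule.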

Ultimately, regulators are converging on key requirements: documented risk assessments, ongoing audits, and clear evidence that agencies fix identified problems. The trajectory suggests a maturing field in which real accountability might come from careful oversight, not promises of perfect fairness. Design choices once hidden in code are becoming line items in public records.