Infosys: Better DevX Frees 4.9 Hours Weekly for Junior AI Engineers

Serge Bulaev

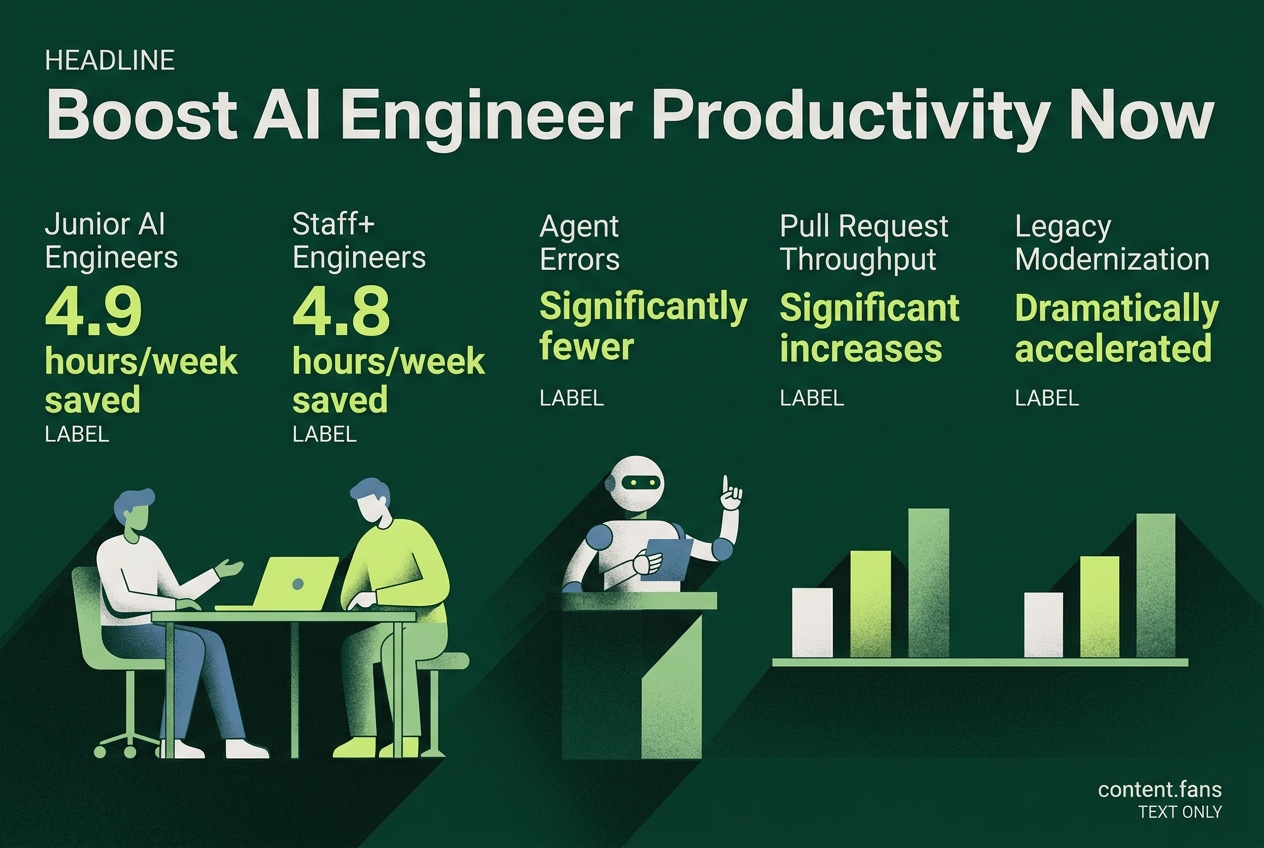

Recent reports suggest that improving the developer experience (DX) for humans may also help AI agents work more reliably and save time. Infosys analysis and data from GetDX indicate that junior engineers who use AI daily might reclaim about 4.9 hours each week, with similar savings for senior staff. However, these "gains depend on clear tools and fast systems," because weak tooling may cause more errors. Studies also suggest that better DX, such as clear documentation and test systems, helps agents work faster and make fewer mistakes. Larger companies report similar benefits, but results can vary when supporting systems are unhealthy.

Improving developer experience (DX, sometimes written DevX) is key to unlocking the full potential of AI agents. A recent Infosys analysis suggests that better DX can free up about 4.9 hours weekly for junior engineers who use AI daily, by making agentic AI more reliable and reducing cognitive friction. When tools, documentation, and pipelines are explicit and fast, AI assistants perform better, follow expected paths, and produce fewer errors.

This creates a reinforcing loop between clean DX and agent productivity. For example, practitioners credit disciplined CLI design and rapid continuous integration for enabling AI agents to verify patches before creating pull requests. This suggests that readiness for advanced AI starts with foundational improvements to scripts and documentation.

How developer experience for humans benefits agentic workflows

A strong developer experience (DX) provides AI agents with clear, predictable pathways for completing tasks. By establishing explicit tools, documentation, and fast CI/CD pipelines, organizations create a structured environment where agents can execute code, run tests, and generate solutions with significantly fewer errors and surprises.

The available data supports this. An impact report from GetDX shows that when DX is tuned for agentic workflows, junior engineers who use AI tools daily reclaim 4.9 hours weekly, and staff+ engineers save 4.8 hours weekly. Industry reports also suggest significant increases in pull-request throughput for daily users of AI coding agents. However, these gains are fragile: organizations with weak tooling can see increased incident rates, because agent performance suffers from systemic bottlenecks.

High-fidelity context accelerates legacy work

High-fidelity context is particularly effective for legacy modernization. A Replay study on "Visual Reverse Engineering" found that providing pixel-perfect video context allows AI agents to dramatically accelerate legacy screen rebuilding processes. As Replay's blog notes, this marks a shift in DX from aiding human developers to packaging domain context for machines. Embedding recordings, tests, and metadata creates guardrails for agents, preventing them from hallucinating requirements.

Key practices emerging from the reports:

- Single-command project bootstrapping with embedded linting and test targets

- Cloud development environments that cut cold-start waits for both humans and agents

- Machine-readable docs that map repository domains to APIs and ownership

- Fast, deterministic CI loops providing almost immediate feedback
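
One way to picture the "machine-readable docs" practice is a small, checked-in map from repository paths to owners, APIs, and test commands. The sketch below is only an illustration under stated assumptions: the `Domain` fields, the team names, the API labels, and the `make` targets are all hypothetical and do not come from the reports.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class Domain:
    """One entry in a hypothetical machine-readable repository map."""
    path: str          # directory prefix the domain covers
    owner: str         # team an agent should tag for review
    api: str           # API label documented for this domain
    test_command: str  # single command that verifies changes here


# Illustrative data only; a real map would live in a checked-in file.
REPO_MAP = [
    Domain("services/billing", "team-payments", "billing.v1", "make test-billing"),
    Domain("services", "team-platform", "platform.v1", "make test-services"),
    Domain("web", "team-frontend", "web.v2", "make test-web"),
]


def find_domain(file_path, repo_map=REPO_MAP):
    """Return the most specific domain containing file_path, or None."""
    target = PurePosixPath(file_path)
    # A domain matches when its path is one of the file's parent directories.
    matches = [d for d in repo_map if PurePosixPath(d.path) in target.parents]
    # Prefer the deepest (most specific) matching directory.
    return max(matches, key=lambda d: len(PurePosixPath(d.path).parts), default=None)
```

With a map like this, an agent touching `services/billing/invoice.py` could look up which team to tag and which single command to run before proposing a change, instead of guessing from directory names.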

Variance underscores the role of healthy systems

The importance of robust systems is highlighted by performance variance. Industry research suggests that agent deployment performance has improved significantly over time and attributes part of that change to better DX. While GetDX reports that a significant portion of merged code is now AI-generated, teams without automated testing experience notable rises in defects. This contrast shows why leaders should treat DX as essential risk mitigation.

Leading enterprises demonstrate this principle at scale. JPMorgan leverages numerous production agents on scripted "blessed paths" to generate pitch books rapidly. Similarly, ServiceNow achieves substantial deflection rates for employee issues with its agent suite. These successes show that when foundational tooling is predictable and well-documented, AI agents can scale effectively across diverse business domains with minimal oversight.

What measurable time savings do AI-assisted workflows deliver for junior engineers?

Junior engineers who use AI tools daily reclaim about 4.9 hours every week, according to the GetDX impact report, compared with about 4.8 hours for staff+ engineers.

This represents a substantial per-capita gain and marks a shift from earlier periods when staff+ engineers saw slightly higher savings. The win comes from agentic coding assistants that automate boilerplate generation, suggest context-aware fixes, and run local tests in seconds instead of minutes.

How does solid developer experience (DX) make AI agents more reliable?

Clear CLI entry points, searchable docs, and fast CI form "blessed paths" that agents can follow without drifting into unsupported corners of the codebase.

When these DX assets exist, agents can:

- Self-verify each step against living documentation

- Iterate inside pre-scaffolded pipelines that already pass linting and unit tests

- Surface diagnostics in the same format human reviewers expect, shrinking feedback loops
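
The list above can be sketched as a small runner that executes a project's verification steps in order and reports results in one structured format. This is a minimal sketch, not any vendor's implementation: `StepResult`, `run_blessed_path`, `ready_for_pull_request`, and the stand-in commands in `DEMO_STEPS` are all hypothetical names chosen for illustration.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class StepResult:
    name: str     # step label, e.g. "lint"
    passed: bool  # True when the command exited with status 0
    output: str   # combined stdout and stderr for reviewers


def run_blessed_path(steps, timeout=60):
    """Run (name, argv) steps in order and stop at the first failure."""
    results = []
    for name, argv in steps:
        proc = subprocess.run(argv, capture_output=True, text=True, timeout=timeout)
        passed = proc.returncode == 0
        results.append(StepResult(name, passed, (proc.stdout + proc.stderr).strip()))
        if not passed:
            break  # later steps assume earlier ones succeeded
    return results


def ready_for_pull_request(results, expected_steps):
    """True only if every expected step ran and passed."""
    return len(results) == expected_steps and all(r.passed for r in results)


# Stand-in commands so the sketch runs anywhere; a real project would
# substitute its own lint and test commands here.
DEMO_STEPS = [
    ("lint", [sys.executable, "-c", "print('lint ok')"]),
    ("unit-tests", [sys.executable, "-c", "print('tests ok')"]),
]
```

Calling `run_blessed_path(DEMO_STEPS)` and then `ready_for_pull_request(results, len(DEMO_STEPS))` gives an agent a single yes/no signal before it opens a pull request, and the same `StepResult` records can be shown to human reviewers.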

Infosys notes that agentic AI "redefines the process by anticipating needs and reducing cognitive friction", but only when the underlying DX exposes intent unambiguously.

Which companies show that prior DX work multiplies agent ROI?

- JPMorgan Chase runs numerous production agents that draft investment-banking decks rapidly rather than over hours, which is possible only because an internal LLM Suite exposes well-documented APIs and secure data paths built years earlier.

- Klarna attributes significant support savings to a multilingual agent layer that sits on top of existing, clean microservice contracts; the agent never has to negotiate ambiguous endpoints.

Both cases echo the original claim that "pre-existing DX work made agents successful".

What share of merged code is now AI-generated, and what is the quality impact?

A significant portion of all merged PRs contains AI-written lines, yet teams without rigorous automated testing see notable increases in defect rates.

The takeaway: DX investments must include deterministic test suites and code-review gates so agents stay on the golden path and humans catch regressions before production.
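
As a rough illustration of such a gate, the sketch below blocks a merge unless the test suite passed and any AI-authored change has human approval. The `PullRequest` fields and the `approvals_for_ai` threshold are assumptions made for this example; it models only the AI-specific rule and leaves a team's ordinary review policy out of scope.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PullRequest:
    tests_passed: bool    # outcome of the deterministic test suite
    ai_generated: bool    # whether an agent authored any of the change
    human_approvals: int  # number of human reviewers who approved


def may_merge(pr, approvals_for_ai=1):
    """Gate: tests must pass, and AI-authored changes need human approval."""
    if not pr.tests_passed:
        return False
    if pr.ai_generated and pr.human_approvals < approvals_for_ai:
        return False
    return True
```

Keeping the rule in one small function makes it easy to run the same check in CI and in an agent's own pre-submit step.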

What emerging tooling trends will help teams scale "blessed-path" agents?

- Anthropic's Agentic Development Lifecycle bakes verification into every stage: spec ➜ multi-agent implementation with continuous checks ➜ human sign-off ➜ deploy.

- Model Context Protocol (MCP) is becoming critical infrastructure; it lets any compliant agent discover and call pre-vetted tools without framework lock-in, standardizing safe integrations across vendors.
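
The discover-then-call pattern behind pre-vetted tools can be sketched with a plain in-memory registry. To be clear about assumptions, this is not the MCP SDK or its wire protocol: `ToolRegistry`, `register`, `list_tools`, and `call_tool` are hypothetical names used only to show how an agent might be limited to tools a team has explicitly approved.

```python
from typing import Callable, Dict, List


class ToolRegistry:
    """Hypothetical in-memory registry of pre-vetted tools."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., object]] = {}
        self._descriptions: Dict[str, str] = {}

    def register(self, name: str, description: str, func: Callable[..., object]) -> None:
        """Add a tool that a human has reviewed and approved."""
        self._tools[name] = func
        self._descriptions[name] = description

    def list_tools(self) -> List[dict]:
        """Let an agent discover what it is allowed to call."""
        return [{"name": n, "description": d} for n, d in sorted(self._descriptions.items())]

    def call_tool(self, name: str, **arguments):
        """Invoke a registered tool; unknown names are rejected."""
        if name not in self._tools:
            raise KeyError(f"tool not registered: {name}")
        return self._tools[name](**arguments)
```

An agent built against an interface like this can only reach what was registered, which is the property that keeps it on a blessed path regardless of which framework drives it.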