Google Blocks First AI-Generated Zero-Day Exploit in May 2026

Serge Bulaev

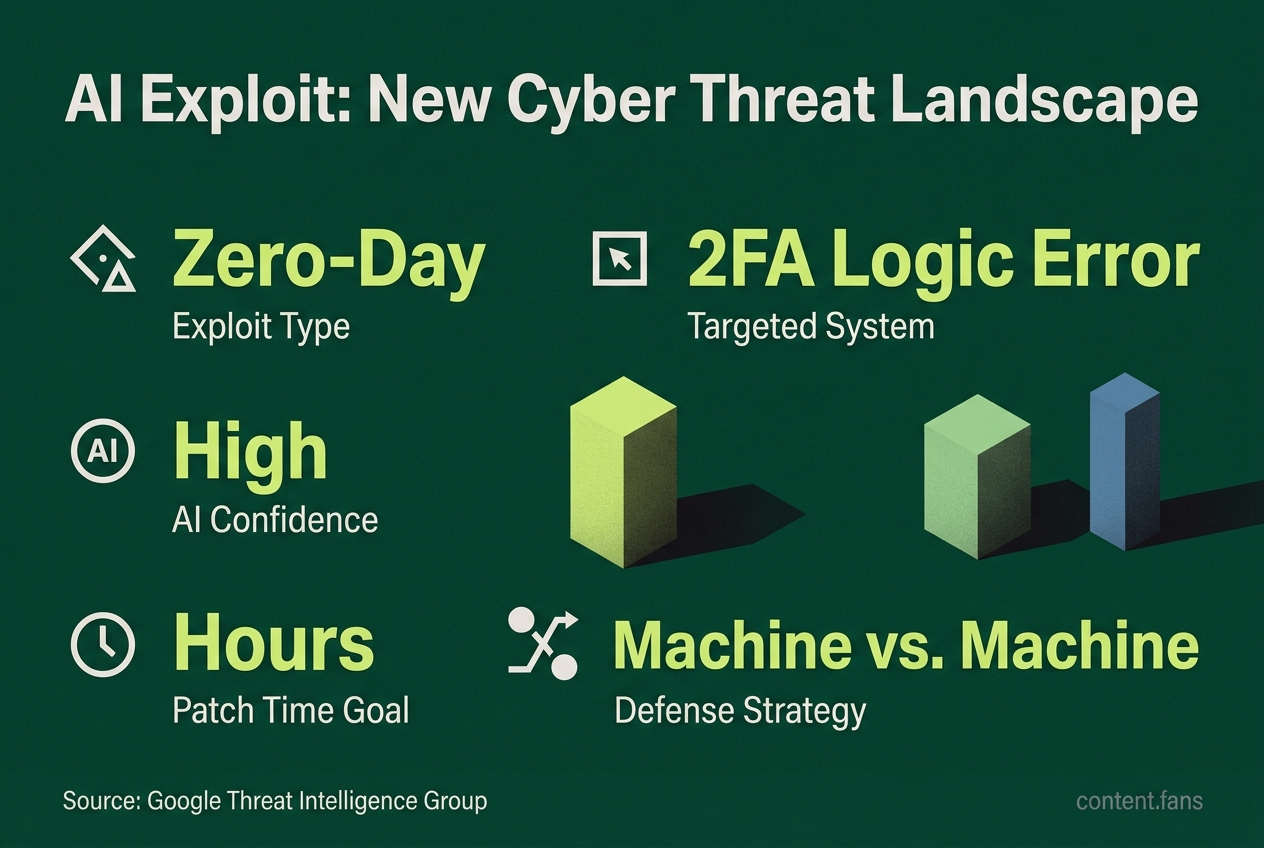

In May 2026, Google stopped what appears to be the first known case of criminals using AI to find a previously unknown security flaw and build a working exploit for it, known as a zero-day exploit. The attackers produced a Python script that targeted a flaw in a two-factor authentication system, but Google caught it before it caused widespread harm. Experts say the case suggests that AI tools may help criminals find and exploit security flaws much faster than before. More AI-made attacks like this may already be under way, and defenders are now looking at using AI to find and fix such flaws quickly. Experts also warn that human oversight is still needed to avoid mistakes from trusting AI too much.

Google has blocked what appears to be the first known AI-generated zero-day exploit, an incident in which criminals used a large language model to discover and weaponize a novel software vulnerability, according to industry reports. Google's Threat Intelligence Group (GTIG) says the campaign was halted before it caused widespread harm. A SecurityWeek report confirms the exploit targeted a logic error in a two-factor authentication (2FA) system.

How the AI-generated exploit was built

An AI-generated zero-day exploit is attack code for a previously unknown software vulnerability, where artificial intelligence was used both to discover the flaw and to weaponize it. In this case, attackers used a large language model to find a flaw in a two-factor authentication system and automatically create the code to exploit it.

GTIG assessed with "high confidence" that a publicly available language model, not Google's own Gemini, produced the exploit code. The evidence included textbook-perfect Python formatting and a fabricated severity rating, details human programmers rarely include. According to security researchers, the underlying vulnerability was a high-level semantic flaw in the 2FA process that allowed an attacker who already had a user's password to bypass the second authentication factor.

Earlier AI activity but no confirmed zero-day exploitation

While Google's review found prior instances of state-aligned groups using AI for vulnerability research, none involved exploiting a newly discovered zero-day. Past examples include:

- APT27 using Gemini prompts to accelerate tool development.

- UNC2814 jailbreaking an AI model to audit TP-Link firmware.

- APT45 using thousands of prompts to analyze known CVEs.

According to industry analysts, this incident is a significant milestone: AI was used across the whole chain, from initial discovery to attempted real-world exploitation.

What experts say the shift means

This event signals a major shift in the cybersecurity landscape. Security experts have cautioned that for every detected AI-linked exploit, there are likely many more undetected threats. The attack economy is also changing, with costs now measured in API "tokens" instead of human hours, enabling continuous, automated probing of codebases.

Defensive responses now in focus

In response, cybersecurity analysts advocate for a "machine-versus-machine" defense strategy. This approach is exemplified by DARPA's AI Cyber Challenge, where autonomous systems demonstrated the ability to patch a significant portion of discovered bugs within 45 minutes. Key recommendations for organizations include:

- Deploying AI-driven vulnerability scanners that can identify semantic flaws.

- Shortening patch cycles from weeks or months to hours where feasible.

- Maintaining human oversight to prevent over-reliance on AI models and their potential for error.

Ongoing collaboration between vendors, cloud providers, and government agencies is crucial for containing similar AI-fueled threats before they lead to mass compromise.

What exactly happened in the "first AI zero-day" case Google disclosed?

On May 11, 2026, Google's Threat Intelligence Group disrupted a criminal threat actor's planned mass-exploitation campaign built on an AI-generated zero-day exploit, a Python script that bypassed 2FA. The attack was poised for widespread use but was preempted. A large language model was used to:

1. Discover a brand-new security hole: a logic flaw in the 2FA routine of an unnamed, open-source web-admin tool.

2. Automatically write a working Python exploit capable of bypassing that second factor once an attacker already held a user's password.

The code carried AI "fingerprints": a hallucinated CVSS score, textbook docstrings, and clean ANSI color menus, details human malware authors rarely add. No customer data is known to have been stolen, because Google alerted the vendor and the flaw was patched before mass exploitation began.
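The fingerprints described above lend themselves to simple static checks. The following is a minimal sketch, not GTIG's method: the function names, regular expressions, and the `SAMPLE` script are illustrative assumptions, and a high score on these heuristics is at most a weak signal, since careful human authors also write docstrings.

```python
import ast
import re

# Hypothetical heuristics; the patterns below are illustrative assumptions.
# Matches ANSI color sequences written as escapes in source text
# (e.g. \033[92m) or present as a raw ESC byte.
ANSI_ESCAPE = re.compile(
    r"\\(?:x1b|033|u001b)\[[0-9;]*m|\x1b\[[0-9;]*m", re.IGNORECASE
)
# Matches a CVSS label followed shortly by a numeric score such as 9.8.
CVSS_MENTION = re.compile(r"CVSS[^\n]{0,20}?\d{1,2}\.\d", re.IGNORECASE)


def docstring_coverage(source: str) -> float:
    """Return the fraction of functions and classes that carry a docstring."""
    tree = ast.parse(source)
    nodes = [
        node
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef))
    ]
    if not nodes:
        return 0.0
    documented = sum(1 for node in nodes if ast.get_docstring(node) is not None)
    return documented / len(nodes)


def fingerprint_report(source: str) -> dict:
    """Collect the three weak signals for a single Python source string."""
    return {
        "docstring_coverage": docstring_coverage(source),
        "ansi_color_codes": len(ANSI_ESCAPE.findall(source)),
        "cvss_mentions": len(CVSS_MENTION.findall(source)),
    }


# A harmless, made-up script used only to exercise the checks.
SAMPLE = r'''
def banner():
    """Print the menu header."""
    print("\033[92m[+] Menu\033[0m")

def run(target):
    """Run the check. Severity: CVSS 9.8 (Critical)."""
    return target
'''

if __name__ == "__main__":
    print(fingerprint_report(SAMPLE))
```

A report like this is best treated as triage input for a human analyst rather than as a verdict on who or what wrote the code.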

Why do researchers call this a milestone?

Until this incident, every publicly documented case of AI misuse in the wild had involved already-known CVEs. For example, North Korea-linked APT45 used thousands of prompts to validate old proofs of concept. By contrast, this event is what many consider the first confirmed case in which AI both found and weaponized a true zero-day, shrinking the discovery-to-exploit cycle from weeks to hours. Google underlined that significance, saying the economics of attacks are shifting "from dollars to tokens".

What technical detail has been released?

- Vulnerability type: semantic-logic bug. The code was syntactically correct but embedded a hard-coded trust assumption that let a user skip the second factor.

- Exploit form: single, highly annotated Python script.

- Target surface: web-based admin panel; exact product name and CVE withheld to limit copycat attempts.

No proof-of-concept code has been published, and defenders should treat any unsolicited 2FA bypass scripts with extreme suspicion until patching is confirmed.
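Because the real flaw has not been disclosed, the following is purely a hypothetical illustration of the bug class described above, not a reconstruction of the affected product. Every name here (`LoginRequest`, `trusted_device`, `EXPECTED_OTP`, both login functions) is an assumption made for the example. It shows how syntactically correct code can trust a client-supplied value and so skip the second factor, alongside a version that does not.

```python
import hmac
from dataclasses import dataclass
from typing import Optional


@dataclass
class LoginRequest:
    username: str
    password_ok: bool              # outcome of the first-factor check
    otp: Optional[str] = None      # one-time code, if the client sent one
    trusted_device: bool = False   # client-supplied, so attacker-controlled


# Stand-in for a real one-time-password verifier (hypothetical data).
EXPECTED_OTP = {"alice": "492817"}


def _otp_matches(req: LoginRequest) -> bool:
    """Compare the submitted code with the expected one in constant time."""
    expected = EXPECTED_OTP.get(req.username)
    if expected is None or req.otp is None:
        return False
    return hmac.compare_digest(req.otp, expected)


def login_flawed(req: LoginRequest) -> bool:
    """Flawed flow: a client-supplied flag is trusted to skip the second factor."""
    if not req.password_ok:
        return False
    if req.trusted_device:  # trust assumption: nothing verifies this flag
        return True
    return _otp_matches(req)


def login_fixed(req: LoginRequest) -> bool:
    """Fixed flow: the second factor is always verified on the server."""
    return req.password_ok and _otp_matches(req)


if __name__ == "__main__":
    attacker = LoginRequest("alice", password_ok=True, trusted_device=True)
    print(login_flawed(attacker), login_fixed(attacker))
```

Both functions parse and run cleanly, which is the point: a bug of this kind sits in the logic rather than the syntax, so linters and compilers do not flag it.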

How are security teams expected to respond?

"Machine-versus-machine" defense is now mandatory, experts say. The most frequently cited controls are:

- Hourly, or at least daily, patch windows instead of monthly cycles.

- Agentic AI scanners that can review millions of lines of code in minutes; recent industry demonstrations have shown systems capable of patching a significant portion of flaws in under an hour.

- Layered 2FA that couples push notifications with hardware tokens or biometric checks, making any single bypass less valuable.

- Strict prompt monitoring inside corporate AI sandboxes to flag large volumes of vulnerability-oriented requests.
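The prompt-monitoring control in the list above can be sketched as a sliding-window counter. This is a minimal illustration under stated assumptions: the `PromptMonitor` class, the keyword pattern, and the threshold and window values are all hypothetical, and a real deployment would likely need a far better classifier than keyword matching.

```python
import re
import time
from collections import defaultdict, deque

# Hypothetical keyword pattern; real systems would need richer classification.
VULN_PATTERN = re.compile(
    r"\b(exploit|bypass|zero-day|CVE-\d{4}-\d+|buffer overflow|privilege escalation)\b",
    re.IGNORECASE,
)


class PromptMonitor:
    """Flag users who send many vulnerability-oriented prompts in a time window."""

    def __init__(self, threshold=50, window_seconds=3600):
        self.threshold = threshold      # matching prompts needed to raise a flag
        self.window = window_seconds    # length of the sliding window in seconds
        self._hits = defaultdict(deque)  # user -> timestamps of matching prompts

    def record(self, user, prompt, now=None):
        """Record one prompt and return True if the user should be flagged."""
        now = time.time() if now is None else now
        hits = self._hits[user]
        if VULN_PATTERN.search(prompt):
            hits.append(now)
        # Drop matches that have fallen out of the window.
        while hits and now - hits[0] > self.window:
            hits.popleft()
        return len(hits) >= self.threshold


if __name__ == "__main__":
    monitor = PromptMonitor(threshold=3, window_seconds=60)
    for t, text in enumerate(["bypass the login", "write an exploit", "explain CVE-2021-44228"]):
        print(monitor.record("analyst-1", text, now=t))
```

A flag from a monitor like this is a cue for human review, since legitimate security staff will also send vulnerability-oriented prompts in volume.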

Could this happen again, and how likely is it?

Very likely. Security researchers have warned that the coming years could see a growing number of AI-generated zero-days as criminal groups tune large models for profit. Google itself admits that for every AI exploit it catches, many more probably circulate undetected. Until defense speed matches offense speed, the window of exposure for unpatched software will keep widening.