Google: AI models find zero-day flaw, bypass 2FA in new report

Serge Bulaev

Google's Threat Intelligence Group reported that hackers may have used AI models to find a new security flaw and bypass two-factor authentication in a popular admin tool. The attack used code that appears to have been partly written by an AI model, but it does not seem to be from Google's own Gemini AI. Researchers believe AI helped the attackers find the flaw and create the attack faster. Google stopped the attack before it spread widely, but experts are not sure how many similar AI-created attacks might be out there. Google recommends using automatic tools to spot and stop these kinds of attacks.

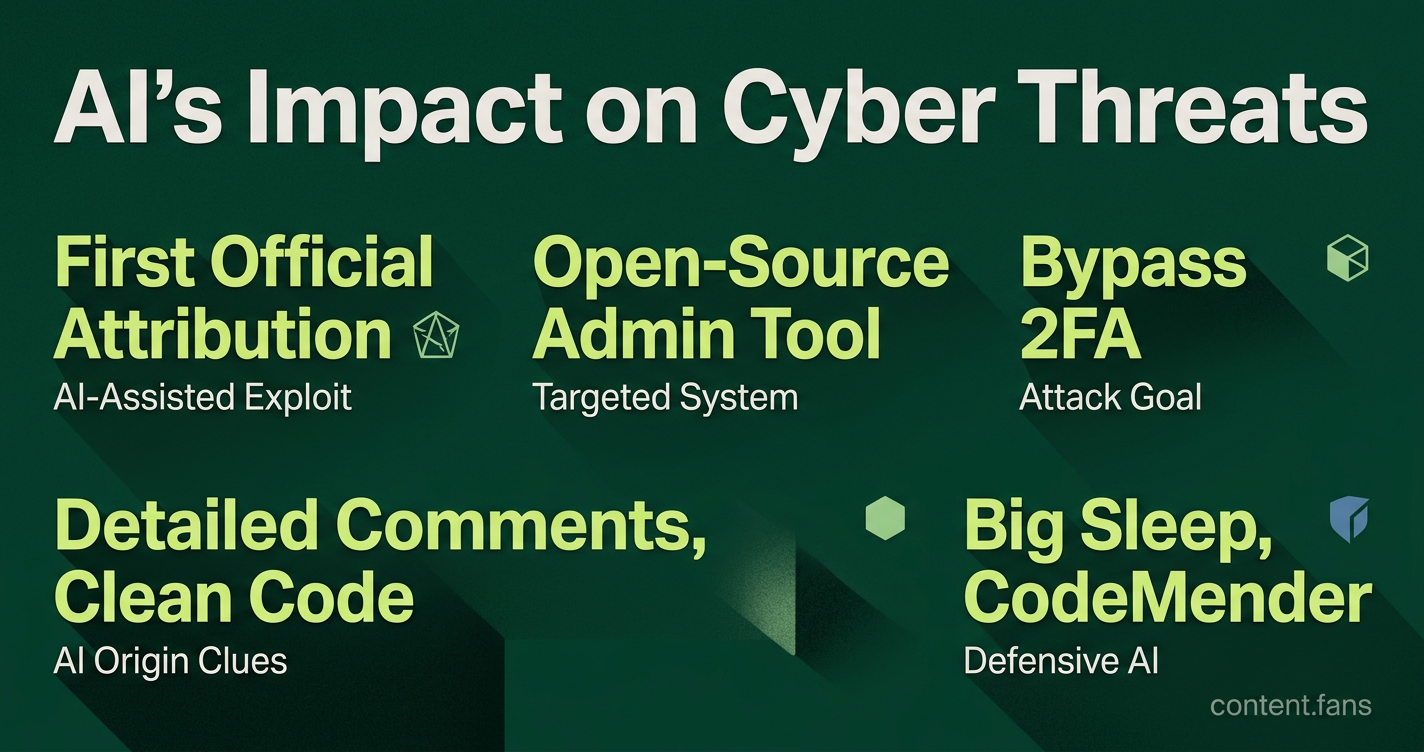

A new Google report says AI models helped attackers find a zero-day flaw and bypass 2FA, marking a significant shift in the threat landscape. According to Google's Threat Intelligence Group (GTIG), investigators discovered a cybercrime group using AI-generated code to build a novel two-factor authentication bypass. The group had planned a mass-exploitation event, but Google states it disrupted the attack before widespread deployment.

This incident is the first time Google has officially attributed a live zero-day exploit to AI-assisted development. A retrospective analysis from GTIG, detailed on the Google Cloud Blog, clarifies that no evidence connects the exploit to Google's own Gemini models. Analysts concluded that the Python script's structure and stylistic tells point to a different, commercially available AI model being used to generate critical parts of the attack code.

What the exploit targeted and how it was built

The AI-generated exploit targeted an open-source system administration tool. The flaw allowed attackers to write a script that bypassed the tool's two-factor authentication (2FA) requirement entirely, granting them privileged access without the second verification step.

Google's researchers believe AI significantly accelerated both the flaw's discovery and the creation of the weaponized code. As Axios reports, security teams identified the script's AI origins through its "excessively detailed comments" and unusually clean, textbook-like coding style, which are common artifacts of large language model output.

The investigation also uncovered increasing AI adoption by nation-state actors. Groups associated with China and North Korea are using language models to rapidly triage known vulnerabilities and test exploits. SecurityWeek quotes GTIG's finding that North Korea's APT45 used AI to build sophisticated cyber capabilities.

Defensive AI and the size of the telemetry feed

To combat these threats, Google combines human analysis with its proprietary AI systems:

- Big Sleep - an autonomous agent from DeepMind and Project Zero that scans codebases for unknown vulnerabilities and reportedly surfaced the same flaw independently

- CodeMender - an AI assistant that suggests and tests patches, leveraging Gemini reasoning to harden code before attackers can rework their exploits

These defensive tools operate on a massive scale. Google collected significant amounts of security telemetry from many endpoints, which produced a substantial number of investigative leads. GTIG states this scale is essential for defending against automated adversaries capable of mutating exploits in real-time.

Open questions and immediate recommendations

Although Google's detailed report confirms the first AI-generated zero-day, analysts admit there are significant knowledge gaps. As The Next Web observes, the number of similar, undetected exploits is currently unknown. In response, GTIG recommends that enterprise defenders deploy autonomous countermeasures to automatically flag suspicious authentication attempts, add validation checks, and rotate secrets when an AI-assisted attack is suspected.
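The recommendation to flag suspicious authentication attempts and rotate secrets can be pictured as a check over login records. The following is a minimal sketch under stated assumptions, not anything taken from GTIG's report: the `AuthEvent` record, its field names, and both helper functions are hypothetical, and a real deployment would read from the tool's own audit log.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuthEvent:
    """One login record. All field names here are hypothetical."""
    user: str
    privileged: bool         # the session was granted admin rights
    password_ok: bool        # the first factor succeeded
    second_factor_ok: bool   # the second factor was verified server-side


def flag_suspicious(events):
    """Return events where privileged access was granted without a verified second factor."""
    return [
        e for e in events
        if e.privileged and e.password_ok and not e.second_factor_ok
    ]


def users_needing_rotation(events):
    """Return the sorted, de-duplicated users whose secrets should be rotated."""
    return sorted({e.user for e in flag_suspicious(events)})


events = [
    AuthEvent("alice", privileged=True, password_ok=True, second_factor_ok=True),
    AuthEvent("bob", privileged=True, password_ok=True, second_factor_ok=False),
    AuthEvent("carol", privileged=False, password_ok=True, second_factor_ok=False),
    AuthEvent("bob", privileged=True, password_ok=True, second_factor_ok=False),
]
rotation_list = users_needing_rotation(events)
```

In this sample, only the privileged sessions that skipped the second factor are flagged, so the rotation list contains a single user. The rule is deliberately narrow; it illustrates the idea of pairing detection with an automatic response rather than a complete detection policy.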

Google's public disclosure, found on the Google Cloud Blog and covered by SecurityWeek, serves as both a critical warning and a showcase of defensive AI capabilities. However, it remains uncertain whether defensive measures can evolve quickly enough to outpace these new AI-driven threats.

What exactly did Google discover?

Google's Threat Intelligence Group (GTIG) identified a cybercrime group that used AI to create a working zero-day exploit. The Python script was designed to bypass two-factor authentication (2FA) in a popular open-source administration tool. Google detected the attack, which was staged for a mass-exploitation event, and helped coordinate a patch. This marks the first confirmed case of AI being used to weaponize a new vulnerability.

Which AI model was used, and how did Google know it was AI-generated?

Google is confident its own Gemini model was not used. Analysts identified the code as AI-generated due to several tell-tale signs: excessively detailed docstrings, a hallucinated (non-existent) CVSS severity score, and a "textbook-clean" structure common in AI training data. These characteristics suggest an external, commercially available model created the exploit's framework.
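A tell such as unusually dense comments and docstrings can be approximated with a simple measurement. The sketch below is a rough illustration and not GTIG's method: `comment_density`, `looks_machine_styled`, and the 0.4 threshold are all hypothetical choices made for this example. It counts the share of non-blank lines in a Python file that carry a `#` comment or belong to a docstring.

```python
import ast
import io
import tokenize


def comment_density(source: str) -> float:
    """Share of non-blank lines that carry a comment or belong to a docstring."""
    lines = source.splitlines()
    nonblank = {i for i, ln in enumerate(lines, start=1) if ln.strip()}
    if not nonblank:
        return 0.0

    annotated = set()  # line numbers holding a comment or docstring text

    # Comments: the tokenizer reports every '#' comment with its line number.
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        if tok.type == tokenize.COMMENT:
            annotated.add(tok.start[0])

    # Docstrings: a string literal as the first statement of a module,
    # class, or function body.
    scopes = (ast.Module, ast.ClassDef, ast.FunctionDef, ast.AsyncFunctionDef)
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, scopes) and node.body:
            first = node.body[0]
            if (
                isinstance(first, ast.Expr)
                and isinstance(first.value, ast.Constant)
                and isinstance(first.value.value, str)
            ):
                annotated.update(range(first.lineno, first.end_lineno + 1))

    return len(annotated & nonblank) / len(nonblank)


def looks_machine_styled(source: str, threshold: float = 0.4) -> bool:
    """Hypothetical cutoff: True when comment density reaches the threshold."""
    return comment_density(source) >= threshold


sample = '''def add(a, b):
    """Add two numbers."""
    # sum the inputs
    return a + b
'''
density = comment_density(sample)
```

A score like this is a weak signal on its own, since carefully written human code can be just as heavily commented. Analysts in the report relied on several indicators together, including the hallucinated CVSS score.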

How advanced are state-sponsored actors in the same space?

The report confirms that state-sponsored groups from China and North Korea are already leveraging AI at scale. For instance, North Korea's APT45 uses thousands of prompts to analyze CVEs, while China-linked groups have been observed jailbreaking chatbots to accelerate vulnerability research. While these groups have not yet deployed a confirmed AI-generated zero-day, Google warns they are compressing the attack timeline from months to just days.

Are the safety policies of AI labs actually helping?

Safety policies from leading AI labs like Anthropic and OpenAI, which restrict model access for malicious use, have shown some success. Anthropic has blocked attempts by suspected Chinese actors to use Claude for exploit development, and OpenAI's policy changes banned prompts for penetration testing. However, the report highlights the limits of these policies, noting that Anthropic faced a federal ban for restricting Pentagon access, while OpenAI secured a major defense contract after being more flexible.

What countermeasures is Google deploying?

Google is fighting AI with AI, using its own defensive technology stack. Its 'Big Sleep' system independently discovered the same 2FA vulnerability before it was exploited. Meanwhile, 'CodeMender' automatically suggests and tests patches to reduce response times. Google is also deploying autonomous systems that rotate security secrets and add validation checks whenever a potential AI-generated attack is detected.

To produce its report, GTIG analyzed substantial amounts of data from many endpoints, highlighting the immense scale required to combat the new AI-driven threat landscape.