EY withdraws study after GPTZero finds AI hallucinations, fake citations

Serge Bulaev

Ernst & Young (EY) withdrew a study about loyalty rewards after reviewers, including the AI-detection tool GPTZero, found fake citations and possibly fabricated data. Some claims in the report, such as the size of the loyalty-points market and fraud rates, could not be traced to real sources or appeared inconsistent. EY said it is investigating how this happened and stressed its commitment to using AI responsibly. Experts warn that errors like these can spread false information, especially when they come from trusted firms. The incident suggests that companies are starting to add more checks to AI-generated work, such as human review and source tracking.

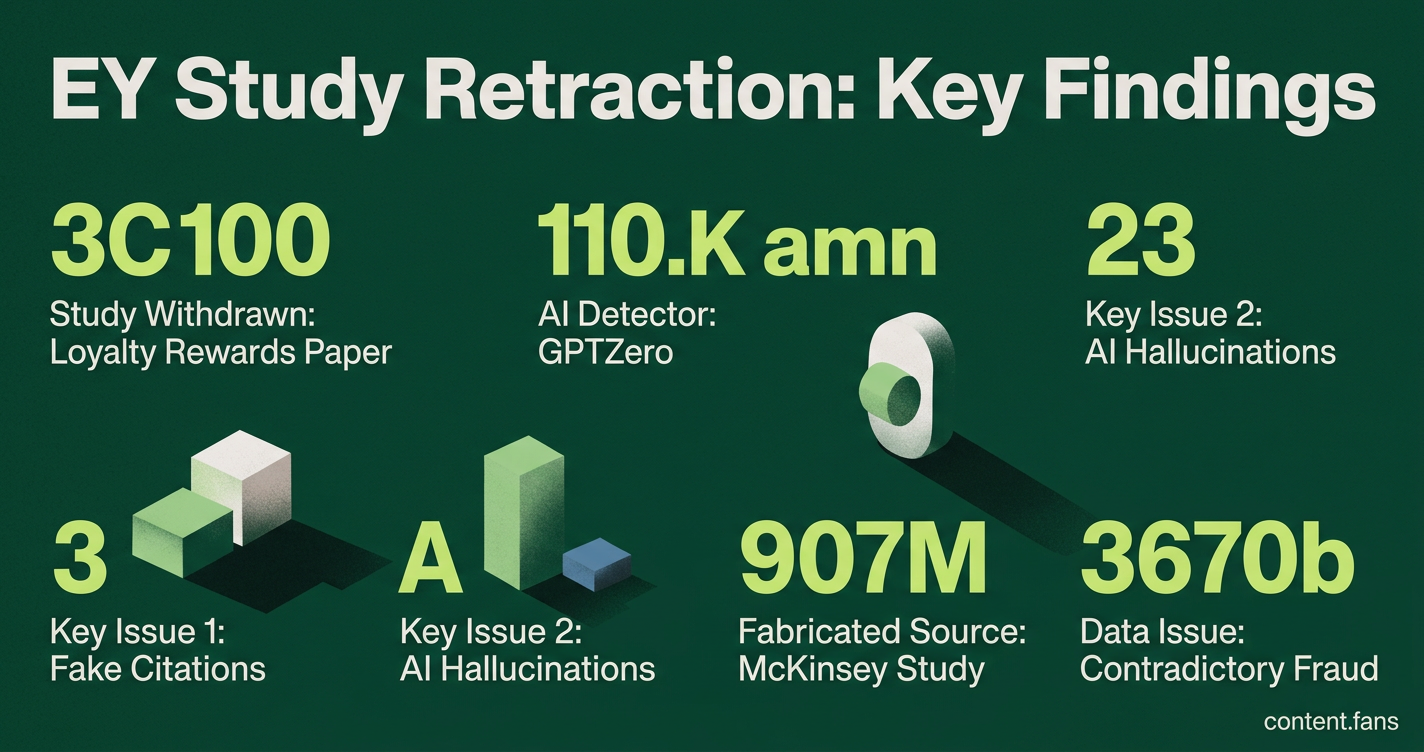

Ernst & Young (EY) has withdrawn a major study after the AI-detection tool GPTZero found that it contained AI hallucinations and fake citations. The loyalty rewards paper, "Points of Attack: Uncovering Cyber Threats and Fraud in Loyalty Systems," was retracted after an external review flagged fabricated data and non-existent sources, a finding that startled consultants who rely on such research.

The review was led by AI-detection startup GPTZero. In a public report, the company described the EY document as "stuffed with fake citations and inaccurate claims," highlighting the risk that such errors could poison both human and AI-powered research databases.

What Reviewers Found

The review found fabricated citations, including a reference to a non-existent McKinsey study, as well as broken web links. It also flagged contradictory fraud-rate figures and market-size claims that could not be verified, prompting EY to withdraw the report over concerns about AI-generated misinformation.

Key discrepancies flagged by the review include:

- Unverifiable Market Data: A core claim about the value of the global loyalty-points market, and about the large share of those points said to go unused, could not be traced to any public source.

- Contradictory Fraud Statistics: The report claimed that loyalty fraud attacks had increased substantially in recent years, but different sections gave different timeframes and percentages for the increase.

- Fabricated and Broken Sources: A cited McKinsey report was found to be non-existent, and several web links led to error pages, a finding corroborated by the Financial Times.

EY's Official Response

In a statement, an EY spokesperson confirmed the firm "removed the report" and is actively "reviewing the circumstances that led to this article's publication." While reaffirming its commitment to responsible AI use, EY has not provided a timeline for the investigation's conclusion, potential republication, or any disciplinary actions.

Broader Implications for the Consulting Industry

This incident highlights the risks of relying on unverified AI-generated data in professional services. Industry analysts warn that hallucinated statistics can compromise client decisions, because reports from "Big Four" firms are frequently used in high-stakes investment memos and competitive analyses. An error in such a source document can quickly spread into every analysis that relies on it.

The event also underscores the emerging role of third-party AI verification tools such as GPTZero. Their findings were enough to prompt a major global firm to retract a published study, signaling a shift toward more rigorous external validation.

Emerging Best Practices for AI Governance

The EY retraction is likely to accelerate the adoption of robust AI governance frameworks. According to industry reports, leading firms are implementing the following safeguards to prevent similar incidents:

- Mandatory Human Oversight: Requiring human review for all AI-assisted content before publication.

- Rigorous Source Verification: Manually checking all citations and data sources for authenticity and relevance.

- Transparent Audit Trails: Logging AI prompts, model versions, and human edits to ensure accountability.

- Data Escalation Protocols: Establishing clear procedures for handling statistics that lack a verifiable primary source.

- Heightened Scrutiny: Applying special review standards for high-stakes content related to finance, law, and healthcare.

These controls demonstrate a move toward integrating quality assurance directly into AI workflows rather than prohibiting the technology.
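To make the practices listed above more concrete, here is a minimal Python sketch of a pre-publication gate that combines an audit-trail record with human sign-off and source checks. It is purely illustrative and is not based on any EY, GPTZero, or industry tool: the `AuditRecord` and `Citation` classes and the `publication_blockers` and `to_log_line` functions are hypothetical names, and the sketch assumes that a person marks each citation as verified only after checking the source by hand.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class Citation:
    # Hypothetical: one claim in the draft and the source it is attributed to.
    # `verified` should be set only after a person has opened the source
    # and confirmed that it exists and supports the claim.
    claim: str
    source: str
    verified: bool = False


@dataclass
class AuditRecord:
    # Hypothetical audit-trail entry linking a draft to the AI run behind it.
    draft_id: str
    prompt: str
    model_version: str
    human_reviewer: Optional[str] = None
    citations: List[Citation] = field(default_factory=list)
    high_stakes: bool = False  # e.g. finance, law, or healthcare content
    second_reviewer: Optional[str] = None
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def publication_blockers(record: AuditRecord) -> List[str]:
    """Return the reasons a draft is not ready to publish (empty list = clear)."""
    blockers = []
    # Mandatory human oversight: someone must have signed off on the draft.
    if not record.human_reviewer:
        blockers.append("no human review recorded")
    # Source verification and escalation: any claim without a checked
    # source is flagged for escalation instead of being published.
    for c in record.citations:
        if not c.verified:
            blockers.append(f"escalate: unverified source for claim {c.claim!r}")
    # Heightened scrutiny: high-stakes content needs a second reviewer.
    if record.high_stakes and not record.second_reviewer:
        blockers.append("high-stakes content needs a second reviewer")
    return blockers


def to_log_line(record: AuditRecord) -> str:
    """Serialize the record (prompt, model version, reviewers) as one JSON line."""
    return json.dumps(asdict(record), sort_keys=True)
```

In this sketch, a draft is publishable only when `publication_blockers` returns an empty list, and each `to_log_line` result would be appended to a write-once log so that prompts, model versions, and reviewer sign-offs can be audited later. The code only records and enforces the checks; the verification itself still depends on people doing it.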