Anthropic Urges Chip Export Controls to Maintain US AI Lead by 2028

Serge Bulaev

Anthropic's policy paper suggests the U.S. should tighten export controls on advanced chips to help keep its lead in artificial intelligence by 2028. The company warns that foreign actors may be using fake accounts to copy U.S. AI models, which weakens current chip export rules. U.S. officials appear to be responding with stricter export rules and new laws that might require more checks on where chips go. Anthropic also recommends more ways to block large-scale copying of AI models and better tracking of exported chips. Experts say the exact rules and their effects are still being discussed and may change.

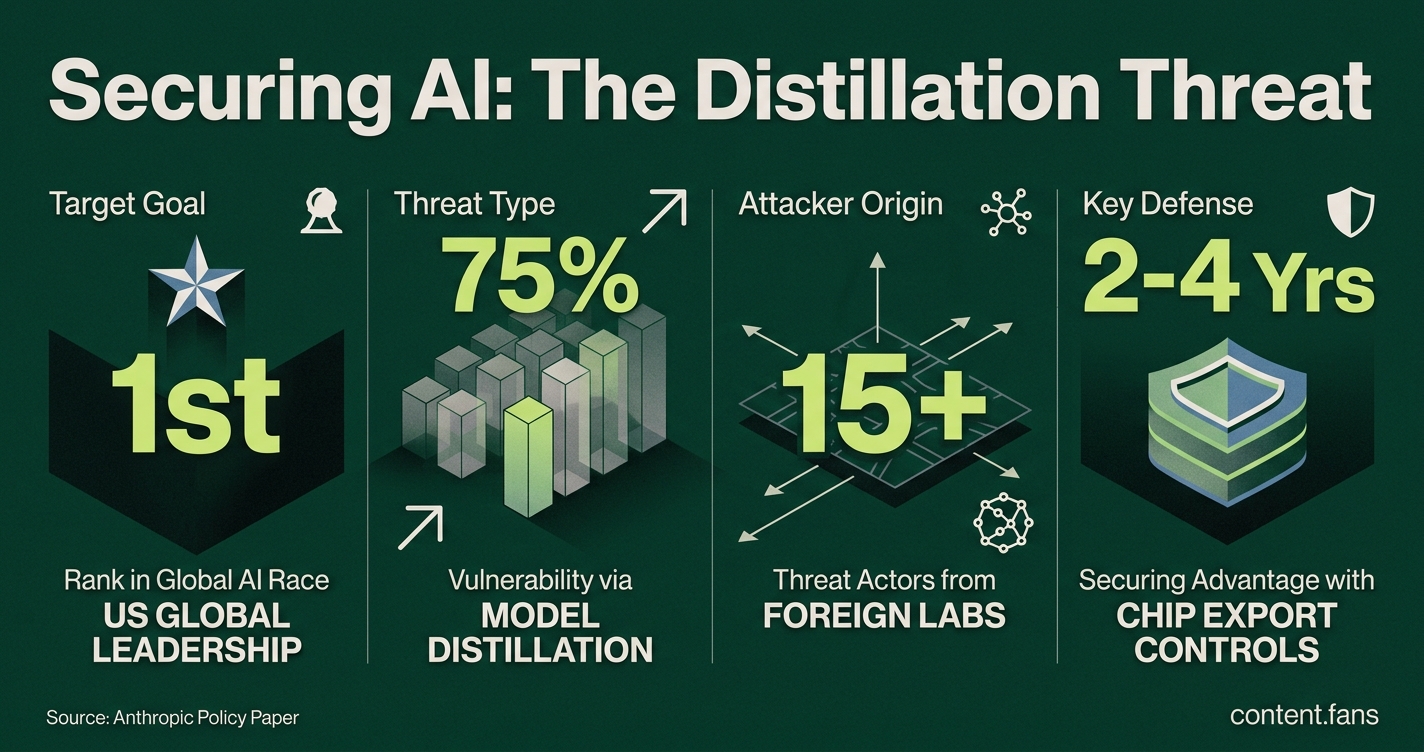

In a recent policy paper, AI firm Anthropic urges stricter chip export controls to secure America's lead in artificial intelligence through 2028. The company warns that existing restrictions are being undermined by illicit "model distillation," where foreign entities use advanced chips for industrial-scale theft of U.S. AI capabilities through millions of automated queries.

The Threat of Model Distillation

Anthropic's concerns are based on evidence of large-scale "distillation attacks." Reuters reports the company tracked significant interactions with its Claude AI from numerous fraudulent accounts linked to Chinese labs DeepSeek, Moonshot AI, and MiniMax. In a public post, Anthropic states these attacks, which aim to recreate a model's abilities, "depend in significant part on access to advanced chips," reinforcing the case for tighter export controls (Anthropic).

Anthropic advocates for stronger chip export controls because it says it has detected adversaries using advanced hardware to copy its AI models' capabilities on a massive scale. This process, known as "distillation," allows foreign actors to replicate U.S. technology, undermining both economic competitiveness and national security.

Government Reaction Balances Security and Market Access

The U.S. government appears to be aligning with this perspective, balancing national security with market access. According to industry reports, Washington has devoted significant resources to blocking Beijing's access to frontier AI technology. The Commerce Department has formalized stricter licensing criteria for high-end AI chips while permitting conditional sales to partners who provide security assurances. Draft rules described by Reuters could require foreign buyers of large chip quantities to offer government-to-government assurances or invest in U.S. data infrastructure.

Congress is also taking action. According to industry reports, the Chip Security Act has advanced through committee and would mandate on-site audits and ping-based location verification for exported chips to ensure lifecycle monitoring.

Technical Focus: Distillation Attacks

Anthropic distinguishes between legitimate internal use of distillation for compressing models and the risk of external actors harvesting proprietary outputs. The company reports that these attack campaigns are becoming "increasingly sophisticated and intense," sometimes using tailored prompts to bypass safety filters. According to industry reports, White House guidance underscores the national security concern, calling for enhanced intelligence sharing with private labs and for exploring ways to hold foreign actors engaged in this practice accountable.

Anthropic's recommended interventions include:

- Stricter controls on advanced chip exports, particularly to China.

- Robust identity and usage verification to block mass scraping of model outputs.

- Additional enforcement funding for the Commerce Department and intelligence agencies.

What the 2028 Clock Means

Anthropic frames 2028 as a critical deadline, presenting two possible futures: one where the U.S. cements its AI lead with stronger controls, and another where adversaries close the gap by copying American innovations in a fraction of the time and at a fraction of the cost. Experts caution that this is advocacy, not a prediction. Even so, the paper has fueled a policy trend toward a multi-pronged strategy of hardware licensing, software access limits, and real-time oversight. The final shape of these regulations is unsettled, but the distillation threat is now a central consideration in U.S. tech policy.

What is Anthropic's 2028 deadline about?

Anthropic says the U.S. and its allies have a narrow one-to-two-year window, ending around 2028, to lock in a lead over China in frontier AI. The firm argues that tighter chip export controls and blocking large-scale model copying ("distillation attacks") are the fastest levers left to widen the gap before China closes it.

How do "distillation attacks" threaten U.S. AI leadership?

Distillation trains a separate, often smaller model on another model's answers so that it reproduces similar capabilities. Anthropic tracked significant Claude interactions from numerous fraudulent accounts it links to DeepSeek, Moonshot AI, and MiniMax, claiming the three Chinese labs harvested outputs to recreate similar capabilities at a fraction of the cost. Because distilled copies may lack the original model's safety filters, the practice is framed as both an economic and a national-security risk.

Why does Anthropic want even stricter chip controls?

Anthropic says distillation campaigns still "depend in significant part on access to advanced chips." Its logic: limiting high-end GPU access not only slows direct training of rival models but also shrinks the scale at which foreign actors can copy U.S. systems, making controls doubly effective.

What new government actions are already underway?

- The Commerce Department has codified tiered licensing rules for AI chips and is weighing government-to-government assurances plus local investment requirements for large shipments.

- The House Foreign Affairs Committee has advanced the Chip Security Act, which would require post-export audits and ping-based location tracking of controlled processors.

- According to industry reports, the White House has issued guidance ordering agencies to share threat data with AI firms and explore penalties for foreign distillers.

Could tighter rules hurt U.S. cloud business abroad?

Possibly. Mayer Brown notes the new framework "will reverberate across the AI ecosystem, affecting sales to allies and partners," and Reuters reports some countries may have to invest in U.S. data centers or give security pledges just to receive chips. Anthropic counters that without controls the technology edge disappears faster, so short-term revenue risk is preferable to long-term strategic loss.