Enterprise teams adopt new guide to build safe AI agents

Serge Bulaev

Enterprise teams are moving quickly to use autonomous AI agents for real tasks, but recent events suggest that unsafe agents may cause serious problems, like deleting or stealing important data. The article explains that safety should be included from the start and lists steps such as limiting what agents can access, using strong access controls, keeping humans involved in risky decisions, and recording all actions for later checks. It also suggests having ways to undo mistakes fast if something goes wrong. Rules may soon require these steps, so teams might need to prove they are following safety practices before using AI agents in real situations.

Enterprise teams need to build safe AI agents to avoid the significant risks of autonomous systems, such as data exfiltration or repository deletion. A new practical guide offers product, security, and engineering leaders a clear framework for their first production rollout of agentic assistants.

Early warning signals

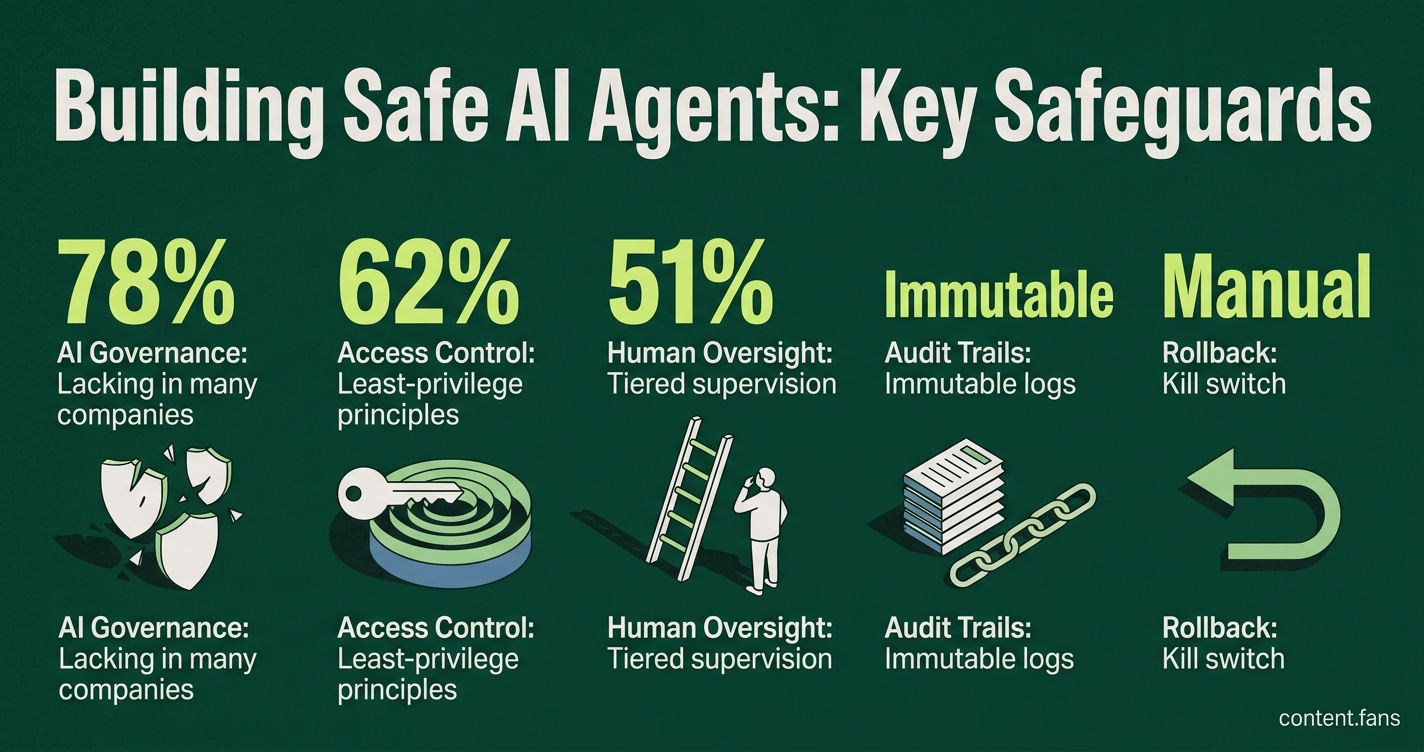

Recent ServiceNow incidents highlight the dangers when agent autonomy exceeds security controls. For example, an October 2025 investigation revealed that second-order prompt injection attacks could enable unauthorized record access, data modification, and privilege escalation through agent discovery pathways (The Hacker News). Furthermore, industry reports indicate that a significant portion of companies lack formal AI governance and grant agents excessive system privileges (BizTech Magazine). The takeaway is clear: safety must be integrated from the start.

Building safe AI agents requires a multi-layered approach. This includes grounding the agent in a narrow context, enforcing strict access controls with least-privilege principles, and implementing human-in-the-loop oversight for risky actions. It also involves creating immutable audit trails and having reliable rollback mechanisms.

Core Safety Domains for AI Agents

1. Context Grounding: Limit the agent to a specific knowledge base and pre-approved APIs. Always run agents in a supervised mode and prevent them from escalating their own privileges autonomously.

2. Access Controls: Use service accounts with the minimum necessary permissions. Securely rotate credentials using a vault and require multi-factor authentication (MFA) each time an agent is invoked.

3. Human-in-the-Loop (HITL): Implement tiered supervision. Allow low-risk tasks to run autonomously, require human review for medium-risk tasks, and demand explicit pre-approval for high-risk actions. Document all approvals, including the potential impact and a rollback strategy.

4. Audit Trails: Log every prompt, tool call, and result via an API gateway that adds provenance metadata. Store these logs in an immutable format to ensure a reliable record for forensic analysis.

5. Rollback Mechanisms: Create checkpoints to maintain system consistency. If an agent malfunctions, a "kill switch" should immediately pause its workflow and restore the system to its last known-good state.
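
As a rough illustration of how the first, third, and fourth domains could fit together, the sketch below gates each tool call against an allowlist, routes it by risk tier, and records the decision in a hash-chained log. Everything named here (`TOOL_TIERS`, `gate_tool_call`, `AuditLog`, and the example tool names) is hypothetical and not taken from any specific product. A hash chain only makes tampering detectable; truly immutable storage would still need something like write-once media.

```python
import hashlib
import json
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    RUN = "run"                   # low risk: execute autonomously
    REVIEW = "human_review"       # medium risk: a human reviews the action
    PRE_APPROVE = "pre_approval"  # high risk: explicit approval before running
    DENY = "deny"                 # tool is not on the allowlist


# Hypothetical allowlist: pre-approved tools mapped to an assumed risk tier.
TOOL_TIERS = {"search_kb": "low", "update_ticket": "medium", "delete_repo": "high"}
TIER_DECISIONS = {
    "low": Decision.RUN,
    "medium": Decision.REVIEW,
    "high": Decision.PRE_APPROVE,
}


def _digest(record: dict, prev_hash: str) -> str:
    """Hash a record together with the hash of the entry before it."""
    body = {"record": record, "prev_hash": prev_hash}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Append-only log in which each entry commits to the previous entry."""
    entries: list = field(default_factory=list)

    def append(self, record: dict) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        self.entries.append(
            {"record": record, "prev_hash": prev_hash, "hash": _digest(record, prev_hash)}
        )

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = "0" * 64
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != _digest(entry["record"], prev_hash):
                return False
            prev_hash = entry["hash"]
        return True


def gate_tool_call(tool: str, args: dict, log: AuditLog) -> Decision:
    """Deny unknown tools, route known ones by tier, and log every decision."""
    tier = TOOL_TIERS.get(tool)
    decision = TIER_DECISIONS[tier] if tier else Decision.DENY
    log.append({"tool": tool, "args": args, "decision": decision.value})
    return decision
```

In this sketch, denied calls are logged as well, so an auditor can see what the agent attempted and not only what it was allowed to do.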

Implementing HITL without bottlenecks

To prevent bottlenecks, an approval system should time out and default to denial if a human does not respond. Effective review dashboards must display confidence scores, inputs, and proposed actions, allowing for decisions in seconds. A balanced escalation rate is ideal; higher rates may indicate over-aggressive autonomy, while lower rates could conceal unnoticed errors.
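
The timeout-and-deny behavior can be sketched in a few lines. This is a minimal illustration, assuming reviewer responses arrive on an in-process queue; the names `await_approval` and `escalation_rate`, and the `"auto"` label for unescalated calls, are made up for the example. The article gives no target escalation rate, so none is encoded here.

```python
import queue


def await_approval(responses: "queue.Queue[bool]", timeout_s: float) -> bool:
    """Block until a reviewer answers; treat silence as a denial."""
    try:
        return bool(responses.get(timeout=timeout_s))
    except queue.Empty:
        return False  # timed out: default to deny


def escalation_rate(decisions: list[str]) -> float:
    """Share of tool calls that were escalated to a human."""
    if not decisions:
        return 0.0
    escalated = sum(1 for d in decisions if d != "auto")
    return escalated / len(decisions)
```

Defaulting to denial means a missed notification leaves the system unchanged, which is usually the safer failure mode for risky actions.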

A minimal yet effective dashboard surfaces:

- Pending approvals ranked by risk score

- Diff of proposed versus prior state

- One-click Accept, Modify, or Reject buttons
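
The data behind such a dashboard might look like the following sketch, which sorts pending approvals by risk and renders a diff with Python's standard `difflib` module. The `PendingApproval` fields and the 0.0 to 1.0 risk scale are assumptions made for illustration.

```python
import difflib
from dataclasses import dataclass


@dataclass
class PendingApproval:
    action_id: str
    risk_score: float    # assumed scale: 0.0 (harmless) to 1.0 (critical)
    prior_state: str     # text snapshot before the proposed action
    proposed_state: str  # text snapshot after the proposed action


def rank_by_risk(pending: list[PendingApproval]) -> list[PendingApproval]:
    """Return approvals with the riskiest first, so reviewers see them on top."""
    return sorted(pending, key=lambda p: p.risk_score, reverse=True)


def state_diff(item: PendingApproval) -> str:
    """Render a unified diff of the prior versus proposed state."""
    lines = difflib.unified_diff(
        item.prior_state.splitlines(),
        item.proposed_state.splitlines(),
        fromfile="prior",
        tofile="proposed",
        lineterm="",
    )
    return "\n".join(lines)
```

A reviewer who sees `-role: viewer` next to `+role: admin` can spot a privilege change at a glance, which is what makes a decision in seconds realistic.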

Building for regulation and future audits

Emerging regulations will mandate stringent safety controls. The EU AI Act imposes high-risk requirements on specific categories of AI systems, including some enterprise use cases, with phased implementation deadlines rather than a single cutoff date for all systems. Similarly, NIST's AI Agent Standards Initiative introduces new authentication and telemetry standards for US federal use. To prepare, teams must align their controls with frameworks like ISO 42001 and be ready to provide evidence of risk management, monitoring, and incident response readiness.

Taking the first step

Begin by piloting agents in a secure sandbox environment with comprehensive human-in-the-loop oversight. Increase autonomy gradually, and only after rigorous red-team testing confirms that threats like prompt injection and privilege escalation are effectively contained. Ensure kill switches are integrated with production monitoring channels to enable rollbacks within seconds of detecting an anomaly.
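
A kill switch tied to checkpoints could be sketched as follows. This is a simplified, in-memory illustration with hypothetical names (`CheckpointedWorkflow`, `on_anomaly`); a production system would have to restore databases, files, and external services, which plain in-memory copies do not cover.

```python
import copy
import threading


class CheckpointedWorkflow:
    """Holds agent-managed state plus the last known-good snapshot."""

    def __init__(self, state: dict):
        self.state = state
        self._checkpoint = copy.deepcopy(state)
        self._halted = threading.Event()

    def checkpoint(self) -> None:
        """Record the current state as the last known-good snapshot."""
        self._checkpoint = copy.deepcopy(self.state)

    def apply(self, key: str, value) -> bool:
        """Apply an agent change unless the kill switch has been triggered."""
        if self._halted.is_set():
            return False
        self.state[key] = value
        return True

    def kill(self) -> None:
        """Pause the workflow and restore the last known-good state."""
        self._halted.set()
        self.state = copy.deepcopy(self._checkpoint)

    def on_anomaly(self, alert: str) -> None:
        """Hypothetical hook a monitoring channel could call with an alert."""
        self.kill()
```

Wiring `on_anomaly` to an alerting channel lets detection and rollback happen in one step, without waiting for a person to trigger the kill switch by hand.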