DeepMind unveils 'Magic Pointer,' a Gemini-powered cursor that understands intent

Serge Bulaev

Google DeepMind has unveiled 'Magic Pointer,' an experimental cursor powered by Gemini that may understand user intent. Early demos suggest it can let users edit images or find places on a map just by pointing and speaking, instead of typing prompts. The system appears to work by capturing what is near the cursor and sending it to Gemini for quick processing, which might help the computer react to what the user is focusing on. Magic Pointer is still being tested, with limited access now and possible wider rollout later in 2026. Some reviewers suggest this tool could make using computers faster, but there are still technical and privacy challenges to solve.

Google DeepMind has introduced Magic Pointer, an experimental Gemini-powered cursor designed to infer user intent from pointing and speaking. It augments the familiar mouse cursor with Gemini's language and vision models so that it can interpret on-screen context and streamline complex tasks without requiring users to type detailed prompts.

How the AI pointer works

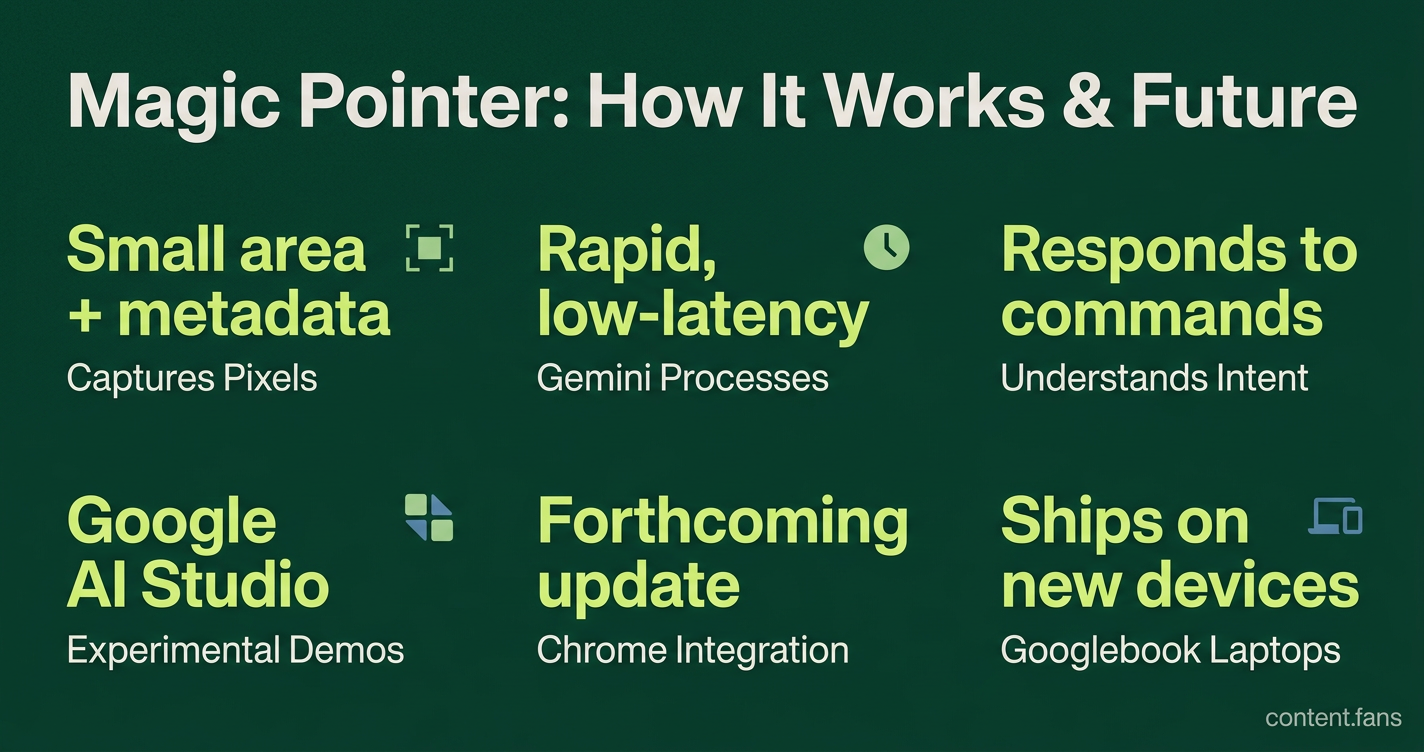

Magic Pointer appears to work by capturing a small region of pixels around the cursor, together with associated metadata. This contextual bundle is sent to the Gemini model for low-latency processing, which lets the system work out what the user is focusing on and respond quickly to spoken commands.
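To make the capture step concrete, here is a minimal sketch of cropping a window around the cursor and packaging it with metadata. It is an illustration only: the `ContextBundle` type, the function names, the `radius` parameter, and the `window_title` field are all assumptions, not DeepMind's published design, and the "screen" is a synthetic grid rather than a real screenshot.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Pixel = int  # grayscale stand-in; a real capture would carry RGB(A) data


@dataclass
class ContextBundle:
    """Hypothetical payload: pixels near the cursor plus light metadata."""
    cursor_x: int
    cursor_y: int
    origin_x: int  # left edge of the patch in screen coordinates
    origin_y: int  # top edge of the patch in screen coordinates
    patch: List[List[Pixel]]
    metadata: dict = field(default_factory=dict)


def crop_around_cursor(
    frame: List[List[Pixel]], x: int, y: int, radius: int
) -> Tuple[int, int, List[List[Pixel]]]:
    """Return (origin_x, origin_y, patch) for a square window clamped to the frame."""
    height = len(frame)
    width = len(frame[0]) if height else 0
    left, right = max(0, x - radius), min(width, x + radius + 1)
    top, bottom = max(0, y - radius), min(height, y + radius + 1)
    patch = [row[left:right] for row in frame[top:bottom]]
    return left, top, patch


def build_context_bundle(frame, x, y, radius=2, **metadata) -> ContextBundle:
    """Bundle the cropped patch with whatever metadata the caller supplies."""
    origin_x, origin_y, patch = crop_around_cursor(frame, x, y, radius)
    return ContextBundle(x, y, origin_x, origin_y, patch, dict(metadata))


# A 6x8 synthetic "screen" whose pixel value encodes its (row, column) position.
screen = [[r * 10 + c for c in range(8)] for r in range(6)]
bundle = build_context_bundle(screen, x=1, y=0, radius=2, window_title="Inbox")
```

Clamping the window to the frame keeps the crop valid when the cursor sits near a screen edge, and recording the patch origin lets a downstream model map its findings back to screen coordinates.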

This core mechanism enables Gemini to perform tasks directly based on visual cues. For example, it can answer questions about a highlighted paragraph or create a calendar event from a visible date. According to DeepMind engineers, the goal is to let users "show intent through location," significantly reducing the effort of composing prompts.

Availability and Rollout Timeline

Magic Pointer is being released in phases to manage expectations and gather user feedback. According to industry reports, the rollout includes three key stages:

- Experimental Demos: Accessible now in Google AI Studio for tasks like image editing and map searches by pointing and speaking.

- Chrome Integration: A forthcoming update will integrate the feature into the Chrome browser.

- Googlebook Laptops: The feature is expected to ship on new devices from major manufacturers.

The Future of UI: Intent-Driven Design

Industry analysts see Magic Pointer as a key component in the broader shift toward ambient, intent-driven user experiences. Instead of interacting with static chat boxes, AI is evolving into an invisible layer that anticipates user needs. This technology positions the cursor as a primary sensor for capturing user focus, feeding that context to deeper agentic systems that can orchestrate tasks across multiple applications.

Technical Challenges and Solutions

Despite Magic Pointer's promise, significant technical hurdles remain before widespread adoption. Key challenges include maintaining sub-second latency, preventing reasoning errors as context grows (a problem known as "context drowning"), and ensuring robust security and permission models for cross-application data access. Researchers are exploring solutions such as specialized routing and advanced decoding techniques to overcome these obstacles.

As these challenges are addressed, the familiar cursor is poised to become a frontline sensor in an increasingly intelligent and responsive computing environment.

What is Magic Pointer, and how does it differ from a regular cursor?

Magic Pointer is a Gemini-powered AI cursor that replaces "where am I pointing?" with "why am I pointing?". Instead of merely indicating screen coordinates, it infers user intent from the object under the cursor and then triggers context-aware actions such as turning an email date into a calendar event, converting a table into a chart, or identifying a restaurant in a paused video frame. In short, the pointer starts to carry part of the prompt for you, eliminating the need for cumbersome typing.

When and where can I try Magic Pointer today?

- Right now: experimental demos are live in Google AI Studio (try editing an image or locating a map feature just by pointing and speaking).

- Next: the feature is rolling out to Gemini in Chrome so you can highlight part of any webpage and ask Gemini about it with zero typing.

- Later: it will ship on Googlebook laptops from major manufacturers.

The phased rollout means you can experiment today and expect deeper system-level integration within months rather than years.

What concrete tasks does Magic Pointer actually perform?

Early examples from testing include:

- Event creation: point at a date in an email and instantly generate a calendar invite.

- Data visualization: select a raw table and receive a ready-to-use chart.

- Place search: hover over a restaurant shown in a paused video frame and get directions pulled up automatically.

In each case, the cursor encodes contextual pixels and semantics so Gemini can finish the job without the user composing a prompt.
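As a rough illustration of the event-creation example, the sketch below turns text found under the cursor into a draft calendar entry. It is a deliberately simple stand-in: a regular expression replaces the model, only dates written like "March 14, 2026" are recognized, month names are parsed with the English locale assumed, and the `draft_event_from_text` name and the returned dictionary shape are hypothetical.

```python
import re
from datetime import datetime
from typing import Optional

# Matches dates such as "March 14, 2026"; other formats are out of scope here.
DATE_PATTERN = re.compile(r"\b([A-Z][a-z]+ \d{1,2}, \d{4})\b")


def draft_event_from_text(text_under_cursor: str,
                          title: str = "Untitled event") -> Optional[dict]:
    """Return a draft all-day event if the text contains a parseable date."""
    match = DATE_PATTERN.search(text_under_cursor)
    if match is None:
        return None
    try:
        # %B parses full month names and depends on the active locale.
        day = datetime.strptime(match.group(1), "%B %d, %Y").date()
    except ValueError:
        return None  # looked like a date but was not one (e.g. a bad month name)
    return {"title": title, "date": day.isoformat(), "all_day": True}


event = draft_event_from_text("Let's meet on March 14, 2026 at noon.", title="Lunch")
```

A production system would presumably rely on the model to resolve relative phrases such as "next Friday" and to extract times and attendees, which a fixed pattern cannot do.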

How does this change the broader trend in AI interfaces?

Industry reports project that a significant portion of customer interactions will be AI-powered in the coming years, as interfaces move away from static UI toward "living" systems. Magic Pointer fits this shift by turning the decades-old mouse pointer into an ambient AI layer that compresses multi-step workflows into a single gesture. Designers now focus less on "how it looks" and more on "how it behaves," a change that reflects the trend toward invisible, context-aware assistance without overt prompts.

What technical hurdles still need to be solved?

For Magic Pointer to feel instant and reliable, Google must tackle several technical challenges:

- Low-latency inference: avoiding significant runtime degradation seen in some embedded AI systems after sustained use.

- Cross-app context parsing: breaking documents into parts (text, tables, images) and routing each to the best specialized model instead of forcing one large model to "read everything."

- Security and permissions: ensuring the cursor can safely read pixel data across apps without exposing sensitive information.
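The cross-app parsing idea in the list above, splitting content into parts and sending each to a specialized model, could be sketched as a small dispatch table. Everything here is hypothetical: the handler functions are trivial stand-ins for real models, and the part kinds and the fallback behavior are assumptions made for illustration.

```python
from typing import Callable, Dict, List, Tuple

Part = Tuple[str, str]  # (kind, content); kinds used here: "text", "table", "image"


def summarize_text(content: str) -> str:
    return f"text model saw {len(content.split())} words"


def summarize_table(content: str) -> str:
    rows = [line for line in content.splitlines() if line.strip()]
    return f"table model saw {len(rows)} rows"


def describe_image(content: str) -> str:
    return f"vision model saw image '{content}'"


def general_fallback(content: str) -> str:
    return "general model handled unrecognized part"


# Hypothetical registry: each part kind maps to a stand-in for a specialized model.
HANDLERS: Dict[str, Callable[[str], str]] = {
    "text": summarize_text,
    "table": summarize_table,
    "image": describe_image,
}


def route_parts(parts: List[Part]) -> List[str]:
    """Send each part to its specialized handler, falling back to a general one."""
    return [HANDLERS.get(kind, general_fallback)(content) for kind, content in parts]


document = [
    ("text", "Quarterly results are attached below"),
    ("table", "region,revenue\nEMEA,10\nAPAC,12"),
    ("image", "chart.png"),
    ("audio", "memo.wav"),
]
results = route_parts(document)
```

Keeping the routing in a plain lookup means new part kinds can be added without touching the dispatch logic, and unknown kinds degrade to a general handler instead of failing.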

Solutions on the horizon include agentic parsing pipelines and speculative decoding, which speeds up inference by having a smaller draft model propose several tokens ahead that the larger model then verifies in a single pass, keeping the ones it agrees with.
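Speculative decoding can be illustrated with a toy greedy variant in which a cheap draft model proposes several tokens and the larger target model checks them. This is a sketch under simplifying assumptions: both "models" are deterministic functions invented for the example, verification is done token by token instead of in one batched forward pass, and the rejection-sampling step used for sampled decoding is omitted.

```python
from typing import Callable, List

NextToken = Callable[[List[str]], str]  # maps a token prefix to the next token


def speculative_decode(target: NextToken, draft: NextToken, prompt: List[str],
                       max_new_tokens: int, k: int = 4) -> List[str]:
    """Greedy speculative decoding: the output matches target-only greedy decoding."""
    out = list(prompt)
    goal = len(prompt) + max_new_tokens
    while len(out) < goal:
        # 1. The draft model cheaply proposes up to k tokens.
        proposal: List[str] = []
        for _ in range(min(k, goal - len(out))):
            proposal.append(draft(out + proposal))
        # 2. The target checks each proposed position in order.
        for tok in proposal:
            expected = target(out)
            out.append(expected)
            if expected != tok:
                break  # first mismatch: keep the target's token, drop the rest
    return out[len(prompt):]


SENTENCE = "point at the date to create an event".split()


def target_model(prefix: List[str]) -> str:
    """Toy target: always continues the fixed sentence."""
    return SENTENCE[len(prefix) % len(SENTENCE)]


def draft_model(prefix: List[str]) -> str:
    """Toy draft: agrees with the target except that it says 'time' for 'date'."""
    tok = target_model(prefix)
    return "time" if tok == "date" else tok


result = speculative_decode(target_model, draft_model, ["point"], max_new_tokens=5, k=3)
```

Because every emitted token is the target's own choice, the draft model affects only speed, not the output; the speedup in real systems comes from the target scoring all drafted positions at once.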