Anthropic adopts unique governance to balance AI safety, fast shipping

Serge Bulaev

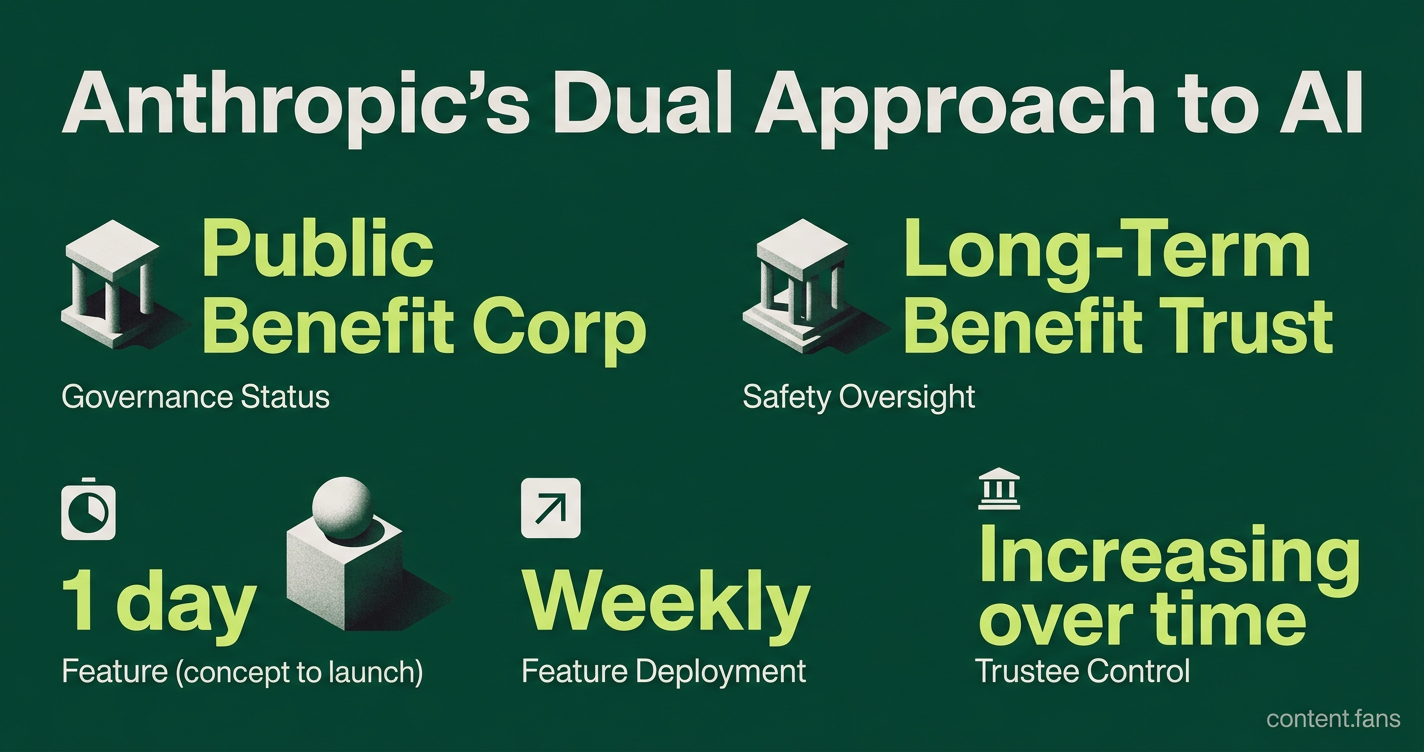

Anthropic appears to balance AI safety with fast product development through a unique governance approach and team structure. The company created a trust that may gradually gain control of its board, aiming to prioritize long-term benefits for humanity over profits. This trust, run by independent experts, is supposed to shield Anthropic from investor pressure, though it has not yet filled all of its board seats. The product team relies on quick feedback and strong internal tools, which reportedly allow it to release new features much faster than competitors. However, some observers note that the system's success might depend on the trust fully controlling the board before more advanced AI systems are released.

Anthropic's unique governance for balancing AI safety and fast shipping is a frequent talking point among AI policy analysts. The AI startup's approach enables it to deploy weekly features while prioritizing safety, a balance many rivals struggle with. This strategy stands on two pillars: a novel governance architecture to insulate its mission from profit-only incentives and a product organization built for maximum speed.

Governance Structure for Mission Durability

Anthropic balances AI safety and product speed through a two-part system. Its Public Benefit Corporation status and a unique Long-Term Benefit Trust insulate its mission from profit motives, while its product team uses agile methods like 'research previews' and powerful internal tools to accelerate development cycles.

As a Public Benefit Corporation, Anthropic's charter legally defines its purpose as existing "for the long-term benefit of humanity." To enforce this, the co-founders established the Long-Term Benefit Trust (LTBT) in 2023. According to industry reports, this trust holds non-economic Class T shares that will grant it increasing control of the board over time.

The LTBT's trustees, who cannot be employees or major investors, include experts in AI and national security. According to TIME magazine, this structure is designed to shield leadership from pressure from major investors such as Amazon and Google, neither of which has a board seat. However, critics point out that the mechanism is not yet fully proven: according to industry reports, the trust's board representation remains limited.

Operational Tactics for High-Speed Development

While the LTBT provides safety oversight, Anthropic's product team is engineered for speed. An interview with Head of Product Cat Wu, summarized on Summify, reveals that the company can take features from concept to launch in as little as one day. This velocity is driven by several key practices:

- "Research preview" labels that let engineers ship experimental features weekly and collect feedback quickly.

- Hiring engineers with strong product judgment, reducing layers between concept and code.

- Internal tools like Claude Code that automate repetitive tasks and free capacity for iteration.

According to industry reports, these methods have significantly shortened release cycles. External observers have likewise noted multiple major Claude model updates over the course of the year, which is consistent with this rapid cadence.

Mission Alignment as a Decision-Making Compass

Ultimately, both governance and operations are guided by the same principle: developing safe AI for humanity. This mission clarity allows product teams to resolve trade-offs decisively: if a feature undermines safety, it is halted. At the board level, the LTBT has the power to remove directors who disregard critical safety reviews on topics like bio-risk or national security.

This dual structure helps explain why Anthropic has received recognition for its safety practices, even with its accelerated product releases. Still, observers caution that the system's full effectiveness depends on the trust securing greater board influence before more advanced AI systems are developed.

How does Anthropic's Long-Term Benefit Trust (LTBT) actually protect its safety mission?

The LTBT is a purpose trust that holds special Class T shares. These shares carry no financial upside but, according to industry reports, provide board representation rights that are expected to increase over time. Because trustees are barred from being employees, executives, or major investors, they are positioned to push back on decisions that elevate near-term profit over catastrophic-risk considerations. Industry reports also indicate that the trust has already influenced board-level reviews of frontier-model launches, which would suggest the mechanism has some effect even in its early stages, although its board representation is still limited.

What operational tricks let Anthropic ship code daily without abandoning safety gates?

Anthropic reportedly shortened its release cycle by replacing product requirements documents (PRDs) with "research-preview" launches: every feature ships behind an experimental flag, gathers live metrics for a short period, then either graduates or is retired. Engineers, not product managers, own the spec, and hiring favors "product taste" over layers of oversight. Internal agent tools (Claude Code, Cowork) auto-generate tests, docs, and rollback scripts, letting small teams push production code faster than larger traditional teams while still running automated safety evals on each diff.
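The "ship behind a flag, measure, then graduate or retire" loop described above can be sketched in a few lines of Python. This is a minimal illustration only, not Anthropic's actual tooling: the `PreviewFeature` fields, the `review_preview` function, and every threshold are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    GRADUATE = "graduate"
    RETIRE = "retire"
    KEEP_PREVIEWING = "keep_previewing"


@dataclass
class PreviewFeature:
    """Hypothetical record for a feature shipped as a research preview."""
    name: str
    days_live: int
    active_users: int
    positive_feedback_rate: float  # fraction of feedback that is positive, 0..1
    safety_evals_passed: bool


def review_preview(feature, min_days=7, min_users=100, min_positive_rate=0.6):
    """Decide what happens to a preview feature (all thresholds are assumptions)."""
    # Safety acts as a hard gate: a failed eval retires the feature
    # regardless of how popular it is.
    if not feature.safety_evals_passed:
        return Decision.RETIRE
    # Not enough time or usage yet to judge: keep collecting feedback.
    if feature.days_live < min_days or feature.active_users < min_users:
        return Decision.KEEP_PREVIEWING
    # Enough data: graduate if feedback is good, otherwise retire.
    if feature.positive_feedback_rate >= min_positive_rate:
        return Decision.GRADUATE
    return Decision.RETIRE
```

Checking safety first mirrors the article's point that a feature which undermines safety is halted, whatever its usage numbers look like.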

How does the Public Benefit Corporation charter interact with the LTBT in practice?

The PBC charter gives directors legal cover to weigh public interest, but the LTBT turns that permission into a mandate. If management rushes a model to market without required risk assessments, the LTBT can remove directors or decline to re-elect them. Investors such as Amazon and Google hold no board seats, so the LTBT remains the only concentrated power that can compel safety spending even when it compresses margins.

Did the governance design survive major fundraising rounds?

Yes. According to industry reports, recent funding deals preserved LTBT voting rights and barred any investor from acquiring Class T shares. New capital entered as non-voting preferred equity, leaving mission control untouched. One condition: trustees added national-security expertise to the board slate, showing outside money accepted tighter safety oversight as part of the price of admission.

Where has speed-plus-safety delivered measurable market impact?

Anthropic has maintained a rapid release cadence, with multiple major Claude model updates throughout the year. In the 2025 AI Safety Index, it was the only company reported to have conducted human-participant bio-risk uplift trials, and it received the index's highest grade, a C+. Together, these points suggest that visible safety work can coexist with high feature velocity.