Anthropic unveils Managed Agents upgrades, partners with SpaceX Colossus

Serge Bulaev

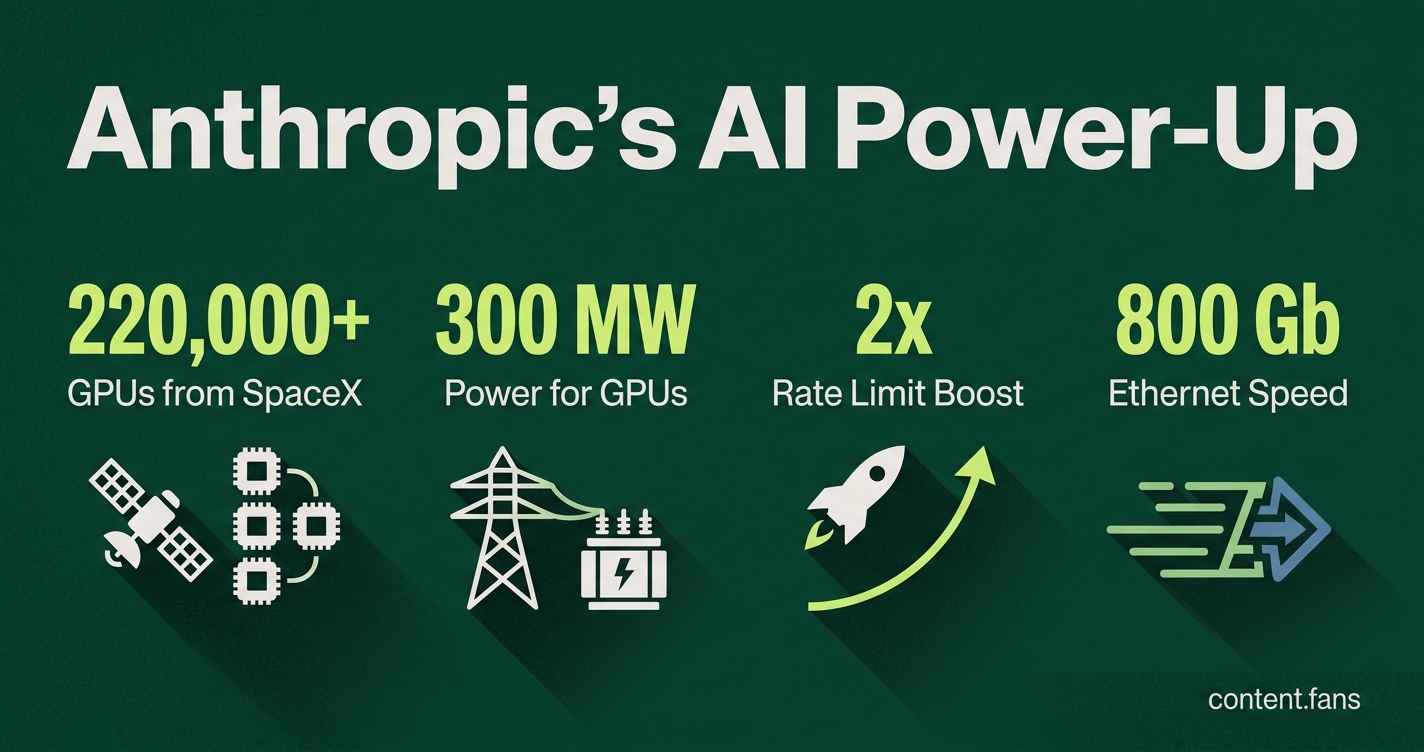

Anthropic announced new features for its Managed Agents and a partnership with SpaceX's Colossus supercomputer. These upgrades may help agents run all the time without needing people to watch over them. The Managed Agents now include tools for working in teams, learning from past sessions, and measuring progress. Anthropic also gained access to over 220,000 GPUs from SpaceX to handle more traffic. This suggests Anthropic wants to make it easier for businesses to use and manage AI agents at a large scale.

At its Code with Claude developer event, Anthropic announced major Managed Agents upgrades and a pivotal partnership with SpaceX's Colossus supercomputer. These updates deliver enterprise-grade support for persistent AI workflows, focusing on platform enhancements over new models. This strategic move pairs advanced orchestration software with dedicated compute, enabling AI agents to operate continuously without human supervision.

Three capabilities now live in Managed Agents

Anthropic's update introduces three core capabilities: Multi-Agent Orchestration for parallel sub-agent tasks, Dreaming for autonomous agent improvement by reviewing past sessions, and Outcomes, which allows agents to iterate on tasks until they meet predefined success criteria. These features enable more complex, self-sufficient agent workflows.

According to industry reports, three additions are now available to developers:

- Multi-Agent Orchestration: A primary agent can now deploy multiple specialist sub-agents to work in parallel. Each sub-agent operates with its own tools and context, while the runtime manages threading and a shared file system.

- Dreaming: This feature runs a scheduled background process for agents to review past sessions, refine their memory, and identify recurring errors, enabling continuous improvement between tasks.

- Outcomes: Developers can define specific success metrics, allowing an agent to iterate on a task until all criteria are fulfilled. This provides clear, measurable progress for complex, long-running objectives.

A live demonstration featured an incident-response workflow where a lead agent delegated log analysis and deployment history reviews to two sub-agents. After five minutes of parallel processing, the lead agent reconciled the findings, with all reasoning steps fully traceable in the Claude Console.
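The fan-out-and-reconcile pattern in that demo can be sketched in a few lines of Python. This is an illustrative sketch only, not Anthropic's API: `analyze_logs`, `review_deploys`, and `lead_agent` are hypothetical stand-ins, and an ordinary thread pool takes the place of the Managed Agents runtime.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical sub-agents: in a real system each would call a model
# with its own tools and context. Here they return canned findings.
def analyze_logs(incident_id: str) -> dict:
    return {"agent": "log-analysis", "finding": f"error spike linked to {incident_id}"}

def review_deploys(incident_id: str) -> dict:
    return {"agent": "deploy-history", "finding": f"rollout shortly before {incident_id}"}

def lead_agent(incident_id: str) -> dict:
    """Delegate to both sub-agents in parallel, then reconcile their findings."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        futures = [pool.submit(fn, incident_id) for fn in (analyze_logs, review_deploys)]
        results = [f.result() for f in futures]  # same order as submission
    # Keep each finding paired with its source so the reasoning stays traceable.
    trace = [(r["agent"], r["finding"]) for r in results]
    return {"incident": incident_id, "trace": trace}

report = lead_agent("INC-1")
for agent, finding in report["trace"]:
    print(f"{agent}: {finding}")
```

Recording which sub-agent produced each finding is what makes the reconciled answer auditable afterwards, which is the property the demo highlighted.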

Rate-limit lifts and pre-built routines

In addition to agent capabilities, Anthropic boosted platform performance by doubling the five-hour rate limits for Claude Code and eliminating peak-hour throttling for all paid plans. API allowances for Claude Opus have also reportedly increased. A new quality-of-life feature includes schedulable "Claude Code Routines," which function like cron jobs for tasks such as automated pull-request audits.

SpaceX compute agreement brings 220,000 GPUs

To support the increased workload from these new agentic features, Anthropic has secured exclusive access to the SpaceX Colossus 1 supercluster in Memphis. The facility provides over 220,000 NVIDIA H100, H200, and GB200 GPUs, supported by approximately 300 megawatts of power and Spectrum-X 800 Gb Ethernet, as reported by Gagadget (gagadget.com/en/708917-anthropic-locks-in-spacexs-colossus-supercluster-and-eyes-orbital-data-centers). The infrastructure features advanced cooling systems and power management for stability.

Anthropic executives confirmed the extra capacity has already enabled higher burst quotas for customers. The agreement covers the 300+ MW available at Colossus 1; SpaceX/xAI's separate long-term plan to expand to 1 million GPUs is not part of it, and the deal is only exploratory regarding orbital compute. Environmental groups have raised concerns, noting that the 35 on-site gas turbines could produce 1,200-2,000 tons of NOx emissions annually and that no carbon offset plan has been published.

Why scale matters for agents

The need for such massive scale is driven by the economics of AI agents. According to industry reports, continuous-inference agents can significantly increase token consumption compared to standard chatbots, driving monthly costs substantially higher. Industry analysts note that costs from "near-constant inference" are outpacing hardware cost reductions. With accelerated servers comprising a significant portion of the growing AI hardware market, dedicated clusters like Colossus become critical. They provide predictable cost models, acting as a financial control against the volatility of on-demand cloud capacity.
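A back-of-the-envelope calculation shows why near-constant inference changes the bill. Every number below is a hypothetical input chosen for easy arithmetic, not a figure from Anthropic or from the reports cited here; only the shape of the comparison matters.

```python
def monthly_cost(calls_per_day: int, tokens_per_call: int,
                 price_per_million_tokens: float, days: int = 30) -> float:
    """Monthly spend for a workload billed per token."""
    total_tokens = calls_per_day * tokens_per_call * days
    return total_tokens * price_per_million_tokens / 1_000_000

# Hypothetical chatbot: a few hundred short exchanges per day.
chatbot = monthly_cost(calls_per_day=200, tokens_per_call=1_000,
                       price_per_million_tokens=10.0)

# Hypothetical always-on agent: one larger inference call every minute.
agent = monthly_cost(calls_per_day=24 * 60, tokens_per_call=4_000,
                     price_per_million_tokens=10.0)

print(f"chatbot: ${chatbot:,.2f}/month")
print(f"agent:   ${agent:,.2f}/month")
print(f"ratio:   {agent / chatbot:.1f}x")
```

Under these assumed inputs the gap comes almost entirely from call frequency, which is why a workload that never idles is the case where reserved capacity starts to look attractive compared with on-demand pricing.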

This positions Claude Managed Agents as a turnkey solution that simplifies both software orchestration and hardware logistics. By abstracting these complexities, Anthropic offers enterprises a unified console to deploy, monitor, and optimize autonomous AI workflows at scale.

What exactly are the three new Managed Agent capabilities Anthropic just shipped?

- Multi-Agent Orchestration - A coordinator agent can spawn specialist sub-agents that run in parallel on a shared filesystem, each with its own model, prompt and tools.

- Dreaming - A nightly job that reviews past sessions, surfaces recurring mistakes and auto-updates memory so the agent improves between runs.

- Outcomes - You can now write explicit success rubrics; the agent keeps iterating until every criterion is met, turning long-horizon tasks into self-validating loops.

These features are now available in the Claude Console following the Code with Claude developer event.
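The Outcomes idea, iterating until every rubric criterion passes, can be sketched as a plain loop. This is a minimal illustration under stated assumptions, not the Managed Agents interface: `run_until_outcomes`, `toy_attempt`, and the rubric entries are hypothetical names, and a round budget is added so the loop always terminates.

```python
from typing import Callable

Criterion = Callable[[str], bool]

def run_until_outcomes(attempt: Callable[[str, list[str]], str],
                       rubric: dict[str, Criterion],
                       draft: str = "",
                       max_rounds: int = 5) -> tuple[str, list[str]]:
    """Revise the draft until every criterion passes or the budget runs out.

    Returns the final draft and the names of any criteria still unmet.
    """
    for _ in range(max_rounds):
        failed = [name for name, check in rubric.items() if not check(draft)]
        if not failed:
            return draft, []
        draft = attempt(draft, failed)  # the agent sees which criteria failed
    failed = [name for name, check in rubric.items() if not check(draft)]
    return draft, failed

# Hypothetical rubric for an incident write-up.
rubric = {
    "mentions root cause": lambda text: "root cause" in text,
    "has rollback step": lambda text: "rollback" in text,
}

# Toy "agent": fixes the first failed criterion each round.
def toy_attempt(draft: str, failed: list[str]) -> str:
    fixes = {
        "mentions root cause": " root cause: bad config.",
        "has rollback step": " rollback: revert deploy.",
    }
    return draft + fixes[failed[0]]

result, unmet = run_until_outcomes(toy_attempt, rubric)
print(result.strip(), "| unmet:", unmet)
```

Feeding the list of failed criteria back into each attempt is what turns the rubric into a self-validating loop rather than a one-off check at the end.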

How does the SpaceX Colossus deal change what I can do with Claude?

Anthropic has exclusive use of Colossus 1, a 220,000+ GPU, 300 MW Memphis supercluster, reportedly for the next several years.

Immediate impact you will notice:

- Claude Code five-hour rate limits doubled on every paid plan

- Peak-hour throttling removed for Pro/Max users

- Opus API limits raised, letting you run fatter agent fleets without queuing

Behind the scenes, the extra silicon also funds trillion-parameter model training and 24/7 agent inference that would otherwise have cost customers significant amounts in metered cloud usage.

Why pair software orchestration with a physical super-cluster?

Industry data shows agentic workloads multiply token consumption significantly compared with one-off generative calls, and monthly inference bills for large enterprises are growing substantially.

By owning both layers Anthropic can:

- Guarantee memory-hot GPUs for stateful agents (cuts latency)

- Schedule sub-agent fleets at high cluster throughput (reduces waste)

- Offer predictable flat-rate pricing instead of pay-per-token volatility

In short, software-level lifecycle controls plus raw metal keep 24/7 production agents both fast and affordable.

Are there sustainability or regulatory concerns with Colossus?

The facility uses 35 on-site gas turbines to supplement the 250 MW grid draw; third-party estimates put NOx emissions at 1,200-2,000 tons per year.

Anthropic has not yet published carbon-offset plans, so enterprise buyers with ESG mandates should factor this into supplier-risk reviews.

The deal reportedly gives Anthropic more than 300 MW of capacity within the month; no financial terms or contract duration have been verified.

What still limits agent scale even with Colossus in the mix?

- Memory bandwidth per GPU remains the choke point when thousands of agents maintain hot state.

- Diminishing returns - research shows accuracy gains flatten after significant GPU allocation per workflow, so bigger clusters help throughput more than intelligence.

- Idle capacity - without autoschedulers, firms still leave substantial GPU resources dormant while teams queue jobs.

Bottom line: Colossus fixes the "can I get enough GPUs?" problem, but you still need solid FinOps and workflow design to avoid the "I have GPUs but they're idle" trap.