Anthropic Hits $30B Run Rate, Pentagon Bans Its AI Models

Serge Bulaev

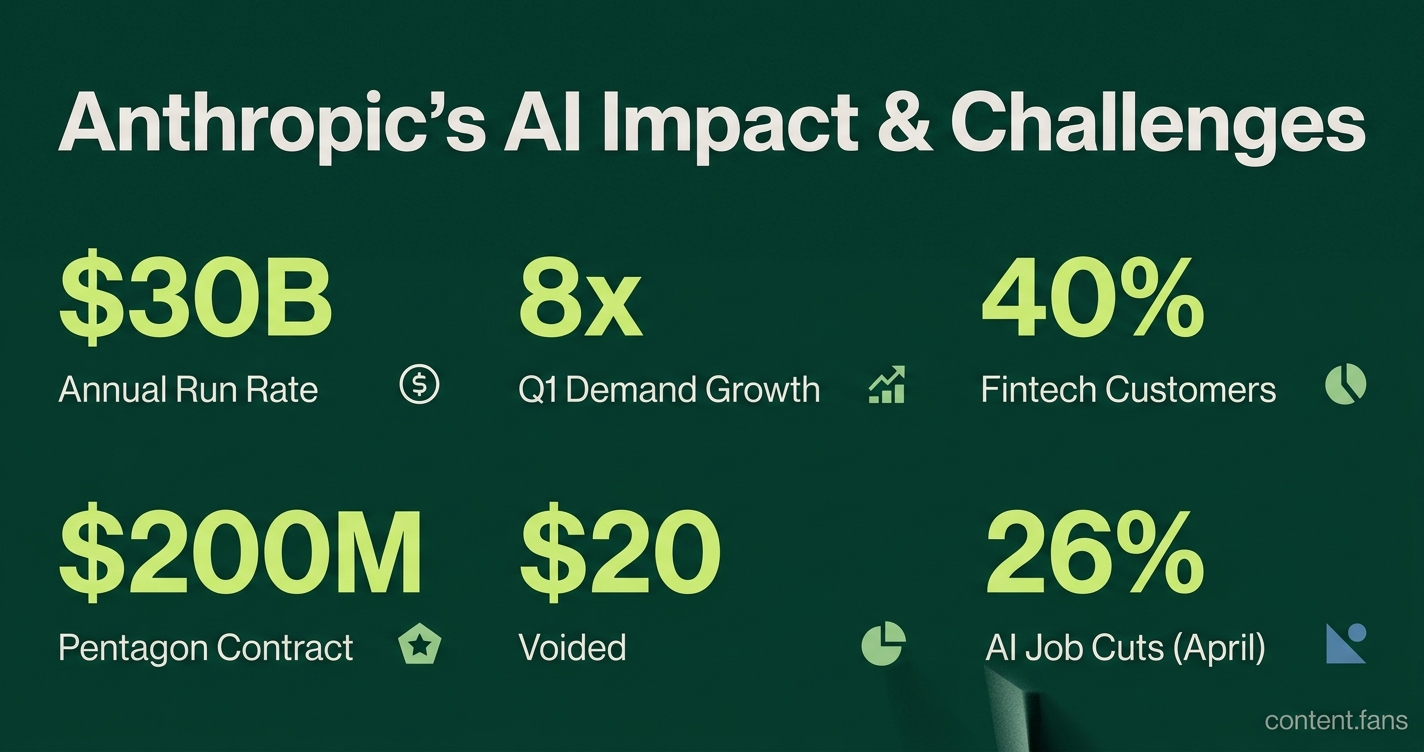

Anthropic's revenue grew very quickly in early 2026, reaching a possible $30 billion run rate, mainly because of strong business demand for its coding tools and finance-focused agents. Many financial companies seem to be using Anthropic's AI for jobs that need careful records and fast responses. However, the Pentagon did not choose Anthropic for new contracts, possibly because the company refused to allow its AI for certain military uses, which the government saw as a risk. Layoffs linked to AI are rising, with many jobs in tech and routine office work being affected. Experts suggest that while many jobs may change because of AI, only a smaller portion might be removed completely.

Recent developments regarding Anthropic's $30B run rate and the Pentagon's ban of its AI models reveal the powerful commercial and policy forces shaping the AI landscape. For executives and engineers, these events provide critical data on revenue drivers, regulatory risks, and emerging labor market trends.

Unpacking Anthropic's Enterprise Growth

Anthropic's explosive revenue growth stems from intense enterprise demand for its Claude Code AI programming tool and its specialized workflow agents for the financial sector. These products cater to high-value business needs, from accelerating software development to ensuring regulatory compliance, driving rapid commercial adoption.

CEO Dario Amodei announced at the Code with Claude conference that Q1 demand outpaced forecasts eightfold, elevating the company's annualized revenue run rate to approximately $30 billion (LinkedIn). This growth is attributed to Claude Code and specialized workflow agents for finance and compliance. Fintech adoption is particularly strong, with financial services firms comprising an estimated 40% of Anthropic's top 50 customers. These compute-intensive workloads are driving large capacity purchases from cloud providers.

Pentagon Exclusion Highlights AI Safety Stance

On May 1, the Department of Defense excluded Anthropic from new classified-network agreements with AI vendors. The decision followed Anthropic's refusal to allow its models to be used for fully autonomous weapons or mass domestic surveillance, stances the company considers non-negotiable. The administration subsequently designated Anthropic a "supply chain risk," which voided a $200 million contract and barred its models from military networks (CNN).

While legal challenges to overturn the designation are underway, competitors like OpenAI have accepted the Pentagon's "all lawful purposes" clause. This divergence highlights a growing split between AI firms focused on integrated safety guardrails and government agencies that require doctrinal flexibility.

Automation's Impact on Tech Employment

Beyond government contracts, AI-related job cuts are increasing. According to Challenger, Gray and Christmas, AI was a factor in 21,490 job eliminations in April, representing 26% of all U.S. cuts that month. Globally, nearly half of the 70,000 to 80,000 tech roles lost in Q1 were attributed to automation pressures.
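As a quick sanity check on the figures above, the two quoted numbers (21,490 AI-attributed cuts and a 26% share) imply a total for all U.S. job cuts in April. The sketch below only rearranges those two numbers; the variable names are illustrative, and the half-point rounding band on the 26% figure is an assumption rather than anything the tracker states.

```python
# Figures as quoted in the article; not independently verified.
AI_ATTRIBUTED_CUTS = 21_490   # April job eliminations where AI was a factor
AI_SHARE = 0.26               # quoted share of all U.S. cuts that month

# share = ai_cuts / total_cuts, so total_cuts = ai_cuts / share
implied_total = AI_ATTRIBUTED_CUTS / AI_SHARE

# Assumption: 26% is rounded to the nearest whole percent, so the true
# share lies somewhere between 25.5% and 26.5%.
low = AI_ATTRIBUTED_CUTS / 0.265
high = AI_ATTRIBUTED_CUTS / 0.255

print(f"Implied total U.S. cuts in April: about {implied_total:,.0f}")
print(f"Plausible range given rounding: {low:,.0f} to {high:,.0f}")
```

The implied total is only as reliable as the rounded percentage, which is why the range is worth reporting alongside the point estimate.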

A snapshot of recent labor market data:

- Tech sector job cuts reached 33,361 in April, the largest of any sector.

- Microsoft reduced its workforce by 15,000 over the last year while increasing AI investments.

- Employee anxiety about job security rose from 28% to 40% year-over-year.

Boston Consulting Group projects that while 10-15% of jobs may be eliminated, 50-55% will be reshaped by AI. Roles involving routine data handling are at high risk, whereas work requiring complex judgment is more likely to be augmented, not replaced.

Why Developers Care

For developers and tech leaders, these trends offer clear takeaways. Anthropic's success pinpoints enterprise demand for coding assistants and specialized agents. The Pentagon's decision shows how safety policies can directly impact market access, while layoff data confirms that skills in AI integration and oversight are becoming paramount as repetitive tasks are automated.

Why did Anthropic's revenue run-rate leap to $30 billion in early 2026?

The jump from $9 billion ARR at the end of 2025 to $30 billion by April 2026 was powered by an unexpected 80-fold surge in enterprise compute demand, far above the 10-fold increase Anthropic had forecast.

- Claude Code - the company's AI-assisted programming tool - was the single biggest driver, with CEO Dario Amodei saying "the majority of code at Anthropic itself is now written by Claude Code."

- Workflow agents sold to banks and other large institutions account for the rest; 40% of Anthropic's top 50 customers are financial-services firms, attracted to high-margin, compliance-friendly automation.

- Result: more than 1,000 companies now spend over $1 million a year on Claude services, double the February 2026 count, pushing monthly revenue per active user to $16.20, roughly seven times OpenAI's figure.
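The "80-fold", "10-fold" and "eightfold" figures describe the same gap from different angles, which a few lines of arithmetic make explicit. This is a minimal sketch using only the numbers quoted in this article; the function and constant names are hypothetical.

```python
# Figures as quoted in the article; treated as given, not verified.
FORECAST_GROWTH = 10    # the 10-fold increase Anthropic is said to have forecast
ACTUAL_GROWTH = 80      # the reported 80-fold surge in enterprise compute demand
ARR_END_2025 = 9e9      # $9 billion ARR at the end of 2025
ARR_APRIL_2026 = 30e9   # $30 billion run rate by April 2026


def forecast_gap(actual: float, forecast: float) -> float:
    """Return how many times actual growth exceeded forecast growth."""
    if forecast <= 0:
        raise ValueError("forecast must be positive")
    return actual / forecast


gap = forecast_gap(ACTUAL_GROWTH, FORECAST_GROWTH)
revenue_multiple = ARR_APRIL_2026 / ARR_END_2025

print(f"Demand exceeded forecast by {gap:.0f}x")
print(f"Run-rate multiple from the two ARR figures: {revenue_multiple:.2f}x")
```

Note that the compute-demand multiple and the revenue multiple measure different things, so they are not expected to match.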

Why has the Pentagon banned Anthropic models from future AI contracts?

The Department of Defense designated Anthropic a "supply-chain risk" in February 2026 and cancelled a $200 million classified-network deal after the company refused to drop two contractual red lines:

1. No use in fully autonomous weapons (a human must remain in the loop for lethal decisions).

2. No mass domestic surveillance of U.S. citizens.

The DoD asked for "all lawful operational use" language. Anthropic held firm, negotiations collapsed, and an executive order followed, barring any contractor from using Claude in defense work. Competitors including OpenAI, Google and Microsoft signed the new framework within hours.

How is AI-related automation affecting tech employment in 2026?

AI was cited in 26% of all U.S. job cuts in April 2026 (about 21,500 positions) and in roughly half of the 78,000 global tech layoffs recorded in Q1 2026, according to industry trackers.

- Coding, QA, IT support and back-office data handling are the tasks most frequently eliminated or consolidated.

- Financial analysts are next in line: routine modelling, earnings summaries and regulatory filings are increasingly handled by agent tools, but roles requiring judgment, client management or strategic advice are being reshaped rather than removed.

- Long-term projection: 10-15% of current jobs could disappear, while 50-55% will be AI-amplified, per BCG's 2026 workforce outlook.

Which enterprise AI products are pulling ahead of OpenAI in revenue share?

Counterpoint Research puts Anthropic at 31.4% of global LLM revenue in Q1 2026, nudging past OpenAI (29%) for the first time.

Key differentiators:

- Claude Code - deep repository context, multi-file editing and terminal access make it popular with engineering teams inside Fortune 500 firms.

- Financial and compliance agents - pre-built modules that pass SOC-2 and FedRAMP audits, letting banks plug Claude into loan-approval, risk-reporting and trading-floor workflows without extra certification cycles.

- Average revenue per MAU of $16.20 versus OpenAI's $2.20 shows Anthropic's narrower but higher-paying customer base.
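The comparison above rests on two simple ratios, sketched here from the quoted figures only. The constant names are illustrative, and nothing here is drawn from Counterpoint's underlying data.

```python
# Figures as quoted in the article; not independently verified.
ANTHROPIC_ARPU = 16.20   # monthly revenue per active user, USD
OPENAI_ARPU = 2.20       # monthly revenue per active user, USD
ANTHROPIC_SHARE = 31.4   # percent of global LLM revenue, Q1 2026
OPENAI_SHARE = 29.0      # percent of global LLM revenue, Q1 2026

arpu_ratio = ANTHROPIC_ARPU / OPENAI_ARPU
share_gap_points = round(ANTHROPIC_SHARE - OPENAI_SHARE, 1)

print(f"ARPU ratio: {arpu_ratio:.2f}x")
print(f"Revenue-share lead: {share_gap_points} percentage points")
```

The ARPU ratio comes out slightly above seven, which matches the "seven times" shorthand used earlier, while the revenue-share lead is narrow by comparison.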

What capacity steps is Anthropic taking to keep up with 80-fold compute demand?

To close an eightfold gap between forecast and actual demand, Anthropic announced or finalized deals in May 2026 for more than 10 gigawatts of dedicated AI capacity:

- Full output of xAI's "Colossus 1" super-cluster in Memphis (≈ 1 GW) booked via SpaceX.

- Multi-billion-dollar expansions with AWS and Google Cloud, each pledging additional gigawatts and custom Trainium/TPU slices.

- Internal code efficiency gains - 30% drop in tokens per generated unit test - partly offset hardware shortages while new clusters come online.